SVG drawing, fill-rule

Andrew Varga

Jan 27, 2022, 11:36:50 AM

to WebGL Dev List

Hi all,

I'm wondering about how browsers are drawing SVGs, in particular with the fill-rule attribute. Are there any resources that point to an overview of the implementation or the source code if it's open?

Or maybe any WebGL applications that implement this? three.js has an SVGLoader but fill-rule is not supported in it.

Thanks,

Andrew

Ken Russell

Jan 28, 2022, 4:48:02 PM

to WebGL Dev List

Hi Andrew,

Each browser's implementation of SVG rendering is different, and most have a large number of layers, bottoming out in a complex rendering library like Skia or Cairo. Just finding all the software layers in a browser like Chromium and pointing them out would take a lot of time. http://cs.chromium.org/ might be a useful tool.

If you have a more concrete question, that would help.

Also, note that if you're trying to get an SVG image into a WebGL texture, a recommended path is to first draw the SVG image into a 2D canvas, and then upload that 2D canvas into a WebGL texture. That should exercise better-tested code paths in all browsers than trying to upload the SVG image to WebGL directly via e.g. texImage2D.

-Ken

--

You received this message because you are subscribed to the Google Groups "WebGL Dev List" group.

To unsubscribe from this group and stop receiving emails from it, send an email to webgl-dev-lis...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/webgl-dev-list/49b45e13-d461-433c-9938-d3a4ab4aca7bn%40googlegroups.com.

Andrew Varga

Jan 31, 2022, 12:17:31 PM

to WebGL Dev List

Hi Ken,

Thank you, that is useful. I didn't know about this best practice for SVG -> WebGL texture uploads.

What I'm searching for is the high-level approach to how an SVG is rendered in real time, especially the <path> element if it's animated.

As soon as the path data is animated, the SVG would need to be re-uploaded into a texture every frame, which might not be feasible performance-wise, since there can be many SVGs.

Or if the SVG itself is animated then instead of uploading the entire SVG content into a texture in full size and rendering that texture on a quad with appropriate transforms, it would be better to render only the visible part depending on the camera view to keep it sharp instead of pixelated (so in screen space) and also avoid rendering parts which may be cut off the screen. In this case the SVG would need to be re-uploaded every frame too, instead of just animating the quad with the svg texture.

If I ignore self-intersecting paths and compound paths (i.e. multiple subpaths) with various fill-rule settings, then I can triangulate the shape and simply draw the triangles. Of course I may have to do a finer level of triangulation depending on the screen space size of the SVG, but at least the drawing is fast if the SVG/camera is only being translated during animation.
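One way to picture the "finer level of triangulation depending on the screen space size" is to flatten each curve with a segment count derived from its on-screen scale. The following is only a sketch under assumptions not stated in the thread: it handles a single quadratic Bézier, `scale` is a hypothetical path-units-to-pixels factor, and `tolerancePx` is a hypothetical maximum allowed deviation in pixels. The bound used is the standard chord error for uniform subdivision, |p0 - 2·p1 + p2| / (4·n²).

```typescript
type Vec2 = { x: number; y: number };

// Number of uniform segments needed so the flattened polyline stays within
// `tolerancePx` pixels of the true quadratic curve at the given scale.
function quadraticSegmentCount(
  p0: Vec2, p1: Vec2, p2: Vec2,
  scale: number,        // hypothetical: path units -> screen pixels
  tolerancePx: number   // hypothetical: max allowed deviation in pixels
): number {
  // Half of the (constant) second derivative of the curve, in pixels.
  const ddx = (p0.x - 2 * p1.x + p2.x) * scale;
  const ddy = (p0.y - 2 * p1.y + p2.y) * scale;
  const dd = Math.hypot(ddx, ddy);
  // Chord error for n uniform segments is at most dd / (4 * n^2).
  return Math.max(1, Math.ceil(Math.sqrt(dd / (4 * tolerancePx))));
}

// Flatten the curve into points (in path units) ready for triangulation.
function flattenQuadratic(
  p0: Vec2, p1: Vec2, p2: Vec2,
  scale: number,
  tolerancePx: number
): Vec2[] {
  const n = quadraticSegmentCount(p0, p1, p2, scale, tolerancePx);
  const points: Vec2[] = [];
  for (let i = 0; i <= n; i++) {
    const t = i / n;
    const mt = 1 - t;
    points.push({
      x: mt * mt * p0.x + 2 * mt * t * p1.x + t * t * p2.x,
      y: mt * mt * p0.y + 2 * mt * t * p1.y + t * t * p2.y,
    });
  }
  return points;
}
```

With this kind of bound the segment count grows with the square root of the zoom factor, so re-flattening is only needed when the scale changes, not when the shape is merely translated.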

However this doesn't work when compound paths/fill-rule needs to be supported, and that's why I was wondering what browsers do. Do they create triangles, or do they implement fill-rule in the fragment shader? Do they convert the paths into a hierarchy of boolean operations?

In the SVG spec fill-rule is defined by shooting rays to infinity and counting intersections when determining whether a point is inside the shape or not, and I wonder if that's how it's actually implemented or if there is a better/faster way?
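As a reference for what the spec's ray definition computes, here is a minimal CPU-side sketch. It assumes each subpath has already been flattened to a closed polygon, and the names `windingNumber` and `isInside` are made up for this illustration. It is not a claim about how any browser implements fill-rule, only a direct transcription of the definition.

```typescript
type Point = { x: number; y: number };
type FillRule = "nonzero" | "evenodd";

// Signed count of crossings between a horizontal ray from `p` toward +x
// and every edge of every subpath. Edges going up in y count +1, edges
// going down count -1.
function windingNumber(p: Point, subpaths: Point[][]): number {
  let winding = 0;
  for (const path of subpaths) {
    for (let i = 0; i < path.length; i++) {
      const a = path[i];
      const b = path[(i + 1) % path.length]; // subpaths are treated as closed
      // Half-open test so a vertex lying on the ray is counted only once;
      // horizontal edges never satisfy it.
      if ((a.y <= p.y) !== (b.y <= p.y)) {
        const t = (p.y - a.y) / (b.y - a.y);
        const xCross = a.x + t * (b.x - a.x);
        if (xCross > p.x) {
          winding += b.y > a.y ? 1 : -1;
        }
      }
    }
  }
  return winding;
}

function isInside(p: Point, subpaths: Point[][], rule: FillRule): boolean {
  const w = windingNumber(p, subpaths);
  // The parity of the signed count equals the parity of the raw crossing
  // count, so one pass serves both rules.
  return rule === "nonzero" ? w !== 0 : w % 2 !== 0;
}
```

For two nested squares drawn in the same direction, a point in the inner square has winding number 2, so it is filled under `nonzero` but is a hole under `evenodd`. Reversing the inner square makes it a hole under both rules.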

I found an open source library "paper.js" which handles fill-rule among other things, but its renderer backend is canvas 2d, so it seems to fall back to that for the actual drawing:

https://github.com/paperjs/paper.js/blob/a11d39630039356e95942bf34e6ecc130424026c/src/item/Shape.js#L266

There is also Figma which does support it internally I think and does use WebGL but it's not open (also the Spector.js inspection doesn't work with Figma for some reason, maybe due to wasm).

Thanks,

Andrew

Thomas Welter

Jan 31, 2022, 12:35:45 PM

to WebGL Dev List

Hi Andrew,

You can use a triangulation library that supports fill rules.

The expectation viewer shows you winding rule settings. The resulting triangles can be used with webgl.

Thomas

On Friday, January 28, 2022 at 22:48:02 UTC+1, Kenneth Russell wrote:

Ken Russell

Jan 31, 2022, 2:14:37 PM

to WebGL Dev List

Hi Andrew,

If you're interested in the lowest-level algorithms used for GPU accelerated path rasterization, then on the Chromium side take a look at Skia: https://skia.org/ . You could do some investigation into the GPU backend, Ganesh, and see what algorithms are currently being used for path rendering. Skia implements all of Chromium's web page rendering, including the 2D canvas rendering context, all elements' rasterization, and the compositing phase after all rasterization.

It's even possible to compile Skia to WebAssembly, and run on top of WebGL inside a Canvas element! See the CanvasKit project:

If you build it yourself you could add logging to see which code paths are taken for animated path rendering.

-Ken

Andrew Varga

Jan 31, 2022, 6:18:37 PM

to WebGL Dev List

Hi Ken, Thomas,

thank you both, these are really useful links, I'm digging into them. CanvasKit definitely looks very interesting too, looks like it's already being used in production by some web apps (with Flutter) which is a good sign.

Thank you,

Andrew

Joe Eagar

Mar 18, 2022, 3:08:01 PM

to WebGL Dev List

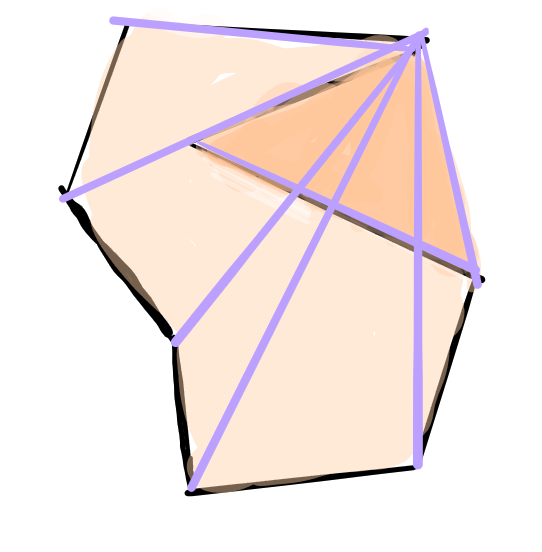

You can use the stencil buffer for this. Pick a point in the polygon then fan out triangles to all the other points.

Draw the triangles into the stencil buffer in increment mode. Pixels outside the polygon end up with an even stencil count, and pixels inside with an odd count.

The nice thing about this technique is you don't have to tessellate the polygon.

I've made a somewhat crappy illustration. The dark colored triangle has drawn on top of other triangles; thus the stencil value is even, which means it's outside the (concave) polygon.
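The parity argument can be checked on the CPU before touching any GPU state. The sketch below is not a WebGL implementation: it emulates what the stencil buffer would hold at a single sample point by counting how many fan triangles cover it. The function names and the example polygon are made up for this illustration.

```typescript
type Point = { x: number; y: number };

// z-component of the cross product (a - o) x (b - o).
function cross(o: Point, a: Point, b: Point): number {
  return (a.x - o.x) * (b.y - o.y) - (a.y - o.y) * (b.x - o.x);
}

// True if p lies in triangle abc (either winding). Boundary points count
// as inside here; a GPU rasterizer instead assigns shared edges to exactly
// one triangle, so avoid sampling exactly on an edge in this emulation.
function inTriangle(p: Point, a: Point, b: Point, c: Point): boolean {
  const d1 = cross(a, b, p);
  const d2 = cross(b, c, p);
  const d3 = cross(c, a, p);
  const hasNeg = d1 < 0 || d2 < 0 || d3 < 0;
  const hasPos = d1 > 0 || d2 > 0 || d3 > 0;
  return !(hasNeg && hasPos);
}

// Emulates the stencil count at p after drawing the fan
// (v0, v1, v2), (v0, v2, v3), ... in increment mode.
function fanCoverage(p: Point, polygon: Point[]): number {
  let count = 0;
  for (let i = 1; i + 1 < polygon.length; i++) {
    if (inTriangle(p, polygon[0], polygon[i], polygon[i + 1])) count++;
  }
  return count;
}

// Odd coverage means the point is inside under the even-odd rule.
function insideByFanParity(p: Point, polygon: Point[]): boolean {
  return fanCoverage(p, polygon) % 2 === 1;
}

// A square with a V-shaped notch cut into its top edge (concave).
const notched: Point[] = [
  { x: 0, y: 0 }, { x: 4, y: 0 }, { x: 4, y: 4 }, { x: 2, y: 1 }, { x: 0, y: 4 },
];
```

For the notched square, a point in the notch such as (2, 1.6) is covered by two fan triangles and is therefore classified as outside, while (1, 0.25) is covered once and is inside. On the GPU, one common way to get the same parity is to draw the fan with color writes disabled and `gl.stencilOp(gl.KEEP, gl.KEEP, gl.INVERT)`, then draw a covering quad with `gl.stencilFunc(gl.NOTEQUAL, 0, 1)`; treat that as an assumption about a typical setup rather than the exact configuration meant above.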

Andrew Varga

Oct 12, 2022, 8:57:09 AM

to webgl-d...@googlegroups.com

Hi Joe,

thank you and sorry for not catching your reply sooner, I'm only getting back to this problem now. This solution with the stencil buffer does look intriguing!

The downside I see is that I have to do this additional stencil buffer pass before actually rendering the polygon, and during rendering I have to read the stencil buffer which may be slower?

Also I'm wondering about the border of these triangles: since the stencil value is either even or odd, there would be hard pixelation at the border of these triangles unless I do something about it.

Not having to triangulate is nice, but if I have a polygon that has no curves, then I only have to triangulate the path data once (otherwise I'd have to do it if its screen space size changes to make sure curves remain smooth) so it's not a big problem.

Nevertheless I'll try it out and see what it looks like.

Thank you,

Andrew
