Log Rotation / Archival

swapnils

F Tux

- Each Wazuh Server node is estimated to handle about 3000 Wazuh Agents. If your endpoint count is below that and you don't need High Availability, you can probably make do with just one node.

- The Wazuh Indexer Cluster needs to have an odd number of nodes. So, once again, 1 node should be fine for smaller environments, and as soon as you need HA or your data volume outgrows a single-node install, you must jump to 3 nodes.

- The KB/s figures vary wildly between endpoint types, but you can estimate the maximum network throughput per agent as follows:

  - The default Events Per Second (EPS) cap is 500 EPS

  - We estimate the average event to be about 1 KB in size

  - This information gets compressed to about 1/10th of its original size

Taking all this into account, your maximum throughput per agent should be about 50 KB/s (500 EPS × 1 KB ÷ 10) if you don't modify the EPS limit.
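The arithmetic behind that 50 KB/s figure can be written as a small Python sketch. The constants are the estimates quoted above, not measured values, and the helper names are made up for illustration. The fleet-wide figure is only an upper bound that assumes every agent sits at the EPS cap at the same time, which is rarely realistic.

```python
# Worst-case per-agent throughput, using the estimates quoted above.
DEFAULT_EPS_CAP = 500        # default events-per-second cap per agent
AVG_EVENT_SIZE_KB = 1.0      # estimated average event size, in KB
COMPRESSION_RATIO = 1 / 10   # compressed size relative to the original


def max_agent_throughput_kbps(eps: float = DEFAULT_EPS_CAP,
                              event_kb: float = AVG_EVENT_SIZE_KB,
                              ratio: float = COMPRESSION_RATIO) -> float:
    """KB/s one agent sends when it runs at the EPS cap."""
    return eps * event_kb * ratio


def fleet_upper_bound_kbps(agent_count: int, **kwargs: float) -> float:
    """Upper bound if every agent hits the cap at once (rarely realistic)."""
    return agent_count * max_agent_throughput_kbps(**kwargs)


if __name__ == "__main__":
    print(f"per agent: {max_agent_throughput_kbps():.0f} KB/s")
    print(f"3000 agents, worst case: {fleet_upper_bound_kbps(3000):.0f} KB/s")
```

Raising the agent's EPS limit scales the result linearly; for example, `max_agent_throughput_kbps(eps=1000)` gives about 100 KB/s.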

- To keep only one week of hot storage, you need to set up an Index Management Policy that deletes indices older than 7 days.

- A recommended practice for cold storage (the data you want to rotate to AWS) is to enable `<logall_json>` in the ossec.conf file.

This generates a file at /var/ossec/logs/archives/archives.json containing one JSON object for every event the Wazuh Server receives, regardless of whether that event triggered an alert.

Wazuh automatically rotates and compresses that file daily, so a small cron job can copy the rotated files to your S3 bucket every day (S3 does not accept scp, so use a tool such as the AWS CLI, e.g. `aws s3 cp`) and delete the older local copies.

With this method, if you ever need to go back, you can not only recreate your full environment from a certain timeframe, but also analyze any data that might have flown under the radar (i.e. events that did not trigger a rule).
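A minimal Python sketch of that daily job, assuming the rotated files land under /var/ossec/logs/archives/<year>/<Mon>/ as `ossec-archive-<DD>.json.gz` (the compressed file name is an assumption extrapolated from the uncompressed paths shown later in this thread). It only selects old rotated files and deletes each one after a successful upload; `upload` is a hypothetical callable you would supply, for instance a wrapper around `aws s3 cp` or boto3. The script itself would be scheduled from cron.

```python
from __future__ import annotations

import time
from pathlib import Path
from typing import Callable, Optional

# Default archives location quoted above
ARCHIVES_DIR = Path("/var/ossec/logs/archives")
# Assumed layout of the rotated, compressed daily files: YYYY/Mon/ossec-archive-DD.json.gz
ROTATED_GLOB = "*/*/ossec-archive-*.json.gz"


def rotated_archives(base: Path = ARCHIVES_DIR, min_age_days: int = 1,
                     now: Optional[float] = None) -> list[Path]:
    """Return rotated archives last modified at least min_age_days ago.

    The live archives.json sits directly in `base`, so the two-level glob
    never matches it.
    """
    now = time.time() if now is None else now
    cutoff = now - min_age_days * 86400
    return sorted(p for p in base.glob(ROTATED_GLOB)
                  if p.is_file() and p.stat().st_mtime <= cutoff)


def ship_and_prune(files: list[Path], upload: Callable[[Path], None]) -> None:
    """Upload each file, then delete the local copy.

    `upload` is hypothetical (e.g. a wrapper around `aws s3 cp`); it must raise
    on failure, which stops the loop before that file is deleted.
    """
    for path in files:
        upload(path)
        path.unlink()
```

Keeping `min_age_days` at 1 or more leaves some margin after the daily rotation before a file is shipped and removed.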

swapnils

Allen Shau

Federico Gustavo Galland

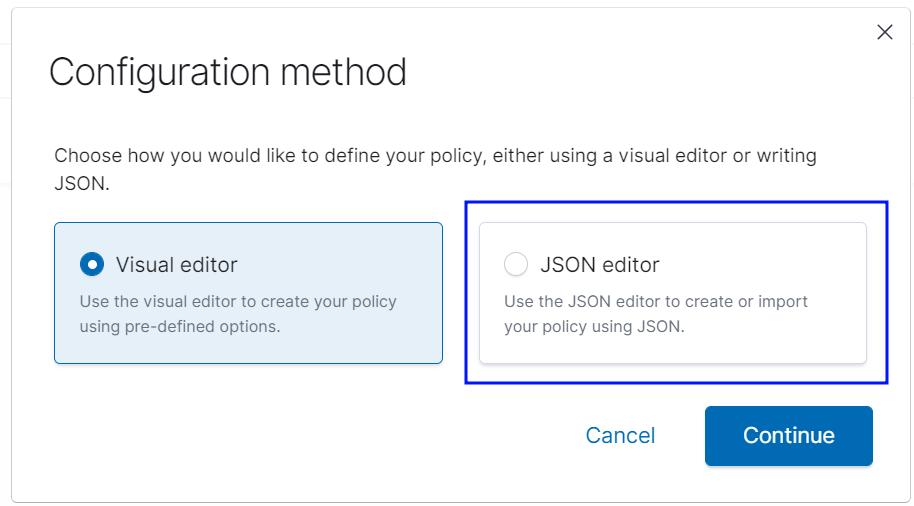

After you accept this, the policy will be applied.
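For reference, a deletion policy like the 7-day one mentioned at the start of the thread can be expressed in the OpenSearch Index State Management (ISM) format used by the Wazuh indexer's Index Management plugin. The Python sketch below only builds the JSON body; the state names, the `wazuh-alerts-*` index pattern and the `build_delete_policy` helper are illustrative assumptions. The printed JSON could be pasted into the policy editor or sent with `PUT _plugins/_ism/policies/<policy_id>`.

```python
import json


def build_delete_policy(retention_days: int,
                        index_pattern: str = "wazuh-alerts-*") -> dict:
    """Return an ISM policy body that deletes matching indices after retention_days."""
    if retention_days < 1:
        raise ValueError("retention_days must be positive")
    return {
        "policy": {
            "description": f"Delete {index_pattern} indices after {retention_days} days",
            "default_state": "hot",
            "states": [
                {
                    # Indices stay searchable here until they reach the age limit
                    "name": "hot",
                    "actions": [],
                    "transitions": [
                        {"state_name": "delete",
                         "conditions": {"min_index_age": f"{retention_days}d"}}
                    ],
                },
                {
                    # Terminal state: the index is removed
                    "name": "delete",
                    "actions": [{"delete": {}}],
                    "transitions": [],
                },
            ],
            # Attach the policy automatically to newly created matching indices
            "ism_template": [{"index_patterns": [index_pattern], "priority": 1}],
        }
    }


if __name__ == "__main__":
    print(json.dumps(build_delete_policy(7), indent=2))
```

The same helper gives the 90- and 120-day variants discussed later in the thread (`build_delete_policy(90)`, `build_delete_policy(120)`). In OpenSearch, `ism_template` only attaches the policy to indices created after the policy exists; existing indices have to be attached separately.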

Federico Gustavo Galland

Allen Shau

--

You received this message because you are subscribed to a topic in the Google Groups "Wazuh mailing list" group.

To unsubscribe from this topic, visit https://groups.google.com/d/topic/wazuh/L-yz_vK1lGg/unsubscribe.

To unsubscribe from this group and all its topics, send an email to wazuh+un...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/wazuh/4ed8d2b9-71bc-433b-a40c-685fbee1d761n%40googlegroups.com.

Federico Gustavo Galland


Allen Shau

Federico Gustavo Galland

swapnils

Federico Gustavo Galland

All the raw logs on the manager (/var/ossec/logs/archives/archives.json) are archived (compressed) automatically by Wazuh, and those compressed files need to be moved to AWS. *Only if logall_json is enabled.*

Index policies are specific to the indexers, and with this policy, indices older than 90 days will be deleted automatically.

Is there an option to compress these indices and move them to AWS instead of deleting them? Would that be of any use?

Or are the raw logs on the manager enough to regenerate the indices?
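Since each line of archives.json is a standalone JSON object (as described earlier in the thread), restored archive files can be read back line by line, for example to pick out a timeframe before re-ingesting it. A minimal Python sketch; it assumes each event carries a top-level `timestamp` string in ISO 8601 form, and the helper names are hypothetical. It only reads events and does not re-index anything by itself.

```python
import gzip
import json
from pathlib import Path
from typing import Iterable, Iterator


def iter_archived_events(path: Path) -> Iterator[dict]:
    """Yield one dict per line of an archive file (.json or .json.gz)."""
    opener = gzip.open if path.suffix == ".gz" else open
    with opener(path, "rt", encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line:
                continue
            try:
                obj = json.loads(line)
            except json.JSONDecodeError:
                continue  # skip partially written or corrupted lines
            if isinstance(obj, dict):
                yield obj


def on_day(events: Iterable[dict], day: str) -> Iterator[dict]:
    """Keep events whose (assumed) ISO 8601 `timestamp` starts with day, e.g. '2022-11-25'."""
    return (ev for ev in events if str(ev.get("timestamp", "")).startswith(day))
```

Because both helpers are generators, a multi-gigabyte archive is streamed rather than loaded into memory at once.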


swapnils

Federico Gustavo Galland

>> Hot/Warm/Cold Phase -- Is it a good practice to implement this? In a few of the scenarios I read, each node is configured with a different phase. I understand that hot would hold the data in active use, warm the data used less often, and cold the data used least. If it is advisable to configure this, could you please share KB articles so that I can study them?

- Is there an external requirement that I should adhere to in terms of data retention?

- If there is not, how long do I want to keep data?

- If there is, does it specify a minimum period during which data must remain readily accessible (hot storage)?

>> Enable archives -- Is it a good practice to implement this? Could you please share a relevant KB article on how to configure it?


swapnils

Federico Gustavo Galland

- An index policy that deletes indices after 120 days

- logall_json enabled, saving all events to /var/ossec/logs/archives/

- A script that periodically moves your archive files to your S3 bucket


swapnils

Hello Federico,

Thank you for your continued support! I have started integrating the agents now.

I have observed the following:

* After enabling logall_json, I can see two files being created on both the master and a worker; they look identical in size and grow to hundreds of GB.

MASTER > `-rw-r----- 2 wazuh wazuh 3.6G Nov 25 11:33 /var/ossec/logs/archives/archives.json`

MASTER > `-rw-r----- 2 wazuh wazuh 3.6G Nov 25 11:33 /var/ossec/logs/archives/2022/Nov/ossec-archive-25.json`

WORKER > `-rw-r----- 2 wazuh wazuh 1.6G Nov 25 11:40 /var/ossec/logs/archives/archives.json`

WORKER > `-rw-r----- 2 wazuh wazuh 1.6G Nov 25 11:40 /var/ossec/logs/archives/2022/Nov/ossec-archive-25.json`

Is it creating two duplicate files? Is this normal behavior?

* Compared to the wazuh-manager, I was surprised to see hardly any space being consumed on the wazuh-indexers (a few hundred MB). Will it grow later, once the data is processed?
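On the duplicate-file question: the `2` in the second column of those `ls -l` listings is the hard-link count, and both names show the same size and modification time, which suggests archives.json and the dated file are two names for the same data rather than two copies on disk. A Python sketch to confirm this on a server; the `dated_archive_path` helper reproduces the `YYYY/Mon/ossec-archive-DD.json` layout seen in the listings, and that layout (including zero-padded days) is an assumption rather than documented behavior.

```python
import datetime as dt
import os
from pathlib import Path

ARCHIVES_DIR = Path("/var/ossec/logs/archives")
# Fixed English month abbreviations, matching the "Nov" directory in the listings
MONTHS = ("Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec")


def dated_archive_path(base: Path, day: dt.date) -> Path:
    """Build YYYY/Mon/ossec-archive-DD.json under base (layout assumed from the listings)."""
    return base / str(day.year) / MONTHS[day.month - 1] / f"ossec-archive-{day.day:02d}.json"


def share_inode(a: Path, b: Path) -> bool:
    """True if both names refer to the same inode, i.e. one copy on disk."""
    return os.path.samefile(a, b)


if __name__ == "__main__":
    live = ARCHIVES_DIR / "archives.json"
    dated = dated_archive_path(ARCHIVES_DIR, dt.date.today())
    if live.exists() and dated.exists():
        print(f"links={live.stat().st_nlink} same inode={share_inode(live, dated)}")
    else:
        print("one of the files is missing; check the paths")
```

The same check can be done from a shell with `ls -li`, which prints the inode number in the first column; hard-linked names show the same number, and their data is stored only once.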

Regards,

swapnils

Federico Gustavo Galland


+1 (844) 349 2984

wazuh.com

federico...@wazuh.com

f-galland