Keysight ECal accuracy: comparing METAS Z0 states with firmware calibration


Michael Smith

May 19, 2022, 2:24:57 PM

to VNA Tools

After finally getting my error-corrected ECal impedance states stored as a databased cal standard, I can now measure an ECal module, set up a calibration config that uses the raw measured ECal impedance states vs. the stored databased standards, and then perform error correction. However, I've noticed some issues. First, my setup:

Keysight N5247B PNA-X

Keysight N4694-6003 67 GHz ECal module

Instrument setup: 10 MHz to 50 GHz, 300 Hz IF BW, 5000 points, 0 dBm power
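These sweep settings fix the frequency grid that the later time-domain comparison depends on. A minimal sketch, assuming a linear sweep (the post does not state the sweep type), that derives the step size, checks whether the grid is harmonically related (every point an integer multiple of the step, which low-pass time-domain transforms generally expect), and computes the alias-free time span 1/Δf. The function name `sweep_summary` is hypothetical.

```python
def sweep_summary(f_start_hz, f_stop_hz, points):
    """Return (step_hz, is_harmonic, alias_free_span_s) for a linear sweep."""
    step_hz = (f_stop_hz - f_start_hz) / (points - 1)
    # Harmonic grid: the start frequency is an integer multiple of the step,
    # so every point is f_n = n * step_hz.
    n_start = f_start_hz / step_hz
    is_harmonic = round(n_start) >= 1 and abs(n_start - round(n_start)) < 1e-6
    # A sampled frequency response repeats in time every 1 / step seconds.
    alias_free_span_s = 1.0 / step_hz
    return step_hz, is_harmonic, alias_free_span_s


step, harmonic, span = sweep_summary(10e6, 50e9, 5000)
print(f"step = {step / 1e6:.3f} MHz, harmonic = {harmonic}, alias-free span = {span * 1e9:.1f} ns")
```

With 10 MHz to 50 GHz in 5000 linear points the step works out to exactly 10 MHz, so the grid is harmonic (f_n = n × 10 MHz for n = 1 to 5000) and the alias-free span is 100 ns.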

Steps:

- Measure the ECal states, including switch terms, and save them as raw measurement data

- Measure my DUT (50 ohm transmission line, 50 ohm Beatty standard)

- Create a cal config of the raw impedance states referencing the databased ECal states

- Perform error correction of the raw data

- (BTW: getting to this point took several tries, as METAS kept crashing and exiting during either the calibration computation or the error-correction computation.)

- Visualize the error-corrected data with uncertainty information

- Export the waveforms as S2P

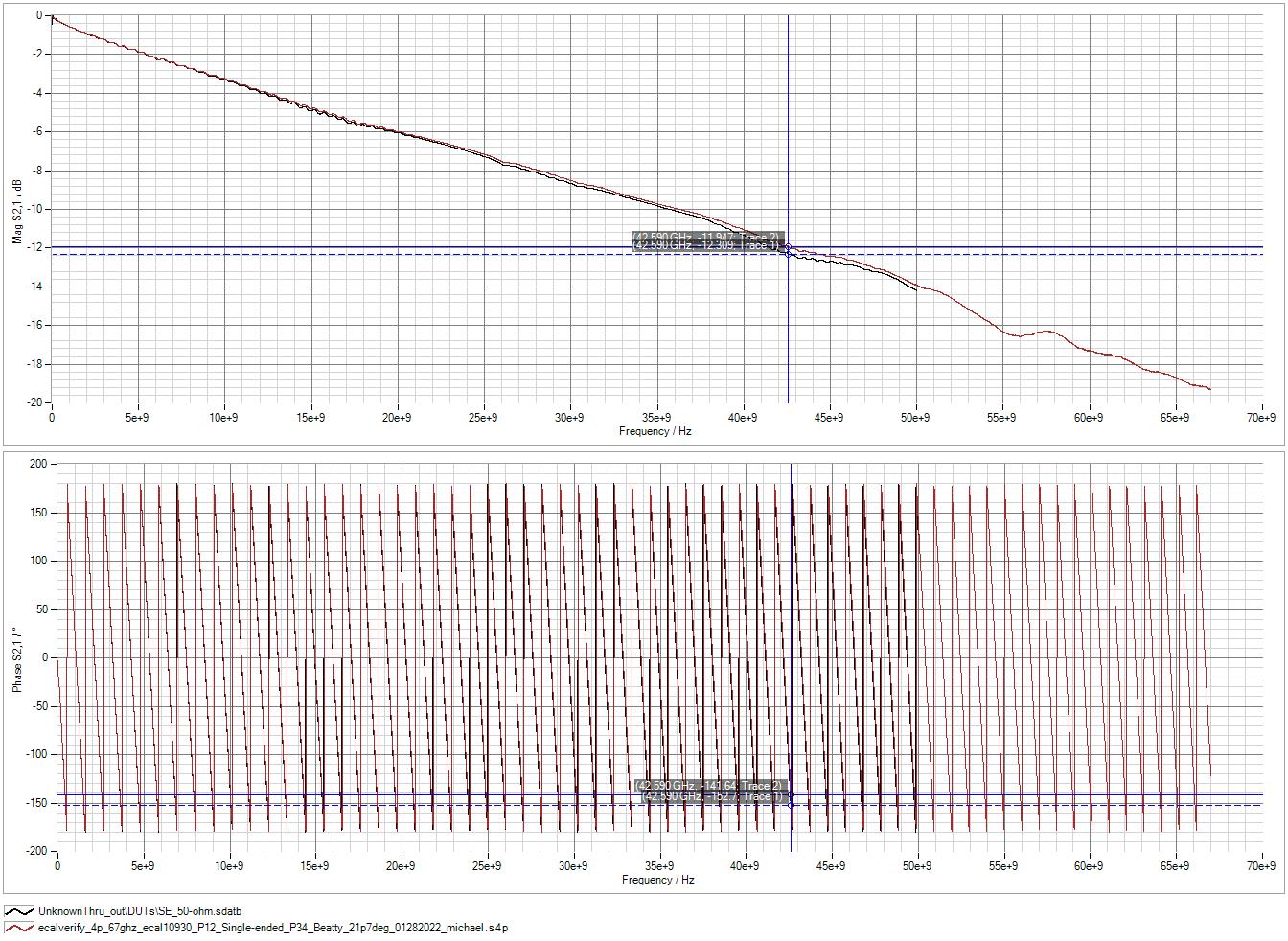

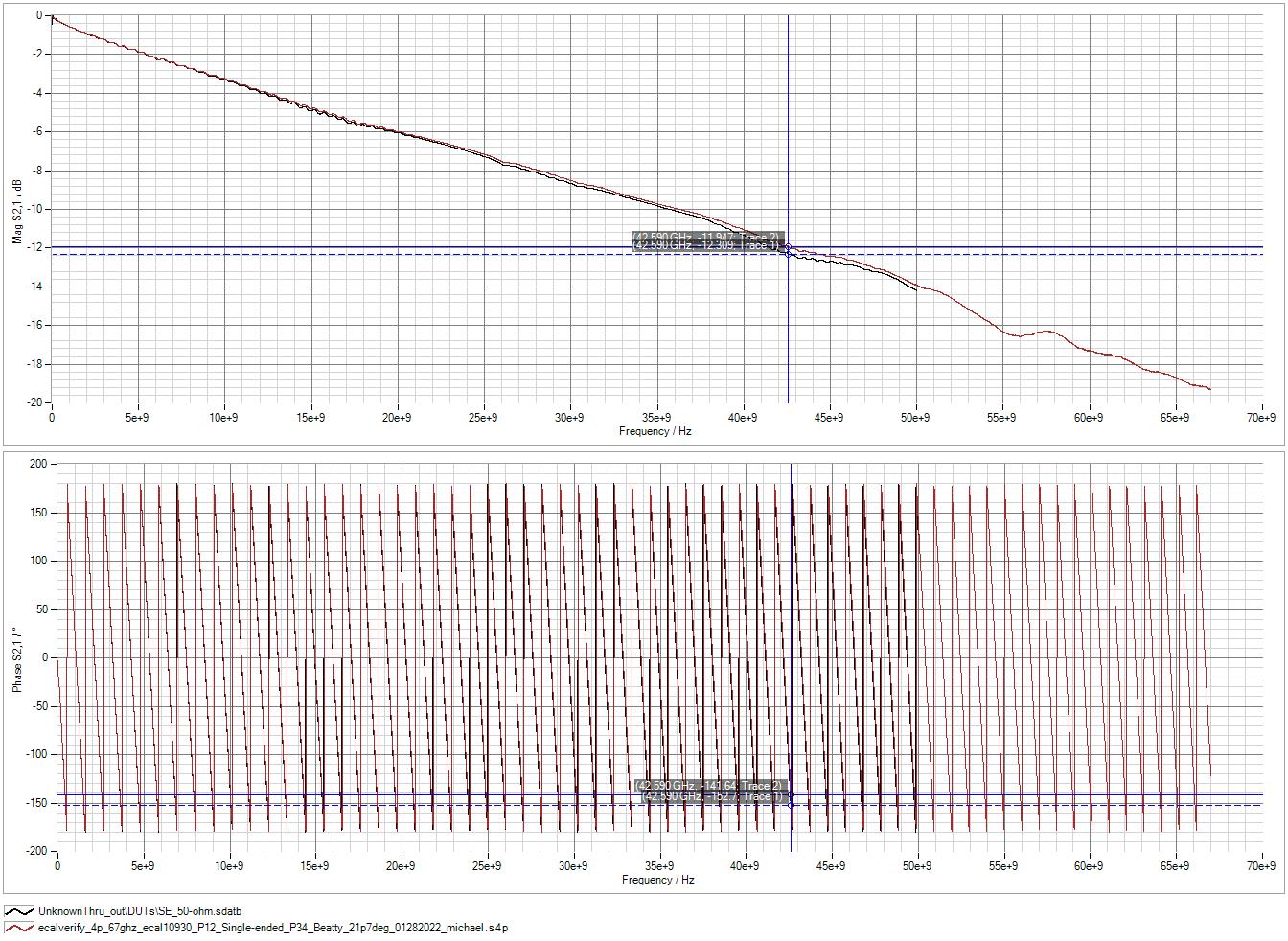

- Import into PLTS and compare with the same DUT error-corrected in PLTS (ECal calibration with the internal firmware), using the same ECal module under the same lab ambient conditions.
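The S2P exports can also be compared outside PLTS, for example to find where the two corrections start to disagree. A minimal sketch in plain Python, assuming Touchstone v1 2-port files that share the same frequency grid; `read_s2p`, `first_divergence`, and any threshold you pass are illustrative choices, not part of METAS VNA Tools or PLTS. Note that Touchstone v1 2-port files list S21 before S12 on each data line.

```python
import cmath
import math

# Touchstone v1 frequency units and their scale to Hz.
FREQ_SCALE = {"hz": 1.0, "khz": 1e3, "mhz": 1e6, "ghz": 1e9}


def _to_complex(a, b, fmt):
    """Convert one Touchstone value pair to a complex number."""
    if fmt == "ri":
        return complex(a, b)
    if fmt == "ma":
        return cmath.rect(a, math.radians(b))
    if fmt == "db":
        return cmath.rect(10 ** (a / 20), math.radians(b))
    raise ValueError(f"unsupported data format {fmt!r}")


def read_s2p(text):
    """Parse Touchstone v1 2-port text into (freqs_hz, list of [[S11, S12], [S21, S22]])."""
    unit, fmt = "ghz", "ma"  # Touchstone v1 defaults when the option line omits them
    numbers = []
    for raw in text.splitlines():
        line = raw.split("!", 1)[0].strip()  # drop comments
        if not line:
            continue
        if line.startswith("#"):  # option line, e.g. "# GHz S RI R 50"
            for tok in line[1:].lower().split():
                if tok in FREQ_SCALE:
                    unit = tok
                elif tok in ("ri", "ma", "db"):
                    fmt = tok
            continue
        numbers.extend(float(tok) for tok in line.split())
    freqs, smats = [], []
    # Each 2-port point is 9 numbers: f, S11, S21, S12, S22 (S21 before S12).
    for i in range(0, len(numbers) - len(numbers) % 9, 9):
        f, *v = numbers[i:i + 9]
        s11, s21, s12, s22 = (_to_complex(v[k], v[k + 1], fmt) for k in range(0, 8, 2))
        freqs.append(f * FREQ_SCALE[unit])
        smats.append([[s11, s12], [s21, s22]])
    return freqs, smats


def db(z):
    """Magnitude in dB, floored to avoid log10(0)."""
    return 20 * math.log10(max(abs(z), 1e-15))


def first_divergence(freqs, s_a, s_b, i, j, threshold_db):
    """First frequency where |S_ij| (0-based i, j) of two datasets differs by more than threshold_db."""
    for f, a, b in zip(freqs, s_a, s_b):
        if abs(db(a[i][j]) - db(b[i][j])) > threshold_db:
            return f
    return None
```

For example, after reading the METAS and PLTS exports with `read_s2p`, calling `first_divergence(freqs, s_metas, s_plts, 0, 0, 0.5)` would report the first frequency at which |S11| differs by more than 0.5 dB; the threshold is arbitrary.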

I notice a few things:

- There is significantly more noise on both the 50 ohm line and the Beatty standard in the METAS-calibrated data than in the PLTS/firmware-calibrated data

- The computed rise time in the time-domain plots is longer for the METAS-calibrated data than for PLTS

- S11 diverges between the two, but not until around 24 GHz.
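To put a number on "significantly more noise" instead of judging it by eye, one option (an assumption here, not something METAS VNA Tools or PLTS is known to report) is to estimate the white-noise level of each trace from its second differences, which cancel any locally linear trend. Genuine structure, such as ripple from a mismatch, also contributes, so compare the same frequency window on both traces. The name `noise_rms` is hypothetical.

```python
import math


def noise_rms(trace):
    """Estimate the RMS of white noise riding on a smooth trace.

    Uses second differences x[n-1] - 2*x[n] + x[n+1]: a locally linear trend
    cancels, while independent noise of RMS sigma contributes variance
    (1 + 4 + 1) * sigma**2 = 6 * sigma**2.
    """
    if len(trace) < 3:
        raise ValueError("need at least 3 points")
    d2 = [trace[n - 1] - 2 * trace[n] + trace[n + 1] for n in range(1, len(trace) - 1)]
    return math.sqrt(sum(d * d for d in d2) / (6 * len(d2)))


# Hypothetical usage: |S21| in dB over the same frequency window from each export.
# print(noise_rms(s21_db_metas[1000:2000]), noise_rms(s21_db_plts[1000:2000]))
```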

My obvious question is "WHY" to everything above. Why more noise? The S2P doesn't contain any uncertainty data, and the noise was also evident in the visualization inside METAS, so it is not a result of the S2P conversion. Why is a longer rise time shown when the frequency content of both does not exceed 50 GHz? PLTS treats the time-domain conversion of both waveforms the same way. Why is there an impedance difference between the two 50 ohm traces, while the two Beatty standard traces are relatively similar?
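On the rise-time question, one plausible factor (offered as a hedge, not a diagnosis): with both datasets stopping at 50 GHz, the stop frequency alone does not set the edge. The step response also depends on how |S21| rolls off inside the band and on the time-domain window, so a corrected S21 with slightly more high-frequency attenuation can show a slower edge at the same stop frequency (the common 0.35/BW rule of thumb puts a 50 GHz limit at roughly 7 ps). To check whether the difference lives in the data rather than in the display, a minimal sketch that measures the 10-90 % rise time of exported step responses with one consistent definition; it assumes the first and last samples are the settled levels, and `rise_time_10_90` is a hypothetical name.

```python
def rise_time_10_90(times, values):
    """10 %-90 % rise time of a sampled step response, using linear interpolation.

    Assumes values[0] and values[-1] are the settled low and high levels.
    """
    lo, hi = values[0], values[-1]

    def crossing(fraction):
        level = lo + fraction * (hi - lo)
        for k in range(1, len(values)):
            v0, v1 = values[k - 1], values[k]
            # First non-flat segment that brackets the level.
            if v0 != v1 and (v0 - level) * (v1 - level) <= 0:
                t0, t1 = times[k - 1], times[k]
                return t0 + (level - v0) * (t1 - t0) / (v1 - v0)
        raise ValueError("step never crosses the requested level")

    return crossing(0.9) - crossing(0.1)


# Ideal 10 ps linear ramp sampled every 1 ps: its 10-90 % rise time is 8 ps.
t_ps = [float(t) for t in range(21)]
ramp = [min(max((t - 5) / 10, 0.0), 1.0) for t in t_ps]
print(rise_time_10_90(t_ps, ramp))
```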

I'd love to get to the bottom of this, since we need to correlate results from calibration with PLTS (our traditional process and BKM) with those from METAS calibration using ECal (and also probe calibration) to keep moving forward.

Thanks!

M

Michael Wollensack METAS

May 20, 2022, 2:03:45 AM

to VNA Tools

Hi,

What kind of calibration algorithm did you choose using the ECal and METAS VNA Tools? SOLT?

I think the firmware is using Unknown Thru if you use the ECal.

This could explain the ripple on the S12 of the 50 Ohm Line.

The reason for that is that the transmission states of the ECal are not very stable.

So please re-compute it using Unknown Thru in VNA Tools.
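The reason Unknown Thru helps is that an unknown-thru (SOLR) calibration only requires the thru to be reciprocal, not known, so the instability of the ECal transmission states no longer enters through a stored thru definition. A minimal sketch of the textbook SOLR relation (Ferrero and Pisani), written in the common 8-term notation (e00, e11, e10e01 at port 1; e22, e33, e23e32 at port 2). It illustrates the principle only and is not a description of how METAS VNA Tools implements it; all helper names are hypothetical.

```python
import cmath


def matmul(a, b):
    """2x2 complex matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def s_to_t(s):
    """S -> cascade (T) matrix, convention [b1, a1]^T = T [a2, b2]^T."""
    (s11, s12), (s21, s22) = s
    return [[(s12 * s21 - s11 * s22) / s21, s11 / s21], [-s22 / s21, 1 / s21]]


def t_to_s(t):
    """Inverse of s_to_t."""
    (t11, t12), (t21, t22) = t
    det_t = t11 * t22 - t12 * t21
    return [[t12 / t22, det_t / t22], [1 / t22, -t21 / t22]]


def cascade(*networks):
    """S-matrix of two-ports connected left to right."""
    t = [[1, 0], [0, 1]]
    for s in networks:
        t = matmul(t, s_to_t(s))
    return t_to_s(t)


def unknown_thru_tracking(r1, r2, m_thru, estimate):
    """Transmission tracking e10*e32 from a reciprocal thru of unknown value.

    r1 = e10*e01 and r2 = e23*e32 are the reflection trackings from the one-port
    calibrations; m_thru is the switch-corrected raw S-matrix of the thru.
    Since det(T) = S12/S21 and det(T_thru) = 1 for a reciprocal thru,
    (e10*e32)**2 = r1 * r2 * M21 / M12. The root closer to a rough estimate
    (e.g. from the thru's expected electrical delay) is returned.
    """
    root = cmath.sqrt(r1 * r2 * m_thru[1][0] / m_thru[0][1])
    return root if abs(root - estimate) <= abs(root + estimate) else -root
```

Because only reciprocity is used, the same relation holds whether the thru is an ECal AB state, an adapter, or a cable.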

Regards

Michael

Michael Smith

May 23, 2022, 4:54:16 PM

to VNA Tools

Thanks, Michael. I'm giving that another go with unknown thrus for AB1/AB2. If this works, would it have been unnecessary to save the error-corrected AB1 and AB2 as cal standards?

Michael Wollensack METAS

May 24, 2022, 4:55:15 AM

to VNA Tools

Hi,

> If this works, then would it have been unnecessary to save the error corrected AB1 and AB2 as cal standards?

Yes

> I get the same results using an ideal thru

Make sure that you generate a new calibration config of the type Unknown Thru and not SOLT.

I think you just replaced the definition for the transmission standard in an existing calibration config of the type SOLT.

Regards

Michael

Michael Smith

May 25, 2022, 3:23:44 PM

to VNA Tools

You're right. That's exactly what I did. I'll try again with unknown thru type. Looks like switch terms are needed for that mode, so I'll start over with my cal to obtain those.

Michael Smith

Jun 1, 2022, 4:16:18 PM

to VNA Tools

The unknown thru calibration config asks for switch terms. When I perform a measurement series of the ECU, only the A, B, and AB states are saved. Which of those would be considered "switch terms" to satisfy the unknown thru config, or are they even necessary? Should I only be concerned with the 7 impedance states for A and for B on ports 1-4 (4-port cal)?

Michael Wollensack METAS

Jun 2, 2022, 2:10:53 AM

to VNA Tools

Hi,

You can measure all four S-parameters and the switch terms (Sx,x and Switch Terms Ports: 1, 2) of the AB states of your ECal, and then you can use the switch terms of AB1 for your calibration config. The S-parameters of your AB+ state you use as the unknown thru standard.

A better way would be to put a physical thru (an ideal thru or an adapter) between the ports instead of the ECal. This ideal thru or adapter could then be your unknown thru.
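The Unknown Thru config asks for switch terms because the unknown-thru solution works on the 8-term error model, which assumes the raw S-parameters have already had the imperfect termination of the non-driven port removed. A minimal sketch of that standard switch-term correction (as formulated by Marks, 1997), assuming raw ratios from a forward and a reverse sweep and switch terms defined as gamma_f = a2/b2 (port 1 driving) and gamma_r = a1/b1 (port 2 driving). The function names are hypothetical, and this is not a description of VNA Tools internals.

```python
def correct_switch_terms(m11f, m21f, m12r, m22r, gamma_f, gamma_r):
    """Remove switch-term error from raw 2-port ratio measurements.

    Forward sweep (port 1 driven): m11f = b1/a1, m21f = b2/a1, gamma_f = a2/b2.
    Reverse sweep (port 2 driven): m12r = b1/a2, m22r = b2/a2, gamma_r = a1/b1.
    Returns [[S11, S12], [S21, S22]].
    """
    d = 1 - m21f * m12r * gamma_f * gamma_r
    s11 = (m11f - m12r * m21f * gamma_f) / d
    s21 = (m21f - m22r * m21f * gamma_f) / d
    s12 = (m12r - m11f * m12r * gamma_r) / d
    s22 = (m22r - m21f * m12r * gamma_r) / d
    return [[s11, s12], [s21, s22]]


def simulate_raw(s, gamma_f, gamma_r):
    """Raw ratios a 2-port S would produce with imperfect non-driven port terminations."""
    (s11, s12), (s21, s22) = s
    # Forward: a1 = 1, and the port-2 termination reflects a2 = gamma_f * b2.
    b2 = s21 / (1 - s22 * gamma_f)
    m21f, m11f = b2, s11 + s12 * gamma_f * b2
    # Reverse: a2 = 1, and the port-1 termination reflects a1 = gamma_r * b1.
    b1 = s12 / (1 - s11 * gamma_r)
    m12r, m22r = b1, s22 + s21 * gamma_r * b1
    return m11f, m21f, m12r, m22r
```

In the approach described above, the switch terms measured with the AB1 state would play the role of gamma_f and gamma_r.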

Regards

Michael

Michael Smith

Jun 7, 2022, 4:06:04 PM

to VNA Tools

I keep making incremental progress on this methodology, Michael, but progress nonetheless.

Using unknown thru, I now have VERY good correlation of my test structure with the same structure measured using a firmware ECal calibration. There are some gaps, but I attribute those to having used a non-databased mechanical cal at the start to characterize the ECal impedance states, which I later saved as a databased standard. I think my next step is to properly characterize the ECal states using a databased mechanical kit. Since I don't currently own one, it will be some time before I can build up the accuracy, but at least for now the major building blocks are in place. Much appreciation for all your help.
