Connecting ScyllaDB manager to multi-DC cluster

Mike Tihonchik

<mike.tihonchik@gmail.com> 25 Oct 2022, 16:33:43

to ScyllaDB users

I have an 8-node cluster running in AWS (4 DCs, 2 boxes each):

    sh-4.4$ nodetool status
    Datacenter: DC1
    ===============
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address        Load     Tokens  Owns  Host ID                               Rack
    UN  172.31.xx.xxx  7.29 MB  256     ?     ab431b6f-538f-4a77-b6ba-ae01820328c1  alpha
    UN  172.31.xx.xxx  9.32 MB  256     ?     6bb031d3-ecde-4151-a1ae-f33d0189a091  beta
    Datacenter: DC2
    ===============
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address        Load     Tokens  Owns  Host ID                               Rack
    UN  172.31.xx.x    7.92 MB  256     ?     817e0515-88f0-4bea-99d2-3da80115f41a  beta
    UN  172.31.xx.xx   8.9 MB   256     ?     22462f0c-c19c-4dc0-b6b0-faa5f7413376  alpha
    Datacenter: DC3
    ===============
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address        Load     Tokens  Owns  Host ID                               Rack
    UN  172.31.xx.xx   8.3 MB   256     ?     3f2d70b3-6159-4b5d-9e67-5d805e6da058  alpha
    UN  172.31.xx.xxx  9.43 MB  256     ?     917415d9-5568-45ea-9c50-aa4a9e573604  beta
    Datacenter: DC4
    ===============
    Status=Up/Down
    |/ State=Normal/Leaving/Joining/Moving
    --  Address        Load     Tokens  Owns  Host ID                               Rack
    UN  172.31.xx.xxx  7.88 MB  256     ?     1029bd26-0e1f-434a-8edb-428ed0780bdb  alpha
    UN  172.31.xx.xx   8.18 MB  256     ?     57a8c866-0d00-4ded-aa5c-5c4ada9e4bcd  beta

On a separate box, I have set up ScyllaDB Manager. I want to use my cluster DB to store any Manager-related data (rather than setting up another stand-alone instance for the Manager), hence I created a new keyspace:

CREATE KEYSPACE IF NOT EXISTS n3_scylla_manager WITH replication = {'class': 'SimpleStrategy', 'replication_factor': 1};

The Manager has the following configuration (right now pointing to the two boxes in DC1):

    # Scylla Manager database, used to store management data.
    database:
      hosts:
        - 172.31.xx.xxx
        - 172.31.xx.xxx
    # Local datacenter name, specify if using a remote, multi-dc cluster.
    #  local_dc: DC1
    #
    # Keyspace for management data.
      keyspace: n3_scylla_manager
    #  replication_factor: 3

If I leave the portion in red commented out, ScyllaDB Manager starts up fine, but I am pretty sure that is the wrong configuration. If I uncomment that line, it blows up on start. I have read that for a multi-DC setup, the name should be that of the DC closest to the Manager (in my case it does not matter, but let's say it is DC1). On start, I get the following error:

{"L":"INFO","T":"2022-10-25T20:26:33.192Z","M":"Migrating schema","n3_scylla_manager","_trace_id":"vYvYWhS4TIS_4yN_nLdTnA"}

{"L":"ERROR","T":"2022-10-25T20:26:33.766Z","M":"Bye","error":"db init: list migrations: Cannot achieve consistency level for cl LOCAL_QUORUM. Requires 1, alive 0

STARTUP ERROR: db init: list migrations: Cannot achieve consistency level for cl LOCAL_QUORUM. Requires 1, alive 0","_trace_id":"vYvYWhS4TIS_4yN_nLdTnA","errorStack":"......

systemd[1]: scylla-manager.service: Main process exited, code=exited, status=1/FAILURE

systemd[1]: scylla-manager.service: Failed with result 'exit-code'.

I tried to find a solution, but have had no success yet. What am I doing wrong?
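For readers puzzling over the `Requires 1, alive 0` part of the error, here is a minimal Python sketch of the arithmetic behind a quorum check. It is not Scylla's code: the function names `quorum` and `local_quorum_check` and the replica placements are hypothetical. It assumes that a quorum is `RF // 2 + 1` and that LOCAL_QUORUM counts only live replicas in the coordinator's local DC (the value of `local_dc`). `SimpleStrategy` is not DC-aware, so with a replication factor of 1 the single replica of a given row may sit in any of the four DCs, and the local count can be zero.

```python
from typing import List, Tuple


def quorum(replication_factor: int) -> int:
    """Number of replicas that must respond to form a quorum."""
    return replication_factor // 2 + 1


def local_quorum_check(replica_dcs: List[str], local_dc: str) -> Tuple[int, int, bool]:
    """Return (required, alive_local, satisfied) for a LOCAL_QUORUM request.

    replica_dcs holds the DC of each live replica of the requested partition.
    Assumption of this sketch: 'required' is derived from the total replication
    factor, as for a keyspace that has no per-DC replication settings.
    """
    required = quorum(len(replica_dcs))
    # Only replicas in the coordinator's local DC count towards LOCAL_QUORUM.
    alive_local = sum(1 for dc in replica_dcs if dc == local_dc)
    return required, alive_local, alive_local >= required


# Hypothetical placement: RF=1 and the only replica happens to live in DC3.
print(local_quorum_check(["DC3"], local_dc="DC1"))  # (1, 0, False)
# If the single replica happened to live in DC1, the same request would pass.
print(local_quorum_check(["DC1"], local_dc="DC1"))  # (1, 1, True)
```

Read this way, the message is consistent with a keyspace whose only replica sits outside DC1. A `NetworkTopologyStrategy` keyspace with replicas in the local DC would likely avoid this particular failure, though that is a separate question from whether the managed cluster should host the Manager's data at all.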

Tomer Sandler

<tomer@scylladb.com> 25 Oct 2022, 17:19:20

to scylladb-users@googlegroups.com

Using the Scylla cluster that you plan to register in the Scylla Manager server (the cluster on which the repair/backup tasks will run) as Scylla Manager's backend DB is not a great idea.

You have already deployed the Scylla Manager (SM) server on a separate instance (it must NOT run on the Scylla cluster nodes). A single-node SM deployment comes with a single-node Scylla Ent as the backend DB; that's the most common deployment.

There is another option: deploying a separate Scylla cluster as the backend DB for the SM server, in order to have redundancy. But that's hardly ever used.

Don't forget you also need to install Scylla Manager Agent on each of the nodes in the cluster you wish to manage.

I should also point out that Scylla Manager is FREE only when used to manage up to 5 nodes. Your cluster is 8 nodes (2 x 4 DCs), and thus requires a Scylla Ent license.

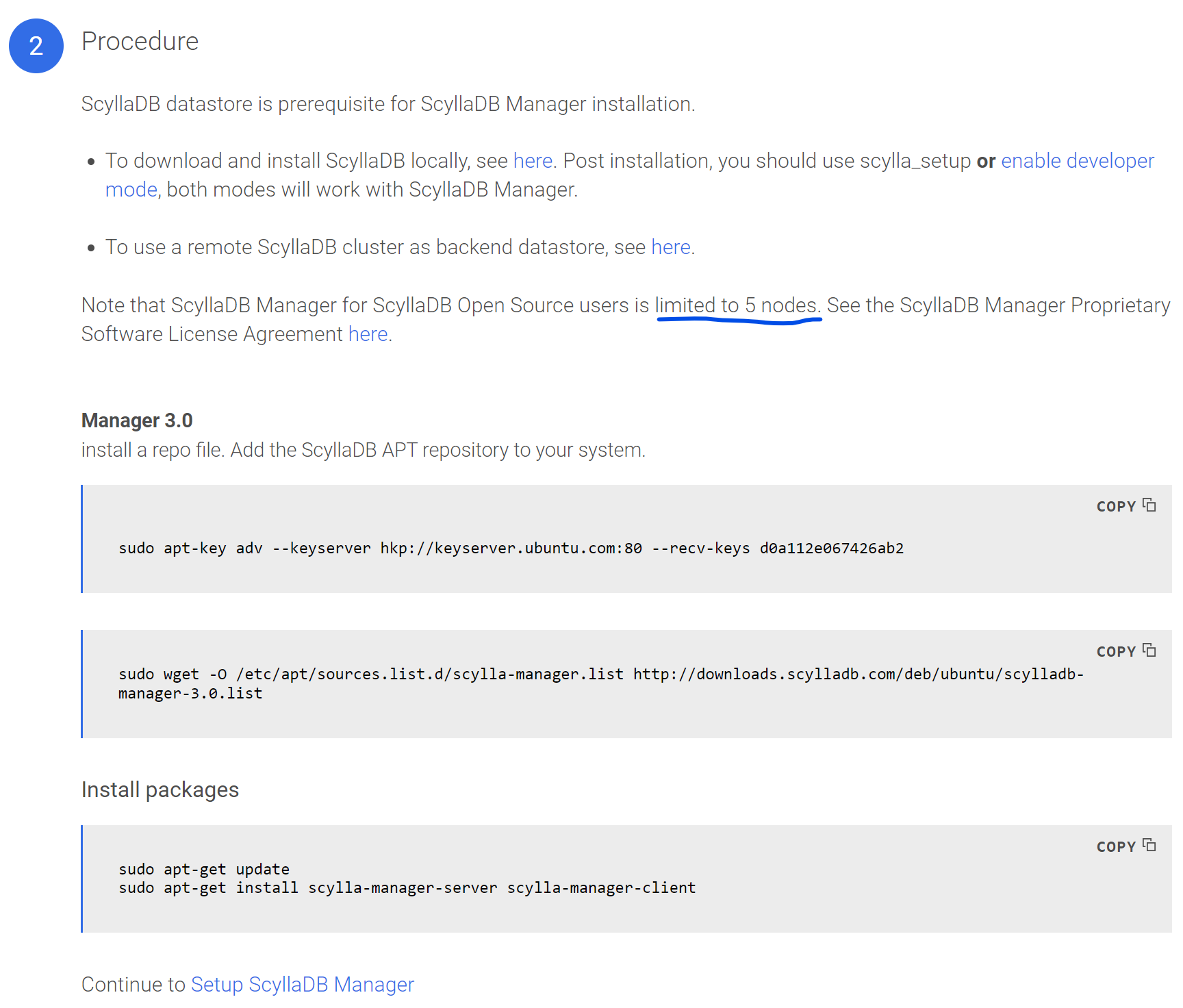

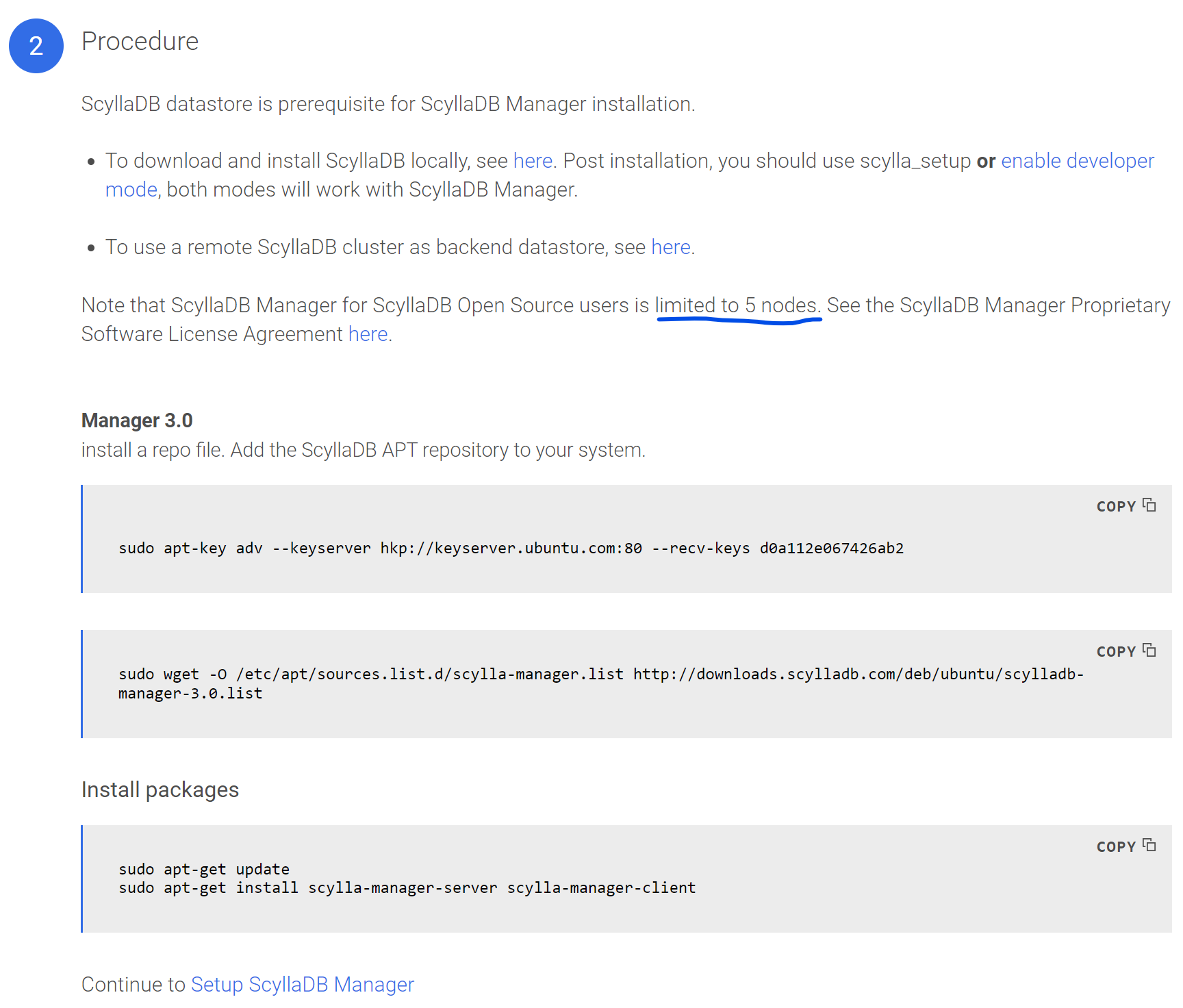

Example of download for Ubuntu 20.04: https://www.scylladb.com/download/?platform=ubuntu-20.04&version=manager-3.0#manager

--

You received this message because you are subscribed to the Google Groups "ScyllaDB users" group.

To unsubscribe from this group and stop receiving emails from it, send an email to scylladb-user...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/scylladb-users/c6225fa0-468d-48a3-983a-8519d263ec6fn%40googlegroups.com.

Mike Tihonchik

<mike.tihonchik@gmail.com> 26 Oct 2022, 00:30:03

to scylladb-users@googlegroups.com

Thank you, Tomer. A quick follow-up question: I know that the free version of Scylla Manager supports only 5 nodes, so does that mean it will not run in my environment at all? I was hoping to be able to connect at least 5 of my 8 nodes to Scylla Manager (I am working on a POC and need to prove that it is worth purchasing the Enterprise edition). So is it not going to run at all? Or is it possible to connect 5 out of 8?

The rest makes sense. I will set up a separate Scylla instance for backup/restore.

Guy Carmin

<guycarmin@scylladb.com> 26 Oct 2022, 04:17:35

to scylladb-users@googlegroups.com

Hi Mike,

You should be able to connect 5 of the 8 nodes.

Having said that, if you are running a POC to prove the value of Scylla Enterprise, we would be happy to assist you and start an "official POC" if you want (then you would be able to use the Enterprise version AND get support and assistance from our tech team).

If this is interesting, please let me know.

Cheers,

Guy.

Tomer Sandler

<tomer@scylladb.com> 26 Oct 2022, 12:06:55

to scylladb-users@googlegroups.com

Running it on a portion of a cluster (5/8 nodes) is problematic for the following reasons:

1. Repair and backup are meant to be performed on the whole cluster. Running them on a portion is counterproductive for the purpose of repair (data consistency) and backup (DR).

2. This use case doesn't get QA coverage, as it's not a valid use case. Scylla Manager Agent must be installed on all nodes in the cluster. You might experience repair/backup task failures.

My recommendation is to test it using a ScyllaDB cluster of 5 nodes or fewer. Or better yet, join forces with our Solution Architects to demonstrate the best value of the Enterprise Edition.
