Heap increase from ~25 GB to ~35 GB after upgrade to 3.5

303 views

Skip to first unread message

Sven Selberg

Apr 21, 2022, 5:57:19 AM4/21/22

to Repo and Gerrit Discussion

Hi,

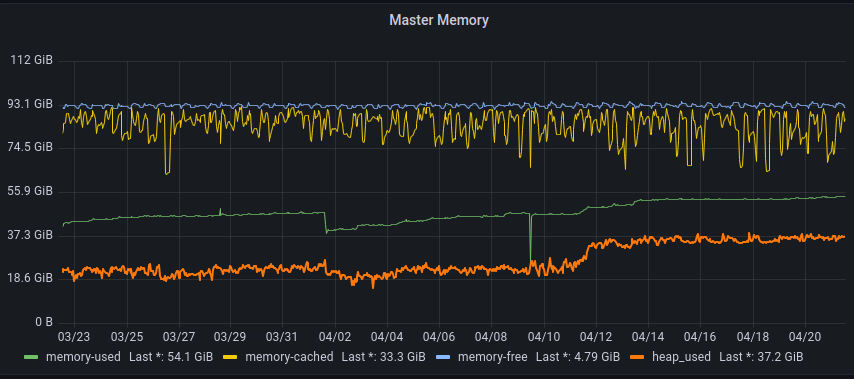

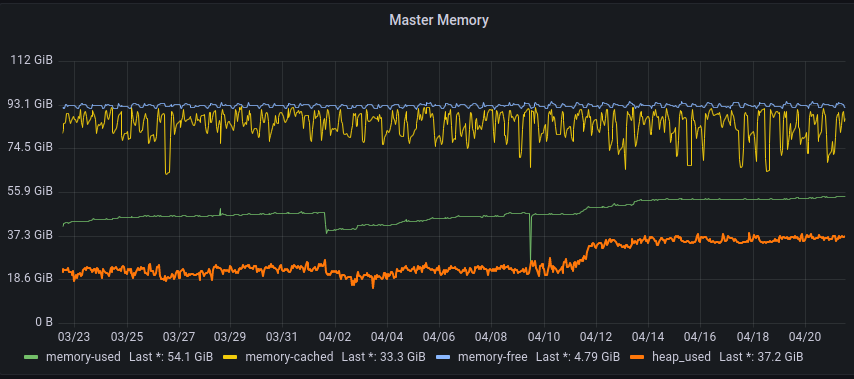

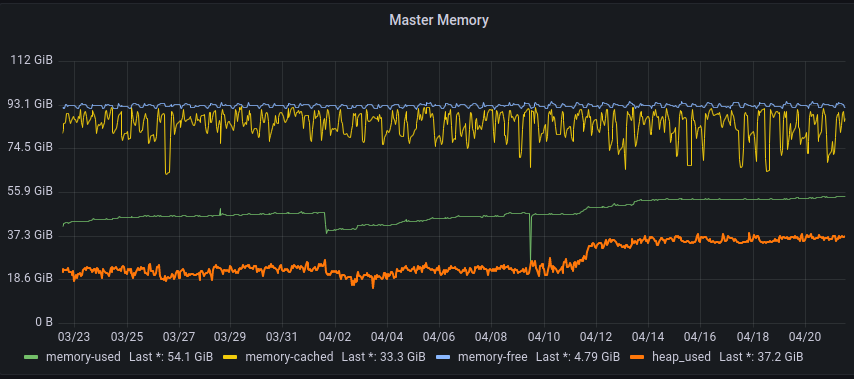

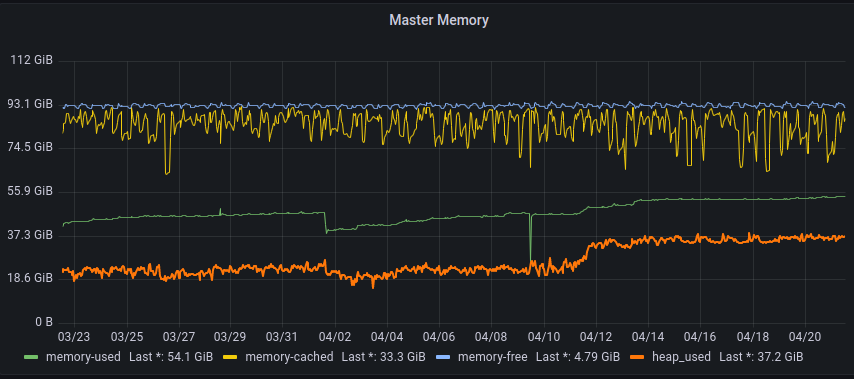

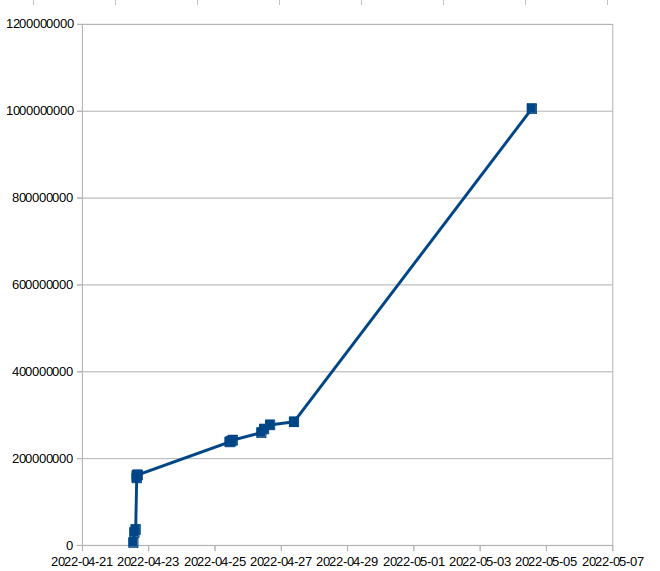

Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.

Is this expected of v3.5.1?

I cannot find any reports of similar findings by others so it might be something specific to our setup.

If so does anyone have any tips regarding likely culprits?

/Sven

/Sven

Sven Selberg

Apr 21, 2022, 6:06:25 AM4/21/22

to Repo and Gerrit Discussion

To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...

num #instances #bytes class name (module)

-------------------------------------------------------

1: 80632689 14235174048 [B (java...@11.0.14.1)

2: 21190716 3262721856 [Ljava.util.concurrent.ConcurrentHashMap$Node; (java...@11.0.14.1)

3: 71315481 2282095392 java.lang.String (java...@11.0.14.1)

4: 2813057 2246859920 [I (java...@11.0.14.1)

5: 21309494 2216187376 java.util.concurrent.ConcurrentHashMap (java...@11.0.14.1)

6: 42338725 2032258800 com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl

7: 21169188 1524181536 com.google.gerrit.metrics.dropwizard.TimerImpl1

8: 21169309 1185481304 com.google.gerrit.metrics.AutoValue_Field

9: 23548142 1130310816 java.util.concurrent.ConcurrentHashMap$Node (java...@11.0.14.1)

10: 21839033 1048273584 java.util.HashMap$Node (java...@11.0.14.1)

11: 21169187 1016120976 com.google.gerrit.metrics.dropwizard.TimerImpl1$1

12: 21169283 677417984 [Lcom.google.gerrit.metrics.Field;

13: 1020330 613677080 [J (java...@11.0.14.1)

14: 23854059 572497416 java.util.Optional (java...@11.0.14.1)

15: 21287008 340592128 java.lang.Object (java...@11.0.14.1)

16: 20 319291872 [Lorg.h2.util.CacheObject;

17: 82437 308442936 [Ljava.util.HashMap$Node; (java...@11.0.14.1)

18: 899505 214747856 [Ljava.lang.Object; (java...@11.0.14.1)

19: 2260005 162328344 [Lorg.h2.value.Value;

20: 3818449 152737960 org.eclipse.jgit.lib.ObjectId

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...

num #instances #bytes class name (module)

-------------------------------------------------------

1: 80632689 14235174048 [B (java...@11.0.14.1)

2: 21190716 3262721856 [Ljava.util.concurrent.ConcurrentHashMap$Node; (java...@11.0.14.1)

3: 71315481 2282095392 java.lang.String (java...@11.0.14.1)

4: 2813057 2246859920 [I (java...@11.0.14.1)

5: 21309494 2216187376 java.util.concurrent.ConcurrentHashMap (java...@11.0.14.1)

6: 42338725 2032258800 com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl

7: 21169188 1524181536 com.google.gerrit.metrics.dropwizard.TimerImpl1

8: 21169309 1185481304 com.google.gerrit.metrics.AutoValue_Field

9: 23548142 1130310816 java.util.concurrent.ConcurrentHashMap$Node (java...@11.0.14.1)

10: 21839033 1048273584 java.util.HashMap$Node (java...@11.0.14.1)

11: 21169187 1016120976 com.google.gerrit.metrics.dropwizard.TimerImpl1$1

12: 21169283 677417984 [Lcom.google.gerrit.metrics.Field;

13: 1020330 613677080 [J (java...@11.0.14.1)

14: 23854059 572497416 java.util.Optional (java...@11.0.14.1)

15: 21287008 340592128 java.lang.Object (java...@11.0.14.1)

16: 20 319291872 [Lorg.h2.util.CacheObject;

17: 82437 308442936 [Ljava.util.HashMap$Node; (java...@11.0.14.1)

18: 899505 214747856 [Ljava.lang.Object; (java...@11.0.14.1)

19: 2260005 162328344 [Lorg.h2.value.Value;

20: 3818449 152737960 org.eclipse.jgit.lib.ObjectId

/Sven

Sven Selberg

Apr 21, 2022, 6:47:37 AM4/21/22

to Repo and Gerrit Discussion

On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:

On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.

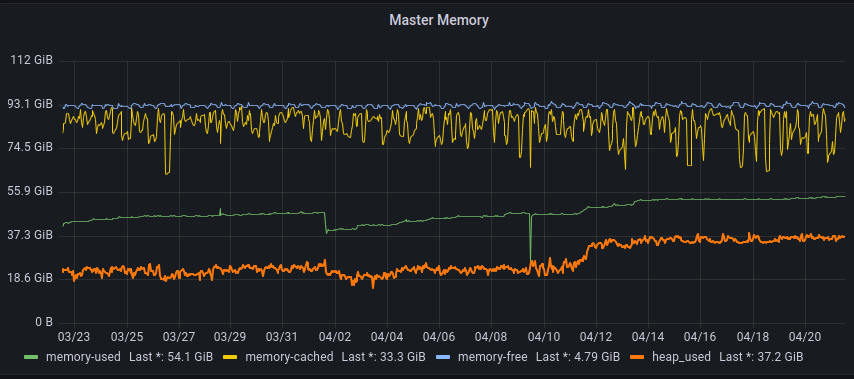

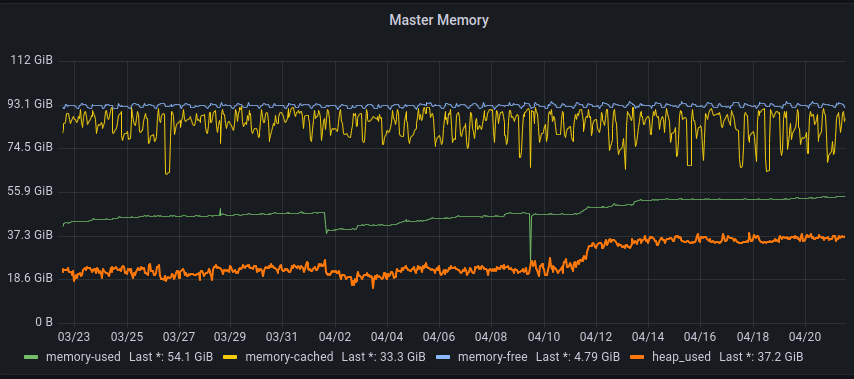

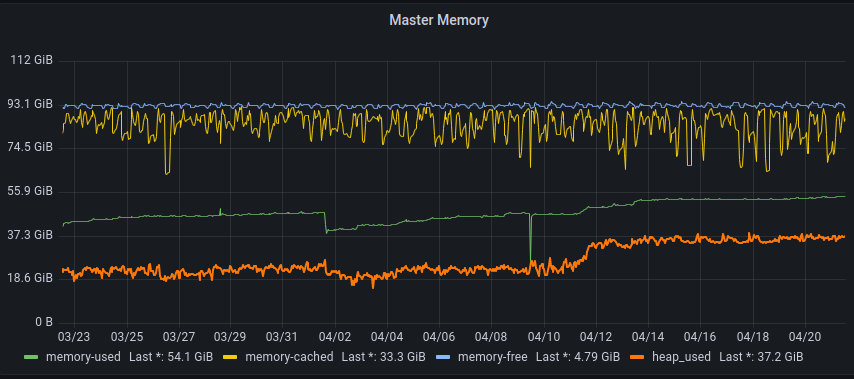

Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.

Matthias Sohn

Apr 21, 2022, 8:11:42 AM4/21/22

to Sven Selberg, Repo and Gerrit Discussion

On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:

On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...

maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?

num #instances #bytes class name (module)

-------------------------------------------------------

1: 80632689 14235174048 [B (java...@11.0.14.1)

some of the classnames in this histogram are truncated too much to be recognizable

2: 21190716 3262721856 [Ljava.util.concurrent.ConcurrentHashMap$Node; (java...@11.0.14.1)

3: 71315481 2282095392 java.lang.String (java...@11.0.14.1)

4: 2813057 2246859920 [I (java...@11.0.14.1)

5: 21309494 2216187376 java.util.concurrent.ConcurrentHashMap (java...@11.0.14.1)

6: 42338725 2032258800 com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl

7: 21169188 1524181536 com.google.gerrit.metrics.dropwizard.TimerImpl1

8: 21169309 1185481304 com.google.gerrit.metrics.AutoValue_Field

9: 23548142 1130310816 java.util.concurrent.ConcurrentHashMap$Node (java...@11.0.14.1)

10: 21839033 1048273584 java.util.HashMap$Node (java...@11.0.14.1)

11: 21169187 1016120976 com.google.gerrit.metrics.dropwizard.TimerImpl1$1

12: 21169283 677417984 [Lcom.google.gerrit.metrics.Field;

13: 1020330 613677080 [J (java...@11.0.14.1)

14: 23854059 572497416 java.util.Optional (java...@11.0.14.1)

15: 21287008 340592128 java.lang.Object (java...@11.0.14.1)

16: 20 319291872 [Lorg.h2.util.CacheObject;

17: 82437 308442936 [Ljava.util.HashMap$Node; (java...@11.0.14.1)

18: 899505 214747856 [Ljava.lang.Object; (java...@11.0.14.1)

19: 2260005 162328344 [Lorg.h2.value.Value;

20: 3818449 152737960 org.eclipse.jgit.lib.ObjectId

/Sven

--

--

To unsubscribe, email repo-discuss...@googlegroups.com

More info at http://groups.google.com/group/repo-discuss?hl=en

---

You received this message because you are subscribed to the Google Groups "Repo and Gerrit Discussion" group.

To unsubscribe from this group and stop receiving emails from it, send an email to repo-discuss...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/373da857-9178-4e7f-8035-5b02a466d719n%40googlegroups.com.

Sven Selberg

Apr 22, 2022, 4:04:18 AM4/22/22

to Repo and Gerrit Discussion

On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:

On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?num #instances #bytes class name (module)

-------------------------------------------------------

1: 80632689 14235174048 [B (java...@11.0.14.1)some of the classnames in this histogram are truncated too much to be recognizable

Sven Selberg

Apr 22, 2022, 4:41:19 AM4/22/22

to Repo and Gerrit Discussion

On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:

On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?

It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).

Edwin Kempin

Apr 22, 2022, 4:44:32 AM4/22/22

to Sven Selberg, Repo and Gerrit Discussion

On Fri, Apr 22, 2022 at 10:41 AM Sven Selberg <sven.s...@axis.com> wrote:

On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).

Weird, I thought those metrics were disabled in 3.5.1:

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/6c8371c6-0c3e-41d9-8946-d3b4c249920an%40googlegroups.com.

Sven Selberg

Apr 22, 2022, 4:49:31 AM4/22/22

to Repo and Gerrit Discussion

On Friday, April 22, 2022 at 10:41:19 AM UTC+2 Sven Selberg wrote:

On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).

For comparison v3.4 has around 700 available metrics

Luca Milanesio

Apr 22, 2022, 4:54:59 AM4/22/22

to Repo and Gerrit Discussion, Luca Milanesio, Sven Selberg

On 22 Apr 2022, at 09:49, Sven Selberg <sven.s...@axis.com> wrote:

On Friday, April 22, 2022 at 10:41:19 AM UTC+2 Sven Selberg wrote:

On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:

On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:

On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:

On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.

<Memory-profile-heap-increase-v3.5.1.png>Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...

maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?

It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).

For comparison v3.4 has around 700 available metrics

Luca.

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/66ff17f9-d773-409c-a38e-a2b48244112cn%40googlegroups.com.

<Memory-profile-heap-increase-v3.5.1.png>

Sven Selberg

Apr 22, 2022, 5:01:16 AM4/22/22

to Repo and Gerrit Discussion

On Friday, April 22, 2022 at 10:44:32 AM UTC+2 Edwin Kempin wrote:

On Fri, Apr 22, 2022 at 10:41 AM Sven Selberg <sven.s...@axis.com> wrote:On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).Weird, I thought those metrics were disabled in 3.5.1:

Yes, I even manually confirmed that the war file we are running has that patch (just to be absolutely positively sure).

Sven Selberg

Apr 22, 2022, 5:16:34 AM4/22/22

to Repo and Gerrit Discussion

On Friday, April 22, 2022 at 10:54:59 AM UTC+2 lucamilanesio wrote:

On 22 Apr 2022, at 09:49, Sven Selberg <sven.s...@axis.com> wrote:On Friday, April 22, 2022 at 10:41:19 AM UTC+2 Sven Selberg wrote:On Friday, April 22, 2022 at 10:04:18 AM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 2:11:42 PM UTC+2 Matthias Sohn wrote:On Thu, Apr 21, 2022 at 12:47 PM Sven Selberg <sven.s...@axis.com> wrote:On Thursday, April 21, 2022 at 12:06:25 PM UTC+2 Sven Selberg wrote:On Thursday, April 21, 2022 at 11:57:19 AM UTC+2 Sven Selberg wrote:Hi,Before upgrade we had a rather steady mean-heap-usage of ~25 GB, after upgrading to v3.5.1 (or once people started working the following monday) the heap-usage is now consistently ~10 GB larger, a 40 % increase.Memory/Heap profile past 30 days for reference, upgrade was performed on April 9:th.<Memory-profile-heap-increase-v3.5.1.png>Is this expected of v3.5.1?I cannot find any reports of similar findings by others so it might be something specific to our setup.If so does anyone have any tips regarding likely culprits?To partially answer my own question with a heap histogram:

Feels odd that 8-9 GB of a 35 GB heap should consist of metrics-related objects...maybe this is related to https://bugs.chromium.org/p/gerrit/issues/detail?id=15531 ?It looks like the performance metrics is the culprit. metrics-reporter-prometheus reports that there are 3178 available metrics (of which 1941 is 0.0).For comparison v3.4 has around 700 available metricsWhat the configuration value for tracing.exportPerformanceMetrics in your case? Can you add some logging?

tracing.exportPerformanceMetrics is default (false).

However, above that setting I found tracing.performanceLogging which is default true.

After setting this to false [1] the "available metrics" was now in the hundreds again (haven't deployed it to production to check heap impact).

Which is odd cause the config documentation clearly states that:

"This setting has no effect if no PerformanceLogger plugins are installed, because then performance logging is always disabled." [2]

However, above that setting I found tracing.performanceLogging which is default true.

After setting this to false [1] the "available metrics" was now in the hundreds again (haven't deployed it to production to check heap impact).

Which is odd cause the config documentation clearly states that:

"This setting has no effect if no PerformanceLogger plugins are installed, because then performance logging is always disabled." [2]

Perhaps this setting should also be default disabled...?

I'll deploy that config setting to production and hope for the best.

[1]

[tracing]

performanceLogging = false

performanceLogging = false

Edwin Kempin

Apr 22, 2022, 5:23:36 AM4/22/22

to Sven Selberg, Repo and Gerrit Discussion

Nice investigation. Yes, I think tracing.performanceLogging should be changed to false by default and its description should be corrected.

It's true that tracing.performanceLogging has no effect if no PerformanceLogger is installed, however with the PerformanceMetrics class Gerrit installs one PerformanceLogger always so that having no PerformanceLogger is never the case.

That would also explain the increase in heap, it's not caused by the additional metrics added by PerformanceLogger, but by implicitly turning on collection of performance events for all requests.

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/3b06d757-27b1-4932-85b9-16c6ec95687dn%40googlegroups.com.

Sven Selberg

Apr 22, 2022, 5:34:12 AM4/22/22

to Repo and Gerrit Discussion

I owe a big thanks to Luca for his invaluable assistance when trying to triangulate the issue.

Without his pointers it would have taken a lot longer to figure out what was going on.

Without his pointers it would have taken a lot longer to figure out what was going on.

Sven Selberg

Apr 22, 2022, 10:18:18 AM4/22/22

to Repo and Gerrit Discussion

Oh, snap!

The performance metrics weren't there because there was nothing yet to measure performance of. Once somethings started happening on the staging server the performance-metrics turned up again in available metrics.

Furthermore things looked good in production after changing the setting but heap continuously rising and metrics objects took more and more space on the heap.

The performance metrics weren't there because there was nothing yet to measure performance of. Once somethings started happening on the staging server the performance-metrics turned up again in available metrics.

Furthermore things looked good in production after changing the setting but heap continuously rising and metrics objects took more and more space on the heap.

Too soon to tell though.

Sven Selberg

Apr 26, 2022, 5:46:16 AM4/26/22

to Repo and Gerrit Discussion

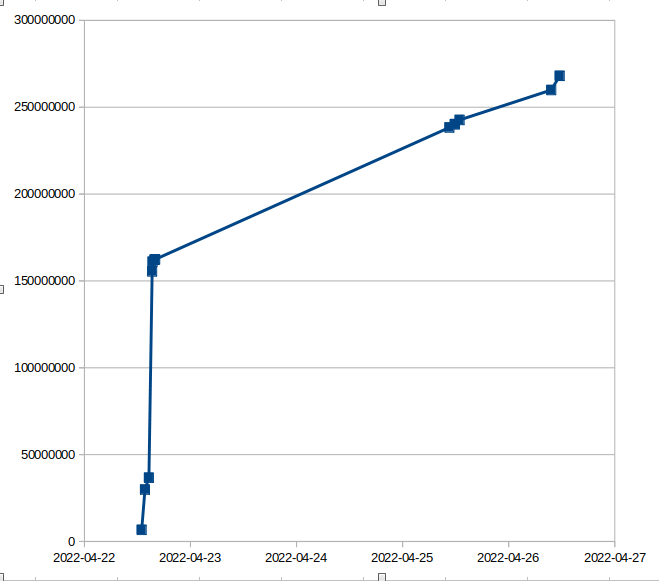

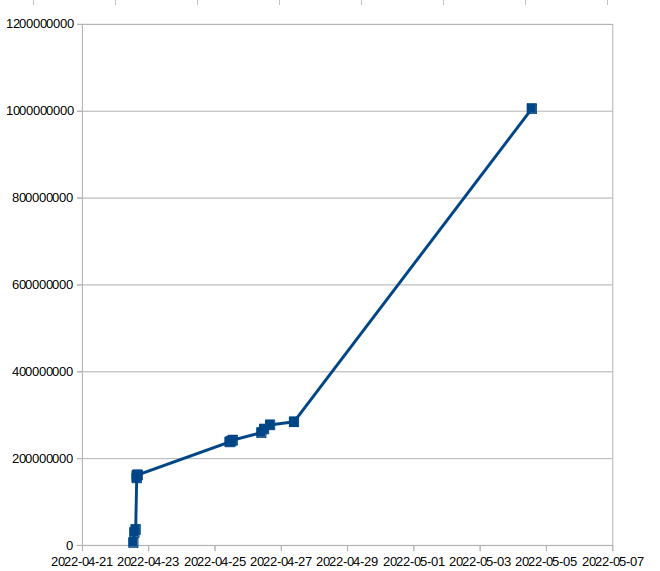

After watching the heap for a couple of days:

Heap usage in general is much more reasonable after disabling performanceLogging.

However it seems like the metric objects are accumulating on the heap which is worrying.

There might be an underlying issue that was exacerbated by the performance metrics.

Don't know if this is related to core Gerrit or a side-effect of the metrics-reporter-graphite plugin that we are sporting.

Heap usage in general is much more reasonable after disabling performanceLogging.

However it seems like the metric objects are accumulating on the heap which is worrying.

There might be an underlying issue that was exacerbated by the performance metrics.

Don't know if this is related to core Gerrit or a side-effect of the metrics-reporter-graphite plugin that we are sporting.

Here's a graph over heap-space consumed by the most impactful object com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl:

Sven Selberg

May 4, 2022, 7:38:35 AM5/4/22

to Repo and Gerrit Discussion

After about two weeks the situation is increasingly bleak (bytes of heap taken up by com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl objects):

num #instances #bytes class name (module)

-------------------------------------------------------

-------------------------------------------------------

1: 48400478 15185391744 [B (java...@11.0.14.1)

2: 2828233 2225968248 [I (java...@11.0.14.1)

3: 10496006 1636066768 [Ljava.util.concurrent.ConcurrentHashMap$Node; (java...@11.0.14.1)

4: 10677080 1281249600 org.eclipse.jgit.internal.storage.file.LocalObjectToPack

5: 39299819 1257594208 java.lang.String (java...@11.0.14.1)

6: 10536334 1095778736 java.util.concurrent.ConcurrentHashMap (java...@11.0.14.1)

7: 20960578 1006107744 com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl

8: 10480119 754568568 com.google.gerrit.metrics.dropwizard.TimerImpl1

9: 13051682 626480736 java.util.HashMap$Node (java...@11.0.14.1)

10: 12864906 617515488 java.util.concurrent.ConcurrentHashMap$Node (java...@11.0.14.1)

11: 10480235 586893160 com.google.gerrit.metrics.AutoValue_Field

12: 1248307 526746576 [J (java...@11.0.14.1)

13: 10480118 503045664 com.google.gerrit.metrics.dropwizard.TimerImpl1$1

14: 10480214 335367760 [Lcom.google.gerrit.metrics.Field;

15: 19938 327142704 [Lorg.eclipse.jgit.lib.ObjectIdOwnerMap$Entry;

16: 20 319291872 [Lorg.h2.util.CacheObject;

17: 12995665 311895960 java.util.Optional (java...@11.0.14.1)

18: 705205 260201496 [Ljava.lang.Object; (java...@11.0.14.1)

19: 2655829 233712952 org.eclipse.jgit.revwalk.RevCommit

20: 54564 208499800 [Ljava.util.HashMap$Node; (java...@11.0.14.1)

2: 2828233 2225968248 [I (java...@11.0.14.1)

3: 10496006 1636066768 [Ljava.util.concurrent.ConcurrentHashMap$Node; (java...@11.0.14.1)

4: 10677080 1281249600 org.eclipse.jgit.internal.storage.file.LocalObjectToPack

5: 39299819 1257594208 java.lang.String (java...@11.0.14.1)

6: 10536334 1095778736 java.util.concurrent.ConcurrentHashMap (java...@11.0.14.1)

7: 20960578 1006107744 com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl

8: 10480119 754568568 com.google.gerrit.metrics.dropwizard.TimerImpl1

9: 13051682 626480736 java.util.HashMap$Node (java...@11.0.14.1)

10: 12864906 617515488 java.util.concurrent.ConcurrentHashMap$Node (java...@11.0.14.1)

11: 10480235 586893160 com.google.gerrit.metrics.AutoValue_Field

12: 1248307 526746576 [J (java...@11.0.14.1)

13: 10480118 503045664 com.google.gerrit.metrics.dropwizard.TimerImpl1$1

14: 10480214 335367760 [Lcom.google.gerrit.metrics.Field;

15: 19938 327142704 [Lorg.eclipse.jgit.lib.ObjectIdOwnerMap$Entry;

16: 20 319291872 [Lorg.h2.util.CacheObject;

17: 12995665 311895960 java.util.Optional (java...@11.0.14.1)

18: 705205 260201496 [Ljava.lang.Object; (java...@11.0.14.1)

19: 2655829 233712952 org.eclipse.jgit.revwalk.RevCommit

20: 54564 208499800 [Ljava.util.HashMap$Node; (java...@11.0.14.1)

Patrick Hiesel

May 4, 2022, 8:11:11 AM5/4/22

to Sven Selberg, Repo and Gerrit Discussion

Interesting.

DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.

I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?

If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.

Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.

Thanks

Patrick

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/0d371377-385d-48dd-9eee-f062e13153aan%40googlegroups.com.

Sven Selberg

May 4, 2022, 8:32:08 AM5/4/22

to Repo and Gerrit Discussion

On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:

Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?

Thanks for the analysis Patrick.

If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.

Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.

What API are you referring to?

Patrick Hiesel

May 12, 2022, 8:19:47 AM5/12/22

to Sven Selberg, Repo and Gerrit Discussion

On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:

On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?

Aparently there must be an undocumented API to look at live metrics

Maybe GET /config/server/metrics/

The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/712dbb93-18e6-42c0-a6a8-e0cae4220070n%40googlegroups.com.

Sven Selberg

May 12, 2022, 10:25:39 AM5/12/22

to Repo and Gerrit Discussion

On Thursday, May 12, 2022 at 2:19:47 PM UTC+2 hie...@google.com wrote:

On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?Aparently there must be an undocumented API to look at live metricsMaybe GET /config/server/metrics/The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)

/config/server/metrics does exist.

A lot of metrics but nothing that suggests dynamic metric names (beyond metrics created for individual projects).

A lot of metrics but nothing that suggests dynamic metric names (beyond metrics created for individual projects).

The number of TimerImpl object have leveled out

Sven Selberg

May 12, 2022, 10:55:15 AM5/12/22

to Repo and Gerrit Discussion

On Thursday, May 12, 2022 at 4:25:39 PM UTC+2 Sven Selberg wrote:

On Thursday, May 12, 2022 at 2:19:47 PM UTC+2 hie...@google.com wrote:On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?Aparently there must be an undocumented API to look at live metricsMaybe GET /config/server/metrics/The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)/config/server/metrics does exist.

A lot of metrics but nothing that suggests dynamic metric names (beyond metrics created for individual projects).

@Luca How did you count the metrics to get 420?

Sven Selberg

May 13, 2022, 4:56:16 AM5/13/22

to Repo and Gerrit Discussion

On Thursday, May 12, 2022 at 4:55:15 PM UTC+2 Sven Selberg wrote:

On Thursday, May 12, 2022 at 4:25:39 PM UTC+2 Sven Selberg wrote:On Thursday, May 12, 2022 at 2:19:47 PM UTC+2 hie...@google.com wrote:On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?Aparently there must be an undocumented API to look at live metricsMaybe GET /config/server/metrics/The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)/config/server/metrics does exist.

A lot of metrics but nothing that suggests dynamic metric names (beyond metrics created for individual projects).@Luca How did you count the metrics to get 420?

(@Luca, your mails seem to get stuck and aren't promoted to the mailing list)

> Luca wrote: "jq '. | keys’ gives you the list of metric names. We have 420 published on GerritHub.io."

By that count we have 299 metrics ( from "jq '.|length'").

Luca Milanesio

May 13, 2022, 11:16:13 AM5/13/22

to Repo and Gerrit Discussion, Luca Milanesio, Sven Selberg, Patrick Hiesel

On 12 May 2022, at 13:19, 'Patrick Hiesel' via Repo and Gerrit Discussion <repo-d...@googlegroups.com> wrote:On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?Aparently there must be an undocumented API to look at live metricsMaybe GET /config/server/metrics/The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/CAM7sg%3D3Mirm%3D7b5edC0oa_KN77ZqStoyr%2BH53vqjxEVQzkr2fA%40mail.gmail.com.

<TimerImpl-on-heap.png><timerimpl-on-heap.png>

Matthias Sohn

May 13, 2022, 11:16:13 AM5/13/22

to Patrick Hiesel, Sven Selberg, Repo and Gerrit Discussion

On Thu, May 12, 2022 at 2:19 PM 'Patrick Hiesel' via Repo and Gerrit Discussion <repo-d...@googlegroups.com> wrote:

On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?Thanks for the analysis Patrick.If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.What API are you referring to?Aparently there must be an undocumented API to look at live metricsMaybe GET /config/server/metrics/The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)

AFAIR you need to install one of the metrics exporter plugins to expose metrics

https://gerrit-review.googlesource.com/admin/repos/q/filter:metrics

https://gerrit-review.googlesource.com/admin/repos/q/filter:metrics

To view this discussion on the web visit https://groups.google.com/d/msgid/repo-discuss/CAM7sg%3D3Mirm%3D7b5edC0oa_KN77ZqStoyr%2BH53vqjxEVQzkr2fA%40mail.gmail.com.

Luca Milanesio

May 13, 2022, 11:16:13 AM5/13/22

to Repo and Gerrit Discussion, Luca Milanesio, Sven Selberg

> On 12 May 2022, at 15:55, Sven Selberg <sven.s...@axis.com> wrote:

>

>

>

> On Thursday, May 12, 2022 at 4:25:39 PM UTC+2 Sven Selberg wrote:

> On Thursday, May 12, 2022 at 2:19:47 PM UTC+2 hie...@google.com wrote:

> On Wed, May 4, 2022 at 2:32 PM Sven Selberg <sven.s...@axis.com> wrote:

>

>

> On Wednesday, May 4, 2022 at 2:11:11 PM UTC+2 hie...@google.com wrote:

> Interesting.

>

> Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".

>

> DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.

>

> I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?

>

> Thanks for the analysis Patrick.

>

>

> If you can use Eclipse MAT, I'd be interested in the keys of the hashmaps. I bet with Eclipse MAT you would find the problem quickly when looking at the object graph.

>

> Also, can you use the API to list currently in use metrics (/config/metrics)? That would return what is in these buckets.

>

> What API are you referring to?

>

> Aparently there must be an undocumented API to look at live metrics

>

> Maybe GET /config/server/metrics/

>

> The code tells me there is a REST API by dropwizard metrics (see MetricCollection, ListMetrics etc)

>

> /config/server/metrics does exist.

> A lot of metrics but nothing that suggests dynamic metric names (beyond metrics created for individual projects).

>

> @Luca How did you count the metrics to get 420?

jq '. | keys’ gives you the list of metric names. We have 420 published on GerritHub.io.

Luca.

> After about two weeks the situation is increasingly bleak (bytes of heap taken up by com.google.gerrit.metrics.dropwizard.DropWizardMetricMaker$TimerImpl objects):

>

>

>

>

> The number of TimerImpl object have leveled out

>

>

> <TimerImpl-on-heap.png><TimerImpl-on-heap.png><timerimpl-on-heap.png>

Nasser Grainawi

Jul 1, 2025, 11:10:12 AM7/1/25

to Repo and Gerrit Discussion

On Wednesday, May 4, 2022 at 6:11:11 AM UTC-6 Patrick Hiesel wrote:

Interesting.Your heap dumps says you have many ConcurrentHashMap notes, but also ConcurrentHashMaps themselves. You also have Timer1 impls. This looks like it lines up to "too many metrics get registered with different names".DropWizardMetricMaker contains two ConcurrentHashMaps (bucketed, descriptions). They map from name => timerimpl. TimerImpl has hashmaps of it's own internally.I checked Gerrit core to see if we set metrics names dynamically but we don't. So these maps should never grow out of bounds. The PluginMetricMaker allows plugins to register more metrics with a plugin prefix. Could you check if any of these set metric names dynamically (not just always the same string hardcoded)?

I know this thread is from a few years ago, but for anyone searching the list about metrics it might be helpful to know there were a few classes in core creating metrics dynamically when they were intended to be static. They were fixed on the stable-3.5 branch, but after 3.5 was EOL, so the first official releases with the fixes were v3.6.8, v3.7.6, v3.8.3, and v3.9+.

386976: QueryProcessor: Create Singleton metrics instances for subclasses | https://gerrit-review.googlesource.com/c/gerrit/+/386976

387214: DefaultRefFilter: Do not recreate metrics for each instance | https://gerrit-review.googlesource.com/c/gerrit/+/387214

387216: SubmitRuleEvaluator: Do not recreate metrics for each instance | https://gerrit-review.googlesource.com/c/gerrit/+/387216

Reply all

Reply to author

Forward

0 new messages