Causality and Machine Intelligence: Real/Causal AI as an Ontology Engineering Machine

Azamat Abdoullaev

Judea Pearl, Turing Award winner and AI pioneer

“Causality is very important for the next steps of progress of machine learning.”

Yoshua Bengio, Turing Award winner and “Godfather of Deep Learning”

“Causal AI is a key enabler of the next wave of AI, where AI moves toward greater decision automation, autonomy, robustness and common sense.”

Gartner, Analyst Firm

John F Sowa

Sent: Thursday, October 6, 2022 4:48 PM

To: "ontolog-forum" <ontolo...@googlegroups.com>, ontolog...@googlegroups.com

Subject: [Ontology Summit] Causality and Machine Intelligence: Real/Causal AI as an Ontology Engineering Machine

Neil McNaughton

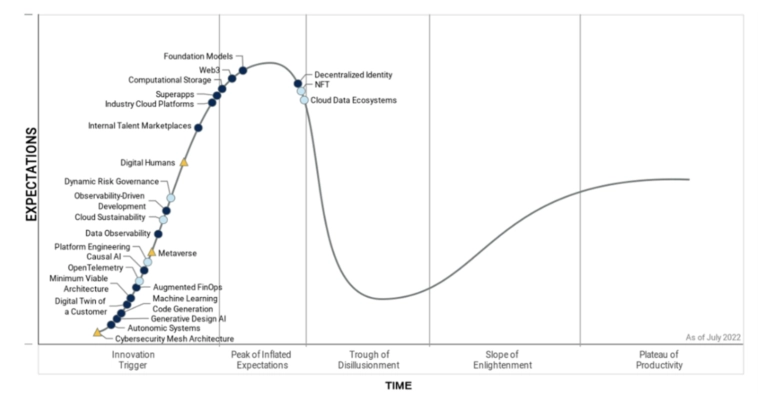

>> “Gartner’s analysis is an objective assessment”

Really? Who says? And the “hype curve”? A fantasy. A (poor) editorial/puffery in a graph!

Best regards,

Neil McNaughton

Editor Oil IT Journal – www.oilit.com

Recent readers’ testimonials

The Data Room SAS

7 Rue des Verrieres

92310 Sevres, France

Landline+33146239596

Cell+33672712642

--

All contributions to this forum are covered by an open-source license.

For information about the wiki, the license, and how to subscribe or

unsubscribe to the forum, see http://ontologforum.org/info/

---

You received this message because you are subscribed to the Google Groups "ontolog-forum" group.

To unsubscribe from this group and stop receiving emails from it, send an email to

ontolog-foru...@googlegroups.com.

To view this discussion on the web visit

https://groups.google.com/d/msgid/ontolog-forum/CAKK1bf867-_5v3_g6TF11F6Zob3ej8hq__vGKCBTcQqBfdkqVA%40mail.gmail.com.

Azamat Abdoullaev


John F Sowa

Azamat Abdoullaev

Whoever first builds Real/Causal/Natural AI rules the world

Right. Computer scientists have mostly focused on anthropocentric AI algorithms that imitate human intelligence with machines, trying to create artificial human intelligence, or human AI, which amounts to a fake or bogus AI, be it symbolic or statistical.

In fact, the scope and scale of a true/genuine AI is the whole world with all its content, not just human minds. What I have dubbed Real/Causal/Natural AI embraces reality-inspired algorithms for problem-solving, search and optimization, as well as perception, learning, reasoning, understanding, action and interaction.

So, it covers so-called nature-inspired optimization algorithms (NIOAs).

Many algorithms mimic natural phenomena such as how animals organize their lives, how they use instincts to survive, how generations evolve, how the human brain works, and how we as humans learn.

NIOAs are defined as a group of algorithms that are inspired by natural phenomena, including swarm intelligence, biological systems, physical and chemical systems, etc. NIOAs include bio-inspired algorithms and physics- and chemistry-based algorithms; the bio-inspired algorithms further include swarm intelligence-based and evolutionary algorithms.

NIOAs are an important branch of artificial intelligence (AI), and NIOAs have made significant progress in the last 30 years. Thus far, a large number of common NIOAs and their variants have been proposed, such as genetic algorithm (GA), particle swarm optimization (PSO) algorithm, differential evolution (DE) algorithm, artificial bee colony (ABC) algorithm, ant colony optimization (ACO) algorithm, cuckoo search (CS) algorithm, bat algorithm (BA), firefly algorithm (FA), immune algorithm (IA), grey wolf optimization (GWO), gravitational search algorithm (GSA), and harmony search (HS) algorithm. https://encyclopedia.pub/entry/12212

NIOAs are less well known than artificial neural network (ANN) algorithms, which are machine learning algorithms that mimic the way human neurons communicate with one another, or reinforcement learning (RL) algorithms, which learn by trial and error with penalties and rewards.
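To make the NIOA family a little more concrete, here is a minimal sketch of one member named above, particle swarm optimization (PSO). It is only an illustration under stated assumptions: the objective `sphere`, the function name `pso`, and all parameter values (inertia, attraction weights, bounds, seed) are hypothetical choices, not taken from the encyclopedia entry or from any poster's implementation.

```python
import random

def sphere(x):
    """Toy objective (assumed for illustration): sum of squares, minimal at the origin."""
    return sum(v * v for v in x)

def pso(objective, dim=2, n_particles=20, iters=200, seed=0,
        inertia=0.7, c_personal=1.5, c_social=1.5, bound=5.0):
    """Basic global-best PSO; returns (best_position, best_value)."""
    rng = random.Random(seed)
    # Random starting positions, zero starting velocities.
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    # Each particle remembers its own best point; the swarm shares one global best.
    best_pos = [p[:] for p in pos]
    best_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity mixes momentum, pull to own best, pull to swarm best.
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * r1 * (best_pos[i][d] - pos[i][d])
                             + c_social * r2 * (g_pos[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < g_val:
                    g_val, g_pos = val, pos[i][:]
    return g_pos, g_val

if __name__ == "__main__":
    position, value = pso(sphere)
    print("best position:", position, "best value:", value)
```

The "swarm intelligence" here is nothing more than each candidate solution being pulled toward its own best point and the group's best point; other NIOAs in the list swap in a different natural metaphor for the update rule.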

My message is rather simple: No real causality, no real intelligence.

Or, comprehensive and consistent causal models of the world that include global ontology, science (universal knowledge), with computer science and engineering, are necessary requirements for true intelligence, human or machine.

Such an intelligence is able to efficiently identify the critical information/insights/knowledge/wisdom/learning/understanding in any dataset, discarding all the irrelevant and misleading correlations, biased data, etc.
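As a rough illustration of what a "misleading correlation" looks like, here is a toy simulation. It is a sketch under assumptions, not anything from the posts: the variables Z (a hidden common cause), X and Y, the coefficients, and the function names `pearson` and `simulate` are all hypothetical. X never influences Y, yet the two correlate strongly in observational data; the correlation vanishes once X is set by intervention, independently of Z.

```python
import random

def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def simulate(n=20000, intervene=False, seed=0):
    """Return corr(X, Y) in a world where Z causes both X and Y."""
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n):
        z = rng.gauss(0, 1)              # hidden common cause
        if intervene:
            x = rng.gauss(0, 1)          # X set from outside, cut off from Z
        else:
            x = z + rng.gauss(0, 1)      # observational: X is driven by Z
        y = 2 * z + rng.gauss(0, 1)      # Y depends on Z only, never on X
        xs.append(x)
        ys.append(y)
    return pearson(xs, ys)

if __name__ == "__main__":
    print("observational corr(X, Y):", round(simulate(intervene=False), 3))
    print("interventional corr(X, Y):", round(simulate(intervene=True), 3))
```

A purely statistical learner trained on the observational data would treat X as a good predictor of Y; only a model that represents Z as the common cause can tell that acting on X changes nothing.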


John F Sowa

Azamat Abdoullaev


John Bottoms

Azamat, Who's Turing Test?

The key issue with a Turing Test is that it is First-Order-Logic (FOL) based. That means that it comes with no metrics, is unlikely to be adaptively context sensitive, and does not lead us to extensible, collaborative AI.

We need to look at natural selection for some guidelines. Nature prefers diversity. If, when I look 50 or 100 years into the future, I see a static horde of robots who all think in lock-step, then I will know we have failed.

The Brute Fact is that all intelligence is subjective and local. We don't have enough grains of sand or sheets of papyrus to create an all-knowing, all-seeing AI. AI systems must be domain based, and that is why Domain Based Systems are, at least for the immediate future, the favored architecture for systems.

Even with Higher Order Logic (HOL) we will need probabilistic metrics, and the entailed question is "Whose metrics?", along with how we will calculate a metric's confidence factor, and for which context. Also, a particular HOL is not innately extensible. There is also the issue, true for humans as well as AI, that learning sequences determine the intelligence result. So, we need an AI that is extensible and collaborative, with metrics that account for collaboration. One of the prime elements lacking here is that we do not teach thinking in our schools. We, instead, teach how previous solutions are valuable economic solutions, omitting the reasoning and the failed use cases.

-John Bottoms, FirstStar Systems


Azamat Abdoullaev

JB: Azamat, Who's Turing Test?

It is rather What's Turing Test?

JB: The key issue with a Turing Test is that it is First-Order-Logic (FOL) based.

That is not the standard interpretation of the TT, which is a man-machine-man language test (a blind conversation) of a machine's ability to exhibit intelligent behavior indistinguishable from that of a human.

A similar idea was proposed by D. Diderot: "If they find a parrot who could answer to everything, I would claim it to be an intelligent being without hesitation".

JB: The Brute Fact is that all intelligence is subjective and local.

Nope. Human intelligence is just a sample of real/natural/causal intelligence. Its subjectivity and locality are the attributes of an individual, which are much reduced in the collective intelligence, and minimal in objective scientific intelligence.

Where we agree is that the TT/Imitation Game is not a scientific, objective criterion or performance metric of intelligence. Otherwise, we would need an endless range of such tests:

Perception TT

Speech TT

Language TT

Learning TT

Planning TT

Creativity TT

Reasoning TT

Action TT, etc.
