Phantoms + QA + commercial solutions

Raamana, Pradeep Reddy

Hi Everyone,

FYI, the talks from the two commercial phantom service providers, Gold Standard Phantoms and Phantom Lab, will take place on Aug 24th from 11am to about 12:30pm EDT (the latest schedule is here, with a few other exciting talks coming up soon).

One question I’d like to raise in the Q&A / debate session following the talks is: are we ready to develop or set a standard or two for routine QA of well-understood sequences and use cases? I feel we may have at least one candidate (fBIRN QA) that could be refreshed, modernized, and expanded to the level of a full multi-site dataset (such as ABCD). Let’s discuss this and perhaps consider putting it forth to the OHBM BPC. I’d love to hear your input on any other potential candidates.

Thanks,

Pradeep

Raamana, Pradeep Reddy

Have you guys seen this paper on dynamic phantoms? I’d love to hear this community’s thoughts on it, as it appears to be a good step forward.

Kumar, R., Tan, L., Kriegstein, A., Lithen, A., Polimeni, J. R., Mujica-Parodi, L. R., & Strey, H. H. (2021). Ground-truth “resting-state” signal provides data-driven estimation and correction for scanner distortion of fMRI time-series dynamics. NeuroImage, 227, 117584. https://doi.org/10.1016/j.neuroimage.2020.117584

Abstract:

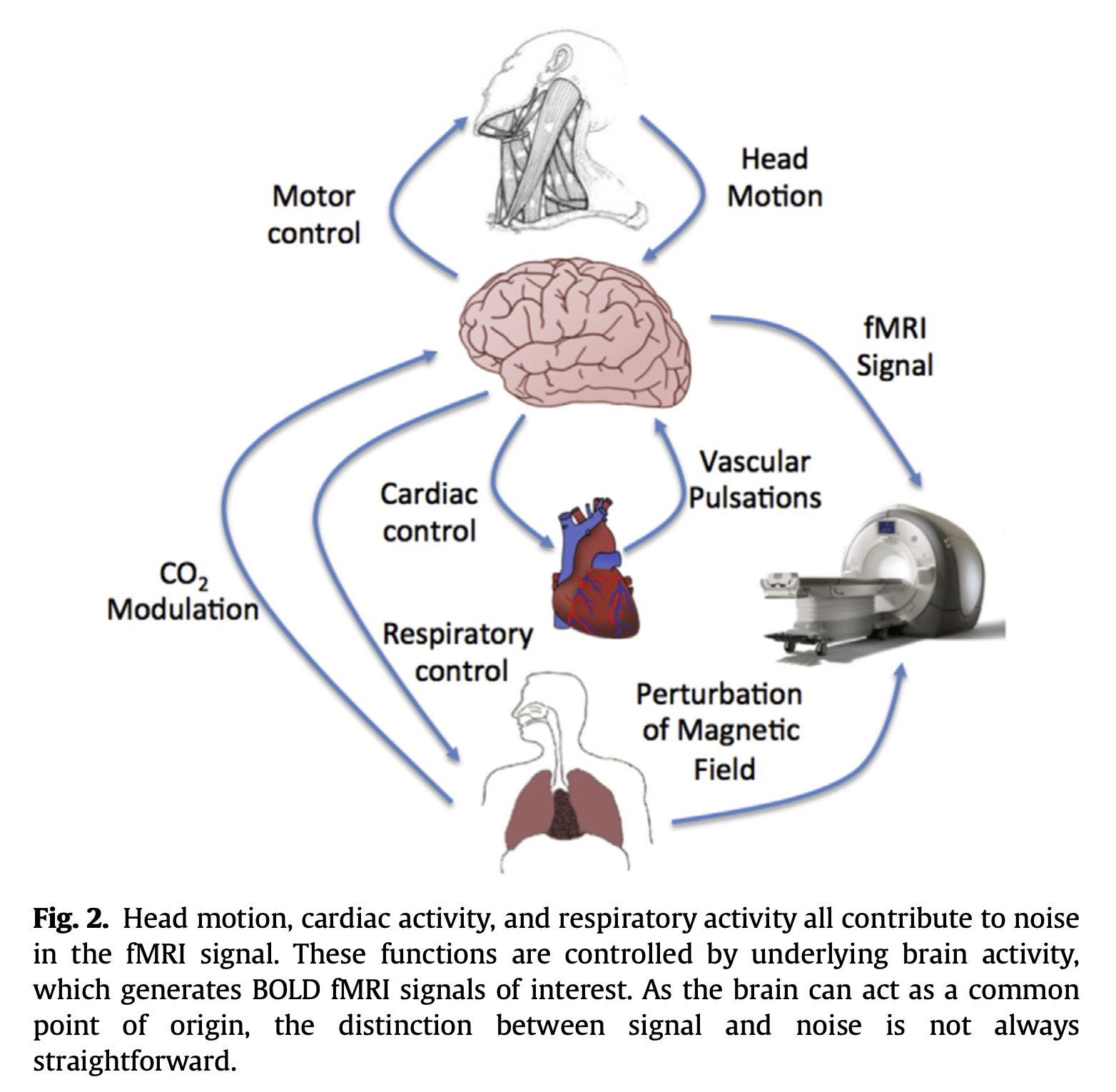

“The fMRI community has made great strides in decoupling neuronal activity from other physiologically induced T2∗ changes, using sensors that provide a ground-truth with respect to cardiac, respiratory, and head movement dynamics. However, blood oxygenation level-dependent (BOLD) time-series dynamics are also confounded by scanner artifacts, in complex ways that can vary not only between scanners but even, for the same scanner, between sessions. Unfortunately, the lack of an equivalent ground truth for BOLD time-series has thus far stymied the development of reliable methods for identification and removal of scanner-induced noise, a problem that we have previously shown to severely impact detection sensitivity of resting-state brain networks. To address this problem, we first designed and built a phantom capable of providing dynamic signals equivalent to that of the resting-state brain. Using the dynamic phantom, we then compared the ground-truth time-series with its measured fMRI data. Using these, we introduce data-quality metrics: Standardized Signal-to-Noise Ratio (ST-SNR) and Dynamic Fidelity that, unlike currently used measures such as temporal SNR (tSNR), can be directly compared across scanners. Dynamic phantom data acquired from four “best-case” scenarios: high-performance scanners with MR-physicist-optimized acquisition protocols, still showed scanner instability/multiplicative noise contributions of about 6–18% of the total noise. We further measured strong non-linearity in the fMRI response for all scanners, ranging between 8–19% of total voxels. To correct scanner distortion of fMRI time-series dynamics at a single-subject level, we trained a convolutional neural network (CNN) on paired sets of measured vs. ground-truth data. The CNN learned the unique features of each session’s noise, providing a customized temporal filter. Tests on dynamic phantom time-series showed a 4- to 7-fold increase in ST-SNR and about 40–70% increase in Dynamic Fidelity after denoising, with CNN denoising outperforming both the temporal bandpass filtering and denoising using Marchenko-Pastur principal component analysis. Critically, we observed that the CNN temporal denoising pushes ST-SNR to a regime where signal power is higher than that of noise (ST-SNR > 1). Denoising human-data with ground-truth-trained CNN, in turn, showed markedly increased detection sensitivity of resting-state networks. These were visible even at the level of the single-subject, as required for clinical applications of fMRI.”

Aina Puce

--

You received this message because you are subscribed to the Google Groups "niQC" group.

To unsubscribe from this group and stop receiving emails from it, send an email to niqc+uns...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/niqc/DM6PR04MB67467D2496FE3638ACCBD510B8F79%40DM6PR04MB6746.namprd04.prod.outlook.com.

Indiana University, Bloomington IN 47405 USA

Raamana, Pradeep Reddy

Indeed it is. An important point from this paper (and another one below) that made me think is that there is a lack of standards even for something as simple as SNR, i.e., it appears that SNR values based on static phantoms (the basis for QA) are not, strictly speaking, comparable across sites, because of scanner-induced noise and drift (even within the same session), etc. I’d love to hear what you all think about it.

It is not clear to me whether the fBIRN community appreciated this sufficiently when making their QA recommendations back in 2012 (I will read them again to see whether they touched upon it).

Welvaert M, Rosseel Y (2013) On the Definition of Signal-To-Noise Ratio and Contrast-To-Noise Ratio for fMRI Data. PLOS ONE 8(11): e77089. https://doi.org/10.1371/journal.pone.0077089

cc-ing Dr. Lilianne Mujica-Parodi as one of the authors on the dynamic phantoms paper.

Todd Constable

Thomas Nichols

LR Mujica-Parodi

Open MINDS Lab at Pitt, PI Pradeep Raamana

Thanks Tom - can you point us to any specific papers/preprints if there are any on this?

Thomas Nichols

Bowring, A., Nichols, T. E., & Maumet, C. (2021). Isolating the Sources of Pipeline-Variability in Group-Level Task-fMRI results. bioRxiv. Retrieved from https://www.biorxiv.org/content/10.1101/2021.07.27.453994v1

Todd Constable

On Aug 18, 2021, at 9:43 AM, Thomas Nichols <thomas....@bdi.ox.ac.uk> wrote:

Stephen Strother

Signal image: the mean intensity across time, computed voxel by voxel.

Temporal fluctuation noise image: first, each voxel's time series is detrended with a 2nd-order polynomial. The fluctuation noise image is the standard deviation (SD) of the residuals, voxel by voxel.

msi: mean signal intensity of the raw signal

std: SD of the residuals after detrending

percentFluc = 100 * std / msi

drift = 100 * (max raw signal - min raw signal) / msi

driftfit = 100 * (max fit - min fit) / msi
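These ROI-level definitions translate almost line for line into code. A minimal pure-Python sketch, assuming the input is a single 1-D time series (for example an ROI-averaged phantom signal) and that "SD" means the sample SD (n - 1); both are assumptions, and `fbirn_summary` / `quadratic_fit` are hypothetical names, not the fBIRN QA code itself. Applying the same detrend-then-SD step to every voxel would give the temporal fluctuation noise image described above.

```python
from statistics import mean, stdev


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def quadratic_fit(y):
    """Least-squares fit of y ~ c0 + c1*t + c2*t**2 (needs >= 3 samples); returns fitted values."""
    n = len(y)
    t = [i - (n - 1) / 2 for i in range(n)]  # centred time index improves conditioning
    s = [sum(ti ** k for ti in t) for k in range(5)]  # sums of powers of t
    r = [sum(yi * ti ** k for ti, yi in zip(t, y)) for k in range(3)]
    A = [[s[0], s[1], s[2]], [s[1], s[2], s[3]], [s[2], s[3], s[4]]]
    d = _det3(A)
    coef = []
    for j in range(3):  # Cramer's rule: replace column j with the right-hand side
        Aj = [row[:j] + [r[i]] + row[j + 1:] for i, row in enumerate(A)]
        coef.append(_det3(Aj) / d)
    return [coef[0] + coef[1] * ti + coef[2] * ti ** 2 for ti in t]


def fbirn_summary(y):
    """Stability metrics as listed in the email, expressed as percentages of msi."""
    msi = mean(y)                                     # mean signal intensity of the raw signal
    fit = quadratic_fit(y)                            # 2nd-order polynomial trend
    sd = stdev([yi - fi for yi, fi in zip(y, fit)])   # SD of residuals after detrending
    return {
        "msi": msi,
        "std": sd,
        "percentFluc": 100 * sd / msi,
        "drift": 100 * (max(y) - min(y)) / msi,
        "driftfit": 100 * (max(fit) - min(fit)) / msi,
    }


if __name__ == "__main__":
    # Hypothetical ROI series: baseline 1000, slow quadratic drift, small alternating noise
    y = [1000 + 0.02 * i + 0.0005 * i ** 2 + (0.5 if i % 2 else -0.5) for i in range(200)]
    for name, value in fbirn_summary(y).items():
        print(f"{name}: {value:.4f}")
```

Note that drift uses the raw extremes, so a single noisy spike can dominate it, whereas driftfit uses the smooth fitted trend and reflects only the slow component.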

--
To reduce e-mail overload follow the e-mail charter of Chris Anderson: http://emailcharter.org/.
Stephen Strother, PhD
Senior Scientist, Rotman Research Institute, Baycrest
Professor of Medical Biophysics, University of Toronto
E-mail: sstr...@research.baycrest.org
Tel. Office: 416-785-2500 x2956
Fax: 426-785-2862
