instance file

yanfei qian

Jul 10, 2021, 5:19:50 AM

to The irace package: Iterated Racing for Automatic Configuration

Dear Manuel,

I have read the ACOTSP example code carefully, as well as some earlier discussions about benchmark problems, but I still have some questions about it.

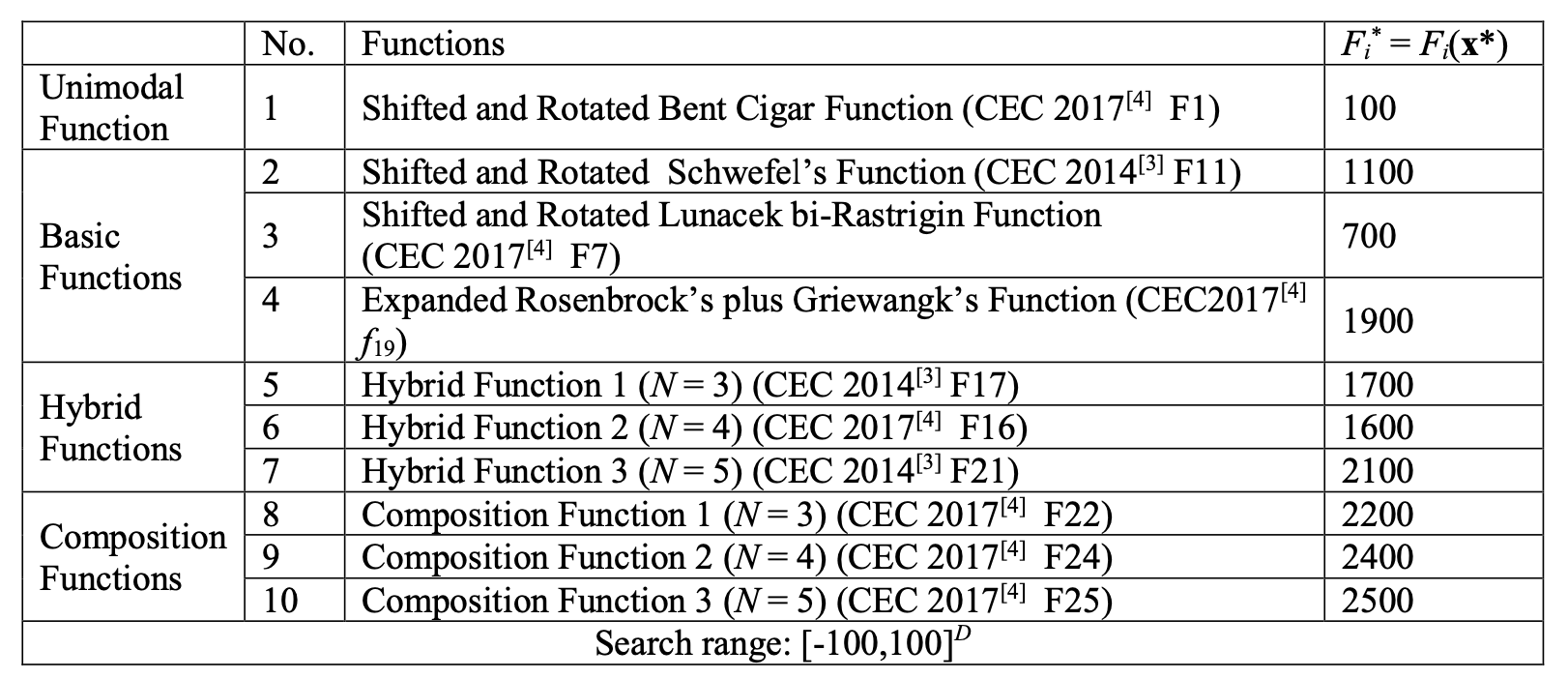

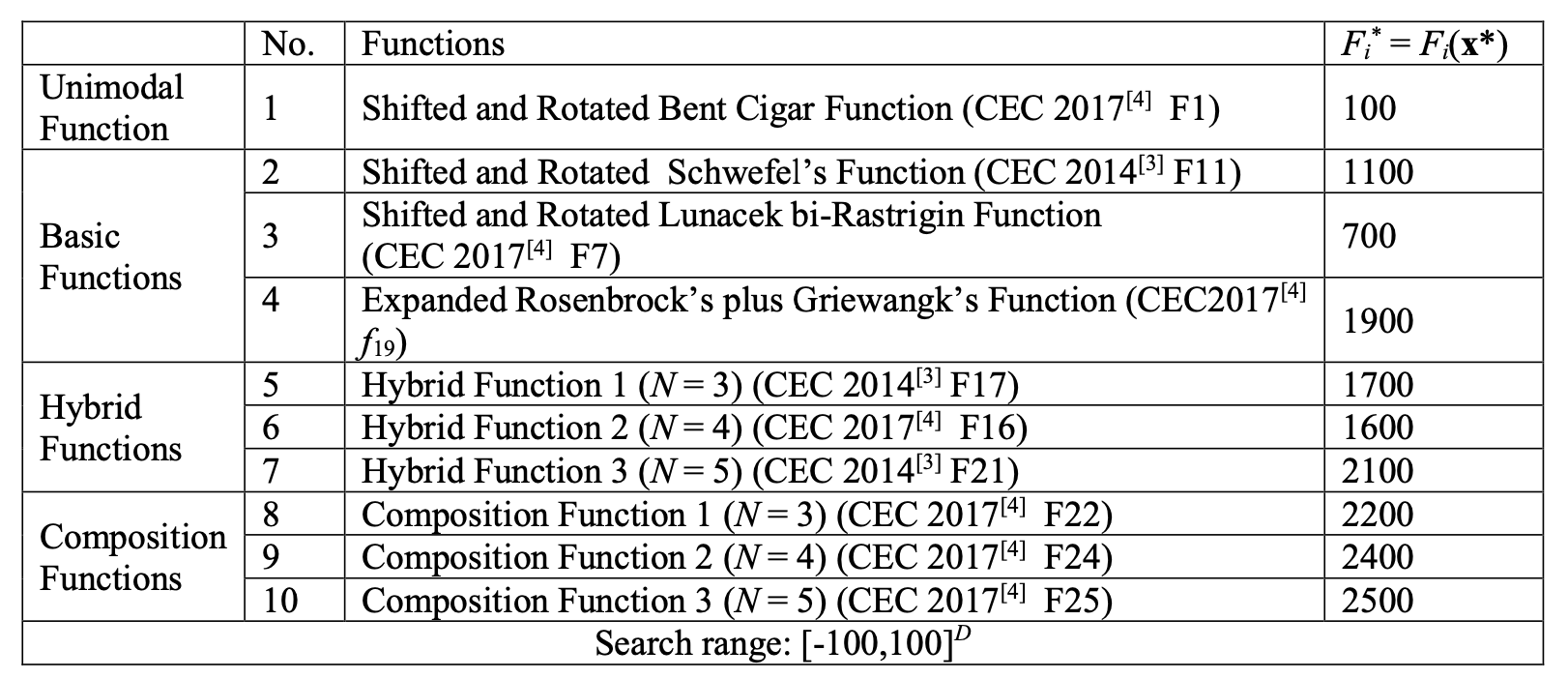

I want to use the differential evolution (DE) algorithm and its variants to solve benchmark problems. The algorithm has parameters such as pc, pm and so on. There are 10 benchmark functions to test.

My question is about training instances and test instances. Are these just like a training dataset and a test dataset? I want to use all 10 functions during the training phase to generate a set of parameters, and then show the performance of the algorithm with this set of parameters in the test phase. Does this mean that the training instances and the test instances are the same in my project?

Best wishes,

Qian Yanfei

Manuel López-Ibáñez

Jul 10, 2021, 6:40:29 AM

to The irace package: Iterated Racing for Automatic Configuration

Dear Qian Yanfei,

That depends on what you want to show. If you want to show that the parameter values found work well only on those benchmark functions, then yes, use the same ones. But I would argue that, in that case, why not tune for each specific function, since you are fixing the functions and you do not expect the parameter values to work well on functions different from those?

If you want to find good parameter values for continuous optimization functions in general, then you need to show that the parameter values found during tuning also work well for functions that you did not use in the tuning.

If your assumption is that what works well for the benchmark should work well for other continuous optimization functions, then testing on other functions should show that parameter values tuned for the benchmark set also give good results on a different test set. Otherwise, your assumption is wrong.
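If you go for the second option, the split itself can be as simple as holding out a few functions. Here is a minimal sketch in Python, not anything irace provides: the function names `f1` to `f10`, the file names, and the helpers `split_instances` and `instance_file_text` are all hypothetical placeholders for your own setup.

```python
import random


def split_instances(instances, n_test, seed=0):
    """Return disjoint (train, test) lists using a reproducible shuffle."""
    if not 0 < n_test < len(instances):
        raise ValueError("n_test must leave at least one training instance")
    shuffled = list(instances)
    random.Random(seed).shuffle(shuffled)
    return shuffled[n_test:], shuffled[:n_test]


def instance_file_text(instances):
    """Format instances as plain text, one instance per line."""
    return "".join(f"{name}\n" for name in instances)


if __name__ == "__main__":
    # Hypothetical identifiers for the 10 benchmark functions.
    functions = [f"f{i}" for i in range(1, 11)]
    train, test = split_instances(functions, n_test=3)

    # Hypothetical file names; adapt them to your own scenario.
    with open("train-instances.txt", "w") as fh:
        fh.write(instance_file_text(train))
    with open("test-instances.txt", "w") as fh:
        fh.write(instance_file_text(test))
```

The two files can then be given to irace through the `trainInstancesFile` and `testInstancesFile` options of the scenario, so that tuning only ever sees the training functions. With only 10 functions the held-out set is small, so how many to hold out is a judgment call that depends on what you want to claim.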

Irace cannot tell you what you want to do (yet ;-) ), it just (hopefully) enables you to do what you want to do :-)

I hope this helps!

Manuel.

--

Dr Manuel López-Ibáñez | "Beatriz Galindo" Senior Distinguished Researcher | University of Málaga, Spain | http://lopez-ibanez.eu

------------------------------------------------------------------------------

Evolutionary Computation Journal: Special Issue on Reproducibility: http://lopez-ibanez.eu/ecj-si-rep

------------------------------------------------------------------------------

Workshop on Space & AI in association with ECML/PKDD 2021 (deadline: July 30th) http://spaceandai.ijs.si

------------------------------------------------------------------------------

11th Workshop on Evolutionary Computation for the Automated Design of Algorithms (ECADA): https://bonsai.auburn.edu/ecada/GECCO2021

------------------------------------------------------------------------------
