Making idtrackerai faster


Antonio Ortega

Jun 8, 2021, 10:39:12 AM

to idtracker.ai users group

Dear idtracker devs & community

First of all, thanks for making such a high-quality and well-documented piece of software. I must say that for me it worked out of the box, just by following the instructions on the website. I was elated to discover that the

conda install tensorflow-gpu=1.13

command also installs CUDA and cuDNN. Amazing. Keep it up!

I had a question regarding the feasibility of making idtrackerai faster by transferring knowledge from one video to another. I have tried to look up this question in the documentation and also on the Google group but I could not find anything.

As I understand it, idtrackerai has at least one training step, which enables it to work out of the box on new (and very different) videos. I was wondering if it would be possible to run the tracker in a mode that uses the weights produced on video A to analyze video B, provided videos A and B are just chops of video AB. I understand the so-called "global fragments" need to be computed on every video, but maybe the training does not have to be repeated? If so, this would make offline analysis of longer and longer videos (more than a few minutes) feasible.

Thank you very much again for making idtrackerai!

Best regards,

Antonio

Francisco Romero

Jun 15, 2021, 5:51:24 AM

to idtracker.ai users group

Hi Antonio,

Thank you very much for your nice words about idtracker.ai.

Right now idtracker.ai can perform knowledge transfer and identity transfer if the corresponding advanced parameters are set properly (https://idtrackerai.readthedocs.io/en/latest/advanced_parameters.html#knowledge-transfer-and-identity-transfer). This setup will still train the identification CNN on each video. Our experience tells us that knowledge transfer allows users to track videos faster, as the training of the identification CNN starts from a better place. Identity transfer can make the tracking even faster and, on top of that, you will get the same identities for the animals in each video chunk, assuming the animals are the same in each chunk.
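As a rough illustration, these settings could look something like the following in a `local_settings.py` file. The parameter names are assumed from the linked advanced-parameters page and the path is a hypothetical placeholder, so please check both against the documentation for your version.

```python
# local_settings.py: sketch only, verify the names against the linked docs.

# Hypothetical path to the accumulation folder of a previously tracked chunk.
KNOWLEDGE_TRANSFER_FOLDER_IDCNN = "/path/to/session_videoA/accumulation_0"

# Reuse the identities learned on the previous chunk (same animals assumed).
IDENTITY_TRANSFER = True
```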

As I understand, your idea is to just use the previous network to identify the animals from another video without retraining the network, so that everything is faster.

idtracker.ai does not have this option implemented in its current version. While it is possible to implement something along these lines, I am afraid that the identification quality won't be as good as if the network is trained for each video chunk.

We will add this feature to the development pipeline and consider it for the next versions.

Thanks for your feedback.

Cheers,

Francisco Romero-Ferrero

Antonio Ortega

Jun 23, 2021, 10:19:59 AM

to idtracker.ai users group

Hi Francisco

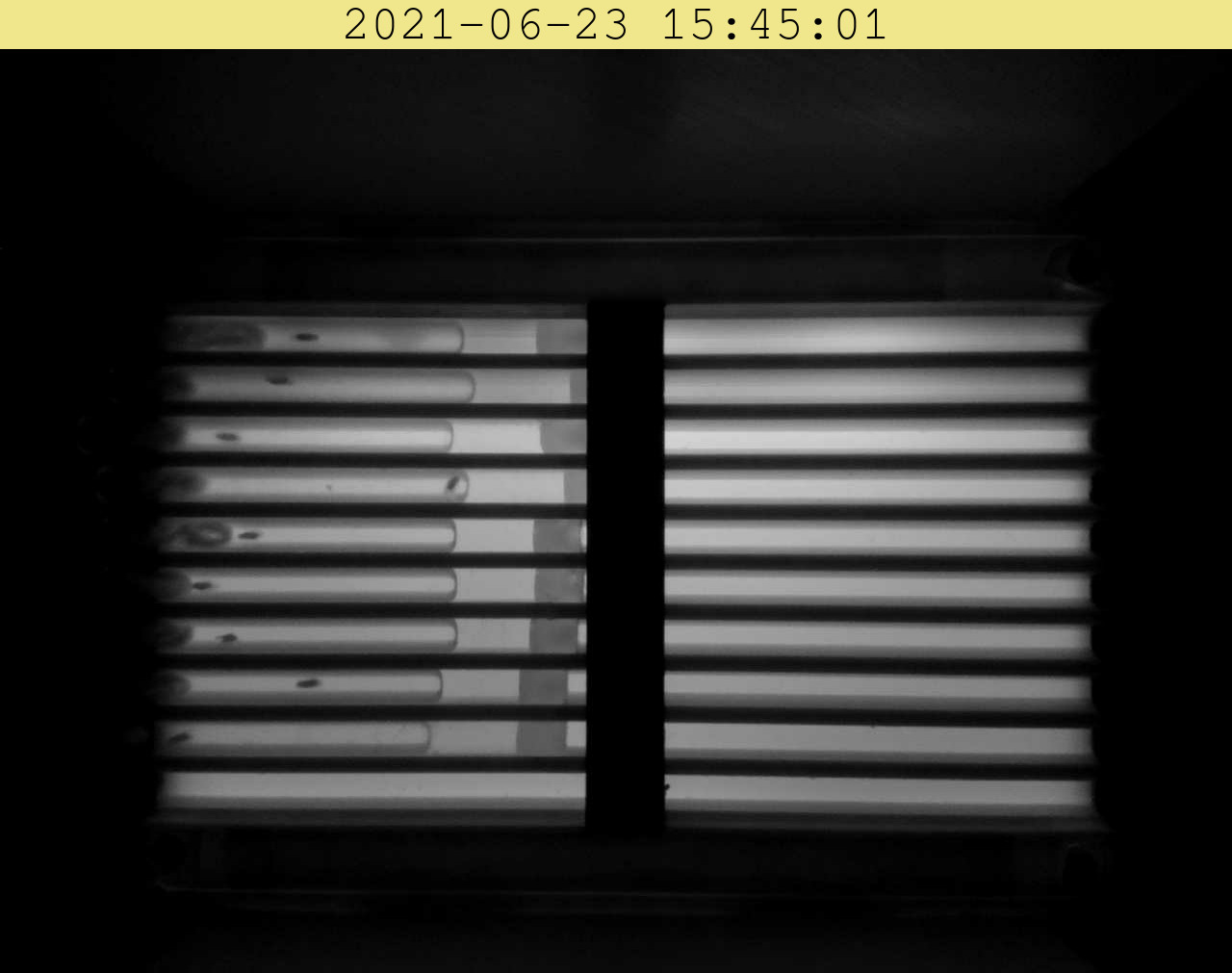

I was very impressed with my first attempts to deploy idtracker.ai in my application. The reason I propose this is that I aim to track animals in videos lasting several hours, which makes computation speed a variable to consider as well. Can you share other tricks that you think could help speed up idtracker.ai? Maybe a naive adaptive thresholding applied before idtracker.ai would help it perform faster? I am thinking of something along the lines of what's shown in the videos in this zip (please let me know if you can't download it).

In it you will see a sqlite file produced with the ethoscope behavioral monitor (https://github.com/gilestrolab/ethoscope/) and two videos of a few frames: one with the raw footage and one with the segmented animals. The segmentation produced by the ethoscope is computationally very cheap and relies solely on OpenCV and scikit-learn, so maybe I could provide the result of this segmentation to idtracker.ai so that it can run on very long videos?

Please see attached photos for a summary of the videos.

Thank you!

Antonio Ortega

Jun 23, 2021, 10:55:29 AM

to idtracker.ai users group

Thinking twice about this, I realize that maybe this helps but it's not going to be a major speed-up, because one of the first actions idtracker.ai performs is the segmentation based on the min and max one provides in the configuration, right? A segmented video would make only that step redundant, but everything else (which I imagine is the computationally expensive part) would still be needed. Am I right? Maybe I can downsample the data to 2 or 3 fps (instead of >30)?
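To make the downsampling idea concrete, here is a minimal sketch of how one could pick which frames to keep when going from, say, 30 fps to 3 fps. The function name `frames_to_keep` is made up for illustration and is not part of idtracker.ai; the selected indices would still have to be used to write a reduced video with a tool of your choice.

```python
def frames_to_keep(n_frames, source_fps, target_fps):
    """Return the indices of the frames kept when lowering the frame rate."""
    if source_fps <= 0 or target_fps <= 0:
        raise ValueError("frame rates must be positive")
    if target_fps >= source_fps:
        # Nothing to drop: the video is already at or below the target rate.
        return list(range(n_frames))
    step = source_fps / target_fps  # source frames per kept frame
    kept = []
    next_pick = 0.0
    for index in range(n_frames):
        if index >= next_pick:
            kept.append(index)
            next_pick += step
    return kept


# One second of 30 fps footage reduced to 3 fps keeps every tenth frame.
print(frames_to_keep(30, 30, 3))
```

One caveat: at a lower frame rate the animals move further between consecutive frames, so blobs may overlap less from one frame to the next, which could affect any step that relies on that overlap.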

Antonio Ortega

Jul 27, 2021, 1:24:02 PM

to idtracker.ai users group

Following up on this, after taking a deeper dive into the code, I realize idtrackerai spends a lot of time on this for loop

I can imagine this loop is really essential for the high-quality tracking performed by idtrackerai. It goes frame by frame, and blob by blob within each frame, checking which future blob each previous blob connects to (in terms of pixel overlap). If I understand correctly from the code, and also based on a quick check of my CPU usage, this is done in a single thread, i.e. fully sequentially. Maybe doing it in parallel would speed this process up a lot (depending on how many cores the machine has available, of course)? I think this is doable if idtrackerai prepares N jobs, each in charge of total frames/N, plus a final job that connects the last and first frames of jobs working on contiguous frames, i.e. integrates the N outputs?
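To illustrate what I mean, here is a toy sketch of the chunk-and-stitch idea. It is not idtrackerai's actual code: blobs are modelled as plain Python sets of pixel coordinates, and every function name is hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def overlaps(frame_a, frame_b, index_a, index_b):
    """Link each blob in frame_a to the blobs in frame_b sharing a pixel."""
    links = []
    for i, blob_a in enumerate(frame_a):
        for j, blob_b in enumerate(frame_b):
            if blob_a & blob_b:  # non-empty intersection means pixel overlap
                links.append(((index_a, i), (index_b, j)))
    return links


def connect_chunk(frames, start, stop):
    """Sequentially connect consecutive frames inside [start, stop)."""
    links = []
    for t in range(start, stop - 1):
        links.extend(overlaps(frames[t], frames[t + 1], t, t + 1))
    return links


def connect_parallel(frames, n_jobs, executor_cls=ThreadPoolExecutor):
    """Connect blobs chunk by chunk, then stitch the chunk boundaries."""
    n = len(frames)
    if n < 2:
        return []
    n_jobs = max(1, min(n_jobs, n))
    size = -(-n // n_jobs)  # ceiling division: frames per job
    bounds = [(s, min(s + size, n)) for s in range(0, n, size)]
    with executor_cls(max_workers=n_jobs) as pool:
        futures = [pool.submit(connect_chunk, frames, s, e) for s, e in bounds]
        links = [link for f in futures for link in f.result()]
    # Final job: connect the last frame of each chunk to the first of the next.
    for _, end in bounds[:-1]:
        links.extend(overlaps(frames[end - 1], frames[end], end - 1, end))
    return sorted(links)
```

Threads are used here only to keep the sketch simple; since the work is CPU-bound, a real implementation would more likely use processes (for example `concurrent.futures.ProcessPoolExecutor`) and send each worker only its own slice of frames.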

Let me know if you think this is relevant!

Best regards,

Antonio

Paco Romero

Jul 30, 2021, 4:12:29 AM

to Antonio Ortega, idtracker.ai users group

Hi Antonio,

You are correct about the fact that such a loop is crucial for the functioning of idtracker.ai and about the fact that it is done in a single thread.

I also agree with you that doing such a loop in parallel would speed up the process a lot.

As you say, the best approach would be to connect the blobs by episodes (one could reuse the FRAMES_PER_EPISODE constant) and then connect the extremes of each episode.

The reason I haven't implemented that solution in the past was just a matter of time and priorities during the PhD.

I am happy if you want to give it a try and implement such parallelization in idtracker.ai in a branch that we can then merge.

Let me know what you think and we can discuss the implementation details. I would also suggest that you do it on top of the v4-dev branch, so that it goes into the next release.

I will be out of the lab during August but I am happy to take a look when I am back in September.

Best regards,

Paco

Francisco Romero-Ferrero, Ph.D.

Collective Behaviour Lab, Champalimaud Research

Av. de Brasilia, Doca de Pedrouços

1400-038 Lisboa, Portugal
