record_transformer--how to prevent throwing errors if field not found

hokie...@gmail.com

Dec 4, 2018, 11:18:41 AM

to Fluentd Google Group

Hi Everyone,

I am using the record_transformer filter to extract different parts of metricbeat messages and send them as separate, filtered messages from fluentd. The plugin works great and does exactly what I need. The only issue is that it throws the following error when a message that does not contain the target JSON field comes in:

2018-12-04 11:05:22 -0500 [warn]: #0 dump an error event: error_class=RuntimeError error="failed to expand `record[\"system\"][\"process\"][\"fd\"][\"open\"]` : error = undefined method `[]' for nil:NilClass" location="/opt/td-agent/embedded/lib/ruby/gems/2.4.0/gems/fluentd-1.2.2/lib/fluent/plugin/filter_record_transformer.rb:310:in `rescue in expand'" tag="metricbeat" time=2018-12-04 11:05:21.687000000 -0500 record={"@timestamp"=>"2018-12-04T16:05:21.687Z", "@metadata"=>{"beat"=>"metricbeat", "type"=>"doc", "version"=>"6.4.0"}, "metricset"=>{"module"=>"system", "rtt"=>1426, "name"=>"network"}, "system"=>{"network"=>{"name"=>"enp59s0", "in"=>{"bytes"=>0, "packets"=>0, "errors"=>0, "dropped"=>0}, "out"=>{"packets"=>0, "bytes"=>0, "errors"=>0, "dropped"=>0}}}, "beat"=>{"hostname"=>"ace", "version"=>"6.4.0", "name"=>"ace"}, "host"=>{"name"=>"ace"}}

Is there any way to disable these errors? In my case they aren't really errors, because metricbeat sends out different message types and I expect some messages not to contain all of the fields I am looking for. Or should I put the grep filter in front of the record_transformer filter?
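If you go the grep route, the idea is to let through only events from the metricset whose fields you extract, using the `metricset.name` field visible in the record above. The sketch below models that selection in plain Ruby on trimmed records; it does not use the grep plugin itself, and both the helper `for_metricset` and the cpu record are hypothetical.

```ruby
# Plain-Ruby model of a grep-style pre-filter: keep only events from the
# metricset you want before extracting nested fields from them.
# `for_metricset` is a hypothetical helper for illustration, not fluentd API.

# Trimmed events: the network event mirrors the one in the warning above;
# the cpu event is a hypothetical counterpart.
network_event = {
  "metricset" => { "module" => "system", "name" => "network" },
  "system" => { "network" => { "name" => "enp59s0" } }
}
cpu_event = {
  "metricset" => { "module" => "system", "name" => "cpu" },
  "system" => { "cpu" => { "iowait" => { "pct" => 0.0 } } }
}

# Select events whose metricset.name equals the requested name.
def for_metricset(events, name)
  events.select { |event| event.dig("metricset", "name") == name }
end

cpu_only = for_metricset([network_event, cpu_event], "cpu")

# Every remaining event has system.cpu, so the [] chain no longer raises.
cpu_only.each do |event|
  puts event["system"]["cpu"]["iowait"]["pct"]
end
```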

Any guidance is greatly appreciated--thanks!

--John

Mr. Fiber

Dec 5, 2018, 10:20:28 AM

to Fluentd Google Group

To avoid this error, you can use the `dig` method instead of a `[]` chain.

record.dig('system', 'process', 'fd', 'open')
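Plain Ruby shows the difference outside fluentd. `Hash#dig` (available since Ruby 2.3) returns nil as soon as a key along the path is missing, whereas the `[]` chain goes on to call `[]` on that nil and raises NoMethodError, which is what the warning above reports. The record is a trimmed copy of the one in the warning.

```ruby
# Trimmed copy of the network-metricset record from the warning above:
# "system" contains "network" but no "process" key.
record = {
  "metricset" => { "module" => "system", "name" => "network" },
  "system" => { "network" => { "name" => "enp59s0" } }
}

# [] chain: record["system"]["process"] is nil, and calling ["fd"] on nil
# raises NoMethodError, as in the warning above.
chain_result =
  begin
    record["system"]["process"]["fd"]["open"]
  rescue NoMethodError => e
    "raised #{e.class}"
  end
puts chain_result # prints raised NoMethodError

# Hash#dig returns nil as soon as a key along the path is missing.
dig_result = record.dig("system", "process", "fd", "open")
p dig_result # prints nil
```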

Masahiro

--

You received this message because you are subscribed to the Google Groups "Fluentd Google Group" group.

To unsubscribe from this group and stop receiving emails from it, send an email to fluentd+u...@googlegroups.com.

For more options, visit https://groups.google.com/d/optout.

John Yost

Dec 6, 2018, 10:48:46 AM

to flu...@googlegroups.com

Hi Masahiro,

Gotcha, cool! One question: I am using record_transformer as-is. Is there a config setting to use the dig method?

--John

Mr. Fiber

Dec 6, 2018, 2:36:56 PM

to Fluentd Google Group

Put `enable_ruby true` in the record_transformer config.

If you use record_modifier instead, `enable_ruby true` is not needed.

John Yost

Dec 6, 2018, 2:57:13 PM

to flu...@googlegroups.com

Hmm, that's puzzling, because I already have enable_ruby set to true:

<label @system-cpu-iowait-pct>
  <filter *beat>
    @type record_transformer
    enable_ruby true
    renew_record true
    <record>
      timestamp ${record["@timestamp"]}
      system-cpu-iowait-pct ${record["system"]["cpu"]["iowait"]["pct"]}
      route system-cpu-iowait-pct
    </record>
  </filter>
  <filter *beat>
    @type time_sampling
    unit ${tag}, route
    interval 60
  </filter>
  <match *beat>

Mr. Fiber

Dec 10, 2018, 3:49:09 AM

to Fluentd Google Group

> Hmmm,..that's puzzling, because I've already got enable_ruby set to true:

Your reply is unclear to me. Your pasted configuration still uses the [] chain instead of dig.

What is the problem?

John Yost

Dec 10, 2018, 3:37:14 PM

to flu...@googlegroups.com

I apologize, but I don't understand the config change you are suggesting. Could you confirm what the configuration should be changed to? I think I need the ["system"]["cpu"]["iowait"]["pct"] chain to get only this field.

Mr. Fiber

Dec 10, 2018, 3:41:42 PM

to Fluentd Google Group

record["system"]["cpu"]["iowait"]["pct"]

to

record.dig('system', 'cpu', 'iowait', 'pct')

John Yost

Dec 10, 2018, 3:58:28 PM

to flu...@googlegroups.com

Oh, I see, thanks! Yep, updated as you specified and the errors are fixed, thanks again!

--John

Mr. Fiber

Dec 12, 2018, 7:23:10 PM

to Fluentd Google Group

I added documentation for this point:

John Yost

Dec 13, 2018, 7:34:48 AM

to flu...@googlegroups.com

Argh, yep, there it is, thank you. I hope to meet you at KubeCon today.

--John

John Yost

Dec 17, 2018, 4:04:37 PM

to flu...@googlegroups.com

Hi Masahiro,

Okay, this has uncovered another problem. The dig method stops the errors, but now I am getting downstream errors due to the null value of the field. Is there a filter that will drop a message where a field has a null value?

Thanks

--John

John Yost

Jan 3, 2019, 1:29:47 PM

to flu...@googlegroups.com

Hi Masahiro,

Okay, I returned to this and determined that the null value is for the field I am looking up with record.dig:

<filter *beat>
  @type record_transformer
  enable_ruby true
  renew_record true
  <record>
    timestamp ${record["@timestamp"]}
    system-cpu-iowait-pct ${record.dig("system", "cpu", "iowait", "pct")}
    route system-cpu-iowait-pct
  </record>
</filter>

results in this:

#0 emitted the record {"timestamp"=>"2019-01-03T18:01:01.846Z", "system-cpu-iowait-pct"=>nil, "route"=>"system-cpu-iowait-pct"} at the time of 1546538461

If I stick with the original configuration:

<filter *beat>
  @type record_transformer
  enable_ruby true
  renew_record true
  <record>
    timestamp ${record["@timestamp"]}
    system-cpu-iowait-pct ${record["system"]["cpu"]["iowait"]["pct"]}
    route system-cpu-iowait-pct
  </record>
</filter>

It works correctly and I get this in my td-agent client:

{"timestamp":"2019-01-03T18:02:02.517Z","system-cpu-iowait-pct":0.6943,"route":"system-cpu-iowait-pct"}

and I get the same thing in the file output:

2019-01-03T13:27:11-05:00 metricbeat {"timestamp":"2019-01-03T18:27:11.846Z","system-cpu-iowait-pct":null,"route":"system-cpu-iowait-pct"}

with this configuration:

<store>
  @type file
  @id output_file
  path /home/hokiegeek2/development/data/metricbeats
</store>

It *seems* like the filter is seeing the field but failing to retrieve it.

Any ideas?

--John

John Yost

Jan 3, 2019, 1:31:16 PM

to flu...@googlegroups.com

Also, my question from 12/17 is no longer valid. Again, the issue appears to be that the filter can find the JSON field but does not retrieve its value.

Thanks

--John

John Yost

Jan 3, 2019, 2:12:58 PM

to flu...@googlegroups.com

Okay, more results. For all fields, the lookup using record[][][] works, but record.dig("","","") produces nil, which, according to the docs, means the field was not found. For the following:

<filter *beat>
  @type record_transformer
  enable_ruby true
  renew_record true
  <record>
    timestamp ${record["@timestamp"]}
    system-cpu-user-pct ${record["system"]["cpu"]["user"]["pct"]}
    system-cpu-user-pct ${record.dig("system", "cpu", "user", "pct")}
    route system-cpu-user-pct
  </record>
</filter>

The version with [] notation works; the dig version does not.

--John

John Yost

Jan 3, 2019, 3:04:23 PM

to flu...@googlegroups.com

Here's an interesting follow-up. I adjusted the dig command as follows:

<record>
  timestamp ${record["@timestamp"]}
  #system-cpu-user-pct ${record["system"]["cpu"]["user"]["pct"]}
  system-cpu-user-pct ${record.dig("system")}
  route system-cpu-user-pct
</record>

This does not throw an error, but the dig lookup only picks up records with the "system", "process" path:

2019-01-03 14:52:53 -0500 [info]: #0 emitted the record {"timestamp"=>"2019-01-03T19:52:51.846Z", "system-cpu-user-pct"=>{"process"=>{"username"=>"root", "pid"=>1, "name"=>"systemd", "cpu"=>{"start_time"=>"2019-01-03T11:40:01.000Z", "total"=>{"value"=>13190, "pct"=>0.001, "norm"=>{"pct"=>0.0001}}}, "ppid"=>0, "state"=>"sleeping", "pgid"=>1, "cmdline"=>"/sbin/init splash", "fd"=>{"limit"=>{"soft"=>1048576, "hard"=>1048576}, "open"=>221}, "memory"=>{"size"=>231321600, "rss"=>{"bytes"=>9736192, "pct"=>0.0003}, "share"=>6774784}, "cwd"=>"/"}}, "route"=>"system-cpu-user-pct"} at the time of 1546545171

2019-01-03 14:53:53 -0500 [info]: #0 emitted the record {"timestamp"=>"2019-01-03T19:53:51.846Z", "system-cpu-user-pct"=>{"process"=>{"cpu"=>{"start_time"=>"2019-01-03T11:40:01.000Z", "total"=>{"pct"=>0.001, "norm"=>{"pct"=>0.0001}, "value"=>13230}}, "fd"=>{"open"=>221, "limit"=>{"soft"=>1048576, "hard"=>1048576}}, "ppid"=>0, "pgid"=>1, "username"=>"root", "pid"=>1, "state"=>"sleeping", "cwd"=>"/", "name"=>"systemd", "memory"=>{"size"=>231321600, "rss"=>{"bytes"=>9736192, "pct"=>0.0003}, "share"=>6774784}, "cmdline"=>"/sbin/init splash"}}, "route"=>"system-cpu-user-pct"} at the time of 1546545231

2019-01-03 14:54:53 -0500 [info]: #0 emitted the record {"timestamp"=>"2019-01-03T19:54:51.846Z", "system-cpu-user-pct"=>{"process"=>{"username"=>"root", "memory"=>{"size"=>0, "rss"=>{"bytes"=>0, "pct"=>0}, "share"=>0}, "fd"=>{"open"=>0, "limit"=>{"soft"=>1024, "hard"=>4096}}, "pid"=>56, "cpu"=>{"start_time"=>"2019-01-03T11:40:01.000Z", "total"=>{"pct"=>0, "norm"=>{"pct"=>0}, "value"=>0}}, "name"=>"netns", "pgid"=>0, "state"=>"unknown", "ppid"=>2}}, "route"=>"system-cpu-user-pct"} at the time of 1546545291

2019-01-03 14:55:53 -0500 [info]: #0 emitted the record {"timestamp"=>"2019-01-03T19:55:51.846Z", "system-cpu-user-pct"=>{"process"=>{"state"=>"unknown", "ppid"=>2, "username"=>"root", "memory"=>{"rss"=>{"bytes"=>0, "pct"=>0}, "share"=>0, "size"=>0}, "cpu"=>{"total"=>{"value"=>0, "pct"=>0, "norm"=>{"pct"=>0}}, "start_time"=>"2019-01-03T11:40:01.000Z"}, "pid"=>54, "name"=>"kworker/7:0H", "fd"=>{"open"=>0, "limit"=>{"soft"=>1024, "hard"=>4096}}, "pgid"=>0}}, "route"=>"system-cpu-user-pct"} at the time of 1546545351

I don't know why record["system"]["cpu"]["user"]["pct"] works but system-cpu-user-pct ${record.dig("system")} does not.
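One reading that fits the records shown here: metricbeat sends each metricset as a separate event, and `record.dig("system")` returns the entire hash stored under "system", siblings included. In the events logged above that hash presumably held only "process" because they came from the process metricset. The plain-Ruby sketch below uses trimmed records shaped like those in this thread; it illustrates `dig`'s behavior and is not a diagnosis of this particular pipeline.

```ruby
# Trimmed events shaped like those in this thread. Each metricset event
# carries only its own subtree under "system".
process_event = {
  "system" => { "process" => { "name" => "systemd", "pid" => 1 } }
}
cpu_event = {
  "system" => { "cpu" => { "cores" => 12, "user" => { "pct" => 0.382 } } }
}

# dig with a single key returns the whole hash stored under that key,
# siblings included; nothing is filtered out.
p process_event.dig("system").keys    # prints ["process"]
p cpu_event.dig("system", "cpu").keys # prints ["cores", "user"]

# The cpu path exists only in the cpu event.
p process_event.dig("system", "cpu", "user", "pct") # prints nil
p cpu_event.dig("system", "cpu", "user", "pct")     # prints 0.382
```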

--John

John Yost

Jan 3, 2019, 3:28:16 PM

to flu...@googlegroups.com

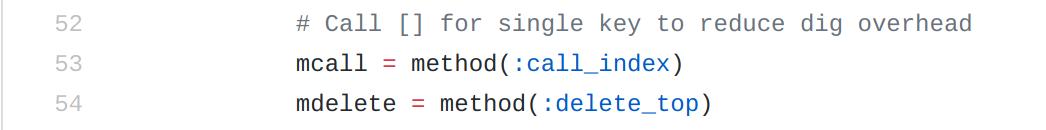

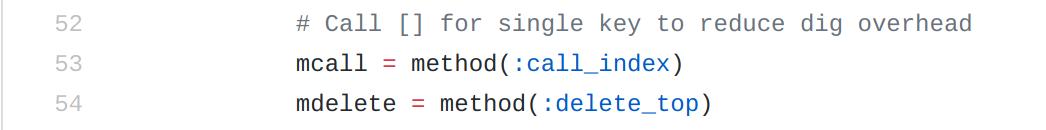

I may be way off here, but I am wondering if the issue is on line 53 of record_accessor.rb (see the screenshots above). This seems to correlate with the "system" JSON element lookup hitting only the "process" child node.

Mr. Fiber

Jan 3, 2019, 5:14:20 PM

to Fluentd Google Group

> This does not throw an error, but the dig lookup only picks up records with the "system", "process" path:

What does this mean? Does the "system" field contain multiple fields, like below, but dig returns only the "process" field?

{"system": {"process":{}, "other_field":{}}}

I tested a simple configuration and it works.

<source>
  @type forward
</source>

<filter>
  @type record_transformer
  enable_ruby true
  <record>
    new_field ${record.dig("system")}
  </record>
</filter>

<match test.**>
  @type stdout
</match>

command:

% echo '{"system":{"f1":"v1","f2":"v2"}}' | ~/dev/fluentd/fluentd/bin/fluent-cat test.foo

result:

2019-01-04 07:09:50.449622000 +0900 test.foo: {"system":{"f1":"v1","f2":"v2"},"new_field":{"f1":"v1","f2":"v2"}}

> This seems to correlate with "system" JSON element lookup hitting the "process" child node only.

This is not related, because the record_transformer filter uses record_accessor only for the "remove_keys" parameter.

hokie...@gmail.com

Jan 3, 2019, 5:26:33 PM

to flu...@googlegroups.com

Hi, the system field has multiple child nodes, and process is one of them. My configuration only brings back the JSON tree hash that contains the process child node; it will not retrieve the one I want.

—John

Mr. Fiber

Jan 3, 2019, 5:27:35 PM

to Fluentd Google Group

Please paste your entire actual record.

John Yost

Jan 3, 2019, 8:52:37 PM

to flu...@googlegroups.com

Hi Masahiro,

Here are some of the messages coming in from metricbeat:

2019-01-03T15:45:28.023-0500 INFO [monitoring] log/log.go:149 Total non-zero metrics {"monitoring": {"metrics": {"beat":{"cpu":{"system":{"ticks":737170,"time":{"ms":737177}},"total":{"ticks":2171660,"time":{"ms":2171672},"value":2171660},"user":{"ticks":1434490,"time":{"ms":1434495}}},"info":{"ephemeral_id":"11d557b6-086f-4b28-a9f2-0bf03168ef78","uptime":{"ms":31351792}},"memstats":{"gc_next":6398144,"memory_alloc":5630736,"memory_total":338459113664,"rss":72347648}},"libbeat":{"config":{"module":{"running":0},"reloads":1},"output":{"events":{"acked":1145050,"active":23,"batches":15621,"failed":808454,"total":1953527},"read":{"bytes":270084,"errors":2931},"type":"logstash","write":{"bytes":357306515,"errors":6}},"pipeline":{"clients":2,"events":{"active":23,"dropped":183315,"published":1328388,"retry":1026710,"total":1328388},"queue":{"acked":1328365}}},"metricbeat":{"system":{"core":{"events":3904,"success":3904},"cpu":{"events":5700,"success":5700},"diskio":{"events":20984,"success":20984},"filesystem":{"events":136817,"success":136817},"fsstat":{"events":3345,"success":3345},"load":{"events":5712,"success":5712},"memory":{"events":5704,"success":5704},"network":{"events":28475,"success":28475},"process":{"events":1105843,"success":1105843},"process_summary":{"events":5700,"success":5700},"uptime":{"events":6204,"success":6204}}},"system":{"cpu":{"cores":8},"load":{"1":2.04,"15":2.77,"5":2.73,"norm":{"1":0.255,"15":0.3463,"5":0.3413}}}}}}

2019-01-03T15:45:26.728-0500 INFO [monitoring] log/log.go:141 Non-zero metrics in the last 30s {"monitoring": {"metrics": {"beat":{"cpu":{"system":{"ticks":737100,"time":{"ms":676}},"total":{"ticks":2171520,"time":{"ms":1922},"value":2171520},"user":{"ticks":1434420,"time":{"ms":1246}}},"info":{"ephemeral_id":"11d557b6-086f-4b28-a9f2-0bf03168ef78","uptime":{"ms":31350496}},"memstats":{"gc_next":9127024,"memory_alloc":4604328,"memory_total":338435326968,"rss":8192}},"libbeat":{"config":{"module":{"running":0}},"output":{"events":{"acked":1171,"batches":17,"failed":1180,"total":2351},"read":{"bytes":300,"errors":3},"write":{"bytes":347515}},"pipeline":{"clients":5,"events":{"active":5,"dropped":119,"published":1290,"retry":1574,"total":1290},"queue":{"acked":1290}}},"metricbeat":{"system":{"core":{"events":8,"success":8},"cpu":{"events":6,"success":6},"diskio":{"events":43,"success":43},"filesystem":{"events":164,"success":164},"fsstat":{"events":4,"success":4},"load":{"events":6,"success":6},"memory":{"events":6,"success":6},"network":{"events":30,"success":30},"process":{"events":1010,"success":1010},"process_summary":{"events":6,"success":6},"uptime":{"events":7,"success":7}}},"system":{"load":{"1":1.96,"15":2.77,"5":2.73,"norm":{"1":0.245,"15":0.3463,"5":0.3413}}}}}}

Mr. Fiber

Jan 5, 2019, 4:59:18 PM

to Fluentd Google Group

So fluentd receives the entire JSON of this line, and you want to fetch the record["monitoring"]["metrics"]["metricbeat"]["system"] field, right?

I tested it and it works correctly.

conf:

<source>
  @type forward
</source>

<filter>
  @type record_transformer
  enable_ruby true
  <record>
    new_field ${record.dig("monitoring", "metrics", "metricbeat", "system")}
  </record>
</filter>

<match test.**>
  @type stdout
</match>

command:

% echo '{"monitoring": {"metrics": {"beat":{"cpu":{"system":{"ticks":737170,"time":{"ms":737177}},"total":{"ticks":2171660,"time":{"ms":2171672},"value":2171660},"user":{"ticks":1434490,"time":{"ms":1434495}}},"info":{"ephemeral_id":"11d557b6-086f-4b28-a9f2-0bf03168ef78","uptime":{"ms":31351792}},"memstats":{"gc_next":6398144,"memory_alloc":5630736,"memory_total":338459113664,"rss":72347648}},"libbeat":{"config":{"module":{"running":0},"reloads":1},"output":{"events":{"acked":1145050,"active":23,"batches":15621,"failed":808454,"total":1953527},"read":{"bytes":270084,"errors":2931},"type":"logstash","write":{"bytes":357306515,"errors":6}},"pipeline":{"clients":2,"events":{"active":23,"dropped":183315,"published":1328388,"retry":1026710,"total":1328388},"queue":{"acked":1328365}}},"metricbeat":{"system":{"core":{"events":3904,"success":3904},"cpu":{"events":5700,"success":5700},"diskio":{"events":20984,"success":20984},"filesystem":{"events":136817,"success":136817},"fsstat":{"events":3345,"success":3345},"load":{"events":5712,"success":5712},"memory":{"events":5704,"success":5704},"network":{"events":28475,"success":28475},"process":{"events":1105843,"success":1105843},"process_summary":{"events":5700,"success":5700},"uptime":{"events":6204,"success":6204}}},"system":{"cpu":{"cores":8},"load":{"1":2.04,"15":2.77,"5":2.73,"norm":{"1":0.255,"15":0.3463,"5":0.3413}}}}}}' | ~/fluent-cat test.foo

2019-01-06 06:56:28.691276000 +0900 test.foo: {"monitoring":{"metrics":{"beat":{"cpu":{"system":{"ticks":737170,"time":{"ms":737177}},"total":{"ticks":2171660,"time":{"ms":2171672},"value":2171660},"user":{"ticks":1434490,"time":{"ms":1434495}}},"info":{"ephemeral_id":"11d557b6-086f-4b28-a9f2-0bf03168ef78","uptime":{"ms":31351792}},"memstats":{"gc_next":6398144,"memory_alloc":5630736,"memory_total":338459113664,"rss":72347648}},"libbeat":{"config":{"module":{"running":0},"reloads":1},"output":{"events":{"acked":1145050,"active":23,"batches":15621,"failed":808454,"total":1953527},"read":{"bytes":270084,"errors":2931},"type":"logstash","write":{"bytes":357306515,"errors":6}},"pipeline":{"clients":2,"events":{"active":23,"dropped":183315,"published":1328388,"retry":1026710,"total":1328388},"queue":{"acked":1328365}}},"metricbeat":{"system":{"core":{"events":3904,"success":3904},"cpu":{"events":5700,"success":5700},"diskio":{"events":20984,"success":20984},"filesystem":{"events":136817,"success":136817},"fsstat":{"events":3345,"success":3345},"load":{"events":5712,"success":5712},"memory":{"events":5704,"success":5704},"network":{"events":28475,"success":28475},"process":{"events":1105843,"success":1105843},"process_summary":{"events":5700,"success":5700},"uptime":{"events":6204,"success":6204}}},"system":{"cpu":{"cores":8},"load":{"1":2.04,"15":2.77,"5":2.73,"norm":{"1":0.255,"15":0.3463,"5":0.3413}}}}},"new_field":{"core":{"events":3904,"success":3904},"cpu":{"events":5700,"success":5700},"diskio":{"events":20984,"success":20984},"filesystem":{"events":136817,"success":136817},"fsstat":{"events":3345,"success":3345},"load":{"events":5712,"success":5712},"memory":{"events":5704,"success":5704},"network":{"events":28475,"success":28475},"process":{"events":1105843,"success":1105843},"process_summary":{"events":5700,"success":5700},"uptime":{"events":6204,"success":6204}}}

As the result above shows, "new_field" contains multiple fields: core, cpu, and more.

John Yost

Jan 8, 2019, 11:11:51 AM

to flu...@googlegroups.com

Hi,

I ran this again. Here are the results:

For a message like this from metricbeat:

2019-01-08T10:24:14-05:00 metricbeat {"@timestamp":"2019-01-08T15:24:14.478Z","@metadata":{"beat":"metricbeat","type":"doc","version":"6.2.4"},"metricset":{"name":"cpu","module":"system","rtt":76},"system":{"cpu":{"steal":{"pct":0},"softirq":{"pct":0},"cores":12,"nice":{"pct":0},"total":{"pct":0.595},"user":{"pct":0.382},"system":{"pct":0.213},"idle":{"pct":11.405},"iowait":{"pct":0},"irq":{"pct":0}}},"beat":{"name":"hokiegeek2.home","hostname":"hokiegeek2.home","version":"6.2.4"}}

The configuration:

<record>
  timestamp ${record["@timestamp"]}
  system-cpu-user-pct ${record["system"]["cpu"]["user"]["pct"]}
  route system-cpu-user-pct
</record>

yields the correct, expected results:

[LoggingHandler] Line 112 INFO: TimeSeriesMessageProcessedEvent route: system-cpu-user-pct; value: 0.3369; timestamp: 2019-01-08 15:34:34.474000

When I convert to the dig method:

<record>
  timestamp ${record["@timestamp"]}
  system-cpu-user-pct ${record.dig("system","cpu","user","pct")}
  route system-cpu-user-pct
</record>

The value is not retrieved:

[TimeSeriesMessageHandler] Line 111 ERROR: Error malformed node or string: <_ast.Name object at 0x125c82240> in processing the message {"timestamp":"2019-01-08T15:30:47.891Z","system-cpu-user-pct":null,"route":"system-cpu-user-pct"}

—John

<dig-single-key.jpeg>

hokie...@gmail.com

Jan 16, 2019, 8:19:03 AM

to Fluentd Google Group

Hi Masahiro, have you been able to investigate further, or do you need more info? Unfortunately, this is a big problem that I need to get fixed. If you don't have the bandwidth to work on it, could you let me know where I could potentially fix it myself? That's okay too.

Thanks! Fluentd is awesome, just need to resolve this edge case. :)

--John

Mr. Fiber

Jan 17, 2019, 5:34:54 AM

to Fluentd Google Group

From my test, there is no difference between [] and dig. Here is the result.

Conf:

<source>
  @type forward
</source>

<filter test.foo>
  @type record_transformer
  enable_ruby true
  <record>
    system-cpu-user-pct ${record["system"]["cpu"]["user"]["pct"]}
    route system-cpu-user-pct
  </record>
</filter>

<filter test.bar>
  @type record_transformer
  enable_ruby true
  <record>
    system-cpu-user-pct ${record.dig("system","cpu","user","pct")}
    route system-cpu-user-pct
  </record>
</filter>

<match test.**>
  @type stdout
</match>

Log:

2019-01-17 19:25:25 +0900 [info]: #0 fluentd worker is now running worker=0

2019-01-17 19:25:28.925784000 +0900 test.foo: {"@timestamp":"2019-01-08T15:24:14.478Z","@metadata":{"beat":"metricbeat","type":"doc","version":"6.2.4"},"metricset":{"name":"cpu","module":"system","rtt":76},"system":{"cpu":{"steal":{"pct":0},"softirq":{"pct":0},"cores":12,"nice":{"pct":0},"total":{"pct":0.595},"user":{"pct":0.382},"system":{"pct":0.213},"idle":{"pct":11.405},"iowait":{"pct":0},"irq":{"pct":0}}},"beat":{"name":"hokiegeek2.home","hostname":"hokiegeek2.home","version":"6.2.4"},"system-cpu-user-pct":0.382,"route":"system-cpu-user-pct"}

2019-01-17 19:25:31.866312000 +0900 test.bar: {"@timestamp":"2019-01-08T15:24:14.478Z","@metadata":{"beat":"metricbeat","type":"doc","version":"6.2.4"},"metricset":{"name":"cpu","module":"system","rtt":76},"system":{"cpu":{"steal":{"pct":0},"softirq":{"pct":0},"cores":12,"nice":{"pct":0},"total":{"pct":0.595},"user":{"pct":0.382},"system":{"pct":0.213},"idle":{"pct":11.405},"iowait":{"pct":0},"irq":{"pct":0}}},"beat":{"name":"hokiegeek2.home","hostname":"hokiegeek2.home","version":"6.2.4"},"system-cpu-user-pct":0.382,"route":"system-cpu-user-pct"}

command:

echo '{"@timestamp":"2019-01-08T15:24:14.478Z","@metadata":{"beat":"metricbeat","type":"doc","version":"6.2.4"},"metricset":{"name":"cpu","module":"system","rtt":76},"system":{"cpu":{"steal":{"pct":0},"softirq":{"pct":0},"cores":12,"nice":{"pct":0},"total":{"pct":0.595},"user":{"pct":0.382},"system":{"pct":0.213},"idle":{"pct":11.405},"iowait":{"pct":0},"irq":{"pct":0}}},"beat":{"name":"hokiegeek2.home","hostname":"hokiegeek2.home","version":"6.2.4"}}' | ~/dev/fluentd/fluentd/bin/fluent-cat test.foo

echo '{"@timestamp":"2019-01-08T15:24:14.478Z","@metadata":{"beat":"metricbeat","type":"doc","version":"6.2.4"},"metricset":{"name":"cpu","module":"system","rtt":76},"system":{"cpu":{"steal":{"pct":0},"softirq":{"pct":0},"cores":12,"nice":{"pct":0},"total":{"pct":0.595},"user":{"pct":0.382},"system":{"pct":0.213},"idle":{"pct":11.405},"iowait":{"pct":0},"irq":{"pct":0}}},"beat":{"name":"hokiegeek2.home","hostname":"hokiegeek2.home","version":"6.2.4"}}' | ~/dev/fluentd/fluentd/bin/fluent-cat test.bar
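Masahiro's explanation can be modeled in plain Ruby. On a cpu-metricset event both forms return the same value; on an event from another metricset the [] chain raises (surfacing in fluentd as the "dump an error event" warning at the top of this thread) while dig returns nil, which would then appear as "system-cpu-user-pct":null in the output. The records are trimmed copies of ones in this thread, and the simulation runs outside fluentd.

```ruby
# Trimmed copy of the cpu event used in the test above, plus a trimmed
# network event like the one in the first message of this thread.
cpu_event = {
  "metricset" => { "name" => "cpu" },
  "system" => { "cpu" => { "user" => { "pct" => 0.382 } } }
}
network_event = {
  "metricset" => { "name" => "network" },
  "system" => { "network" => { "name" => "enp59s0" } }
}

# Evaluate both forms of the <record> expression for each event.
results = [cpu_event, network_event].map do |event|
  via_dig = event.dig("system", "cpu", "user", "pct")
  via_chain =
    begin
      event["system"]["cpu"]["user"]["pct"]
    rescue NoMethodError
      :raised # in fluentd this shows up as the "dump an error event" warning
    end
  [event.dig("metricset", "name"), via_dig, via_chain]
end

results.each do |name, dig_value, chain_value|
  puts "#{name}: dig=#{dig_value.inspect} chain=#{chain_value.inspect}"
end
```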

I assume you are sending non-metricbeat events to fluentd, and that causes the inconsistent results.

To handle a non-existent field, setting a default value is one approach, like below.

system-cpu-user-pct ${record["system"]["cpu"]["user"]["pct"] || 0.0}

system-cpu-user-pct ${record.dig("system","cpu","user","pct") || 0.0}
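A plain-Ruby check of how a `|| 0.0` default behaves, using records trimmed from the sample above. Two details matter: `||` replaces only nil (or false), so a real reading of 0 passes through unchanged; and in the [] chain form the default helps only when the last key is missing, because a missing intermediate key raises before `||` is evaluated.

```ruby
# Trimmed records: in the cpu event iowait.pct is 0, as in the sample
# record above; the network event has no "cpu" subtree at all.
cpu_event = {
  "system" => { "cpu" => { "iowait" => { "pct" => 0 }, "user" => { "pct" => 0.382 } } }
}
network_event = { "system" => { "network" => { "name" => "enp59s0" } } }

# `||` substitutes the default only for nil or false. 0 is truthy in Ruby,
# so a genuine zero reading is kept as 0.
p(cpu_event.dig("system", "cpu", "iowait", "pct") || 0.0)   # prints 0
p(network_event.dig("system", "cpu", "user", "pct") || 0.0) # prints 0.0

# With the [] chain, a missing intermediate key ("cpu") raises before
# `|| 0.0` is ever evaluated, so the default does not help there.
chain_result =
  begin
    network_event["system"]["cpu"]["user"]["pct"] || 0.0
  rescue NoMethodError
    :raised
  end
p chain_result # prints :raised
```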

John Yost

Jan 17, 2019, 9:56:27 AM

to flu...@googlegroups.com

Wow, you’re correct, these events are handled just fine. I am streaming Metricbeat events exclusively, so I would expect the records to be consistent, but I’m not sure.

But I agree: from what you’re showing, the record_transformer filter works fine with either method. Thanks again for your time researching this. I need to do more investigative work to see why my results don’t match yours.

Thanks

—John

hokie...@gmail.com

Feb 8, 2019, 10:22:18 AM

to Fluentd Google Group

Hi, I just got back to this. Okay, one question: if the field retrieved by the dig method has no value, is there a way to filter out the message so it does not get sent? I cannot send a default value because I am doing time series analysis, so the only messages I can accept are ones that actually have a value.
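The rule being asked for amounts to dropping any record whose extracted value is nil instead of substituting a default. A plain-Ruby sketch of that rule is below; the helper `with_value` is hypothetical, the records are shaped like the record_transformer output earlier in this thread, and how the rule would be attached to the pipeline (for example a grep filter or a small custom filter after record_transformer) is not settled here.

```ruby
# Records shaped like the record_transformer output in this thread: the
# second one came from an event without the cpu path, so dig produced nil.
emitted = [
  { "timestamp" => "2019-01-08T15:24:14.478Z", "system-cpu-user-pct" => 0.382,
    "route" => "system-cpu-user-pct" },
  { "timestamp" => "2019-01-08T15:30:47.891Z", "system-cpu-user-pct" => nil,
    "route" => "system-cpu-user-pct" }
]

# Hypothetical helper: drop records whose routed metric has no value.
# The metric key is read from the "route" field, as in the configs above.
def with_value(records)
  records.reject { |record| record[record["route"]].nil? }
end

kept = with_value(emitted)
kept.each { |record| p record }
```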

Thanks again for your time, much appreciated.

--John
