Chromium audio/video sync leads to unexpected latency

Ke Wu

Jul 27, 2022, 1:40:29 PM

to discuss-webrtc

Hi, I am new to the WebRTC world as a web developer. Recently I have been working on a cloud gaming project that has a strange latency issue.

The scenario is this: the stream looks normal at the beginning, but once the first RTCP sender report (SR) for the audio track is received, the latency starts to increase and I can see the experimental minPlayoutDelay show up in webrtc-internals.

To investigate, I built Chromium and experimented with the synchronizer and the RTCP receiver. First, I found that the latency comes from the UpdateDelay call in rtp_streams_synchronizer2.cc. Second, I found that if I skip the RTCP packet handler in channel_receive.cc (specifically, the sender report handler), the synchronizer never invokes UpdateDelay, and my issue seems resolved without any noticeable a/v sync problem.

After reading some documents about a/v sync in WebRTC, I understand that the sender reports and the synchronizer are legitimate parts of the pipeline in this case.

So my question is: why does the extra latency show up in this case, considering that I can simply turn off the RTCP handler in the audio receive path without any strange behaviour? Could it be because the audio and video capture clocks are not in sync?

Ke Wu

Jul 27, 2022, 10:21:43 PM

to discuss-webrtc

An update: I found a way to munge the SDP on the sender side so that video and audio are played in two different MediaStream instances, which "bypasses" the stream synchronizer.

So my real question becomes: if I don't notice any real a/v sync issue, why does Chromium introduce such a playout delay when I treat them as the same MediaStream (the same a=msid field)?
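A minimal sketch of the kind of SDP munging described above, in Python for illustration only. It assumes Unified Plan SDP where each m-section carries one `a=msid:<stream-id> <track-id>` line; the function name and the replacement stream id `audio-only` are hypothetical, and legacy `a=ssrc:... msid:` lines are not handled. Giving the audio track a different stream id than the video track means the receiver no longer groups them into one MediaStream, so the synchronizer has no audio/video pair to sync.

```python
def split_audio_stream_id(sdp: str, new_stream_id: str = "audio-only") -> str:
    """Rewrite a=msid lines in audio m-sections to use a separate stream id.

    Hypothetical helper: assumes Unified Plan SDP with lines of the form
    "a=msid:<stream-id> <track-id>". Legacy a=ssrc msid lines are untouched.
    """
    out = []
    media = None  # media type of the current m-section ("audio", "video", ...)
    for line in sdp.splitlines():
        if line.startswith("m="):
            media = line[2:].split(" ", 1)[0]
        elif media == "audio" and line.startswith("a=msid:"):
            # Keep the track id, replace only the stream id.
            parts = line[len("a=msid:"):].split(" ", 1)
            track_id = parts[1] if len(parts) > 1 else ""
            line = f"a=msid:{new_stream_id} {track_id}".rstrip()
        out.append(line)
    # SDP lines are terminated by CRLF, including the last one.
    return "\r\n".join(out) + "\r\n"
```

The rewritten string would replace the SDP that is signalled to the remote peer; video keeps its original stream id, so only the grouping changes.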

Philipp Hancke

Jul 28, 2022, 2:07:19 AM

to discuss...@googlegroups.com

You should check that the RTP timestamps of the audio and video streams progress at the expected rate (48 kHz for Opus audio, 90 kHz for video), and then check the NTP timestamps of the sender reports, which are used to map the RTP timestamps to a time origin.
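The check suggested above can be sketched in Python. Each RTCP sender report carries a 64-bit NTP timestamp (32 bits of seconds, 32 bits of fraction) and the RTP timestamp corresponding to the same instant, so two reports from one stream give an estimate of its RTP clock rate, and a single report lets you map any RTP timestamp onto the sender's NTP timeline. The `SenderReport` class and helper names are hypothetical; extracting the fields from a capture or log is left out.

```python
from dataclasses import dataclass

NTP_FRACTION_UNITS = 2**32  # lower 32 bits of the NTP timestamp
RTP_WRAP = 2**32            # RTP timestamps are 32-bit and wrap around


@dataclass
class SenderReport:
    ntp_seconds: int    # upper 32 bits of the SR NTP timestamp
    ntp_fraction: int   # lower 32 bits (fraction of a second)
    rtp_timestamp: int  # RTP timestamp for the same instant

    def ntp_time(self) -> float:
        return self.ntp_seconds + self.ntp_fraction / NTP_FRACTION_UNITS


def rtp_delta(newer: int, older: int) -> int:
    """Signed difference of two 32-bit RTP timestamps, handling wraparound.

    Assumes the true difference is less than half the 32-bit range.
    """
    d = (newer - older) % RTP_WRAP
    if d >= RTP_WRAP // 2:
        d -= RTP_WRAP
    return d


def estimated_clock_rate(sr_old: SenderReport, sr_new: SenderReport) -> float:
    """RTP ticks per second of NTP time between two reports of one stream."""
    elapsed = sr_new.ntp_time() - sr_old.ntp_time()
    if elapsed <= 0:
        raise ValueError("sender reports must have increasing NTP times")
    return rtp_delta(sr_new.rtp_timestamp, sr_old.rtp_timestamp) / elapsed


def rtp_to_ntp(rtp_ts: int, sr: SenderReport, clock_rate: float) -> float:
    """Map an RTP timestamp to sender NTP seconds using one sender report."""
    return sr.ntp_time() + rtp_delta(rtp_ts, sr.rtp_timestamp) / clock_rate
```

For Opus the estimate should come out close to 48000 and for video close to 90000. Mapping a roughly simultaneous audio frame and video frame through their own reports should also give nearly the same NTP time; a large, persistent gap would point at the sender's capture clocks or SR generation, which is one way to test the clock hypothesis from the first message.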

Putting extra logging into https://source.chromium.org/chromium/chromium/src/+/main:third_party/webrtc/video/stream_synchronization.cc might help too.

--

---

You received this message because you are subscribed to the Google Groups "discuss-webrtc" group.

To unsubscribe from this group and stop receiving emails from it, send an email to discuss-webrt...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/discuss-webrtc/1b0ec53b-f80f-4bd0-bb44-3b6fe629568an%40googlegroups.com.

Ke Wu

Jul 28, 2022, 4:11:37 AM

to discuss-webrtc

Hi Philipp,

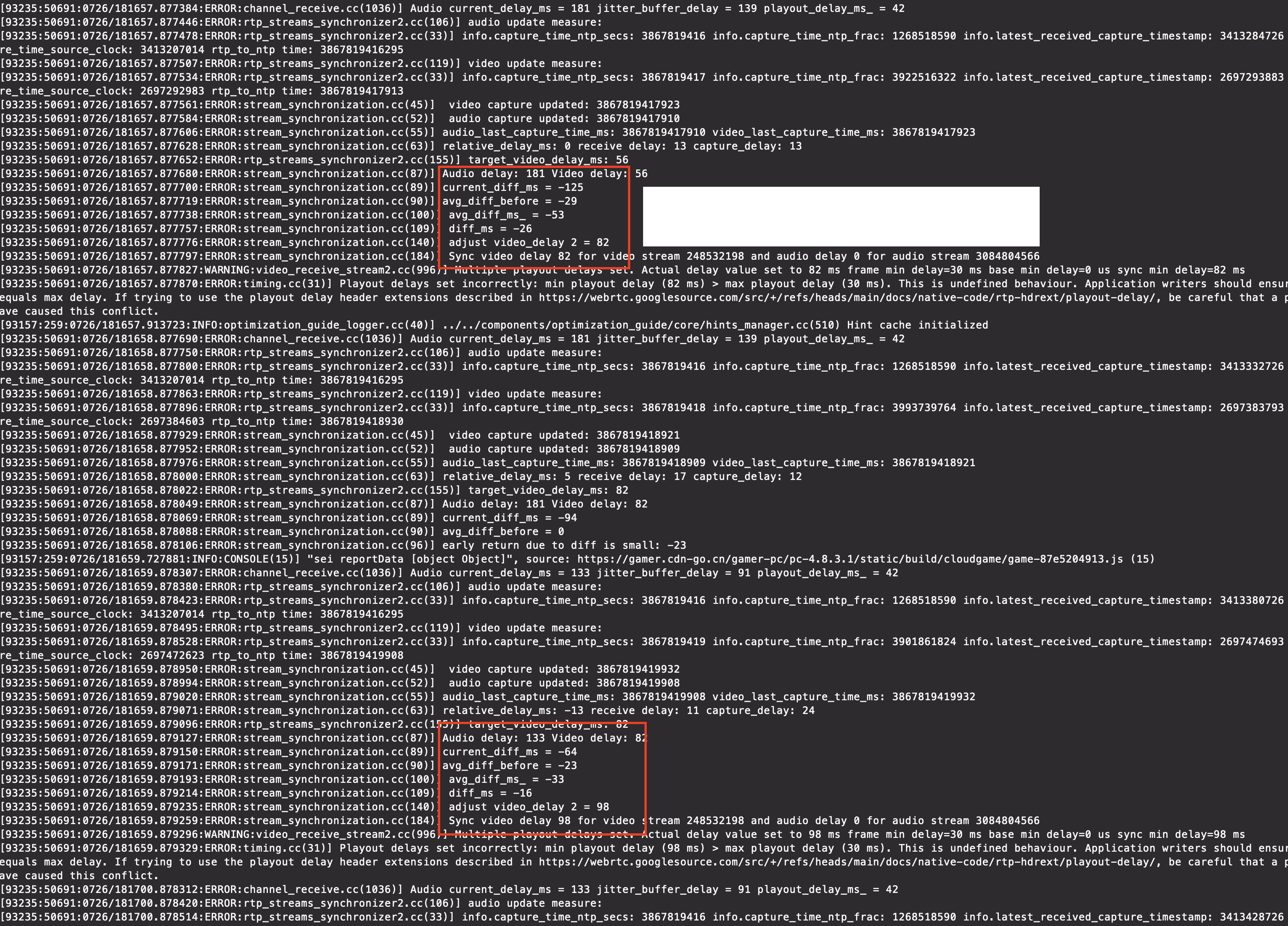

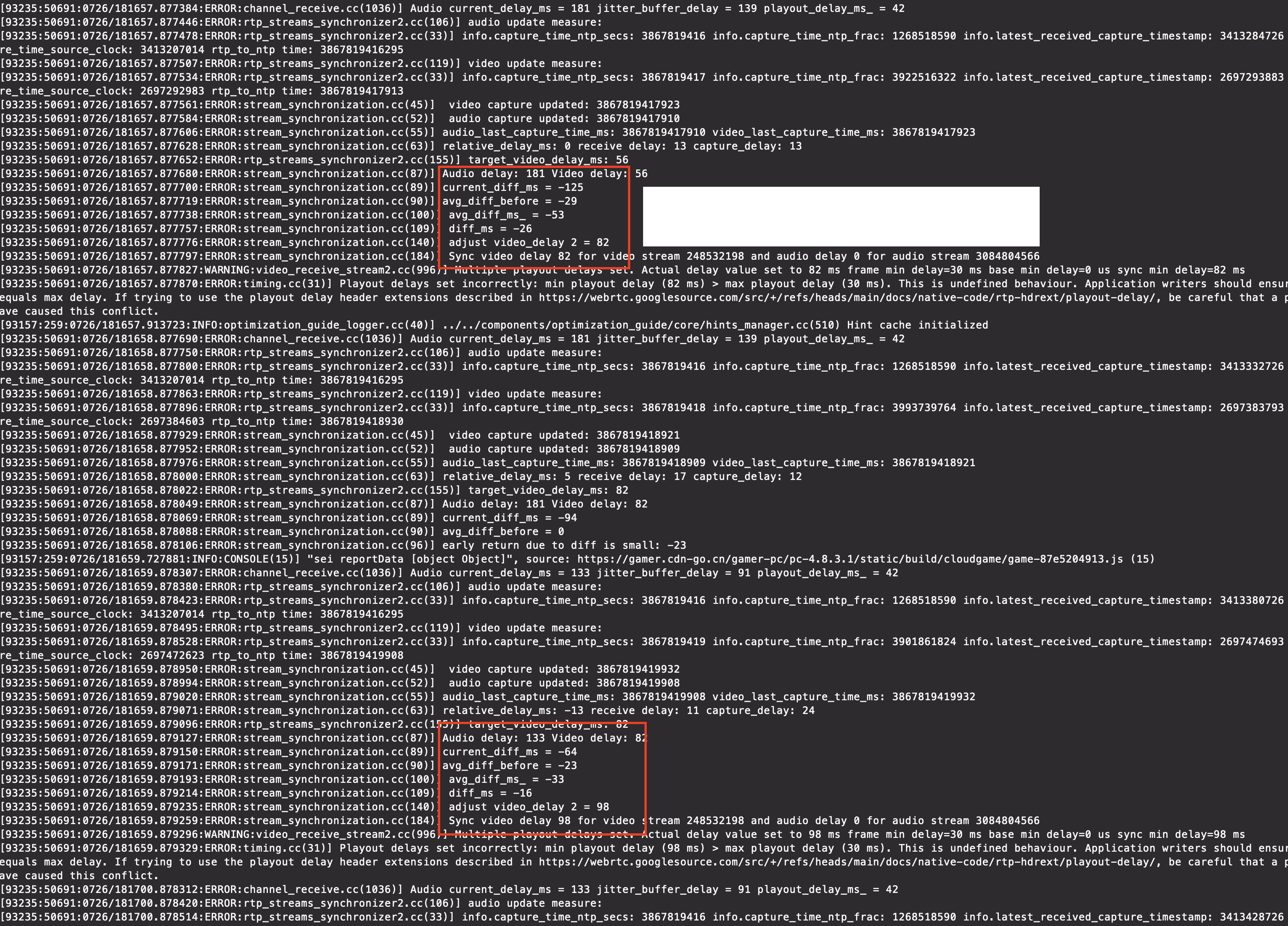

Thanks for the advice, I will take a look at those timestamps. I do have logs for the relative delay calculation, but I'm not sure whether the values are valid or reasonable. It seems the majority of the "extra delay" comes from the delta between the audio and video delays, as shown in the screenshots. Could you take a look, or suggest which key stats would indicate an error or unexpected behaviour?
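The quantities in such a log can be reproduced by hand. A simplified Python sketch, assuming capture times have already been mapped to the sender's NTP clock through the sender reports and receive times come from the local clock: the relative delay compares how far apart the latest audio and video packets arrived with how far apart they were captured, and adding the difference in current playout delays gives the offset a synchronizer would try to remove. As far as I understand, this follows the general idea in stream_synchronization.cc, but it leaves out that file's filtering and step limits; the function names are hypothetical.

```python
def relative_delay_ms(video_receive_ms: float, audio_receive_ms: float,
                      video_capture_ms: float, audio_capture_ms: float) -> float:
    """How much later video arrived, relative to audio, than capture implies.

    Receive times are local wall-clock times; capture times are sender NTP
    times derived from RTP timestamps via the sender reports.
    """
    return (video_receive_ms - audio_receive_ms) - (video_capture_ms - audio_capture_ms)


def av_offset_ms(relative_ms: float, video_delay_ms: float, audio_delay_ms: float) -> float:
    """Positive: video would play out later than its matching audio.

    A synchronizer would respond by delaying audio (positive offset) or
    video (negative offset); this sketch only reports the offset.
    """
    return relative_ms + video_delay_ms - audio_delay_ms
```

For example, audio and video both captured at 1000 ms, audio received at 1050 ms and video at 1120 ms, with 20 ms of playout delay on each, give a relative delay and an offset of 70 ms, so audio would need about 70 ms of extra delay. A negative offset works the other way and is where extra video delay would come from. If the capture times are skewed by bad SR timestamps, the computed offset and the delay added to compensate would be wrong too.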

Philipp Hancke

Jul 28, 2022, 5:39:47 AM

to discuss...@googlegroups.com

The "multiple playout delays set" warning is something you might want to check if you use the playout delay header extension or the corresponding property on the RTCRtpReceiver.

In general, it is a question of what you expect as playout delay. If it stays below 100 ms and goes up and down a bit, that is expected.

Disabling sync by not associating audio and video with the same MediaStream, as you found out, is always an option too.

Ke Wu

Jul 28, 2022, 10:29:32 AM

to discuss-webrtc

I don't think I ever use the playout delay property on the RTCRtpReceiver or the playout delay header extension (the extension does exist in the SDP, but the incoming RTP packets don't include it).

I would say that in a cloud gaming scenario the delay should be as low as possible, meaning no frames should be held in the buffer (frames received minus frames decoded should be 0 or 1), and ideally the jitter buffer only reflects the network delay of incoming RTP packets.
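One way to check that expectation from the receiver side, sketched in Python: take two snapshots of the video `inbound-rtp` entry from `RTCPeerConnection.getStats()` (copied here as plain dicts) and compute the average jitter buffer delay per emitted frame over the interval, plus the gap between received and decoded frames. `jitterBufferDelay` (cumulative seconds), `jitterBufferEmittedCount`, `framesReceived` and `framesDecoded` are standard inbound-rtp stats fields; the helper names are hypothetical.

```python
from typing import Optional


def avg_jitter_buffer_delay_ms(prev: dict, curr: dict) -> Optional[float]:
    """Average jitter buffer delay per emitted frame between two snapshots."""
    emitted = curr["jitterBufferEmittedCount"] - prev["jitterBufferEmittedCount"]
    if emitted <= 0:
        return None  # no frames left the jitter buffer in this interval
    # jitterBufferDelay is a cumulative sum, in seconds, over all emitted frames.
    return 1000.0 * (curr["jitterBufferDelay"] - prev["jitterBufferDelay"]) / emitted


def undecoded_backlog(curr: dict) -> int:
    """Frames received but not decoded (approximate: drops also widen this gap)."""
    return curr["framesReceived"] - curr["framesDecoded"]
```

Comparing these values with audio and video grouped in one MediaStream and with them split would show how much of the delay the synchronizer contributes.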

Is that a correct expectation, though? On one hand cloud gaming is highly sensitive to latency, but on the other hand a/v sync is also crucial. Is this essentially a trade-off I have to make? I noticed that Google Stadia doesn't send any SR packets either, so the stream synchronizer is never triggered there.

Kevin Wang

Jul 28, 2022, 12:35:42 PM

to discuss...@googlegroups.com

Does libwebrtc accept the playout delay header extension? This is the first time I've heard of it, and it looks pretty useful. We are running into a similar issue where a knob to tune the latency vs sync tradeoff would be nice :)
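For reference, the payload of the playout delay header extension (URI `http://www.webrtc.org/experiments/rtp-hdrext/playout-delay`) is three bytes: a 12-bit minimum delay followed by a 12-bit maximum delay, both in units of 10 ms. A Python sketch of packing and unpacking it; the helper names and the rounding choice (minimum rounded down, maximum rounded up) are assumptions of this sketch, not libwebrtc's code.

```python
GRANULARITY_MS = 10
MAX_UNITS = 0xFFF  # 12-bit field, so at most 4095 * 10 ms = 40950 ms


def encode_playout_delay(min_ms: int, max_ms: int) -> bytes:
    """Pack min/max playout delay (ms) into the 3-byte extension payload."""
    if not 0 <= min_ms <= max_ms <= MAX_UNITS * GRANULARITY_MS:
        raise ValueError("need 0 <= min_ms <= max_ms <= 40950")
    lo = min_ms // GRANULARITY_MS        # round the minimum down
    hi = -(-max_ms // GRANULARITY_MS)    # round the maximum up
    # 12 bits of min, then 12 bits of max, big-endian across 3 bytes.
    return bytes([lo >> 4, ((lo & 0x0F) << 4) | (hi >> 8), hi & 0xFF])


def decode_playout_delay(data: bytes) -> tuple:
    """Unpack the 3-byte payload into (min_ms, max_ms)."""
    if len(data) != 3:
        raise ValueError("playout delay payload must be 3 bytes")
    lo = (data[0] << 4) | (data[1] >> 4)
    hi = ((data[1] & 0x0F) << 8) | data[2]
    return lo * GRANULARITY_MS, hi * GRANULARITY_MS
```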

Kevin Wang

Jul 29, 2022, 4:21:57 AM

to discuss...@googlegroups.com

While investigating the playout delay header extension, I discovered that libwebrtc also seems to include an absolute capture time header extension: https://webrtc.googlesource.com/src/+/refs/heads/main/docs/native-code/rtp-hdrext/abs-capture-time/

Would this address the A/V sync issues? We will try it on our end as well.
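According to the linked document, the absolute capture time extension carries a 64-bit NTP-format capture timestamp (unsigned Q32.32 seconds) and, optionally, a 64-bit signed Q32.32 estimated capture clock offset, giving an 8- or 16-byte payload. A Python sketch of decoding it; the function name is hypothetical, and whether Chromium's stream synchronizer consumes this extension is not something the sketch shows.

```python
import struct
from typing import Optional, Tuple

Q32 = 2**32  # scale factor for Q32.32 fixed-point values


def parse_abs_capture_time(data: bytes) -> Tuple[float, Optional[float]]:
    """Return (capture time in NTP seconds, clock offset in seconds or None)."""
    if len(data) not in (8, 16):
        raise ValueError("abs-capture-time payload must be 8 or 16 bytes")
    # Unsigned Q32.32 NTP timestamp in network byte order.
    (timestamp,) = struct.unpack("!Q", data[:8])
    offset = None
    if len(data) == 16:
        # Optional signed Q32.32 estimated capture clock offset.
        (raw_offset,) = struct.unpack("!q", data[8:])
        offset = raw_offset / Q32
    return timestamp / Q32, offset
```

If the sender stamps audio and video from one capture clock, comparing these values for roughly simultaneous frames is a direct way to check the clock question raised earlier in the thread.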