Call for participants: reconstruction of human-object interactions

Xianghui Xie

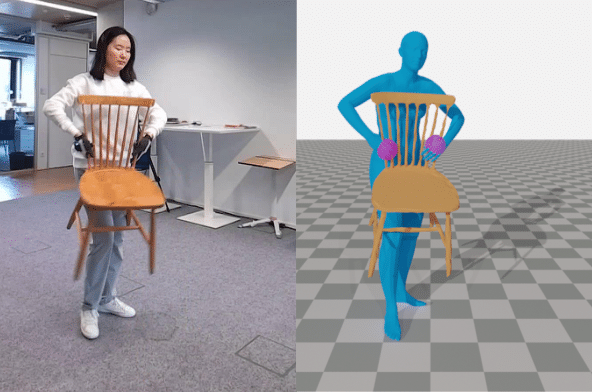

We have seen promising progress in reconstructing human body meshes and estimating 6DoF object poses from single images. However, most of these works focus on occlusion-free images. Such images are unrealistic for close human-object interaction, where humans and objects occlude each other. This occlusion makes inference more difficult and poses challenges for existing state-of-the-art methods. In this challenge, we want to examine how well existing human and object reconstruction methods work under these more realistic settings. More importantly, we want to understand how the two can benefit each other for accurate interaction reconstruction. The recently released BEHAVE dataset (CVPR'22) enables, for the first time, joint reasoning about human-object interactions in real settings.

Competition website

Based on the BEHAVE dataset, this competition is split into three tracks:

- 3D human reconstruction from monocular RGB images (competition website).

- 6DoF object pose estimation from monocular RGB images (competition website).

- Joint human and object reconstruction from monocular RGB images (competition website).

Awards

The winner of each track will be invited to give a talk at our CVPR'23 Rhobin workshop. In addition, there will be a surprise reward for the winners.