Ear Movement using BOOFCV

BrandMetis Width

Jul 16, 2021, 7:45:25 AM

to BoofCV

Hello,

We're working with a GP (doctor) to create an otoscope that can detect inner ear movement (ear rumbling).

We have shown this can work using basic movement detection, but are now trying to make it more robust.

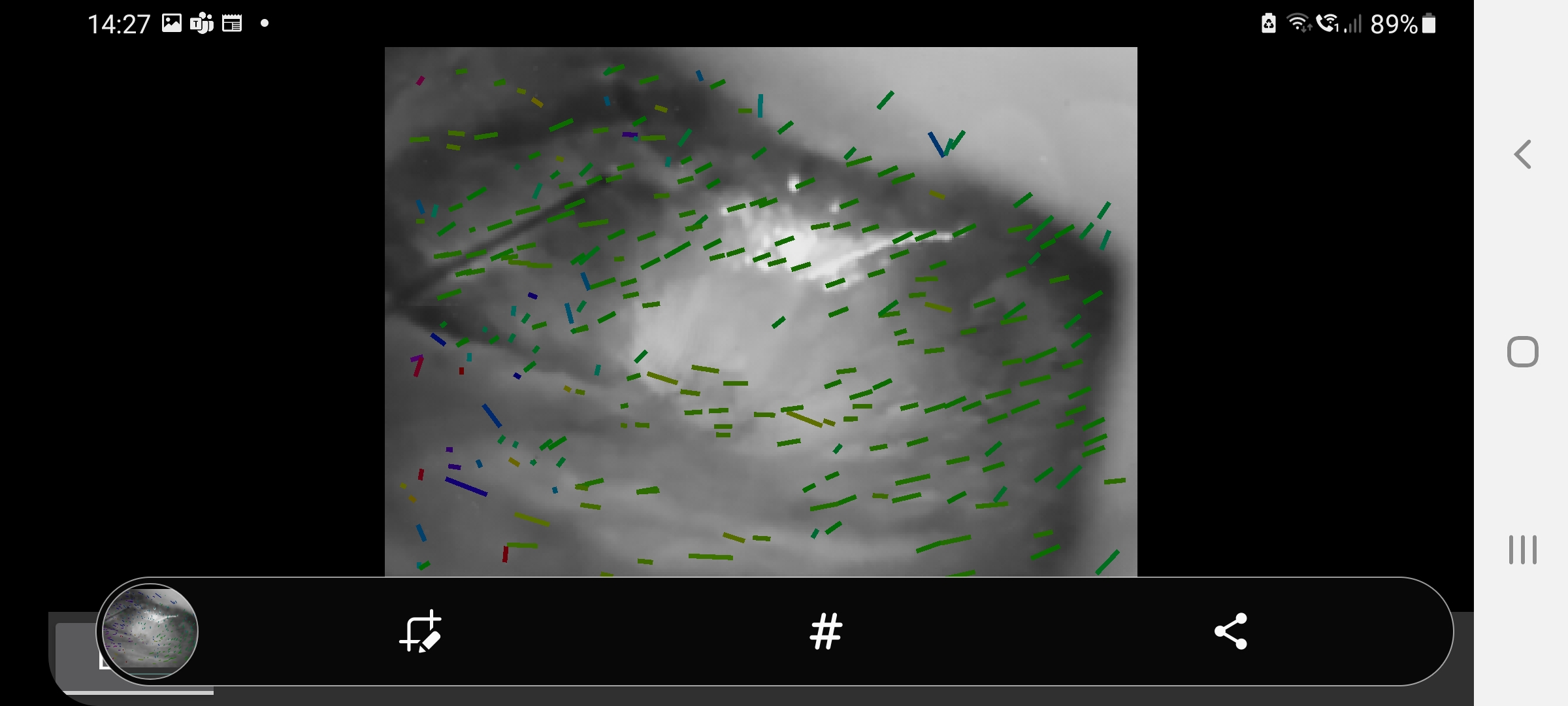

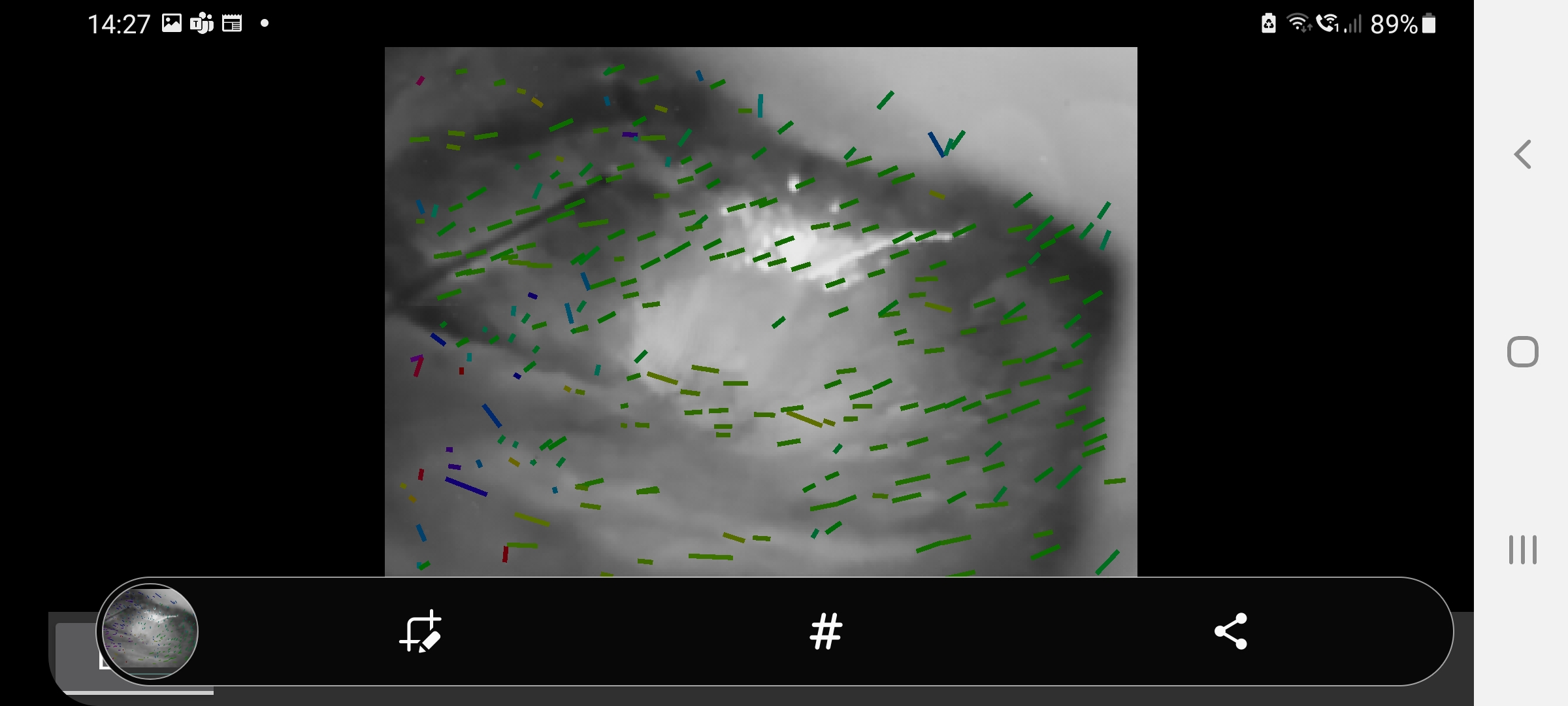

We're looking to use BoofCV's KLT tracker and then apply motion detection to its output, while using USB OTG to stay compatible with COTS cameras and otoscopes.

This seems to let us distinguish whole-image movement (camera movement) from clustered movement of the KLT tracks, which gives us a good indication of the probability of tympani motion.

Could anyone suggest methods for this last step, i.e. looking for clustered KLT changes in an image? The clustered movement is what appeals to us, since there are lots of in-ear variables, e.g. light, cleanliness and narrowness of the aperture.
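To make the question concrete, here is a rough sketch of the kind of approach we have in mind. It is illustrative only and deliberately uses no BoofCV calls: it assumes the tracker has already produced previous and current positions for each feature. The `Track` class, `localMotionGrid`, the grid cell size and the pixel threshold are all hypothetical names and values. It also assumes camera movement is roughly a pure image translation, estimated as the median track displacement. Tracks that deviate from that median are binned into grid cells, so a cell with several deviating tracks suggests clustered local motion.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class ClusteredMotion {

    /** Hypothetical container: one feature's position in the previous and current frame. */
    static final class Track {
        final double prevX, prevY, currX, currY;

        Track(double prevX, double prevY, double currX, double currY) {
            this.prevX = prevX;
            this.prevY = prevY;
            this.currX = currX;
            this.currY = currY;
        }
    }

    /** Median of the values; the input array is not modified. */
    static double median(double[] values) {
        double[] sorted = values.clone();
        Arrays.sort(sorted);
        int n = sorted.length;
        return (n % 2 == 1) ? sorted[n / 2] : 0.5 * (sorted[n / 2 - 1] + sorted[n / 2]);
    }

    /**
     * Counts, per grid cell, the tracks whose displacement differs from the
     * median (assumed global/camera) displacement by more than thresholdPx.
     * The returned array is indexed [row][col].
     */
    static int[][] localMotionGrid(List<Track> tracks, int width, int height,
                                   int cellSize, double thresholdPx) {
        int cols = (width + cellSize - 1) / cellSize;
        int rows = (height + cellSize - 1) / cellSize;
        int[][] counts = new int[rows][cols];
        if (tracks.isEmpty()) {
            return counts;
        }

        // Per-track displacement between the two frames.
        double[] dx = new double[tracks.size()];
        double[] dy = new double[tracks.size()];
        for (int i = 0; i < tracks.size(); i++) {
            Track t = tracks.get(i);
            dx[i] = t.currX - t.prevX;
            dy[i] = t.currY - t.prevY;
        }

        // Robust estimate of whole-image motion (assumption: pure translation).
        double globalDx = median(dx);
        double globalDy = median(dy);

        for (int i = 0; i < tracks.size(); i++) {
            double residual = Math.hypot(dx[i] - globalDx, dy[i] - globalDy);
            if (residual <= thresholdPx) {
                continue; // moves with the camera, ignore
            }
            Track t = tracks.get(i);
            int col = Math.min(cols - 1, Math.max(0, (int) (t.prevX / cellSize)));
            int row = Math.min(rows - 1, Math.max(0, (int) (t.prevY / cellSize)));
            counts[row][col]++;
        }
        return counts;
    }

    public static void main(String[] args) {
        List<Track> tracks = new ArrayList<>();
        // Synthetic background: every feature shifts by (2, 1), as if the camera moved.
        for (int x = 10; x < 320; x += 40) {
            for (int y = 10; y < 240; y += 40) {
                tracks.add(new Track(x, y, x + 2, y + 1));
            }
        }
        // A small patch that moves differently from the background.
        tracks.add(new Track(100, 100, 108, 101));
        tracks.add(new Track(105, 110, 113, 111));
        tracks.add(new Track(110, 105, 118, 106));

        int[][] grid = localMotionGrid(tracks, 320, 240, 80, 3.0);
        System.out.println(Arrays.deepToString(grid));
    }
}
```

A fixed grid is the simplest possible grouping. A proper clustering step, or a fitted affine or homography model for the camera motion, might cope better with zoom or rotation, which is part of what we are asking about.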

Thanks

John

BrandMetis Width

Jul 16, 2021, 11:49:28 AM

to BoofCV

Is there any additional documentation or explanation of this feature:

Pyramid KLT Tracker?

https://javadoc.io/static/org.boofcv/boofcv/0.13/boofcv/alg/tracker/klt/PyramidKltTracker.html
