Depth mapping turns statue into a blob


Jens Andersen

May 16, 2021, 9:02:19 AM

to AliceVision

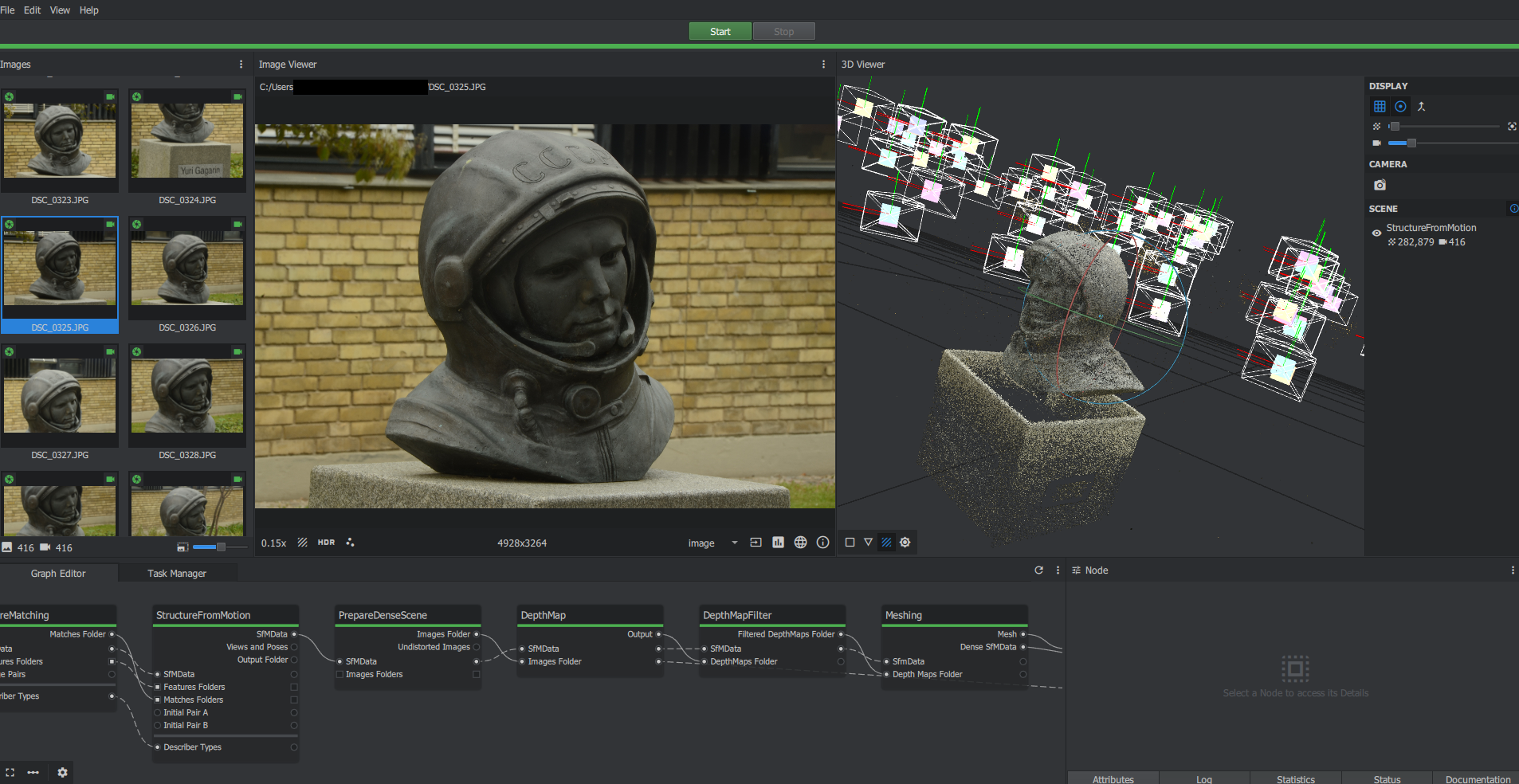

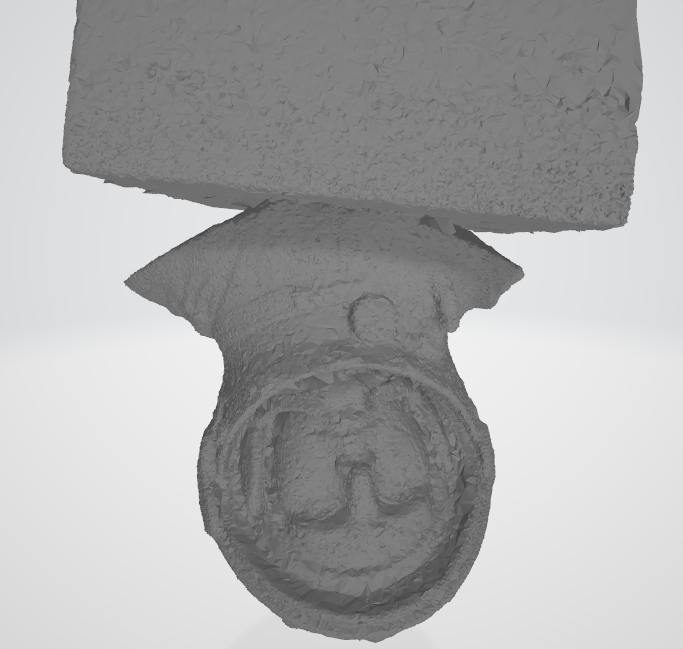

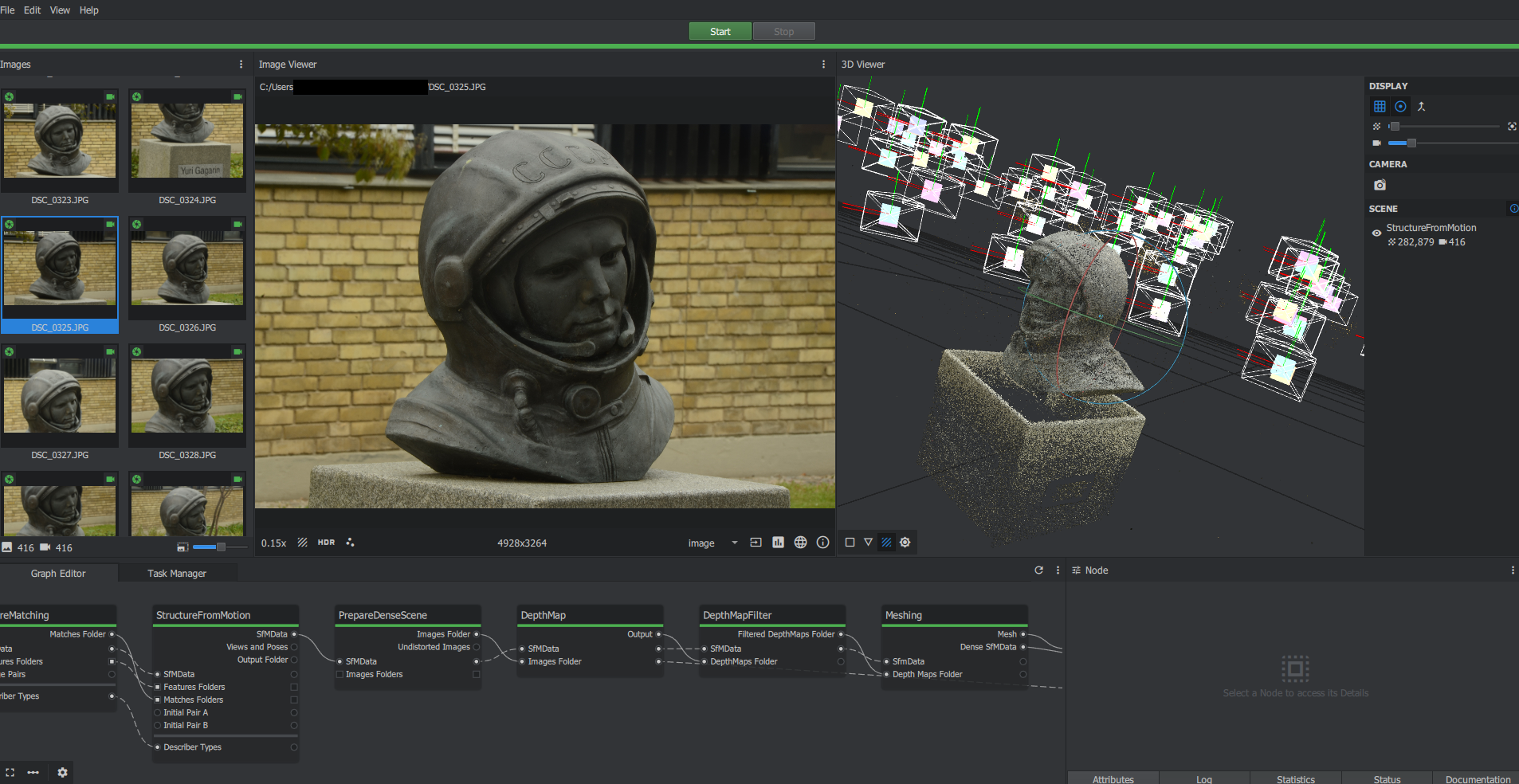

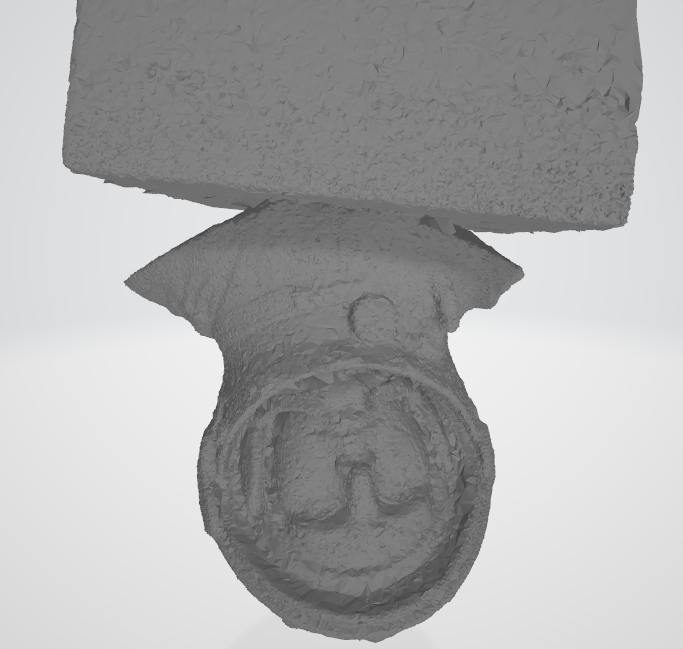

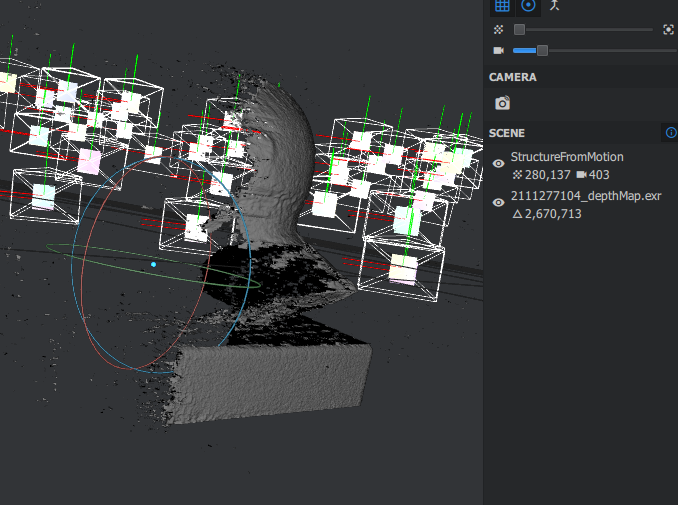

I've been trying to do a photogrammetry scan of this statue. The SfM data looks great and can be meshed directly into something passable. However, the final result after depth mapping is a largely featureless blob. Why does this happen, and what can I do to fix it?

I have tried increasing the describer density and decreasing the downscale factor, but neither helped.

Jens Andersen

May 17, 2021, 12:42:10 PM

to AliceVision

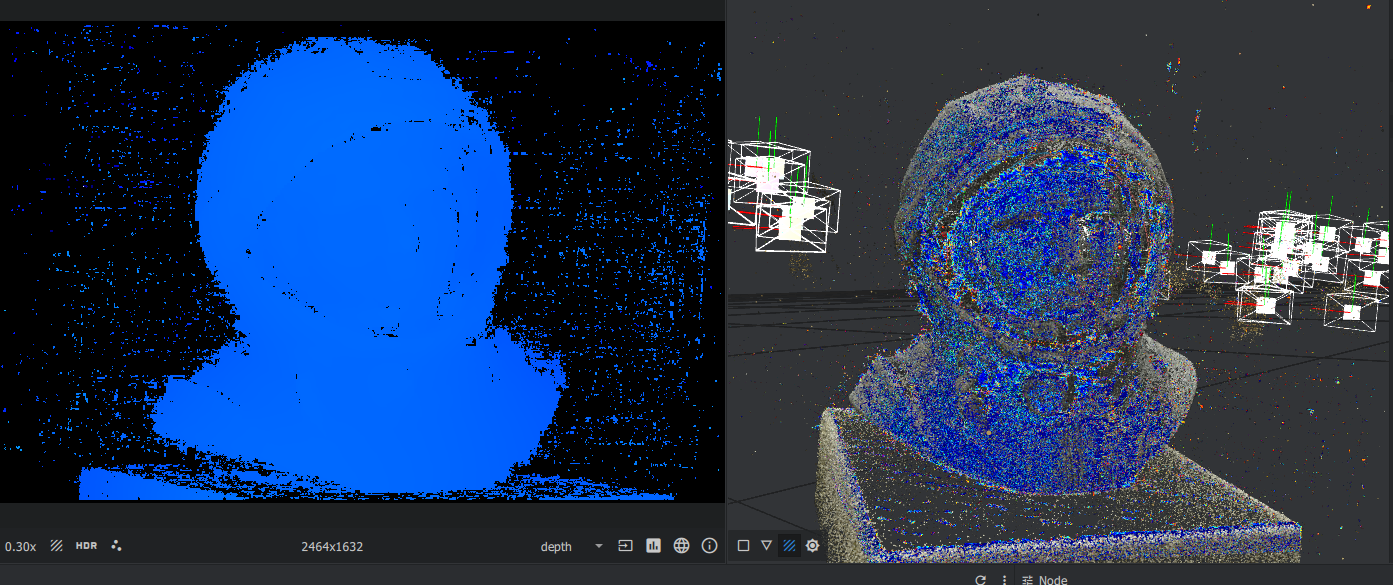

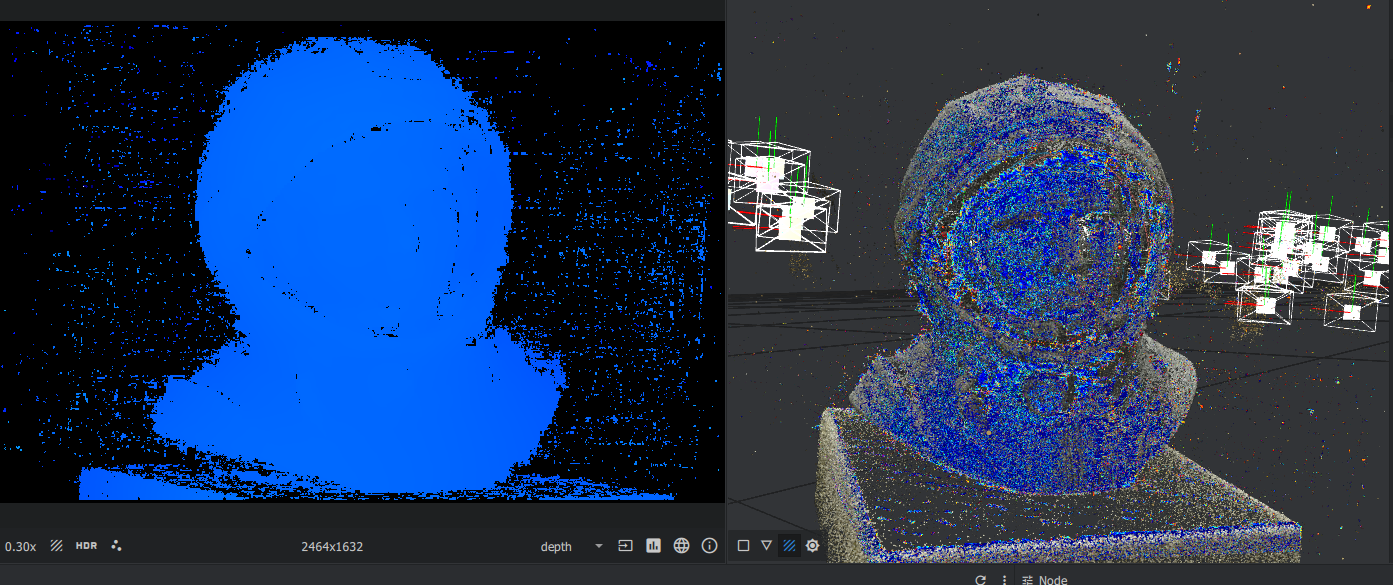

Interesting note: depth mapping seems to ignore half of the statue, which is probably causing the issues described above. Does anyone know why that might be?

Jens Andersen

May 19, 2021, 3:40:58 PM

to AliceVision

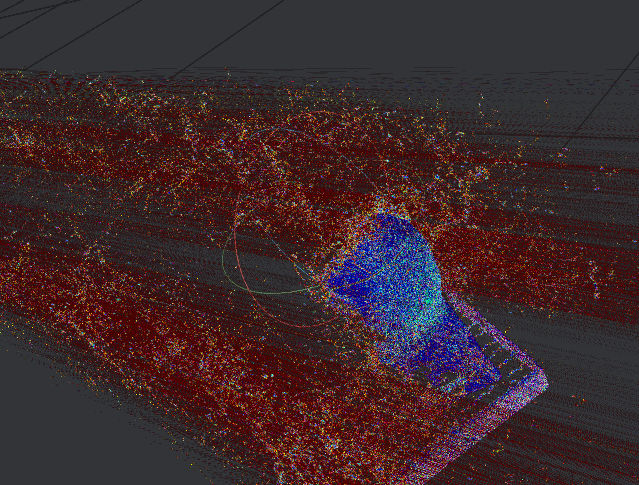

Well, a few comments informed me that the previous post (with only half the structure visible in the depth map) shows expected behavior, because the depth map shown belongs to the currently selected camera. With this in mind, I looked at other camera views and found what is shown above. I assume that this is not correct. Does anyone know why this occurs?

Ben Ruppel

May 24, 2021, 6:35:22 PM

to Jens Andersen, AliceVision

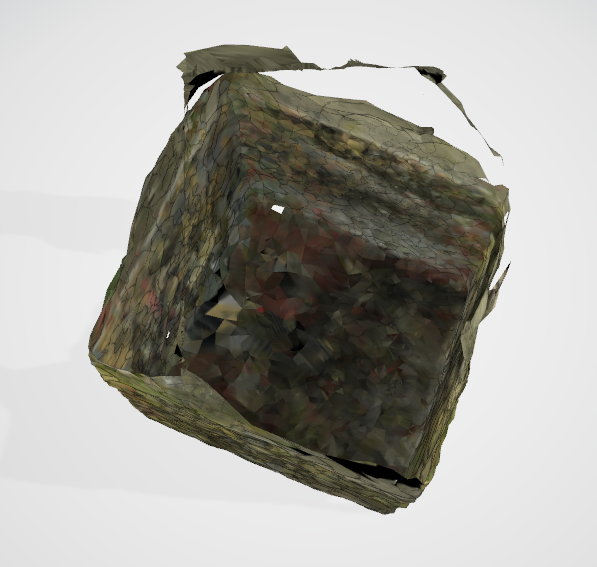

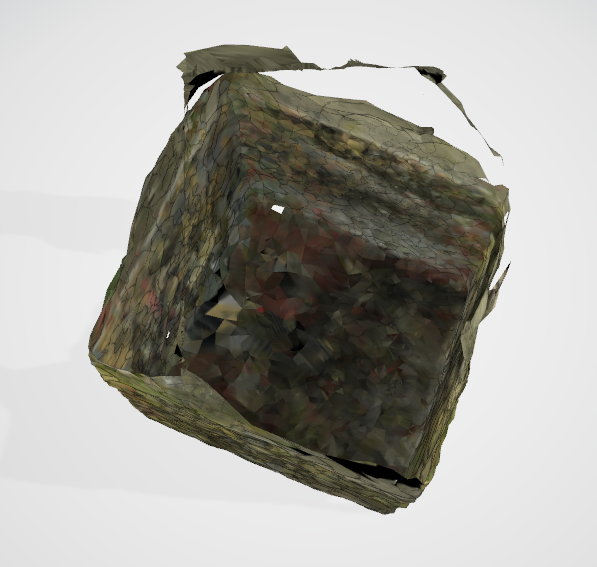

Sorry if I keep misunderstanding you. I corrected my bounding box and reran Meshing, but it still produces a box-shaped mesh that fills the whole bounding box.

If you view only the SfM point cloud, you can clearly see that enough points are found to show the shape of the model.

So, as far as I can tell, the problem lies in the Meshing node rather than any of the nodes before it. The points are there, but the Meshing node is connecting the dots incorrectly. Of course, I'm an amateur.


Jens Andersen

May 25, 2021, 5:01:39 AM

to AliceVision

Thank you for giving it a go! At least I know that it's not just my computer.

My guess is that the depth mapping results are really noisy and don't get properly filtered, which confuses the meshing step. Unfortunately I don't know how to clean them up. I tried changing some of the settings of the depth map filtering step, but I keep getting the same result. I did find this thread on Reddit, but I'm not really sure what to make of the responses.

ben.r...@gmail.com

May 31, 2021, 5:17:24 PM

to AliceVision

I'm replying to your earlier findings here. Yes, I believe the depth map view of one camera only shows data points from that single camera. I can't reproduce the red parts of your image, only the blue ones, so I think your image above is to be expected.

(For the sake of sharing and documentation)

For each image selected in the Images pane, click View Depth Map in 3D (an arrow-over-a-box icon) in the lower-right corner of the Image Viewer.

Each time this is performed, a layer is added to the 3D viewer. You can toggle the layers on and off.
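To make the per-camera behavior concrete, here is a minimal NumPy sketch of how one depth map becomes one layer of 3D points. It assumes a pinhole camera with intrinsics `K`, a world-to-camera pose `x_cam = R @ x_world + t`, depth stored as z-depth, and non-positive values meaning "no depth"; AliceVision's actual file conventions may differ, and `backproject_depth` is a hypothetical helper. Because every point comes from one camera's pixels, only surfaces facing that camera can appear, which fits the half-statue layer discussed earlier.

```python
import numpy as np

def backproject_depth(depth, K, R, t):
    """Lift a per-pixel depth map into world-space 3D points (one per valid pixel).

    Assumptions (not taken from AliceVision): pinhole intrinsics K,
    world-to-camera pose x_cam = R @ x_world + t, z-depth values,
    integer pixel coordinates (u = column, v = row), depth <= 0 = invalid.
    """
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    valid = depth > 0
    # Homogeneous pixel coordinates of the valid pixels, shape (3, N).
    pix = np.stack([u[valid], v[valid], np.ones(valid.sum())])
    # Normalized camera rays scaled by z-depth give camera-space points.
    cam_pts = (np.linalg.inv(K) @ pix) * depth[valid]
    # Invert the world-to-camera transform: x_world = R^T (x_cam - t).
    t = np.asarray(t, dtype=float).reshape(3, 1)
    world_pts = R.T @ (cam_pts - t)
    return world_pts.T  # shape (N, 3)
```

Calling this once per selected image and stacking the results is roughly what toggling several depth map layers on in the 3D viewer shows.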

My understanding is that the subsequent processes in the workflow use the overlap between the points from these depth maps, and blend or stitch them together.
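One common way to use that overlap is a multi-view consistency check: a depth sample is kept only if enough other cameras measured roughly the same depth where the point projects into their images. The sketch below illustrates that general idea under the same pinhole assumptions as before; `project`, `is_consistent`, `min_agree`, and `rel_tol` are hypothetical names, and this is not AliceVision's DepthMapFilter code or its parameters.

```python
import numpy as np

def project(point, K, R, t):
    """Project a world point into a camera; returns (u, v, z), z = camera-space depth."""
    cam = R @ point + t
    uvw = K @ cam
    return uvw[0] / uvw[2], uvw[1] / uvw[2], cam[2]

def is_consistent(point, views, min_agree=2, rel_tol=0.01):
    """Keep a 3D point only if at least min_agree views saw a matching depth.

    views: list of (depth_map, K, R, t), with pose x_cam = R @ x_world + t.
    A view agrees when its own depth at the projected pixel is within
    rel_tol (relative) of the point's depth in that camera.
    """
    agree = 0
    for depth, K, R, t in views:
        u, v, z = project(point, K, R, t)
        if z <= 0:
            continue  # point is behind this camera
        col, row = int(round(u)), int(round(v))
        h, w = depth.shape
        if not (0 <= row < h and 0 <= col < w):
            continue  # point projects outside this image
        d = depth[row, col]
        if d > 0 and abs(d - z) <= rel_tol * z:
            agree += 1
    return agree >= min_agree
```

If the depth maps are as noisy as suspected, few samples pass a test like this, and the fused result gets sparse or holey rather than detailed.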

To me, the SfM data points look good, and the depth map points look good. What's left is depth map filtering and meshing. There are several options that can be fiddled with for these nodes, but I'd like to find some documentation. I'm trying to follow the source code...

The DepthMapFilter node stores its results in a folder shown in the node properties. That folder is full of files I don't know how to interpret.

The next node, Meshing, uses those files along with the SfM data.
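As a first step toward interpreting that folder, the sketch below prints, for each depth map, the fraction of pixels that still hold a valid depth and their depth range. It assumes the files are EXR images named like `<viewId>_depthMap.exr`, that non-positive or non-finite values mean "no depth", and that OpenCV was built with OpenEXR support; check these assumptions against your own output folder. `load_exr`, `summarize_depth`, and `summarize_folder` are hypothetical helpers.

```python
import os
import numpy as np

def load_exr(path):
    """Read a single-channel EXR depth map (assumes OpenCV with OpenEXR support)."""
    # This variable only takes effect if cv2 has not been imported yet.
    os.environ.setdefault("OPENCV_IO_ENABLE_OPENEXR", "1")
    import cv2
    img = cv2.imread(path, cv2.IMREAD_UNCHANGED)
    if img is None:
        raise IOError(f"could not read {path}")
    return img if img.ndim == 2 else img[..., 0]

def summarize_depth(depth):
    """Fraction of valid (finite, positive) pixels and their depth range."""
    valid = np.isfinite(depth) & (depth > 0)
    if not valid.any():
        return {"valid_fraction": 0.0, "min": None, "max": None}
    return {
        "valid_fraction": float(valid.mean()),
        "min": float(depth[valid].min()),
        "max": float(depth[valid].max()),
    }

def summarize_folder(folder):
    """Print a summary line for every file ending in _depthMap.exr (assumed naming)."""
    for name in sorted(os.listdir(folder)):
        if name.endswith("_depthMap.exr"):
            print(name, summarize_depth(load_exr(os.path.join(folder, name))))
```

Comparing the same view before and after filtering would show how much the filter removes; a filtered map that is nearly empty would leave Meshing very little to work with.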

Right now my gut says that the meshing algorithm is having trouble, possibly when it assigns weights, and is smoothing everything out into a brick.
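For what it's worth, here is one guess at how a brick could come out, written as a toy free-space carving example. It is not AliceVision's Meshing algorithm (which, as I understand it, builds a Delaunay tetrahedralization and labels its cells with a graph cut); it only illustrates the general idea of visibility weights. Each camera ray votes "empty" for the voxels of a bounding-box grid that it crosses before reaching its surface point, and any voxel with zero votes stays solid. `voxel_index`, `carve`, and `steps_per_voxel` are hypothetical names.

```python
import numpy as np

def voxel_index(p, origin, voxel_size):
    """Integer (i, j, k) index of the voxel containing world point p."""
    return tuple(int(i) for i in np.floor((p - origin) / voxel_size))

def carve(grid_shape, voxel_size, origin, observations, steps_per_voxel=2):
    """Count free-space votes per voxel of a bounding-box grid.

    observations: (camera_center, surface_point) pairs in world coordinates.
    Each voxel a ray crosses before its surface voxel gets one "empty" vote
    per ray. Traversal is approximated by dense sampling, not an exact
    voxel walk, so thin corner crossings can be missed.
    """
    votes = np.zeros(grid_shape, dtype=int)
    origin = np.asarray(origin, dtype=float)
    for cam, pt in observations:
        cam, pt = np.asarray(cam, dtype=float), np.asarray(pt, dtype=float)
        length = np.linalg.norm(pt - cam)
        if length == 0:
            continue
        direction = (pt - cam) / length
        surface = voxel_index(pt, origin, voxel_size)
        crossed = set()
        for d in np.arange(0.0, length, voxel_size / steps_per_voxel):
            idx = voxel_index(cam + d * direction, origin, voxel_size)
            inside = all(0 <= i < n for i, n in zip(idx, grid_shape))
            if inside and idx != surface:
                crossed.add(idx)
        for idx in crossed:
            votes[idx] += 1
    return votes

# One ray along x: voxels 0-2 are carved, the surface voxel 3 and voxel 4 behind it are not.
votes = carve((5, 1, 1), 1.0, (0, 0, 0), [((-2, 0.5, 0.5), (3.5, 0.5, 0.5))])
solid = votes == 0
```

With no usable observations at all, every voxel keeps zero votes and the "surface" is simply the bounding box, which looks a lot like the brick described above. Sparse or inconsistent depth maps push a volumetric method toward that same outcome.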
