High CPU usage, logs contain [Hikari Housekeeping Timer]

655 views

Skip to first unread message

armen donigian

Nov 7, 2014, 2:27:53 PM11/7/14

to activate-p...@googlegroups.com

App: Spray servlet using activate framework to interact with DB.

Threads available = 19, inUse =1, total=20.

2014 11 07 00:06:10.393 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:06:10.394 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - After pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:06:40.380 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:06:40.380 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - After pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:07:10.376 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:07:10.376 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - After pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:07:40.376 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:07:40.377 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - After pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:08:10.376 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:08:10.376 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - After pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)2014 11 07 00:08:40.387 [Hikari Housekeeping Timer] DEBUG com.zaxxer.hikari.pool.HikariPool - Before pool cleanup Pool stats HikariPool-0 (total=20, inUse=1, avail=19, waiting=0)Curious to see if others have had this issue or found a resolution.

Flavio W. Brasil

Nov 7, 2014, 4:20:49 PM11/7/14

to armen donigian, activate-p...@googlegroups.com

Hi Armen,

I couldn’t figure out what is happening. Some questions to help the analysis:

1. Which is the database?

2. Are you using non-blocking async?

3. Are these hikari messages shown only during the issue?

4. What is the resolution? Restart the application or wait the application recover by itself?

5. Is is it possible to obtain a thread dump of the app during the issue?

6. Is the application totally irresponsive during the issue?

7. Is the issue correlated with a load pattern? For instance, does it happen during the peak usage?

8. How many application instances do you have?

9. Are you using optimistic offline locking?

Sorry for so many questions. :)

Cheers,

--

Flavio W. Brasil

--

You received this message because you are subscribed to the Google Groups "Activate Persistence Framework" group.

To unsubscribe from this group and stop receiving emails from it, send an email to activate-persist...@googlegroups.com.

For more options, visit https://groups.google.com/d/optout.

armen donigian

Nov 7, 2014, 5:38:33 PM11/7/14

to activate-p...@googlegroups.com, doni...@gmail.com

1. DB is an Amazon RDS postgres instance.

9. How would I know whether we are using optimistic offline locking.

2. What's the best of finding out whether we are using non-blocking async? If it's wrapped in a Future? Not familiar with Activate's API.

3. The hikari messages are show up throughout the logs but in fewer numbers. So we see 3 or 4 log records during normal operation, but when the issue occurs it's the only text that fills up our logs.

4. Restarting the application (Tomcat) will fix the issue. We've waited 10 mins after problem occurs but didn't self heal.

5. Unfortunately, I don't see a thread dump of the app during the issue in any logs.

6. App is totally unresponsive during this state.

7. It doesn't seem to be correlated with load, as we've turned those scripts off to debug this issue in further.

8. At any given point, Amazon ElasticBeanstalk has 4 - 6 instances running.

9. How would I know whether we are using optimistic offline locking.

brett.wo...@gmail.com

Nov 7, 2014, 9:28:07 PM11/7/14

to activate-p...@googlegroups.com, doni...@gmail.com

The housekeeping thread is a ScheduledExecutor that runs every 30 seconds. When debug logging is enabled, you should see two logs every 30 seconds (a "before" cleanup and "after" cleanup). It seems more likely to me that the thread consuming 100% CPU is the application thread holding the one "in-use" connection. Enabling leak detection may identify the thread.

armen donigian

Nov 8, 2014, 2:10:28 AM11/8/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

How would you enable leak detection?

What would be causing the thread to run with high CPU? Long running query (not likely) ? Networking error while query is running?

Can you elaborate?

brett.wo...@gmail.com

Nov 8, 2014, 2:14:42 AM11/8/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

In HikariCP you set leakDetectionThreshold, for example, to 30000 (milliseconds). You will get a log for any connection out of the pool for more than 30 seconds. I don't know anything about your application, so its too early to speculate on what that thread might be doing. But finding out if it _is_ that thread, seems important.

brett.wo...@gmail.com

Nov 10, 2014, 7:59:54 PM11/10/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

Threads blocked in Unsafe.park() do not consume CPU. Are you sure it is the Java process consuming 100% CPU?

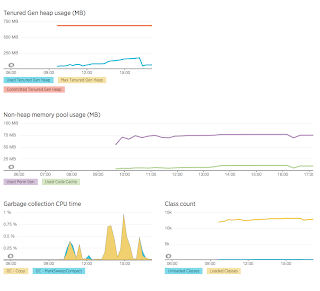

Can you get monitor the JVM memory usage and GC behavior?

brett.wo...@gmail.com

Nov 10, 2014, 8:31:08 PM11/10/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

Armen, is that a snapshot during high-CPU conditions?

armen donigian

Nov 10, 2014, 8:35:51 PM11/10/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

yes.

armen d

Nov 11, 2014, 2:51:35 PM11/11/14

to activate-p...@googlegroups.com, doni...@gmail.com, brett.wo...@gmail.com

Could the fact that we are using future's which call functions that use activate's transactional (not async transactions) be causing high cpu usage?

Flavio W. Brasil

Nov 11, 2014, 5:11:46 PM11/11/14

to armen d, activate-p...@googlegroups.com, brett.wo...@gmail.com

Hi Armen,

Which is the thread pool type that you are using? For instance, it isn’t good to use a forkjoinpool (that is the scala default) with blocking operations (not async transactions).

If that is the case, I recommend to use the cachedthreadpool.

Best,

--

Flavio W. Brasil

Reply all

Reply to author

Forward

0 new messages