Help with RavenDB Query and Synonym Analyzer

Mathew Bonasera

Hello All,

I need some help because I'm a bit stumped.

I need a synonym analyzer for text searches: if I have a synonym entry that links "plane" and "aircraft", then a search for "plane" should also return any document containing the word "aircraft".
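A minimal Python sketch of the desired behavior (illustration only, not Lucene or RavenDB code): at index time each word is stored together with the other members of its synonym group, so a plain lookup for "plane" finds a document that only says "aircraft". `SYNONYM_GROUPS`, `expand`, `index_terms` and `search` are hypothetical names.

```python
# Illustration only: hypothetical synonym data and helpers, not a Lucene API.
SYNONYM_GROUPS = [{"plane", "aircraft"}]

def expand(word):
    """Return the word plus every synonym in its group (if it has one)."""
    word = word.lower()
    for group in SYNONYM_GROUPS:
        if word in group:
            return set(group)
    return {word}

def index_terms(text):
    """Index-time expansion: every word contributes itself and its synonyms."""
    terms = set()
    for word in text.split():
        terms |= expand(word)
    return terms

def search(docs, word):
    """Return ids of documents whose index terms contain the queried word."""
    return sorted(doc_id for doc_id, text in docs.items()
                  if word.lower() in index_terms(text))

docs = {"doc1": "the aircraft landed", "doc2": "the train left"}
print(search(docs, "plane"))  # ['doc1']
```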

I found a project on the web that did that: http://www.codeproject.com/Articles/32201/Lucene-Net-Custom-Synonym-Analyzer?msg=5043417#xx5043417xx

This is exactly what I was looking for. Thank you to Andrew Smith :-)

The problem was that it was written for Lucene 2.9.4, while the current Lucene.Net NuGet version is 3.0.3.

After reading a lot of Lucene.Net's code, I would tend to agree with Ayende on this: http://ayende.com/blog/158914/lucene-net-is-ugly

I was able to port that project to 3.0.3. The internals of Lucene.Net changed quite a bit between the two versions.

As of now, I have the 3.0.3 synonym analyzer giving the exact same results as the 2.9.4 version. All is right with the world.

... not so fast ...

When I use this new analyzer in RavenDB, it does appear to work as far as building the index goes. I can see the index building correctly, and I can see the correct index tokens in the "Index Entry" view.

All of that looks correct, but queries do not return any of the synonym documents; they only return exact matches.

Here is a simple example, with results from the RavenDB Management Studio.

Here are the documents in the Raven:

| Id | Comment |
|----|---------|
| TestComments/1 | fast pass the word |
| TestComments/2 | quick passing the word |
| TestComments/3 | rapid passed the word |
| TestComments/4 | pass the words |
| TestComments/5 | pass the wording |
| TestComments/6 | pass wtf |
| TestComments/7 | wtf word |

Here is the list of Synonyms in my configuration.

<?xml version="1.0" encoding="utf-8" ?>
<synonyms>
  <group>
    <syn>fast</syn>
    <syn>quick</syn>
    <syn>rapid</syn>
  </group>
  <group>
    <syn>slow</syn>
    <syn>decrease</syn>
  </group>
  <group>
    <syn>google</syn>
    <syn>search</syn>
  </group>
  <group>
    <syn>check</syn>
    <syn>lookup</syn>
    <syn>look</syn>
  </group>
</synonyms>
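For reference, a minimal Python sketch of how a file in this format could be read into a word-to-group lookup. The `load_synonyms` helper is hypothetical and is not the loader used in the C# project; it only assumes the `<synonyms>/<group>/<syn>` layout shown above.

```python
import xml.etree.ElementTree as ET

# The synonym configuration above, as bytes so the XML declaration is accepted as-is.
SYNONYMS_XML = b"""<?xml version="1.0" encoding="utf-8" ?>
<synonyms>
  <group><syn>fast</syn><syn>quick</syn><syn>rapid</syn></group>
  <group><syn>slow</syn><syn>decrease</syn></group>
  <group><syn>google</syn><syn>search</syn></group>
  <group><syn>check</syn><syn>lookup</syn><syn>look</syn></group>
</synonyms>"""

def load_synonyms(xml_bytes):
    """Map each word to the full set of words in its <group>, itself included."""
    root = ET.fromstring(xml_bytes)
    lookup = {}
    for group in root.findall("group"):
        words = {syn.text.strip().lower() for syn in group.findall("syn")}
        for word in words:
            lookup[word] = words
    return lookup

synonyms = load_synonyms(SYNONYMS_XML)
print(sorted(synonyms["quick"]))  # ['fast', 'quick', 'rapid']
```

Words that are not in any group (such as "word") simply have no entry, so they pass through unexpanded.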

Here is the tokenization of the documents using the Synonym Analyzer:

NOTE: This output is from RavenDB, not from my test project. The results are the same either way.

| Id | Comment | _document_id |
|----|---------|--------------|
| testcomments | [ "fast", "pass", "quick", "rapid", "word" ] | testcomments/1 |
| testcomments | [ "fast", "passing", "quick", "rapid", "word" ] | testcomments/2 |
| testcomments | [ "fast", "passed", "quick", "rapid", "word" ] | testcomments/3 |
| testcomments | [ "pass", "words" ] | testcomments/4 |
| testcomments | [ "pass", "wording" ] | testcomments/5 |
| testcomments | [ "pass", "wtf" ] | testcomments/6 |
| testcomments | [ "word", "wtf" ] | testcomments/7 |

So far so good. The analyzer correctly adds synonyms to the index entries of documents containing words that have synonyms.
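The index entries above can be approximated with a small Python sketch, handy as a reference when comparing against the Lucene.Net output. It is not how the C# analyzer is implemented: it lowercases, splits on whitespace, drops "the" (assumed to be a stop word, since it is missing from the entries), and adds the whole synonym group for each remaining word. The sorted, de-duplicated list matches the layout of the entries above; it says nothing about token order or positions.

```python
SYNONYM_GROUPS = [
    {"fast", "quick", "rapid"},
    {"slow", "decrease"},
    {"google", "search"},
    {"check", "lookup", "look"},
]
# Assumption: "the" is a stop word, because it does not appear in the index entries above.
STOP_WORDS = {"the"}

def index_entry(text):
    """Approximate an index entry: unique, sorted terms including synonyms."""
    terms = set()
    for word in text.lower().split():
        if word in STOP_WORDS:
            continue
        terms.add(word)
        for group in SYNONYM_GROUPS:
            if word in group:
                terms |= group
    return sorted(terms)

comments = {
    "TestComments/1": "fast pass the word",
    "TestComments/2": "quick passing the word",
    "TestComments/3": "rapid passed the word",
    "TestComments/4": "pass the words",
    "TestComments/5": "pass the wording",
    "TestComments/6": "pass wtf",
    "TestComments/7": "wtf word",
}
for doc_id, text in comments.items():
    print(doc_id, index_entry(text))
```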

Here is where I need your help and guidance. :-/

The query for “fast” only returns the document:

| Id | Comment |
|----|---------|
| TestComments/1 | fast pass the word |

It appears to be matching only exact words, even though two other documents (TestComments/2 and TestComments/3) also have “fast” in their index entries.
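To spell out the expectation: a plain term lookup for "fast" against the index entries listed earlier should hit three documents, not one. A minimal Python sketch of that lookup as an inverted index; the `term_query` helper is hypothetical and ignores scoring, fields, and query parsing, and the data is copied from the index entries shown above.

```python
# Index entries copied from the RavenDB output above (document id -> indexed terms).
INDEX_ENTRIES = {
    "testcomments/1": ["fast", "pass", "quick", "rapid", "word"],
    "testcomments/2": ["fast", "passing", "quick", "rapid", "word"],
    "testcomments/3": ["fast", "passed", "quick", "rapid", "word"],
    "testcomments/4": ["pass", "words"],
    "testcomments/5": ["pass", "wording"],
    "testcomments/6": ["pass", "wtf"],
    "testcomments/7": ["word", "wtf"],
}

def term_query(entries, term):
    """Build postings (term -> doc ids) and return the docs containing the exact term."""
    postings = {}
    for doc_id, terms in entries.items():
        for t in terms:
            postings.setdefault(t, set()).add(doc_id)
    return sorted(postings.get(term, set()))

print(term_query(INDEX_ENTRIES, "fast"))
# ['testcomments/1', 'testcomments/2', 'testcomments/3']
```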

HHHHMMMM….

I have a sample project for the synonym analyzer that works with 3.0.3. It shows the tokenization process, and its output looks the same as the results in RavenDB. I also have a test project with tests around the synonym analyzer, which show that RavenDB does not seem to see the synonyms in the index.

Any help would be greatly appreciated.

P.S. I would love to contribute this work to either RavenDB or Lucene.Net if they want it. It’s probably nothing special, but if anyone finds it useful, I would like to help out and keep it updated for my own and others’ future use.

Thanks!

Matt

Here is my Repo with the code. Again, any help is greatly appreciated.

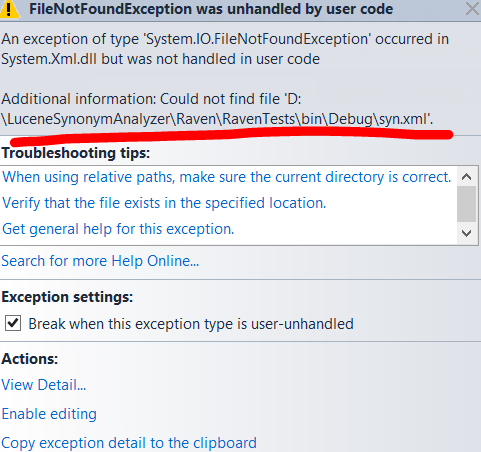

https://github.com/Racerboymatt/LuceneSynonymAnalyzer

Oren Eini (Ayende Rahien)

Hibernating Rhinos Ltd

Oren Eini l CEO l Mobile: + 972-52-548-6969

Office: +972-4-622-7811 l Fax: +972-153-4-622-7811

--

You received this message because you are subscribed to the Google Groups "RavenDB - 2nd generation document database" group.
