Got an alluxio.exception.UnexpectedAlluxioException error

Antonio Si

Aug 8, 2016, 2:22:42 PM

to Alluxio Users

Hi,

I am getting the following exception when I try to create a data file in Alluxio 1.1.1:

Caused by: alluxio.exception.UnexpectedAlluxioException: java.lang.RuntimeException: java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-8d64dc48-f819-4231-b6fa-0d9fa7ce03b1,DISK], DatanodeInfoWithStorage[10.88.131.234:50010,DS-0dda4e57-fd6e-4b35-9368-1eb8d753bf8c,DISK]], original=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-8d64dc48-f819-4231-b6fa-0d9fa7ce03b1,DISK], DatanodeInfoWithStorage[10.88.131.234:50010,DS-0dda4e57-fd6e-4b35-9368-1eb8d753bf8c,DISK]]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:422)

at alluxio.exception.AlluxioException.fromThrift(AlluxioException.java:99)

at alluxio.AbstractClient.retryRPC(AbstractClient.java:329)

at alluxio.client.file.FileSystemMasterClient.createDirectory(FileSystemMasterClient.java:92)

at alluxio.client.file.BaseFileSystem.createDirectory(BaseFileSystem.java:79)

at alluxio.hadoop.AbstractFileSystem.mkdirs(AbstractFileSystem.java:494)

May I ask what this exception means and what may be causing it?

Thanks.

Antonio.

Bin Fan

Aug 9, 2016, 4:22:23 PM

to Alluxio Users

Hi Antonio,

The error message is actually from HDFS.

Are you using CACHE_THROUGH?

It looks to me like something went wrong in the under file system (HDFS here), and HDFS is reporting errors.

- Bin

Bin Fan

Aug 24, 2016, 3:50:55 PM

to Alluxio Users

Hi Antonio,

Do you still have this problem?

- Bin

William Callaghan

Aug 30, 2016, 10:45:25 AM

to Alluxio Users

How many nodes are in your HDFS cluster? What is your HDFS replication factor (dfs.replication)?

张华玮

Dec 13, 2016, 8:52:47 PM

to Alluxio Users

Hi Antonio.

I have the same problem now. How did you resolve it? Could you help me?

On Tuesday, August 9, 2016 at 2:22:42 AM UTC+8, Antonio Si wrote:

Haoyuan Li

Dec 13, 2016, 9:40:39 PM

to 张华玮, Alluxio Users

What version of Alluxio are you using?

--

You received this message because you are subscribed to the Google Groups "Alluxio Users" group.

To unsubscribe from this group and stop receiving emails from it, send an email to alluxio-users+unsubscribe@googlegroups.com.

For more options, visit https://groups.google.com/d/optout.

张华玮

Mar 20, 2017, 2:29:14 AM

to Alluxio Users, godbewit...@gmail.com

First, I must apologize for replying so late; my network connection has not been good ~.~

After Alluxio has been running for some time (a month or so), it hits this issue, even though HDFS is running well.

I am using Alluxio 1.3 and Cloudera 5.5.4 (the HDFS version is 2.6.0).

If I restart Alluxio, it works again. This confuses me.

Best regards,

On Wednesday, December 14, 2016 at 10:40:39 AM UTC+8, Haoyuan Li wrote:

Haoyuan Li

Mar 20, 2017, 10:22:24 AM

to 张华玮, Alluxio Users

Please post the error log here. In the meantime, I highly recommend using Alluxio 1.4.0 or higher.

Bin Fan

Mar 20, 2017, 6:28:48 PM

to Alluxio Users, godbewit...@gmail.com

Hywel,

The error in the original post occurs because Alluxio sees issues writing to the UFS (under file system).

Are you writing files to Alluxio using CACHE_THROUGH mode when you see the problem?

As Haoyuan said, posting your error message with the stack trace will help greatly.

Also, check the worker log ($ALLUXIO_HOME/logs/worker.log) for any errors there, and ideally post your worker.log here too.

Bin Fan

Mar 21, 2017, 9:50:32 PM

to Hywel Zhang, Alluxio Users

Hi Hywel,

The issue you see occurs because you didn't use the right user to start Alluxio. Please read this for more details and a possible fix:

On Mon, Mar 20, 2017 at 6:23 PM, Hywel Zhang <godbewit...@gmail.com> wrote:

Hi Bin Fan, thanks for your reply. Here is the worker.log. The ERROR `Non-super user cannot change owner` is a new issue; I think it is independent of the first ERROR. My cluster has thirty nodes, and all of them are running well. I guess the real problem is not the DEFAULT policy of 'dfs.client.block.write.replace-datanode-on-failure.policy'.

Best regards

Bin Fan

Mar 22, 2017, 2:43:57 AM

to Hywel Zhang, Alluxio Users

Hi Hywel (adding the alluxio-users list back in case our discussion can be useful to, or benefit from, others)

- Which Alluxio version are you using?

- What operations are you performing when you see this error (read? write? append? through the HDFS client?)

On Tue, Mar 21, 2017 at 11:11 PM, Hywel Zhang <godbewit...@gmail.com> wrote:

Hi Bin Fan,

Thank you for this suggestion. I have solved the permission issue. The main issue is this:

```
2017-03-20 02:33:00,219 ERROR logger.type (RpcUtils.java:call) - Unexpected error running rpc
java.lang.RuntimeException: alluxio.exception.UnexpectedAlluxioException: java.lang.RuntimeException: java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[10.116.50.230:50010, 10.116.78.14:50010], original=[10.116.50.230:50010, 10.116.78.14:50010]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.
	at com.google.common.base.Throwables.propagate(Throwables.java:160)
	at alluxio.AbstractClient.retryRPC(AbstractClient.java:321)
	at alluxio.worker.block.BlockMasterClient.commitBlock(BlockMasterClient.java:90)
	at alluxio.worker.block.DefaultBlockWorker.commitBlock(DefaultBlockWorker.java:276)
	at alluxio.worker.block.BlockWorkerClientServiceHandler$2.call(BlockWorkerClientServiceHandler.java:99)
	at alluxio.worker.block.BlockWorkerClientServiceHandler$2.call(BlockWorkerClientServiceHandler.java:96)
	at alluxio.RpcUtils.call(RpcUtils.java:62)
	at alluxio.worker.block.BlockWorkerClientServiceHandler.cacheBlock(BlockWorkerClientServiceHandler.java:96)
	at alluxio.thrift.BlockWorkerClientService$Processor$cacheBlock.getResult(BlockWorkerClientService.java:824)
	at alluxio.thrift.BlockWorkerClientService$Processor$cacheBlock.getResult(BlockWorkerClientService.java:808)
	at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39)
	at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)
	at org.apache.thrift.TMultiplexedProcessor.process(TMultiplexedProcessor.java:123)
	at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286)
	at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)
	at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)
	at java.lang.Thread.run(Thread.java:745)
Caused by: alluxio.exception.UnexpectedAlluxioException: java.lang.RuntimeException: java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[10.116.50.230:50010, 10.116.78.14:50010], original=[10.116.50.230:50010, 10.116.78.14:50010]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.
	at sun.reflect.GeneratedConstructorAccessor28.newInstance(Unknown Source)
	at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)
	at java.lang.reflect.Constructor.newInstance(Constructor.java:526)
	at alluxio.exception.AlluxioException.fromThrift(AlluxioException.java:92)
	... 16 more
```

I don't know why I got this error. As I said in my last email, my cluster has thirty nodes and HDFS was running well. I don't think a shortage of good nodes is the real reason for this issue.

Best Regards

Hywel Zhang

Mar 22, 2017, 10:18:46 PM

to Bin Fan, Alluxio Users

OK, thanks for your suggestion; I have added the alluxio-users list.

I am using alluxio-1.3.0, and the HDFS version is hdfs-cdh5.5.4.

I get the issue when my Spark applications write data to Alluxio. The issue does not occur at a fixed time; it usually occurs when Alluxio has been running for more than one month. The larger the data, the more likely the issue is to occur. When the issue happens, Spark applications can't write data to Alluxio, but they can still write data to HDFS directly without going through Alluxio.

(Also, can we communicate in Chinese? My English is poor, and I am afraid my description will confuse you.)

Best regards,

Bin Fan

Mar 28, 2017, 4:12:24 PM

to Hywel Zhang, Alluxio Users

Hi Hywel,

I created an issue on the Alluxio JIRA dashboard to track this.

Meanwhile, this issue is caused by a failed journal write to HDFS: the journal file targets 3 replicas, while only two datanodes are healthy.

This can happen because the third datanode is at full capacity or under heavy load.
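To make the replica arithmetic concrete, here is a small Java sketch that restates the DEFAULT rule as documented for `dfs.client.block.write.replace-datanode-on-failure.policy` in Hadoop's hdfs-default.xml: with target replication r and n datanodes left in the pipeline, a replacement is added only if r >= 3 and either floor(r/2) >= n, or r > n and the block has been hflushed or appended. The class, enum, and method names are hypothetical and this is not Hadoop's own implementation. We also assume, without having confirmed it in the Alluxio source, that the master's journal stream has been flushed when the pipeline breaks.

```java
// Hypothetical restatement of the DEFAULT rule documented for
// dfs.client.block.write.replace-datanode-on-failure.policy (hdfs-default.xml).
// This is NOT Hadoop's own implementation; all names here are made up.
final class ReplaceDatanodePolicySketch {

    enum Policy { NEVER, DEFAULT, ALWAYS }

    /**
     * Returns true if the HDFS client must add a replacement datanode to the
     * write pipeline after a datanode in it fails.
     *
     * @param policy             the replace-datanode-on-failure policy
     * @param replication        target replication r of the file
     * @param remaining          datanodes n still in the pipeline
     * @param hflushedOrAppended whether the block was hflushed or appended
     */
    static boolean mustAddDatanode(Policy policy, int replication, int remaining,
                                   boolean hflushedOrAppended) {
        switch (policy) {
            case NEVER:
                return false; // keep writing to whatever datanodes remain
            case ALWAYS:
                return true;  // always try to restore the pipeline width
            default:
                // DEFAULT: r >= 3 and either floor(r/2) >= n,
                // or r > n and the block was hflushed/appended.
                // Integer division gives floor(r/2) for non-negative r.
                return replication >= 3
                        && (replication / 2 >= remaining
                            || (replication > remaining && hflushedOrAppended));
        }
    }

    public static void main(String[] args) {
        // Journal-like case: r = 3, two datanodes left, block already flushed.
        System.out.println(mustAddDatanode(Policy.DEFAULT, 3, 2, true));  // true
        // Same pipeline, but the block was never flushed or appended.
        System.out.println(mustAddDatanode(Policy.DEFAULT, 3, 2, false)); // false
        // Only one datanode left: floor(3/2) = 1 >= 1, so replace regardless.
        System.out.println(mustAddDatanode(Policy.DEFAULT, 3, 1, false)); // true
        // NEVER: the write continues on the two remaining datanodes.
        System.out.println(mustAddDatanode(Policy.NEVER, 3, 2, true));    // false
    }
}
```

With r = 3 and n = 2, the flushed case returns true, so the client has to find a third datanode; when no good one is available, it fails with the "Failed to replace a bad datanode" IOException seen throughout this thread. The NEVER case shows what relaxing the policy would change.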

See this link for more details and a workaround.

https://community.hortonworks.com/questions/27153/getting-ioexception-failed-to-replace-a-bad-datano.html

One question left: after you see this error, are you still able to read from or write to Alluxio in other jobs?

Is the Alluxio master still alive?

- Bin

Hywel Zhang

Apr 6, 2017, 10:32:19 PM

to Bin Fan, Alluxio Users

When the issue occurred, jobs reading from Alluxio still worked, but writes did not.

The Alluxio master was still alive. The only error was that we couldn't write.

Bin Fan

Apr 10, 2017, 12:29:30 PM

to Hywel Zhang, Bin Fan, Alluxio Users

Thanks, Hywel, for the information.

I have updated the JIRA.

Feel free to track the progress of this issue from the JIRA https://alluxio.atlassian.net/browse/ALLUXIO-2651

- Bin

Antonio Si

May 1, 2017, 12:57:54 PM

to Alluxio Users, godbewit...@gmail.com, bin...@alluxio.com

Hi,

I am getting a similar problem. Here is the log from master.log:

java.lang.RuntimeException: java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-5080e110-5907-4e31-84f2-d7308e722562,DISK], DatanodeInfoWithStorage[10.88.131.235:50010,DS-b5dea108-94a8-4232-a849-eba697a4a3ab,DISK]], original=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-5080e110-5907-4e31-84f2-d7308e722562,DISK], DatanodeInfoWithStorage[10.88.131.235:50010,DS-b5dea108-94a8-4232-a849-eba697a4a3ab,DISK]]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.

at alluxio.master.AbstractMaster.waitForJournalFlush(AbstractMaster.java:248)

at alluxio.master.file.FileSystemMaster.createDirectory(FileSystemMaster.java:1247)

at alluxio.master.file.FileSystemMasterClientServiceHandler$2.call(FileSystemMasterClientServiceHandler.java:89)

at alluxio.master.file.FileSystemMasterClientServiceHandler$2.call(FileSystemMasterClientServiceHandler.java:86)

at alluxio.RpcUtils.call(RpcUtils.java:61)

at alluxio.master.file.FileSystemMasterClientServiceHandler.createDirectory(FileSystemMasterClientServiceHandler.java:86)

at alluxio.thrift.FileSystemMasterClientService$Processor$createDirectory.getResult(FileSystemMasterClientService.java:1360)

at alluxio.thrift.FileSystemMasterClientService$Processor$createDirectory.getResult(FileSystemMasterClientService.java:1344)

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39)

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)

at org.apache.thrift.TMultiplexedProcessor.process(TMultiplexedProcessor.java:123)

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.io.IOException: Failed to replace a bad datanode on the existing pipeline due to no more good datanodes being available to try. (Nodes: current=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-5080e110-5907-4e31-84f2-d7308e722562,DISK], DatanodeInfoWithStorage[10.88.131.235:50010,DS-b5dea108-94a8-4232-a849-eba697a4a3ab,DISK]], original=[DatanodeInfoWithStorage[10.88.131.233:50010,DS-5080e110-5907-4e31-84f2-d7308e722562,DISK], DatanodeInfoWithStorage[10.88.131.235:50010,DS-b5dea108-94a8-4232-a849-eba697a4a3ab,DISK]]). The current failed datanode replacement policy is DEFAULT, and a client may configure this via 'dfs.client.block.write.replace-datanode-on-failure.policy' in its configuration.

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.findNewDatanode(DFSOutputStream.java:929)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.addDatanode2ExistingPipeline(DFSOutputStream.java:984)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.setupPipelineForAppendOrRecovery(DFSOutputStream.java:1131)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.processDatanodeError(DFSOutputStream.java:876)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:402)

Is it the same problem as https://alluxio.atlassian.net/projects/ALLUXIO/issues/ALLUXIO-2672?filter=allopenissues

Thanks.

Antonio.

Bin Fan

May 1, 2017, 1:16:04 PM

to Antonio Si, Alluxio Users, Hywel Zhang

Hi Antonio,

From the log message, it looks like you are seeing this for the same reason.

- Bin

Hywel Zhang

May 10, 2017, 5:01:43 AM

to Bin Fan, Antonio Si, Alluxio Users

You can try to reduce the load on the Alluxio master.

I removed all roles except the Alluxio master, and the issue no longer occurs.

Antonio Si

May 10, 2017, 4:28:26 PM

to Alluxio Users, bin...@alluxio.com, anton...@gmail.com

Hi Hywel,

Thanks for your reply. What do you mean by removing all roles except the Alluxio master? Is this something we can configure in the Alluxio config file?

Thanks again.

Antonio.

Hywel Zhang

May 31, 2017, 11:09:14 PM

to Antonio Si, Alluxio Users, Bin Fan

So sorry for replying so late:

At first, I configured the Alluxio master machine to also be a compute node. Now I use a dedicated node as the Alluxio master and don't run any compute tasks on it.

Reducing the load on this node worked for me~~~

Best regards

Bin Fan

Jun 1, 2017, 12:19:23 PM

to Hywel Zhang, Antonio Si, Alluxio Users

Thanks for the update!

Hywel Zhang

Jun 6, 2017, 5:02:05 AM

to Bin Fan, Antonio Si, Alluxio Users

You are welcome.

I have another question now. Can you help me?

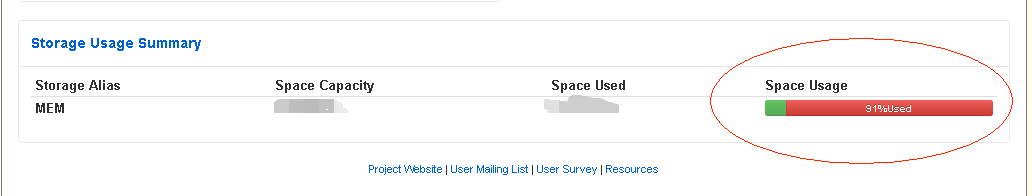

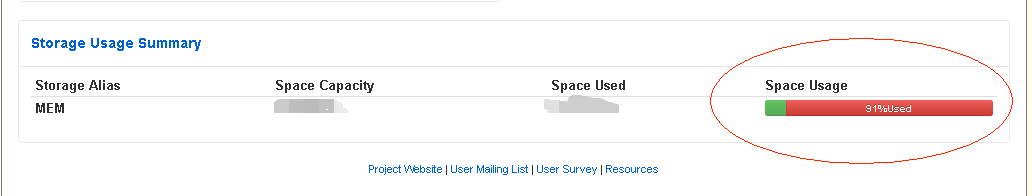

When Alluxio keeps running, the Space Usage keeps growing until it reaches 99%, even though my data is not enough to fill all of the memory space.

Is this normal?

Thank you~~~

Looking forward to hearing from you

Best regards!

Bin Fan

Jun 6, 2017, 1:05:15 PM

to Hywel Zhang, Antonio Si, Alluxio Users

Hi Hywel,

Yes, this is the expected behavior by default.

Basically, new data evicts older data in Alluxio when the storage is full.

In other words, by default stale data stays in Alluxio memory until it is evicted by new data due to lack of space.

There are several configurations that improve this in different ways:

(1) Use the "free" command to manually release memory.

(2) Set a time-to-live (TTL) so the space is freed after a specified time period; see http://www.alluxio.org/docs/1.5/en/Command-Line-Interface.html

(3) Set a reserved ratio for your Alluxio memory capacity. If usage exceeds the high watermark, eviction starts in the background; once usage drops below the low watermark, eviction stops.
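Option (3) can be illustrated with a hypothetical Java sketch of high/low watermark eviction. The capacity, percentages, and block sizes are made up, eviction runs inline after each write rather than in a background thread, blocks are evicted oldest first as a stand-in for whichever evictor is configured, and no Alluxio property names are used because the thread does not give them. It is not Alluxio's worker code.

```java
import java.util.ArrayDeque;
import java.util.Deque;

// Hypothetical sketch of high/low watermark eviction.
// Capacity, percentages and block sizes are made up; in Alluxio the eviction
// runs in the background, while here it runs inline after each write.
final class WatermarkEvictionSketch {
    private final long capacity;
    private final int highPercent; // start evicting when usage goes above this
    private final int lowPercent;  // stop evicting once usage is at or below this
    private final Deque<Long> blocks = new ArrayDeque<>(); // head = evicted first
    private long used;

    WatermarkEvictionSketch(long capacity, int highPercent, int lowPercent) {
        if (!(0 <= lowPercent && lowPercent < highPercent && highPercent <= 100)) {
            throw new IllegalArgumentException("need 0 <= low < high <= 100");
        }
        this.capacity = capacity;
        this.highPercent = highPercent;
        this.lowPercent = lowPercent;
    }

    void addBlock(long size) {
        blocks.addLast(size);
        used += size;
        // Integer arithmetic avoids floating-point rounding in the percentage checks.
        if (used * 100 > capacity * highPercent) {
            while (!blocks.isEmpty() && used * 100 > capacity * lowPercent) {
                used -= blocks.removeFirst();
            }
        }
    }

    long used() { return used; }

    int blockCount() { return blocks.size(); }

    public static void main(String[] args) {
        // Capacity 100 units, high watermark 90%, low watermark 70%.
        WatermarkEvictionSketch store = new WatermarkEvictionSketch(100, 90, 70);
        for (int i = 0; i < 9; i++) {
            store.addBlock(10); // reaches 90 units: not above 90%, no eviction
        }
        System.out.println(store.used()); // 90
        store.addBlock(10); // 100 units > 90%: evict oldest blocks down to 70%
        System.out.println(store.used() + " " + store.blockCount()); // 70 7
    }
}
```

Eviction only starts once usage rises above the high watermark and then continues until usage is back at the low watermark, so the store drops from 100 to 70 units in one pass instead of evicting a single block every time it fills up.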

Hope this helps.

- Bin

Hywel Zhang

Jun 6, 2017, 10:41:34 PM

to Bin Fan, Antonio Si, Alluxio Users

Hi Bin Fan:

Thanks for your reply; it helped me.

Furthermore, what strategy does Alluxio use to evict data? Eviction by oldest creation time, or least recently used?

Many thanks

Bin Fan

Jun 7, 2017, 12:25:56 PM

to Hywel Zhang, Antonio Si, Alluxio Users

By default, it is the LRU evictor (i.e., the least recently used data is evicted).

This policy can be configured with the Alluxio property "alluxio.worker.evictor.class" (it defaults to alluxio.worker.block.evictor.LRUEvictor).

Other candidates are documented at http://www.alluxio.org/docs/master/en/Tiered-Storage-on-Alluxio.html#evictors
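For comparison with the creation-time ordering Hywel asked about, here is a hypothetical Java sketch of least-recently-used eviction under a byte budget, built on `java.util.LinkedHashMap` in access order. Block ids and sizes are made up, and the class only mirrors the idea behind `alluxio.worker.block.evictor.LRUEvictor`, not its implementation.

```java
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical LRU eviction under a byte budget. It mirrors the idea behind
// alluxio.worker.block.evictor.LRUEvictor, not its implementation.
final class LruEvictionSketch {
    private final long capacity;
    private long used;
    // accessOrder = true: iteration goes from least to most recently used.
    private final LinkedHashMap<Long, Long> sizes = new LinkedHashMap<>(16, 0.75f, true);

    LruEvictionSketch(long capacity) {
        this.capacity = capacity;
    }

    /** Reads a cached block; get() moves it to the most recently used end. */
    boolean access(long blockId) {
        return sizes.get(blockId) != null;
    }

    /** Caches a block, first evicting least recently used blocks to make room. */
    void put(long blockId, long size) {
        if (size > capacity) {
            throw new IllegalArgumentException("block larger than capacity");
        }
        Long previous = sizes.remove(blockId); // re-caching replaces the old copy
        if (previous != null) {
            used -= previous;
        }
        Iterator<Map.Entry<Long, Long>> it = sizes.entrySet().iterator();
        while (used + size > capacity && it.hasNext()) {
            Map.Entry<Long, Long> lru = it.next();
            used -= lru.getValue();
            it.remove();
        }
        sizes.put(blockId, size);
        used += size;
    }

    /** containsKey() does not change the access order. */
    boolean contains(long blockId) {
        return sizes.containsKey(blockId);
    }

    long used() { return used; }

    public static void main(String[] args) {
        LruEvictionSketch cache = new LruEvictionSketch(30);
        cache.put(1, 10);
        cache.put(2, 10);
        cache.put(3, 10); // full: 30 of 30
        cache.access(1);  // block 1 becomes the most recently used
        cache.put(4, 10); // evicts block 2, now the least recently used
        System.out.println(cache.contains(1) + " " + cache.contains(2)); // true false
    }
}
```

Because block 1 was read after blocks 2 and 3 were cached, block 2 is evicted; an ordering by creation time would have removed block 1 instead.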

Hywel Zhang

Jun 8, 2017, 2:44:28 AM

to Bin Fan, Antonio Si, Alluxio Users

Thank you, it helped me very much! 😁😁😁

Best regards!
