Vision compositing corner position

Daniel Guerrero

mark maker

The problem is that bottom vision normally expects bright elements (contacts) to detect. It dismisses both green elements and black stuff (usually the plastic body).

So for your use case, you need a pipeline that goes for the green only.

We had almost the same situation here:

https://groups.google.com/g/openpnp/c/5e3US2kiliU/m/SM7hfeTGBgAJ

If you follow this discussion to the end, you should be able to do it (read it all at once; I forgot some things at first and added them later).

If this is unclear, just ask.

_Mark

--

You received this message because you are subscribed to the Google Groups "OpenPnP" group.

To unsubscribe from this group and stop receiving emails from it, send an email to openpnp+u...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/openpnp/6d8a26b6-10c7-4a60-b834-f0d39f8ac229n%40googlegroups.com.

Daniel Guerrero

mark maker

I'm not sure I understand. Are you trying to use the pipeline outside of bottom vision?

_Mark

Daniel Guerrero

mark maker

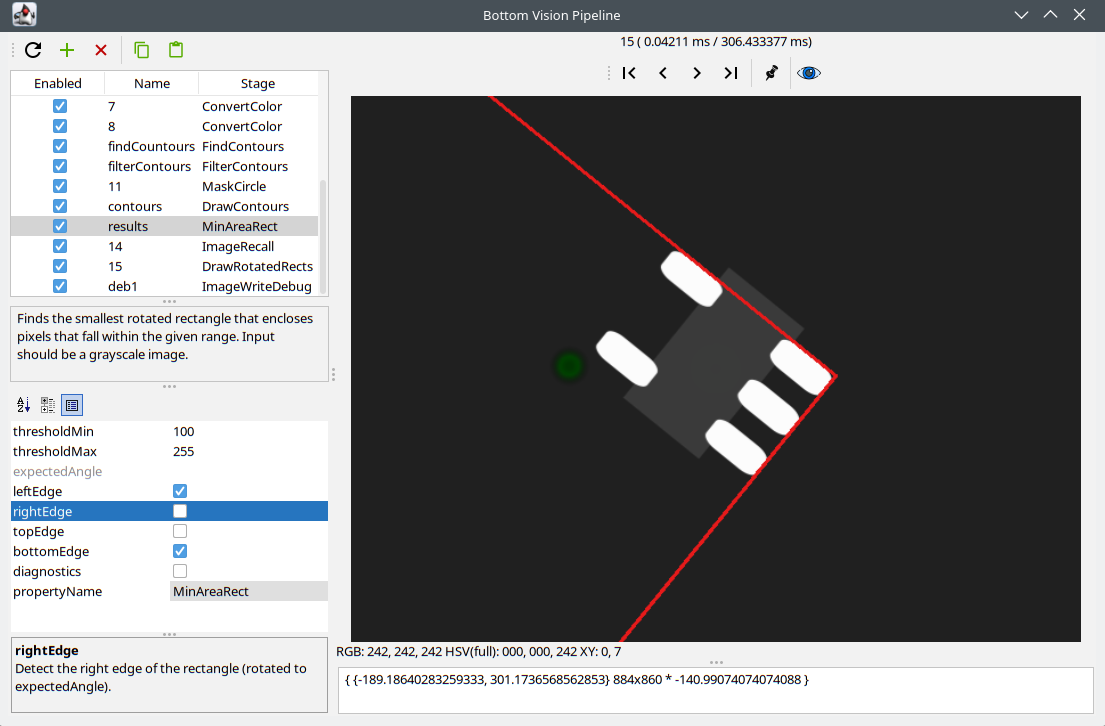

The stage is documented here:

https://github.com/openpnp/openpnp/wiki/MinAreaRect

As you can see there (see the screenshot), you tell it which edge(s) to detect (subject to rotation by expectedAngle). So it is these edges you can evaluate. The other edges are just arbitrarily shifted out of the camera view, so only the selected edges are visible:

corner = center +/- (half width/height, rotated by angle)
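As a minimal sketch of that formula: the function below computes all four corners of a rotated rectangle from its center, size and angle. The function name, the counter-clockwise-positive angle and the corner order are assumptions made for illustration; they are not taken from OpenPnP, and in image coordinates (Y pointing down) the sign of the angle may need to be flipped.

```python
import math

def rotated_rect_corners(cx, cy, width, height, angle_deg):
    """Return the four corners of a rectangle rotated about its center.

    Assumption: angle is counter-clockwise positive in a Y-up frame.
    """
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    corners = []
    # Signs select the half width/height offsets for each corner.
    for sx, sy in ((-1, -1), (1, -1), (1, 1), (-1, 1)):
        dx = sx * width / 2.0
        dy = sy * height / 2.0
        # Rotate the offset by the angle, then add it to the center.
        corners.append((cx + dx * cos_a - dy * sin_a,
                        cy + dx * sin_a + dy * cos_a))
    return corners
```

If only some edges were detected, only the corners that lie on those edges are meaningful; the others inherit the arbitrary shift of the undetected edges.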

I cannot help you more specifically if you don't explain to me how you intend to use this.

_Mark

Daniel Guerrero

mark maker

> We are placing "hand-made" parts that are all roughly the same size.

I think you could use the whole bottom vision (alignment) function of OpenPnP for that, instead of trying to build your own motion, pipeline and math. It would do all the positioning and calculations for you, including iteration (when you enable pre-rotate, which is always better, even when no rotation is needed).

Enter the rough part size as a single pad footprint (not the body!) of the Package.

Modify the standard pipeline as discussed earlier.

Use regular pick and alignment.

The size will be reported as the end result, as a properly composited RotatedRect (reported in the log, which you could parse).

You could even do a Part Size Check (PadExtents) with tolerance:

https://github.com/openpnp/openpnp/wiki/Bottom-Vision#part-configuration

If I missed something, tell me. Otherwise, you need to completely let go of your earlier elaborate plans and let OpenPnP do its job 😁

_Mark

Daniel Guerrero

Thanks,

Daniel

mark maker

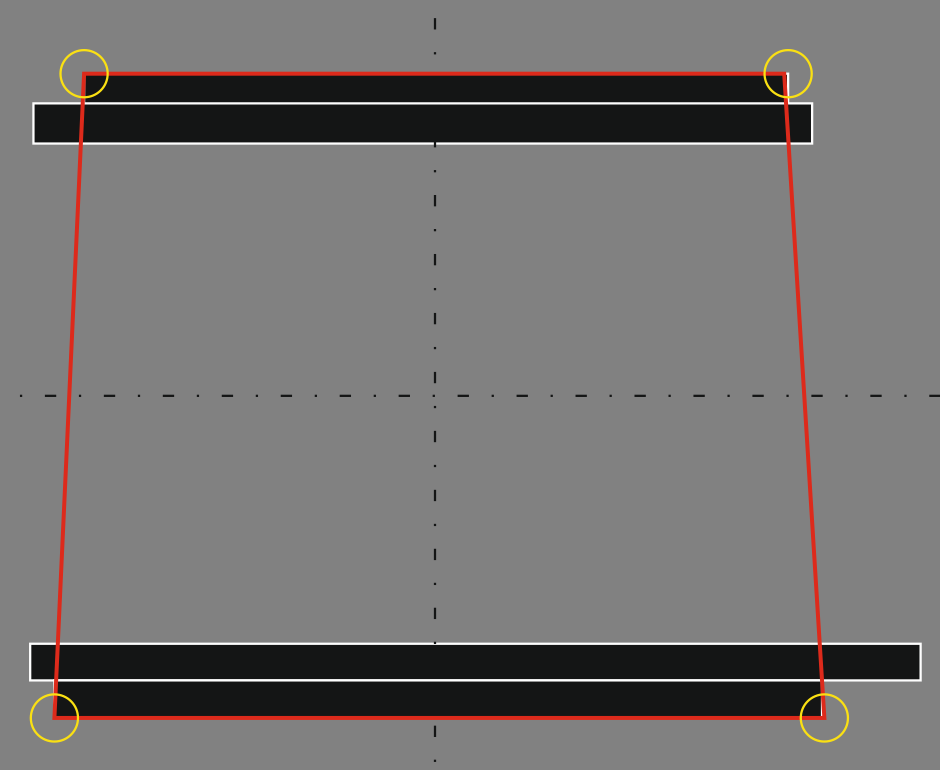

Well that's tricky. As you can guess, the corner detection expects 90° corners.

The only thing that comes to mind is to mask the corners small enough that the 1.25° doesn't matter.

You can trick OpenPnP into doing it by building the "footprint" out of multiple pads.

The imaginary trapezoid hull must be symmetric in X, and "halved" in Y.

Two outer bars hug the isosceles trapezoid. There will be a minimal error due to the 1.25°.

Two inner bars are larger and deliberately asymmetric, so OpenPnP cannot take them as candidates for corners.

Because these inner bars form a concave hull, OpenPnP must isolate the corners of the outer bars, i.e. it must apply circular masks (marked yellow).

The height of the bars is probably difficult to get right:

Large enough so the corner can be well detected. Plus they must be larger than the pick tolerance of your parts (which must include the size tolerance).

But small enough so the 1.25° won't matter.

Note: OpenPnP will give you back the bounding rectangle.
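To get a feel for the trade-off in bar height, here is a rough sketch that estimates how far a slanted edge drifts sideways over the height of a bar. It assumes the 1.25° is the deviation of the trapezoid's side from a true right angle, so the drift is simply height times tan(1.25°). The function name and the example heights are hypothetical, and the units are whatever you use for the footprint.

```python
import math

def slant_deviation(bar_height, slant_deg=1.25):
    """Lateral drift of an edge slanted by slant_deg over bar_height.

    Assumption: slant_deg is the edge's deviation from a 90-degree corner.
    """
    return bar_height * math.tan(math.radians(slant_deg))

if __name__ == "__main__":
    # Hypothetical bar heights, in the same units as the footprint.
    for h in (0.5, 1.0, 2.0):
        print(f"bar height {h}: drift {slant_deviation(h):.4f}")
```

The drift scales linearly with bar height, so halving the bar halves the error, at the cost of giving the corner detection less edge to work with.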

_Mark

Daniel Guerrero

Daniel

mark maker

Not in the pipeline. The pipeline is called separately for each corner shot; the compositing happens outside the pipeline.

But I could probably collect the detected corner locations and then add the corner location array to the Vision.PartAlignment.After script parameters, so you could do in a script whatever it is you want to do with that information.

_Mark

Daniel Guerrero

mark maker

Are you guys paying attention? We should be long past that.

Please re-read my first answer in this conversation, especially the instructions about the pipeline that I linked (my earlier answers to Jim, read until the end).

_Mark

mark maker

Sorry, my reaction was a bit harsh. Bad work day. 😇 But the pointers are still valid.

Daniel Guerrero

Sorry we missed parts of your comments in the other thread! We looked back at it in detail and found the problem in our pipeline. We fixed it, and now the compositing gets the four corners of the part (shots attached). We are now working on reconstructing the overall trapezoid shape.

Thanks again for your help.

Best,

Daniel
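For readers attempting the same reconstruction, one possible approach is sketched below: given the four detected corner points, derive the two parallel side lengths, the height, the leg slant and the overall rotation of the trapezoid. This is only an illustration, not the method used in this thread. The function name, the corner order (bottom-left, bottom-right, top-right, top-left) and the assumption that the bottom and top edges are the parallel sides are all hypothetical, and nothing here is an OpenPnP API. It needs Python 3.8 or later for `math.dist`.

```python
import math

def trapezoid_from_corners(bl, br, tr, tl):
    """Describe an isosceles trapezoid from its four (x, y) corners.

    Assumption: bottom (bl-br) and top (tl-tr) are the parallel sides.
    """
    bottom = math.dist(bl, br)
    top = math.dist(tl, tr)
    # Unit vector along the bottom edge.
    ux, uy = (br[0] - bl[0]) / bottom, (br[1] - bl[1]) / bottom
    # Midpoints of the two parallel sides.
    mb = ((bl[0] + br[0]) / 2.0, (bl[1] + br[1]) / 2.0)
    mt = ((tl[0] + tr[0]) / 2.0, (tl[1] + tr[1]) / 2.0)
    # Height: component of the midpoint-to-midpoint vector that is
    # perpendicular to the bottom edge.
    vx, vy = mt[0] - mb[0], mt[1] - mb[1]
    height = abs(-uy * vx + ux * vy)
    # Leg slant relative to a 90-degree corner, in degrees.
    slant = math.degrees(math.atan2((bottom - top) / 2.0, height))
    # Rotation of the bottom edge relative to the X axis, in degrees.
    rotation = math.degrees(math.atan2(uy, ux))
    return {"bottom": bottom, "top": top, "height": height,
            "slant_deg": slant, "rotation_deg": rotation}
```

With noisy corner measurements the two legs will not come out exactly symmetric, so comparing the slant computed from each leg separately would be a reasonable sanity check.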