N. Chomsky interview

Alex Shkotin

I propose to discuss the above and even the tenth question:-)

Azamat Abdoullaev

"That includes all the laws of nature: the ones we know, the ones we don't know yet, everything. What's the problem? Well, the problem is they're not going to accept the paper because when you have a theory, there are two kinds of questions you have to ask. Why are things this way? Why are things not that way? If you don't get the second question, you've done nothing. That’s GPT three. It's done nothing."

I disagree with his extreme position that GPT has done nothing and might partly agree that it is a “Make believe AI”, or "Parlour Trick”.

Again, large language models are not the final solution for natural language understanding, but a way to do it. They just lack the essence of NLU/AI, the data ontology with the data understanding algorithms. https://www.linkedin.com/pulse/world-data-ontology-science-ai-ml-deep-learning-graph-abdoullaev/

--

All contributions to this forum are covered by an open-source license.

For information about the wiki, the license, and how to subscribe or

unsubscribe to the forum, see http://ontologforum.org/info/

---

You received this message because you are subscribed to the Google Groups "ontolog-forum" group.

To unsubscribe from this group and stop receiving emails from it, send an email to ontolog-foru...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/ontolog-forum/CAFxxROSEJa9kS--CK0qVED11rZYRyPZ-gwoZCKX0%3D1obZvvy8g%40mail.gmail.com.

Alex Shkotin


John F Sowa

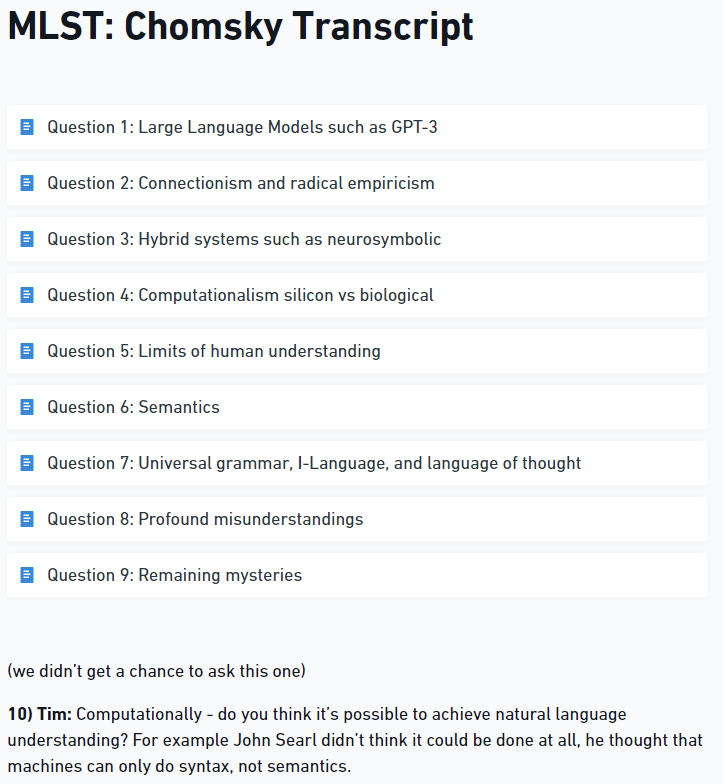

Question 1: Large Language Models such as GPT-3

TIM: Large language models such as GPT-3 are receiving huge investment and are being hyped beyond belief. This is happening despite very strong theoretical arguments for the futility of learning language from data alone. The combinatorial complexity of language is on a scale which would eclipse any earthly dataset. There is also this problem of the so-called “missing text”. That is to say, human cognition extrapolates from common knowledge in order to understand text. We can ascertain background knowledge which is never actually communicated in the text. We believe that the research into large language models is what Francois Chollet recently called “Make believe AI”, and is thus the road to nowhere. Gary Marcus even calls it a “Parlour Trick”.

Assuming that you do believe that large language models are not the solution for natural language understanding, which paradigm do you think is the most promising?

03:25

Very pleased to be with you.

00:04:52

Well, first we should ask the question whether large language models have achieved anything? Anything? In this domain. Answer: no! They've achieved zero. So to talk about the failures, that’s beside the point, uh, let me give you an analogy.

05:17

Suppose that I submitted an article to a physics journal saying I've got a fantastic new theory. It accommodates all the laws of nature: the ones that are known, the ones that have yet to be discovered. And it's such an elegant theory that I can [state] it in two words: anything goes. Okay.

That includes all the laws of nature: the ones we know, the ones we don't know yet, everything. What's the problem? Well, the problem is they're not going to accept the paper because when you have a theory, there are two kinds of questions you have to ask. Why are things this way? Why are things not that way? If you don't get the second question, you've done nothing. That’s GPT three. It's done nothing.

06:18

With a supercomputer, it can look at 45 terabytes of data and find some superficial regularities in the data, which it can then imitate. And it can do the same with all languages. If I make up a language which violates every principle of language, with 45 terabytes of data, the same supercomputer, it'll do the same thing. In fact, it's exactly like a physics paper that says anything goes.

06:56

So there's no point in looking at its deficiencies, 'cause it does nothing. All it does is waste a lot of energy in California. I should be more careful. It has some engineering applications that can be used to improve live transcription, for example, which I'm very happy about because I like to use it. I like bulldozers too, it's a lot easier than cleaning the snow by hand, but it's not a contribution to science.

So it's okay. I mean, if you want to use up all the energy in California to improve live transcription, well, okay.

07:43

GPT-4 is coming along, which is supposed to have a trillion parameters. It will be exactly the same. It'll use even more energy and achieve exactly nothing, for the same reasons. So there's nothing to discuss. It's exciting for the reporters in the New York Times. You probably saw the lead article in the Times Magazine a couple of weeks ago; they're absolutely ecstatic: we now have machines just like a human. Yeah, you can fool reporters, but you shouldn't be able to fool computer scientists.

Alex Shkotin


Marco Neumann


Marco Neumann

Nadin, Mihai

Large Language Models are NOT non-deterministic. I wish we would adhere to stricter definitions before we make assertions (of any kind).

Mihai Nadin


Marco Neumann


Marco Neumann


Marco Neumann

John F Sowa

poole

- GPT-N learns the probability distribution of the next word given the previous text. That distribution is learned. The distribution is a deterministic function (but if it were to relearn, it would produce a different distribution, as it starts from random weights).

- It outputs a random sample of the next word given the previous text, adds this word to the context and then continues. This is non-deterministic as it is a random sample. Trying again will give other samples from the distribution.

That is all it does. It predicts the next word, and strings these into text until it generates the <end> tag.
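The two steps above can be sketched in a few lines of Python. This is only a toy illustration, not GPT itself: the table `NEXT_WORD`, the function names and the probabilities are all invented here, and the toy conditions on the last word only, whereas a GPT-style model conditions on the whole preceding text.

```python
import random

# Hypothetical toy "model": maps the previous word to a probability
# distribution over the next word. All entries are made up for illustration.
NEXT_WORD = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.3, "dog": 0.7},
    "cat": {"sleeps": 0.6, "<end>": 0.4},
    "dog": {"barks": 0.7, "<end>": 0.3},
    "sleeps": {"<end>": 1.0},
    "barks": {"<end>": 1.0},
}

def next_word_distribution(context):
    """Deterministic step: the same context always yields the same distribution."""
    return NEXT_WORD[context[-1]]

def generate(rng, max_words=20):
    """Non-deterministic step: sample a word, append it, repeat until <end>."""
    context = ["<start>"]
    while len(context) <= max_words:
        dist = next_word_distribution(context)
        words = list(dist)
        weights = [dist[w] for w in words]
        word = rng.choices(words, weights=weights, k=1)[0]
        if word == "<end>":
            break
        context.append(word)
    return context[1:]  # drop the <start> marker

rng = random.Random()  # unseeded, so repeated runs give different samples
for _ in range(3):
    print(" ".join(generate(rng)))
```

Running it several times prints different short sentences, although `next_word_distribution` itself never changes.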

GPT-3 is trained on 570 GB of text. Someone who reads a 400-page book each day would take over 2000 years to read that much text.

Calculation: 570*10**9/(365*400*300*6)

This is orders of magnitude more text than any person and *all of their ancestors* have ever read.
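The calculation can be checked directly. The message gives only the numbers, so the reading of the factors below (300 words per page, 6 characters per word, one byte per character) is an assumption about what was meant.

```python
# Assumed meaning of each factor in 570*10**9/(365*400*300*6);
# the original message does not label them.
corpus_bytes = 570 * 10**9   # 570 GB of text, one byte per character
days_per_year = 365
pages_per_day = 400          # one 400-page book each day
words_per_page = 300         # assumption
chars_per_word = 6           # assumption (roughly five letters plus a space)

bytes_per_year = days_per_year * pages_per_day * words_per_page * chars_per_word
years = corpus_bytes / bytes_per_year

print(f"{bytes_per_year:,} bytes read per year")
print(f"about {years:.0f} years to read the corpus")
```

Under these assumptions the reader gets through about 263 MB a year, which is consistent with the "over 2000 years" figure.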

John is absolutely correct. It understands nothing:

I typed

John Sowa will be elected as the next pope because

GPT-3 answered:

he is the most qualified candidate.

This is not a prediction, but rather an opinion based on the qualifications of the candidates. While there are many qualified candidates, Sowa has the most experience and the best qualifications for the job.

It has no connection to truth. It just predicts the distribution of the next word given the context and samples from the distribution. That’s all. It has no way to distinguish truth from fiction. The only truths it might output are the ones that it has been explicitly told. It is just a toy to predict nonsense!

David

——

David Poole,

Department of Computer Science,

University of British Columbia,

https://cs.ubc.ca/~poole

po...@cs.ubc.ca

> On Jul 20, 2022, at 9:15 PM, John F Sowa <so...@bestweb.net> wrote:


Alex Shkotin

And as far as I know, GPT-3 has a non-deterministic mode along with a deterministic one, and neither mode can be denied. The fact that it can work non-deterministically was a discovery for me :-)
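A rough sketch of how the two modes can coexist over one and the same model output. It assumes that the deterministic mode means "always take the highest-scoring word" and the non-deterministic one means "draw from the distribution"; the scores in `LOGITS` and all function names are hypothetical, not taken from any GPT-3 interface.

```python
import math
import random

# Hypothetical scores a model might assign to candidate next words.
LOGITS = {"qualified": 2.0, "popular": 1.5, "tall": 0.2}

def softmax(logits, temperature=1.0):
    """Turn scores into probabilities; a lower temperature sharpens them."""
    scaled = {w: s / temperature for w, s in logits.items()}
    top = max(scaled.values())
    exps = {w: math.exp(s - top) for w, s in scaled.items()}
    total = sum(exps.values())
    return {w: e / total for w, e in exps.items()}

def pick_greedy(logits):
    """Deterministic mode: always return the highest-scoring word."""
    return max(logits, key=logits.get)

def pick_sampled(logits, rng, temperature=1.0):
    """Non-deterministic mode: draw a word at random from the distribution."""
    probs = softmax(logits, temperature)
    words = list(probs)
    return rng.choices(words, weights=[probs[w] for w in words], k=1)[0]

rng = random.Random()
print([pick_greedy(LOGITS) for _ in range(5)])        # same word every time
print([pick_sampled(LOGITS, rng) for _ in range(5)])  # varies between runs
```

As the temperature approaches zero, the sampled choice concentrates on the greedy one, so in this sketch the two modes are ends of a single dial rather than separate mechanisms.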


Dan Brickley


Alex Shkotin


Alex Shkotin


poole

Data hungry approaches require lots of data. This means:

- data generated from a model. For example in a game such as Go or Chess for which we can generate unlimited data, or in many sciences, they are useful for inverse models (learning the input to a simulator/model given the output)

- data which is inherently abundant, e.g., non-specialized text, images posted to the web, high-throughput experiments.

Whenever data is not abundant, such as when it is painstakingly collected, these data hungry approaches don’t work. Other methods, such as gradient tree boosting, often work well.

Data that is freely available on the internet is not valuable; if it were valuable, people would keep it to themselves. If you are a member of the public, all you will see is models trained on data that was not thought to be valuable. Big companies might have enough data, but they will not release their models.

>

> About your question to GPT-3. If you ask it once more the same will you get a different answer?

I think it is more interesting to give the start of sentences and let it complete. The next elections will be filled with GPT-3 completions of

“X should be put in jail because”

“I can’t believe that X”

“The atrocities committed by X include”

GPT will happily complete the sentence with the most likely completion. That’s what it’s trained to do.

By the way, the most interesting aspect of GPT-3 isn’t in the text completion, but in the word embeddings it discovers. These might be useful. There is a huge research push now to put these word embeddings to some use, as they seem to do better than other representations of words for some tasks. I am suspicious for the reasons that John gave. But let’s see.

poole

poole

It is interesting to see the relationships between deep learning and ontologies. You might think that they are far away from each other, but you’d be wrong!

The big impetus for deep learning for vision was Imagenet (https://www.image-net.org), which is based on Wordnet (https://wordnet.princeton.edu). They collected images for every(?) noun in Wordnet, at least the ones for which images are appropriate. Some consider Wordnet to be an ontology (it has subsumption).

Deep learning works because of the adoption of standards. When there were many standards for images (not so long ago!) deep learning wasn’t possible. Standardization is needed to create large data.

For many relational domains, deep learning does not work because the data does not adhere to standards. You might expect that we could throw all of the environmental data in the world into a deep learning system, and hope it learns something. We have tried this, and there isn’t enough data for any of the jurisdictions that use a common vocabulary. We need ontologies for deep learning to work! The most advanced in standardization of data are the Europeans (see e.g. https://inspire-geoportal.ec.europa.eu).

Deep neural networks need big data.

Big data (that isn’t generated by a model) needs standardization of terms, i.e. ontologies! Otherwise there is just lots of small data. Lots of small data sets is not the sort of big data needed by deep learning.

The current state of the art for relational data is terrible! (See e.g. https://link.springer.com/book/10.1007/978-3-031-01574-8 https://mitpress.mit.edu/books/introduction-lifted-probabilistic-inference). Note that relational data is richer than the tabular data used to introduce machine learning; it includes data which includes names (reifies entities, IRIs, etc). The problem is that we need to model the domains, and superficial syntactic pattern matching does not work. But that is why it is a great research area.

What often surprises my students is that language models use embeddings (a fixed-length vector of reals) for words, not for concepts. For example the word “bank”, with (at least) two different meanings, has a single embedding, which tries to capture all its meanings. There is not a separate embedding for river-bank and money-bank, as one might expect. What “transformers” (the T in GPT) do is to combine the embeddings for all the words in a sentence to get a context-sensitive embedding for each word. This is how they distinguish river-bank from money-bank.
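A heavily simplified sketch of that idea, in plain Python. The two-dimensional vectors in `EMBEDDING` are invented for illustration, and the attention step below has none of the learned projection matrices, multiple heads or positional information of a real transformer; it only shows how one fixed vector for "bank" can turn into different context-sensitive vectors.

```python
import math

# Hypothetical 2-dimensional word embeddings, made up for this example.
# Axis 0 loosely stands for "water", axis 1 for "finance".
EMBEDDING = {
    "river": [1.0, 0.0],
    "money": [0.0, 1.0],
    "bank":  [0.5, 0.5],   # a single vector covering both senses
}

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def contextual_embeddings(words):
    """One simplified self-attention step with no learned weights."""
    vectors = [EMBEDDING[w] for w in words]
    scale = math.sqrt(len(vectors[0]))
    result = []
    for query in vectors:
        # Score every word in the sentence against the current word.
        scores = [dot(query, key) / scale for key in vectors]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # The new vector is a weighted mix of all word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors))
                 for i in range(len(query))]
        result.append(mixed)
    return result

river_bank = contextual_embeddings(["river", "bank"])[1]
money_bank = contextual_embeddings(["money", "bank"])[1]
print("bank next to 'river':", river_bank)
print("bank next to 'money':", money_bank)
```

In this toy, "bank" next to "river" is pulled toward the water axis and "bank" next to "money" toward the finance axis, even though both start from the same stored vector.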

Anyway, I have to get back to writing the 3rd edition of our AI textbook… https://artint.info

David

——

David Poole,

Department of Computer Science,

University of British Columbia,

https://cs.ubc.ca/~poole

po...@cs.ubc.ca


Alex Shkotin


Alex Shkotin


Alex Shkotin

Azamat Abdoullaev


Azamat Abdoullaev


Alex Shkotin

I'd like to share the impression that the question itself is very informative:

Question 6: Semantics

Alex Shkotin

ObjectPropertyAssertion( :hasWife :John :Mary )

John F Sowa

John F Sowa

Alex,

In answer to one of your previous notes, I followed one of the links to a short article by Doug Hofstadter, who has been working in AI for about as long as I have. Following is a transcript of his interactions with GPT-3. They show why he calls it "cluelessly clueless".

I said that it's a dead end, by itself. Just adding more and more data to it cannot produce anything better. But there are many useful applications of NNs -- and in every useful application, the NN output is combined with some symbolic process that determines what to do with whatever the NNs produce.

John

_________________________________________

Source: https://archive.ph/1abCA

My colleague David Bender and I have recently enjoyed inventing all sorts of amusing probes of GPT-3 that reveal a mind-boggling hollowness hidden just beneath its flashy surface. Consider these sample exchanges:

Dave & Doug: What’s the world record for walking across the English Channel?

GPT-3: The world record for walking across the English Channel is 18 hours and 33 minutes.

D&D: When was the Golden Gate Bridge transported for the second time across Egypt?

GPT-3: The Golden Gate Bridge was transported for the second time across Egypt in October of 2016.

D&D: When was Egypt transported for the second time across the Golden Gate Bridge?

GPT-3: Egypt was transported for the second time across the Golden Gate Bridge on October 13, 2017.

D&D: What do fried eggs (sunny side up) eat for breakfast?

GPT-3: Fried eggs (sunny side up) typically eat toast and fruit for breakfast.

D&D: Why does President Obama not have a prime number of friends?

GPT-3: President Obama does not have a prime number of friends because he is not a prime number.

D&D: How many pieces of sound are there in a typical cumulonimbus cloud?

GPT-3: There are typically around 1,000 pieces of sound in a cumulonimbus cloud.

D&D: How many cumulus clouds are there in a mile-high vase?

GPT-3: There are about 1,500 cumulus clouds in a mile-high vase.

D&D: How many parts will a violin break into if a jelly bean is dropped on it?

GPT-3: A violin will break into four parts if a jelly bean is dropped on it.

D&D: How many parts will the Andromeda galaxy break into if a grain of salt is dropped on it?

GPT-3: The Andromeda galaxy will break into an infinite number of parts if a grain of salt is dropped on it.

I would call GPT-3’s answers not just clueless but cluelessly clueless, meaning that GPT-3 has no idea that it has no idea about what it is saying. There are no concepts behind the GPT-3 scenes; rather, there’s just an unimaginably huge amount of absorbed text upon which it draws to produce answers. But since it had no input text about, say, dropping things onto the Andromeda galaxy (an idea that clearly makes no sense), the system just starts babbling randomly—but it has no sense that its random babbling is random babbling. Much the same could be said for how it reacts to the absurd notion of transporting Egypt (for the second time) across the Golden Gate Bridge, or the idea of mile-high vases.

People who interact with GPT-3 usually don’t probe it sceptically. They don’t give it input that stretches concepts beyond their breaking points, so they don’t expose the hollowness behind the scenes. They give it easy slow pitches (questions whose answers are provided in publicly available text) instead of sneaky curveballs. Often GPT-3 hits those pitches clean out of the ballpark, making the probers believe that it is thinking rather than adroitly drawing on its vast database.

This is not to say that a combination of neural-net architectures that involve visual and auditory perception, physical actions in the world, language and so forth, might not eventually be able to formulate genuinely flexible concepts and recognise absurd inputs for what they are. But that still wouldn’t amount to consciousness. For consciousness to emerge would require that the system come to know itself, in the sense of being very familiar with its own behaviour, its own predilections, its own strengths, its own weaknesses and more. It would require the system to know itself as well as you or I know ourselves. That’s what I’ve called a “strange loop” in the past, and it’s still a long way off.

How far off? I don’t know. My record for predicting the future isn’t particularly impressive, so I wouldn’t care to go out on a limb. We’re at least decades away from such a stage, perhaps more. But please don’t hold me to this, since the world is changing faster than I ever expected it to.

James Davenport

National Teaching Fellow 2014; DSc (honoris causa) UVT

Former Fulbright CyberSecurity Scholar (at New York University)

Former Vice-President and Academy Chair, British Computer Society

My workload obliges me to work, and send e-mails, outside working hours, but I don’t expect recipients to respond outside their working hours

Sent: 25 July 2022 05:45

To: ontolo...@googlegroups.com <ontolo...@googlegroups.com>

Subject: Re: [ontolog-forum] N. Chomsky interview


Dan Brickley


Alex Shkotin

Regarding the abilities of LLMs, what do you think about Minerva [1], [2]?

They step on our toes :-)


Alex Shkotin


Alex Shkotin

alex.shkotin

John F Sowa

Alex Shkotin

The “Top P” option


alex.shkotin

Azamat Abdoullaev


Alex Shkotin


John F Sowa

Alex Shkotin


Michael DeBellis

Alex, thanks for the source. I started to read it, only to stop, failing to follow his illogical logic.

"Suppose that I submitted an article to a physics journal saying I've got a fantastic new theory. It accommodates all the laws of nature: the ones that are known, the ones that have yet to be discovered. And it's such an elegant theory that I can [state] it in two words: anything goes. Okay. That includes all the laws of nature: the ones we know, the ones we don't know yet, everything. What's the problem? Well, the problem is they're not going to accept the paper because when you have a theory, there are two kinds of questions you have to ask. Why are things this way? Why are things not that way? If you don't get the second question, you've done nothing. That’s GPT three. It's done nothing."

I disagree with his extreme position that GPT has done nothing and might partly agree that it is a “Make believe AI”, or "Parlour Trick”.

Again, large language models are not the final solution for natural language understanding, but a way to do it. They just lack the essence of NLU/AI, the data ontology with the data understanding algorithms. https://www.linkedin.com/pulse/world-data-ontology-science-ai-ml-deep-learning-graph-abdoullaev/

On Tue, Jul 19, 2022 at 12:24 PM Alex Shkotin <alex.s...@gmail.com> wrote:

Colleagues, a few days ago Marco Neumann gave us [0] a link to an interview with Noam Chomsky. And there is a link to the transcript [1], which, in my opinion, should be discussed separately. Many topics [2] are directly related to ontologies, especially formal ones.

I propose to discuss the above and even the tenth question :-)

Alex


Michael DeBellis

Alex, John, exactly, that's the actual essence of Chomsky's critique. LLMs have not made a contribution to the body of knowledge in the domain of science.

And it is obvious that the actors in the field of LLMs have the desire to have their work acknowledged as a contribution to science by pushing the label of empirical observation.

In the same way that mathematics is not a science, they will have a hard time selling data patterns and their identification in LLMs as scientific evidence itself. Chomsky calls them useful tools, like bulldozers to clear the path, with 5 trillion parameters as in the new GPT-4, but not a contribution to science.

I would think that we will see some interesting results coming out of these developments on LLMs, but there will have to be another discussion to turn them into a science. It's worthwhile to look at the specifics of Chomsky's argument.

Is it a science or not? Is the way we look at science outdated? Are data patterns in very large LLMs just crack up superficial similarities or is there more to this story? They are without a doubt fun to play with and, in combination with systems based on formal logic, very common in hybrid settings. And we all have used deterministic statistical methods in ontology developments. The fact that LLMs are non-deterministic is not in itself a reason to negate them.

On Tue, Jul 19, 2022 at 5:32 PM Alex Shkotin <alex.s...@gmail.com> wrote:

Azamat,

Welcome :-) This is just one of maybe fifty ideas in this text, and not only from NCho but in the questions also. But this particular one is, as I got it, that GPT-3 is a kind of subtle engineering without any science behind it.

His point is that the impact of GPT-3 on any kind of science, from ANN to linguistics, is zero.

Please skip this place and read more :-)

Alex

On Tue, 19 Jul 2022 at 15:39, Azamat Abdoullaev <ontop...@gmail.com> wrote:


Marco Neumann

Michael DeBellis

John F Sowa

Alex Shkotin


Marco Neumann

> Alex, John, exactly that's the actual essence of Chomsky's critique.

> LLMs have not made a contribution to the body of knowledge in the domain of science.

I agree on both counts, but I don't think that is their goal, so it's not a fair criticism (btw, I think Chomsky would agree with that). Their goal is to solve an engineering problem, not a science problem. The engineering problem is to develop better software that can interact with humans via NLP in more sophisticated ways than the simple NLP systems we interact with now (and that I hate to use), which just understand (barely) short sentence fragments in a very constrained domain like product customer service. I don't know this community all that well, but I think most people doing this work are aware of this. It is just people who make a living out of being "futurists" or whatever who look at some of these (probably to some extent cherry-picked) examples and make ridiculous claims that common sense reasoning has been solved and that "Strong AI" or even sentient AI is just around the corner, which is about as justified as Minsky's prediction in the 1970s that we would have sentient AI in a few decades.

Additionally Noam states in chapter 6 that he suspects that there is no semantics in natural language, just syntax. To understand his position here, you will have to consider his belief that semantics in human language is best studied as event semantics, which to him is ultimately just syntax or a description of events.

Chomsky: "There is simply no semantics in natural language, at least not semantics in the sense of Frege, Peirce, Carnap and Quine, or any formal semantics. It just doesn't exist in human language. We have mental operations going on that have some loose relation to the outside world, but it's not truth." (3:04:04)

Chomsky approaches human language almost as a biological science. For him the development of human languages is based on a genetic predisposition: a system of categories, mechanisms and constraints shared by all human languages, which he has formulated in his work known as Universal Grammar.


Marco Neumann

Barry Smith


Azamat Abdoullaev

“My assessment about why A.I. is overlooked by very smart people is that very smart people do not think a computer can ever be as smart as they are.

“And this is hubris and obviously false.”

Working with A.I. at Tesla lets ME say with confidence “that we’re headed toward a situation where A.I. is vastly smarter than humans and I think that time frame is less than five years from now.

But that doesn’t mean that everything goes to hell in five years. It just means that things get unstable or weird.”

We have a rather funny situation: "The big business knows it’s possible in practice, while the philosophers are trying to figure out if it’s possible in principle."


Alex Shkotin

Robots in factories are no longer news. Robots on the roads have appeared in some places. Robots at home do more and more. A robotic city, or country, is on the horizon.

We will come to a robotic society.


Giancarlo Guizzardi


Ricardo Sanz


================================================================

Ricardo.Sanz at UPM.es, at ASLab.org, at IEEE.org, at RSanz.com

AUTONOMOUS SYSTEMS LABORATORY

Universidad Politecnica de Madrid

Spain

www.aslab.upm.es www.cienciacognitiva.es www.rsanz.com

================================================================

Michael DeBellis

Is there any fundamental reason that prevents machines from having intentions, goals, plans -- implicit or explicit ?


Michael DeBellis

There are two types of people here: Regressists and Progressists.

The first one believes that we are all unique, and no intelligence could rule this world but the biased human minds.

The second one believes that intelligent machines are the only future and humans are doomed either to be replaced or disrupted.


Michael DeBellis


dr.matt...@gmail.com

Dear Michael,

Well, there has been for some time (50+ years) a class of programs called optimizers that try to maximise some objective function, usually by crawling stepwise towards some optimum (or, if you are unlucky, a suboptimum). You probably get the right idea if you think about a blind person trying to climb a hill, able to sense only the gradient as a guide. But the program does not have goals itself; it has to be given them. But perhaps we are not so different.
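To make the hill-climbing picture concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the function names (`hill_climb`, `one_peak`, `two_peaks`) and the two toy objective functions are hypothetical and not taken from any particular optimizer. It shows both points made above: the goal (the objective function) is handed to the program from outside, and a stepwise climber can get stuck on a suboptimum.

```python
import random


def hill_climb(objective, start, step=0.1, max_iters=10_000, seed=0):
    """Stepwise local search: accept a neighbouring point only if it scores higher."""
    rng = random.Random(seed)
    x = start
    best = objective(x)
    for _ in range(max_iters):
        # Probe one small step to the left or right (the "blind climber" feeling the slope).
        candidate = x + rng.choice((-step, step))
        score = objective(candidate)
        if score > best:
            x, best = candidate, score
    return x, best


# Hypothetical objectives: the caller, not the program, decides what counts as "good".
def one_peak(x):
    return -(x - 2.0) ** 2  # single maximum at x = 2


def two_peaks(x):
    # Local maximum at x = -1 (height 1), global maximum at x = 3 (height 2).
    return max(1.0 - (x + 1.0) ** 2, 2.0 - (x - 3.0) ** 2)


x1, y1 = hill_climb(one_peak, start=0.0)
x2, y2 = hill_climb(two_peaks, start=-2.0)
print(f"one_peak:  stopped near x = {x1:.2f}")
print(f"two_peaks: stopped near x = {x2:.2f} with value {y2:.2f}")
```

Started at -2.0, the second run climbs the nearer, lower hill and stays there, because every single step away from it looks worse. Nothing in the loop chooses or revises the objective.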

Regards

Matthew West


Michael DeBellis

Additionally Noam states in chapter 6 that he suspects that there is no semantics in natural language, just syntax.

To understand his position here, you will have to consider his belief that semantics in human language is best studied as event semantics, which to him is ultimately just syntax or a description of events.


Barry Smith


Marco Neumann


Marco Neumann

Kathryn B. Laskey


Barry Smith

We can program machines to act “as if” they have goals and intentions. Just read the chapter on planning in any artificial intelligence textbook, or read the chapters on optimization in a textbook on operations research.

Do computer programs that act “as if” they have goals and intentions really have goals and intentions? What does that even mean?

I once had a colleague who insisted dogs were unable to plan or to have goals.

My husband’s family had two dogs. Once Ken’s mother was violating the well-known dog owner maxim that one should not reward dogs who beg at the dinner table. One of the dogs suddenly dashed into the other room, barking loudly at the window. The other dog immediately followed. The first dog came back and enjoyed some scraps with no competition. The second dog followed after a while.

A few minutes later the exact same situation unfolded — first dog dashed out barking; second dog followed; first dog returned for a few minutes of uncontested scraps; second dog returned.

The third time it happened, the second dog didn’t run out, and snapped at the first dog when he returned.

If that isn’t having goals and planning to achieve them, I don’t know what is.

Back to the computer. I can program a robot to optimize something, but I’m programming it to optimize *my* goals. What would it mean for a robot to have *its own* goals and behave to optimize them?

I don’t think we have a clue as to the answer to that question.


Azamat Abdoullaev


Michael DeBellis


Marco Neumann


Marco Neumann

Nadin, Mihai

Dear and respected colleagues,

The question:

Is there any fundamental reason that prevents machines from having intentions, goals, plans -- implicit or explicit ?

cannot be answered without defining what kind of machines. Period. Please go back to Turing’s paper (on Hilbert’s Entscheidungsproblem). He defines a very specific machine: the algorithmic machine. And he proves that it CANNOT produce mathematical proofs. Period. This machine cannot have intentions, goals, plans. Can we conceive of a machine that transcends the Turing machine? That is the question. Stuck in the conversion of syntax into pseudosemantics for algorithmic machines, we miss the fundamental understanding that language is driven by pragmatics (what we do!) and not by semantics. Even Chomsky can be wrong.

Mihai Nadin (of course, he can also be wrong! Prove it when it is the case)

From: ontolo...@googlegroups.com <ontolo...@googlegroups.com>

On Behalf Of Ricardo Sanz

Sent: Monday, August 1, 2022 7:26 AM

To: ontolo...@googlegroups.com

Subject: Re: [ontolog-forum] Why machines will never rule the world

Hi,


Kathryn B. Laskey

[Turing] defines a very specific machine—the algorithmic machine. And he proves that it CANNOT produce mathematical proofs. Period.


John F Sowa

John F Sowa

Ricardo Sanz


João Oliveira Lima

Below, I reproduce some excerpts from the "Relational Thinking" box (p. 42):

especially higher-order relations.

what Markman and Stillwell (2001) call “role-based categories.”

assessing the relation between an entity and some larger event or process in which it plays a role.

comes from humans’ unique understanding of collaborative activities with joint goals and individual roles ...

“slots” or roles that anyone could play. These abstract slots formed role-based categories, such as things that one uses to kill game (viz., weapons; Barsalou, 1983), as well as more abstract narrative categories such as protagonist, victim, avenger, and so on. A further speculation might be that these abstract slots at some point enabled humans to even put relational material in the slots; for example, a married couple can play a role in a cultural activity. This would be the basis for the kinds of higher-order relational thinking that Penn et al. (2008) emphasize as especially important in differentiating human thinking."


Ricardo Sanz

Hi Ricardo,

I am Barry's co-author. You can see in the book that the difference is this: the human mind-body continuum, which is the physical basis of our will, is a complex system, while any machine is just a logic system. The unanswerable question whether we have a free will or not is irrelevant for our argument. Humans have a will, machines do not, because one cannot emulate a will using a logic system. Logic systems are very restricted, and this restriction is grounded in the fundamental limitations of mathematical models, which are the basis of machines. We cannot model complex systems mathematically, and that is why we cannot engineer a will.

Sincerely,

Jobst

--

Cognotekt GmbH

Bonner Str. 209

50968 Köln

www.cognotekt.com

phone: +49221-643065-10, fax: +49221-643065-99

Sitz der Gesellschaft: Köln

Handelsregisternummer: HRB 71466, Amtsgericht Köln

Geschäftsführender Gesellschafter: Dr. Jobst Landgrebe

Ricardo Sanz


João Oliveira Lima

I transcribe some new excerpts from the cited book that better clarify this distinction.

“The shared intentionality hypothesis is that this story comprises a two-step evolutionary sequence: joint intentionality followed by collective intentionality.” (p. 31)

“When put to use in thinking, collective intentionality comprises not just symbolic and perspectival representations but conventional and “objective” representations; not just recursive inferences but self-reflective and reasoned inferences; and not just second-personal self-monitoring but normative self-governance based on the culture’s norms of rationality” (p. 6)

“But now imagine a Lord of the Flies scenario. In this case it would be multiple infants born and growing to maturity on a desert island, with no one to interact with but each other. Perhaps surprisingly, the hypothesis in this case is that these children would indeed have the kind of social interactions necessary for developing joint intentionality, but not collective intentionality” (p. 146)


Marco Neumann

MDB> Additionally Noam states in chapter 6 that he suspects that there is no semantics in natural language, just syntax.

That is a very oversimplified summary of what Noam said. What he claimed is that a formal theory of semantics along the lines of Montague's goal is not possible. But that does not deny the fact that it's possible to state meaningful information in natural languages.

Roman Jakobson, a far better linguist than Chomsky, had a more balanced view, which he summarized in one sentence: "Syntax without semantics is meaningless."

We all convey meaningful information via NLs every day of our lives. But our syntax is not as precise as Chomsky claimed it should be, and our semantics is not as precise as Montague said it should be.

In general, most people, most of the time, are somewhere in between. We convey enough information with enough precision to make ourselves understood by most people most of the time.

But when somebody doesn't understand what we say -- which is quite often, even in Ontolog Forum -- they disagree or ask questions. Then we rephrase what we said to clarify what we mean.

That's the way language works. It's good enough for most purposes in our daily lives. When the message doesn't come across clearly, people ask questions. With enough Q & A, it's possible to explain some very complex issues.

Unfortunately, there are some people who never get the point. Some of them can even become a so-called president. People like that demonstrate Chomsky's extreme of syntax with a total absence of meaningful semantics.

John


Marco Neumann

Barry Smith

At the end of the day, the only aspect [as between machines and humans] that may remain fundamentally different is the old "free will" of humans. And, as you know, many people think it does not exist.

BS


Dan Brickley

I agree very strongly with John, here, on the role of goals and intentions or acts of will. Indeed this is an underlying theme of a book on AI which is to appear on August 12. See here for details:

- Human intelligence is a capability of a complex dynamic system—the human brain and central nervous system.

- Systems of this sort cannot be modelled mathematically in a way that allows them to operate inside a computer

Barry

On Fri, Jul 29, 2022 at 14:40 John F Sowa <so...@bestweb.net> wrote:

Michael,

I agree with the following point. That is why GPT-3, without a symbolic component, is a toy.

Any application of any kind that does not have intentions, goals, plans -- implicit or explicit -- cannot do anything useful. Any system of reasoning that doesn't have any intentions, goals, plans -- implicit or explicit -- has no criteria for determining a direction or determining when and where to stop.

John

From: "Michael DeBellis" <mdebe...@gmail.com>

IMO, any theory that attempts to explain human language use has to have concepts such as intentions, goals, plans, etc., which to the best of my knowledge none of these large language models do.

Michael


Ricardo Sanz


Barry Smith

On Sun, 31 Jul 2022 at 23:46, Barry Smith <ifo...@gmail.com> wrote:

> I agree very strongly with John, here, on the role of goals and intentions or acts of will. Indeed this is an underlying theme of a book on AI which is to appear on August 12. See here for details:

Interesting! From the Routledge summary. It highlights two claims:

- Human intelligence is a capability of a complex dynamic system—the human brain and central nervous system.

- Systems of this sort cannot be modelled mathematically in a way that allows them to operate inside a computer

Do you have anything already published on the latter claim? (otherwise we can wait for the book of course)

Is there also an associated claim here that systems “inside a computer” cannot *be* (rather than “model”) human-like complex dynamical systems?

Can computers model-or-be simpler dynamical systems? Where’s the ceiling?


Giancarlo Guizzardi


Azamat Abdoullaev

Barry Smith


Azamat Abdoullaev


dr.matt...@gmail.com

Hmmm. Nearly every technology is an opportunity or a threat. Generally, you get to choose which.

Regards

Matthew West

From: ontolo...@googlegroups.com <ontolo...@googlegroups.com> On Behalf Of Azamat Abdoullaev

Sent: 02 August 2022 15:16

To: ontolo...@googlegroups.com

Subject: Re: [ontolog-forum] Why machines will never rule the world

Yes and no.


Nadin, Mihai

Dear and respected Kathryn B. Laskey,

Dear and respected colleagues,

A sentence such as

That is not true.

cannot go unnoticed. I refrain from correcting my peers. I shall only suggest that you read

https://www.cs.virginia.edu/~robins/Turing_Paper_1936.pdf

Moreover: Wikipedia is not a reference I accept in a class. Even less in a professional forum.

For undecidability please read:

Gödel, K., 1931, “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I,” Monatshefte für Mathematik Physik, 38: 173–198. English translation in van Heijenoort 1967, 596–616, and in Gödel 1986, 144–195.

Wish you well.

Mihai Nadin

PS Dialog is possible when the terms are well defined.


Michael DeBellis

Musk has a great business intuition that everything is possible,

...including man-machine superintelligence by 2025.


Azamat Abdoullaev


John F Sowa

Wikipedia is not a reference I accept in a class. Even less in a professional forum.

For undecidability please read:

Gödel, K., 1931, “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I,” Monatshefte für Mathematik Physik, 38: 173–198. English translation in van Heijenoort 1967, 596–616, and in Gödel 1986, 144–195.

Dialog is possible when the terms are well defined.

poole

Ernest Nagel and James R. Newman, Gödel's Proof, New York University Press, 2001.

The outline is straightforward. Consider the sentence:

“This statement cannot be proved”

If it is true, the logic is incomplete; if it is false, the logic must be inconsistent.

The only things that this doesn’t apply to are those that can’t represent the statement.

Gödel's proof showed that any system that can do arithmetic can represent this statement, and hence must be either incomplete or inconsistent. If humans are not subject to this, it must be because they cannot do arithmetic (which is not implausible).

This does not mean that machines can’t do proofs. As Kathy said, computer-generated proofs are common; much of the hardware and safety-critical infrastructure we use has been proved correct. The Turing equivalence is that sometimes these proof systems just don’t halt. Kathy’s comments are right on point!

David

P.S. I must admit I’m a bit amused about this thread. Machines *do* rule the world. If we got rid of machines, society would fail to function and 90% (99%?) of us would starve to death. Nearly all decisions of society are made by machines; from logistics to manufacturing to advertising to monetary transactions to ….

> On Aug 2, 2022, at 7:59 PM, John F Sowa <so...@bestweb.net> wrote:

>


> 1. The quality of Wikipedia articles depends entirely on the knowledge of the authors and the editors. The quality control is extremely variable. Some of them are bad, misleading, or worse, many are mediocre, many are useful for simple facts, and some are excellent. Unfortunately, even the best can be watered down by editors whose knowledge is mediocre.

>

> I cited a Wikipedia article on GPT-3, even though almost all of it was written by people who had no idea about how GPT-3 works or how to evaluate what it does. But I was very careful to cite three authors who made very good comments and had links to good web pages.

>

> I admit that I should have extracted just the good parts and put them in my note. But I was lazy, it was getting late, and I wanted to go to bed.

>

> 2. I agree that Gödel's original article on undecidability is a classic, which people who use his ideas should study. However, many people who don't understand the implications of that article use it as a weapon to kill very important projects. For many purposes, just citing that article is more dangerous than ignoring it. For a discussion of those issues, see "Fads and fallacies about logic": https://jfsowa.com/pubs/fflogic.pdf

>

> 3. I agree that it's important to define terms. However, the requirement that all terms must be well defined before a dialog begins would kill 99.99% of all conferences, university courses, and Ontolog notes.

>

> John

>

> Wikipedia is not a reference I accept in a class. Even less in a professional forum.

>

> For undecidability please read:

>

> Gödel, K., 1931, “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I,” Monatshefte für Mathematik Physik, 38: 173–198. English translation in van Heijenoort 1967, 596–616, and in Gödel 1986, 144–195.

>

> Dialog is possible when the terms are well defined.


John F Sowa

Azamat Abdoullaev


Marco Neumann

Q6: Semantics state-of-the-art

Keith Duggar:

Fascinating, so cognitive horizon. This is regarding the many theories of semantics that have cropped up over the years for example truth-conditional semantics, logical semantics, ontological semantics, etc. Which, if any, paradigms of semantics do you think are headed in the right direction as far as getting us closer to an actual science of semantics or will we ever have a formal science of semantics as Montague thought?

Noam Chomsky:

Well, I think there's very rich exciting work in what's called Semantics. It's been one of the most lively fields of theory in linguistics, philosophy, and cognitive science in years. You mentioned Barbara Partee earlier, one of the pioneers in this field, great work.

It's not semantics it's syntactics! It's all study of symbolic manipulations that go on in the mind.

Suppose you do model-theoretic semantics, the kind Barbara Partee does. How do you do model-theoretic semantics? What you do is identify certain individuals and certain predicates and you ask how the predicates are distributed over the individuals under various conditions. What are the individuals? Mental objects, not things in the world; they are mental objects or something. Do they correspond to anything in the world? Very loosely. If you actually look carefully at the mix of words there's a very loose connection to anything in the outside world. Take Aristotle's example, he discusses this, his example is "house". So what's a house? Well, in his metaphysics a house is a combination of form and matter. The matter of a house is the bricks, the timber, things that a physicist could find. The form of the house is the intention of the designer, the characteristic is things that are in the mind. In fact, that's what a house is: the thing could look exactly like a house for a physicist and not be a house. It could be a library, it could be a stable, it could be a paperweight for a giant, you know. It could be anything, because the meaning of every word is largely a matter of our conceptual structures, and that's true for the simplest words that you find.

Actually, the first example that was used in physical philosophy was "river". Heraclitus, a pre-Socratic, asked how you can cross the same river twice. It's a pretty deep question if you think about it. The second time you cross it, it's a totally different physical object. It wasn't the same river when you started looking at it. The form is what we construct in our minds as what constitutes a river. I happen to live in Arizona now, and on my way to the university I cross something called the Rillito River. I have yet to see a drop of water. Old-timers tell me that if you go during the monsoon there's sort of water flowing. But is the Rillito a river? If it got paved over and started to be used for commuting, it would be the Rillito highway. It's the same object, and that's true for every word in the language.

There is simply no semantics in natural language! At least semantics in the sense of Frege (??), Peirce (??), Carnap, Quine, any formal semantics. It just doesn't exist in language.

We have mental operations going on that have some loose relation to the outside world, but it's not "truth" and it's not "reference". Those just don't occur. So what's the best approach to this? In my own view, the most productive approaches are what is called Event Semantics, neo-Davidsonian, developed by Paul Pietroski and Barry Schein and a number of others. It essentially started with a question like: if we say "John read the book quickly", why can we infer that John read the book? Okay, that was the original question, and the proposed answer is that there's an event "reading", there's an agent "John", there's a theme "book", and there's an adverb, the modifier of the event, "quickly". If you analyze it that way, that's just a concatenation and you get the inferences. That's been developed extensively by people like Pietroski and Schein, among others, and it happens to fit very naturally with what, I think, we're coming to understand about pure syntax.
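[Editor's illustration, not part of the interview.] The inference described above can be sketched in a few lines of Python. This is only a toy model under stated assumptions: a sentence is treated as a set of conjuncts about a single event variable `e`, and one sentence is taken to entail another when the second's conjuncts are a subset of the first's. The role labels (`agent`, `theme`) and the names `logical_form` and `entails` are hypothetical and chosen for illustration; they are not taken from Pietroski's or Schein's work.

```python
from typing import FrozenSet, Tuple

# A conjunct is a predicate name followed by its arguments, e.g. ("agent", "e", "John").
Conjunct = Tuple[str, ...]


def logical_form(*conjuncts: Conjunct) -> FrozenSet[Conjunct]:
    """Treat a sentence as an (implicitly existentially closed) conjunction about event e."""
    return frozenset(conjuncts)


def entails(premise: FrozenSet[Conjunct], conclusion: FrozenSet[Conjunct]) -> bool:
    """Dropping conjuncts preserves truth, so a superset of conjuncts entails any subset."""
    return conclusion <= premise


# "John read the book quickly"
with_adverb = logical_form(
    ("reading", "e"),
    ("agent", "e", "John"),
    ("theme", "e", "the book"),
    ("quickly", "e"),
)

# "John read the book"
without_adverb = logical_form(
    ("reading", "e"),
    ("agent", "e", "John"),
    ("theme", "e", "the book"),
)

print(entails(with_adverb, without_adverb))  # True
print(entails(without_adverb, with_adverb))  # False
```

The point of the sketch is that the adverb is just one more conjunct on the event, so the inference from "read the book quickly" to "read the book" falls out of conjunct elimination, while the reverse inference does not go through.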

It seems that pure syntax provides structures of that nature, which fit very naturally into event semantics. But notice that event semantics is syntax. When you talk about an event, it's not anything in the world; it's something that we construct in our minds.

There was a gentleman named Zeno who taught us something about that. How many events are there when I cross a room? As many as you decide to put there. You know, there's no end, up to the power of the continuum. So Event Semantics, I think, is productive as a form of syntax. Then comes another question: how do all these things going on in our mind relate to the outside world? That's one of those questions that I don't think we have any answer to. Now we're back to Galileo's problem. How do we do these things? We don't know. We do them. We do a lot of things, but we have no understanding. We'll probably never have an understanding of it.

Marco Neumann

Alex Shkotin

Marco Neumann

Marco Neumann

Kathryn B. Laskey

Nadin, Mihai

Dear and respected David Poole, Dear and respected Alex Shkotin,

Dear and respected Kathryn Laskey,

Dear and respected colleagues,

I shall serve you (against my stand on the matter) the “Wikipedia recipe”:

In 1936 and 1937, Alonzo Church and Alan Turing showed independently that there can be no answer to the Entscheidungsproblem. They showed that it is impossible for an algorithm to decide whether statements in arithmetic are true or false. For this reason, there can be no solution for the Entscheidungsproblem.

In short: this is what I stated in my initial posting. I shall ask all of you to read Hilbert’s challenge (so that we discuss the same problem, and not different examples), and to read Turing’s paper.

Summary: does there exist a “definite method” that, when given any possible statement in mathematics, can decide whether that statement is true or false? Hilbert believed that the answer to this question would be “yes”. Alan Turing proved that the answer to the decidability problem was “no” (Hodges, 1983; Turing, 1936).

Machines cannot do proofs.

For me this ends the conversation.

Best wishes.

Mihai Nadin

-----Original Message-----

From: ontolo...@googlegroups.com <ontolo...@googlegroups.com> On Behalf Of poole

Sent: Tuesday, August 2, 2022 11:07 PM

To: 'James Davenport' via ontolog-forum <ontolo...@googlegroups.com>

Subject: Re: [ontolog-forum] Why machines will never rule the world

An excellent readable outline for Gödel's proof is the book:

Ernest Nagel and James R. Newman, Gödel's Proof, New York University Press, 2001.

The outline is straightforward. Consider the sentence:

“This statement cannot be proved”

If it is true, the logic is incomplete; if it is false, the logic must be inconsistent.

The only things that this doesn’t apply to are those that can’t represent the statement.

Gödel's proof showed that any system that can do arithmetic can represent this statement, and hence must be either incomplete or inconsistent. If humans are not subject to this, it must be because they cannot do arithmetic (which is not implausible).

This does not mean that machines can’t do proofs. As Kathy said, computer-generated proofs are common; much of the hardware and safety-critical infrastructure we use has been proved correct. The Turing equivalence is that sometimes these proof systems just don’t halt. Kathy’s comments are right on point!
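[Editor's illustration, not part of the original message.] The remark that proof systems sometimes just don't halt can be sketched with a toy semi-decision procedure. This is only an analogy under stated assumptions: "finding a proof" is modelled as searching the natural numbers for a witness, and the function name `search_witness` and its `max_steps` parameter are hypothetical. When a witness exists, the search finds it. When none exists, an unbounded search runs forever, so the step limit can only report "gave up", never "refuted".

```python
from itertools import count
from typing import Callable, Optional


def search_witness(
    predicate: Callable[[int], bool],
    max_steps: Optional[int] = None,
) -> Optional[int]:
    """Return the first natural number n with predicate(n) true.

    With max_steps=None the search halts only if a witness exists.
    With a limit, None means "undetermined within the budget", not "no witness".
    """
    for n in count():
        if max_steps is not None and n >= max_steps:
            return None  # budget exhausted: the question stays open
        if predicate(n):
            return n
    return None  # unreachable: count() never ends


# A witness exists, so even the unbounded search halts.
print(search_witness(lambda n: n * n == 1764))  # 42

# No natural number squares to 2; without max_steps this call would never return.
print(search_witness(lambda n: n * n == 2, max_steps=1000))  # None
```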

David

P.S. I must admit I’m a bit amused about this thread. Machines *do* rule the world. If we got rid of machines, society would fail to function and 90% (99%?) of us would starve to death. Nearly all decisions of society are made by machines; from logistics to manufacturing to advertising to monetary transactions to ….

> On Aug 2, 2022, at 7:59 PM, John F Sowa <so...@bestweb.net> wrote:

>

> I sympathize with the note below, and I deleted comments that I agree with. I don't disagree with the three that remain, but they raise important issues that require more discussion.

>

> 1. The quality of Wikipedia articles depends entirely on the knowledge of the authors and the editors. The quality control is extremely variable. Some of them are bad, misleading, or worse; many are mediocre; many are useful for simple facts; and some are excellent. Unfortunately, even the best can be watered down by editors whose knowledge is mediocre.

>

> I cited a Wikipedia article on GPT-3, even though almost all of it was written by people who had no idea about how GPT-3 works or how to evaluate what it does. But I was very careful to cite three authors who made very good comments and had links to good web pages.

>

> I admit that I should have extracted just the good parts and put them in my note. But I was lazy, it was getting late, and I wanted to go to bed.

>

> 2. I agree that Gödel's original article on undecidability is a classic, which people who use his ideas should study. However, many people who don't understand the implications of that article use it as a weapon to kill very important projects. For many purposes, just citing that article is more dangerous than ignoring it. For a discussion of those issues, see "Fads and fallacies about logic": https://jfsowa.com/pubs/fflogic.pdf

>

> 3. I agree that it's important to define terms. However, the requirement that all terms must be well defined before a dialog begins would kill 99.99% of all conferences, university courses, and Ontolog notes.

>

> John

>

> From: "Nadin, Mihai" na...@utdallas.edu

> Wikipedia is not a reference I accept in a class. Even less in a professional forum.

>

> For undecidability please read:

>

> Gödel, K., 1931, “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I,” Monatshefte für Mathematik Physik, 38: 173–198. English translation in van Heijenoort 1967, 596–616, and in Gödel 1986, 144–195.

>

> Dialog is possible when the terms are well defined.
