Stenger on Initial Low Entropy


Jason Resch

Oct 15, 2020, 6:51:13 PM

to Everything List

I noticed that Victor Stenger's position on entropy, as described here: https://arxiv.org/pdf/1202.4359.pdf on page 7, appears to be the same as described by the cosmologist David Layzer in a 1975 issue of Scientific American: https://static.scientificamerican.com/sciam/assets/media/pdf/2008-05-21_1975-carroll-story.pdf

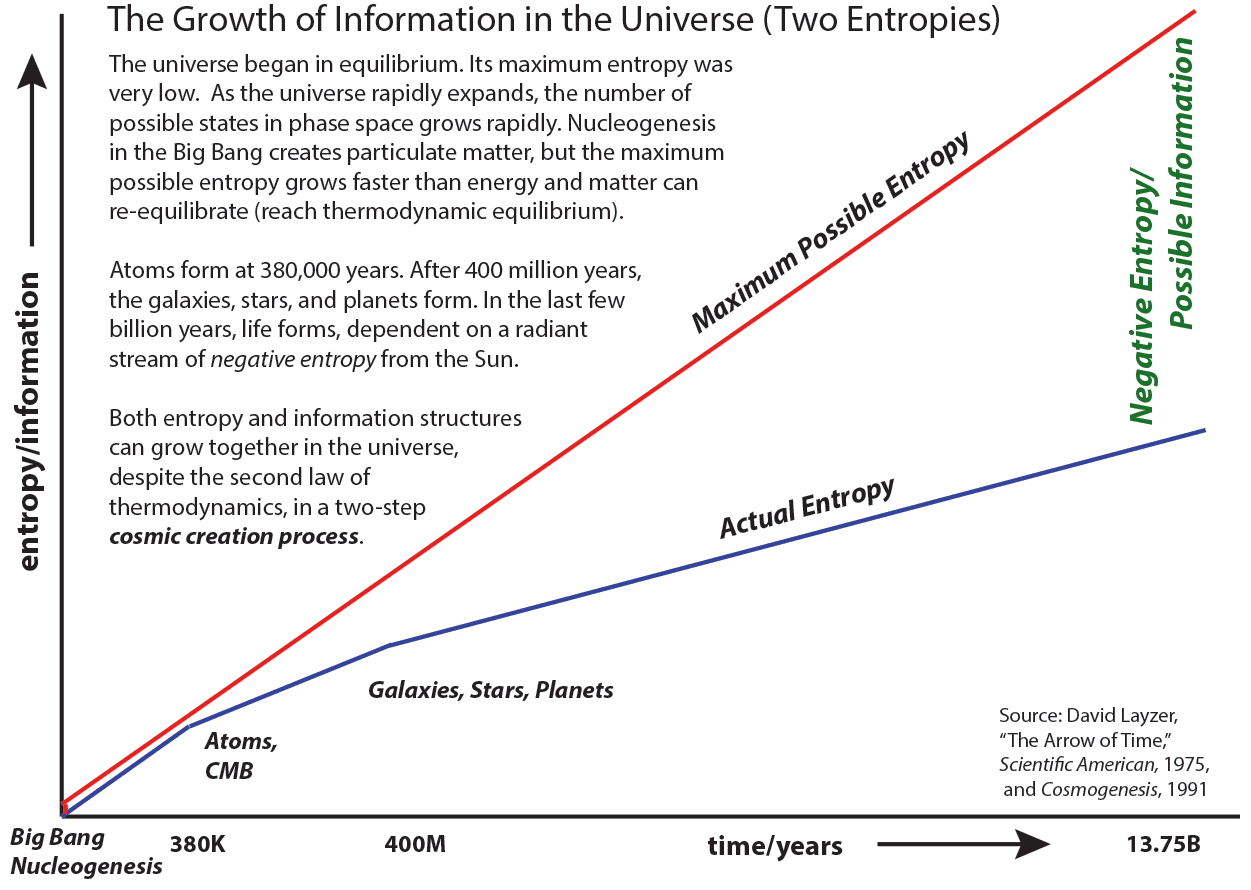

The basic idea is described graphically here: https://www.informationphilosopher.com/solutions/scientists/layzer/arrow_of_time.html

It is a counter-argument to the commonly expressed idea that the universe began in a low-entropy state. Rather, it explains how the expansion of the universe increases the maximum possible entropy. If the universe expands more quickly than equilibrium can be reached, then there is room for complexity (information / negative entropy) to increase.
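A toy sketch of the Layzer/Stenger picture, under loudly stated assumptions that are not Layzer's own equations: the maximum possible entropy `s_max` grows linearly at a made-up `expansion_rate`, and the actual entropy `s` relaxes toward it by a hypothetical first-order law at rate `relax_rate`. Both start equal, i.e. the universe starts at maximum entropy. The point is only qualitative: when the ceiling rises faster than relaxation can follow, the gap `s_max - s` (Layzer's "information") grows, even though `s` itself never decreases.

```python
def layzer_gap(expansion_rate, relax_rate, steps=1000, dt=0.01):
    """Toy model (hypothetical, uncalibrated): S_max rises linearly with the
    expansion, and S relaxes toward S_max at a finite rate.

    Returns the (S, S_max) histories as lists; both start at 1.0.
    """
    s_max = 1.0
    s = 1.0  # start at equilibrium: actual entropy equals the maximum
    s_hist, smax_hist = [s], [s_max]
    for _ in range(steps):
        s_max += expansion_rate * dt         # expansion raises the ceiling
        s += relax_rate * (s_max - s) * dt   # relaxation chases the ceiling
        s_hist.append(s)
        smax_hist.append(s_max)
    return s_hist, smax_hist


for rate in (0.1, 10.0):
    s, s_max = layzer_gap(expansion_rate=rate, relax_rate=1.0)
    print(f"expansion_rate={rate}: final gap S_max - S = {s_max[-1] - s[-1]:.3f}")
```

In this model the gap settles near `expansion_rate / relax_rate`, so faster expansion relative to relaxation leaves more room for order; the numbers themselves mean nothing cosmologically.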

Why is the "low entropy" myth so persistent, and why is this alternative explanation so little known? Some physicists, such as Penrose, are still looking for explanations of the special low-entropy state. What fraction of physicists are aware of Stenger's/Layzer's view? Does it appear in any physics textbooks? Has it been refuted?

Jason

Bruce Kellett

Oct 15, 2020, 7:07:46 PM

to Everything List

It is refuted by the idea of unitary evolution in QM. Unitary evolution means that everything is reversible. If new microstates are created as the universe expands, then this expansion cannot be reversed: the creation of such microstates gives an absolute arrow of time. This is generally rejected, because physicists tend to believe in unitary dynamics. If dynamics are not unitary, then the universe is not governed by the Schrödinger equation, and arguments for the multiverse collapse.
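The sense in which unitary evolution is reversible can be shown with a minimal plain-Python sketch: for any unitary U, applying its conjugate transpose U† undoes U exactly, and the total probability is preserved. The particular 2×2 matrix (a rotation with a relative phase) is an arbitrary example chosen for illustration, not anything from the thread.

```python
import cmath
import math


def matmul(a, b):
    """Multiply two square matrices given as lists of lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def apply(u, psi):
    """Apply matrix u to state vector psi."""
    return [sum(u[i][k] * psi[k] for k in range(len(psi))) for i in range(len(u))]


def dagger(u):
    """Conjugate transpose U†."""
    return [[u[j][i].conjugate() for j in range(len(u))] for i in range(len(u[0]))]


# Arbitrary example unitary: rotation by theta combined with a phase phi.
theta, phi = 0.7, 1.3
U = [[math.cos(theta), -math.sin(theta) * cmath.exp(1j * phi)],
     [math.sin(theta), math.cos(theta) * cmath.exp(1j * phi)]]

psi0 = [0.6, 0.8j]                 # normalized: 0.36 + 0.64 = 1
psi1 = apply(U, psi0)              # evolve "forward in time"
psi_back = apply(dagger(U), psi1)  # evolve "backward" with U†
print(psi_back)                    # recovers psi0 up to rounding
```

Any process that maps a space of states into a strictly larger one cannot be undone in this way by a single unitary on a fixed space, which is the tension the message points to.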

Bruce

Russell Standish

Oct 16, 2020, 12:38:27 AM

to everyth...@googlegroups.com

On Fri, Oct 16, 2020 at 10:07:32AM +1100, Bruce Kellett wrote:

> It is refuted by the idea of unitary evolution in QM. Unitary evolution means that everything is reversible. If new microstates are created as the universe expands, then this expansion cannot be reversed: the creation of such microstates gives an absolute arrow of time. This is generally rejected, because physicists tend to believe in unitary dynamics. If dynamics are not unitary, then the universe is not governed by the Schrödinger equation, and arguments for the multiverse collapse.

I'm not sure the last point follows; perhaps you can expand on it. But it is an interesting argument that the Layzer-style "increase in microstates" should be enough to prevent a Hawking-style "wavefunction of the universe".

Could the ideas be made compatible by having the number of accessible microstates increase over time, due to the expansion of the universe, while the total number remains constant, or is even infinite? Or does that put us right back at the original problem of having a low-entropy initial state?


Bruce Kellett

Oct 16, 2020, 1:49:48 AM

to Everything List

On Fri, Oct 16, 2020 at 3:38 PM Russell Standish <li...@hpcoders.com.au> wrote:

On Fri, Oct 16, 2020 at 10:07:32AM +1100, Bruce Kellett wrote:

> It is refuted by the idea of unitary evolution in QM. Unitary evolution means that everything is reversible. If new microstates are created as the universe expands, then this expansion cannot be reversed: the creation of such microstates gives an absolute arrow of time. This is generally rejected, because physicists tend to believe in unitary dynamics. If dynamics are not unitary, then the universe is not governed by the Schrödinger equation, and arguments for the multiverse collapse.

> I'm not sure the last point follows; perhaps you can expand on it. But it is an interesting argument that the Layzer-style "increase in microstates" should be enough to prevent a Hawking-style "wavefunction of the universe".

I was talking about the Everett-style quantum many worlds. Other types of multiverse (such as the existence of other cosmological Hubble volumes) are not necessarily affected. Hawking's "wave function of the universe" is a definite casualty if unitary evolution is denied.

> Could the ideas be made compatible by having the number of accessible microstates increase over time, due to the expansion of the universe, while the total number remains constant, or is even infinite? Or does that put us right back at the original problem of having a low-entropy initial state?

I don't really understand this. An infinite number of microstates makes little sense in standard thermodynamics.

Bruce

Russell Standish

Oct 16, 2020, 2:03:52 AM

to Everything List

which would definitely be non-standard thermodynamics. But that's never stopped anyone before :).

I was speculating more along the lines of the usual way of reconciling irreversible processes with a reversible multiverse, where the interesting stuff happens in a finite-dimensional subspace of an infinite-dimensional Hilbert space, but that dimensionality grows in time due to "splitting", or "decoherence", or what have you.

Bruce Kellett

Oct 16, 2020, 2:29:39 AM

to Everything List

Decoherence does not increase the relevant number of dimensions of Hilbert space. If you do a spin measurement, the up and down branches entangle with the environment through decoherence, but there are still really only two primary bundles of states - the operative Hilbert space (as far as the original measurement is concerned) is still two-dimensional. The number of dimensions in the original problem does not magically increase.

If you want to see decoherence as an indication of irreversibility, then that is fine. But that is not what Everett says. To get irreversibility into unitary QM you have to introduce a real collapse process, such as GRW, or some of the branches resulting from decoherence have to be traced over (coarse-grained).
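Both claims in this message can be illustrated with a minimal two-qubit sketch in plain Python. The assumptions are deliberately crude: a single environment qubit stands in for the whole environment, and a CNOT gate stands in for the decoherence interaction. The system's reduced density matrix stays 2×2, so the operative space does not grow. Its off-diagonal terms vanish and its von Neumann entropy rises from 0 to ln 2 only once the environment is traced over, while the global state stays pure and the CNOT can be undone.

```python
import math


def cnot(psi):
    """CNOT with the system qubit as control and the environment qubit as
    target (toy stand-in for decoherence). Basis index = 2*s + e."""
    out = list(psi)
    out[2], out[3] = psi[3], psi[2]  # flip e when s = 1
    return out


def reduced_system(psi):
    """Partial trace over the environment: the system's 2x2 density matrix."""
    return [[sum(psi[2 * s + e] * psi[2 * t + e].conjugate() for e in range(2))
             for t in range(2)] for s in range(2)]


def von_neumann_entropy(rho):
    """-sum(p ln p) over the eigenvalues of a 2x2 Hermitian matrix, in nats."""
    a, b, d = rho[0][0].real, rho[0][1], rho[1][1].real
    disc = math.sqrt((a - d) ** 2 + 4 * abs(b) ** 2)
    eigs = [(a + d + disc) / 2, (a + d - disc) / 2]
    return -sum(p * math.log(p) for p in eigs if p > 1e-12)


r = 1 / math.sqrt(2)
psi = [r, 0.0, r, 0.0]   # system in (|0> + |1>)/sqrt(2), environment in |0>
after = cnot(psi)        # entangled: (|00> + |11>)/sqrt(2)
print(von_neumann_entropy(reduced_system(psi)))    # about 0
print(von_neumann_entropy(reduced_system(after)))  # about ln 2
print(cnot(after) == psi)                          # the global step is undone
```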

Bruce

Alan Grayson

Oct 16, 2020, 2:47:16 AM

to Everything List

If the very early universe is a hot photon gas, wouldn't that be a very high entropy initial condition? Why would anyone think the initial state is low entropy? AG
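To put a rough number on a "hot photon gas", here is a sketch assuming ordinary blackbody radiation and ignoring gravity entirely. The entropy density is s = (4π²/45) k_B (k_B T/ħc)³, so a photon gas's entropy scales as V T³. Constants are CODATA SI values. The 2.725 K below is today's CMB temperature, used only as a familiar reference point and not as a claim about the early universe.

```python
import math

# Physical constants (SI, CODATA values)
K_B = 1.380649e-23      # Boltzmann constant, J/K
HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 2.99792458e8        # speed of light, m/s


def photon_entropy_density(T):
    """Entropy density of blackbody radiation in units of k_B per m^3:
    s / k_B = (4 pi^2 / 45) * (k_B T / (hbar c))^3. Gravity is ignored."""
    return (4 * math.pi ** 2 / 45) * (K_B * T / (HBAR * C)) ** 3


print(f"{photon_entropy_density(2.725):.3e} k_B per m^3")
# Doubling the temperature multiplies the entropy density by 2**3 = 8.
print(photon_entropy_density(2 * 2.725) / photon_entropy_density(2.725))
```

This count includes only the radiation's own degrees of freedom. Whether it is "high" depends on what it is compared with, and that comparison is exactly where gravitational degrees of freedom come in.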

Jason Resch

Oct 16, 2020, 2:49:29 AM

to Everything List

I understand unitarity for a fixed physical system with certain finite boundaries. But how does that work for an expanding universe? If you define the wave function for the observable universe at time 1, what is the wave function at time 2? Doesn't the number of possible states at time 2 increase beyond what it was at time 1, given that new information has entered the system from the cosmological horizon?

Also, I think we can borrow a lesson from quantum computing to shed some light on the problem of irreversibility and entropy. Quantum computers need to use reversible logic gates to prevent premature decoherence. Reversible circuits generate garbage (ancilla) bits as a result of the continued operation of the computation. (see https://en.wikipedia.org/wiki/Ancilla_bit and https://quantumcomputing.stackexchange.com/questions/1185/why-is-it-important-to-eliminate-the-garbage-qubits ).

If we extend this analogy to the universe, can we envision the rise of complexity/macroscopic order as analogous to the locally growing order of a reversible computation, which must generate waste heat ("garbage/ancilla bits") leading to a global rise in entropy? So long as there are enough places to dump these ancilla bits (such as into the low-temperature, non-equilibrium environment), there is space for local order to grow through reversible computation.
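The garbage-bit point can be made concrete with a minimal Python sketch of the Toffoli (controlled-controlled-NOT) gate, a standard reversible gate. It computes AND into an ancilla bit initialised to 0 and carries its inputs along as extra outputs. Those extra outputs are what keep the map one-to-one. The sketch uses classical bits only: it illustrates reversibility, not quantum coherence.

```python
from itertools import product


def toffoli(a, b, c):
    """Reversible Toffoli (CCNOT) gate: flips c iff a and b are both 1."""
    return a, b, c ^ (a & b)


def irreversible_and(a, b):
    """Ordinary AND: two bits in, one bit out, so information is lost."""
    return a & b


states = list(product((0, 1), repeat=3))
images = [toffoli(*s) for s in states]

# Toffoli permutes the 8 three-bit states, so it is reversible ...
assert sorted(images) == sorted(states)
# ... and it is its own inverse.
assert all(toffoli(*toffoli(*s)) == s for s in states)

# With the ancilla c set to 0, the third output is a AND b, while a and b
# survive as "garbage" outputs that keep the map invertible.
for a, b in product((0, 1), repeat=2):
    assert toffoli(a, b, 0)[2] == irreversible_and(a, b)

# Plain AND maps four inputs onto only two outputs, so it cannot be inverted.
print(len({irreversible_and(a, b) for a, b in product((0, 1), repeat=2)}))  # 2
```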

Jason

Jason Resch

Oct 16, 2020, 3:42:25 AM

to Everything List

Entropy could begin at or near its maximum, but if the maximum entropy grows faster than the actual entropy, there is room for entropy to grow. See: https://informationphilosopher.com/solutions/scientists/layzer/

Jason

Lawrence Crowell

Oct 16, 2020, 6:37:18 AM

to Everything List

With quantum fields in spacetime there is no equilibrium. Think of a black hole of mass M and temperature T = ħc^3/(8πkGM), or T = 1/(8πm) in geometric units, in a background region with equal temperature. The black hole can absorb or emit a unit of mass δm, m → m ± δm, so the temperature changes as T → T ∓ δT. This means the temperature will always drift away from any equilibrium temperature. There is then no thermal equilibrium or maximum entropy.
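The runaway argument rests on the sign of dT/dM. A minimal sketch using SI units and CODATA constants (the solar-mass example is just a reference point) shows T ∝ 1/M. A black hole that absorbs mass therefore cools, and one that emits mass heats up: a negative heat capacity, which is why it drifts away from equilibrium with a surrounding bath.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 2.99792458e8        # speed of light, m/s
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
K_B = 1.380649e-23      # Boltzmann constant, J/K
M_SUN = 1.98847e30      # nominal solar mass, kg (example value)


def hawking_temperature(mass_kg):
    """Hawking temperature T = hbar c^3 / (8 pi G M k_B), in kelvin."""
    return HBAR * C ** 3 / (8 * math.pi * G * mass_kg * K_B)


T0 = hawking_temperature(M_SUN)
T_heavier = hawking_temperature(M_SUN * 1.01)  # absorbed 1% more mass
T_lighter = hawking_temperature(M_SUN * 0.99)  # emitted 1% of its mass
print(f"T(solar mass) = {T0:.3e} K")
print(T_lighter > T0 > T_heavier)  # True: heat capacity is negative
```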

The only way to prevent a black hole from this sort of runaway is to place it in a box, so that Hawking radiation it emits is reflected back to the black hole and reabsorbed. The only ideal box is anti-de Sitter (AdS) spacetime. This then leads to the black hole quantum states being entangled with the boundary fields of the AdS, which by Maldacena's AdS/CFT result are dual to a quantum conformal field theory (CFT) of one dimension less. If the AdS is three-dimensional, this is AdS_3 ≃ CFT_2, which is the Virasoro algebra of the bosonic string; this case corresponds to the BTZ black hole in AdS_3. For AdS_4 we have CFT_3, which is an N = 2 supersymmetric Yang-Mills CFT. For AdS_5 this is CFT_4, the N = 4 SYM CFT.

This leads to a bit of a conundrum. AdS has Λ < 0, which in the case of the bosonic string defines its negative vacuum plus tachyon state. Yet clearly we do not live in this sort of universe. I recently published a paper, though, that illustrates how the vacuum between two black holes in near collision is approximately AdS_4. We live in a spacetime with Λ ≥ 0, with equality only a local approximation. This is the Vafa swampland situation, where the de Sitter(-like) spacetime we observe, say FLRW, is some broken-symmetry physics from the AdS. Interestingly, this involves some increase in vacuum energy. An AdS spacetime has causal regions separated by timelike boundaries, and on these boundaries are CFTs. These may include gauge-like gravity with the emergence of an induced metric with Λ ≥ 0. Also, hyperbolic subsets in AdS, analogous to arcs in the Poincaré half-plane or disk, may be defects with a Lanczos junction condition for a positive vacuum there. This would then be a holographic screen corresponding to de Sitter spacetime. This might then be the dS spacetime of eternal inflation.

This leads back to the entropy condition of the early universe. The entropy of the inflationary spacetime is determined by the area of the local horizon. This is really very small, ≃ 10^{10} Planck areas at most. Remember that in QFT in curved spacetime the area of a horizon or holographic screen measures its entropy. A local region of this spacetime then quantum tunnels into a false vacuum of much lower energy, corresponding to the cosmological constant we observe. The entropy of the fields was then much smaller than the entropy bound of the horizon scale expanded to 10^{10} light years. This gives entropy lots of room to grow; it is reflected in the mass gap between the inflationary false vacuum and the physical vacuum, which sets off reheating. This generates radiation and particles. So the upshot is that the entropy of the early universe was set by the inflationary dS spacetime, but the region quantum transitioned into one with a much lower temperature. Hence there was a huge disequilibrium that defined the resulting big bang.
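The comparison above uses the horizon-area rule S = A/(4 l_P²), with entropy in units of k_B. A short sketch evaluates it for a horizon of radius 10^10 light years, the scale quoted above. The constants are CODATA SI values, and treating that radius as a sharp spherical horizon is a simplifying assumption.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 2.99792458e8        # speed of light, m/s
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
LIGHT_YEAR = 9.4607e15  # metres

PLANCK_LENGTH = math.sqrt(HBAR * G / C ** 3)  # about 1.6e-35 m


def horizon_entropy(radius_m):
    """Bekenstein-Hawking entropy S / k_B = A / (4 l_P^2) of a sphere of given radius."""
    area = 4 * math.pi * radius_m ** 2
    return area / (4 * PLANCK_LENGTH ** 2)


# Simplifying assumption: a sharp horizon at 10^10 light years.
print(f"{horizon_entropy(1e10 * LIGHT_YEAR):.2e}")  # roughly 1e122
```

A result of order 10^122, set against an inflationary horizon of about 10^10 Planck areas, is the "room to grow" the argument relies on.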

LC

Lawrence Crowell

Oct 16, 2020, 6:50:38 AM

to Everything List

Sort of, but here this is set by inflation. The patch of the dS spacetime corresponding to the observable universe quantum tunnels from a low-entropy configuration into a spacetime with a very high entropy bound. This departure was set in motion around the first 10^{-35} s of this world and lasted 10^{-30} s.

LC

Bruce Kellett

Oct 16, 2020, 7:19:14 AM

to Everything List

On Fri, Oct 16, 2020 at 5:49 PM Jason Resch <jason...@gmail.com> wrote:

> I understand unitarity for a fixed physical system with certain finite boundaries. But how does that work for an expanding universe? If you define the wave function for the observable universe at time 1, what is the wave function at time 2? Doesn't the number of possible states at time 2 increase beyond what it was at time 1, given that new information has entered the system from the cosmological horizon?

If there is a unitary operator that takes the wave function at time 1 to the wave function at time 2, then the evolution is unitary and reversible. Horizons play no part in this.

> Also, I think we can borrow a lesson from quantum computing to shed some light on the problem of irreversibility and entropy. Quantum computers need to use reversible logic gates to prevent premature decoherence. Reversible circuits generate garbage (ancilla) bits as a result of the continued operation of the computation. (see https://en.wikipedia.org/wiki/Ancilla_bit and https://quantumcomputing.stackexchange.com/questions/1185/why-is-it-important-to-eliminate-the-garbage-qubits).

> If we extend this analogy to the universe, can we envision the rise of complexity/macroscopic order as analogous to the locally growing order of a reversible computation, which must generate waste heat ("garbage/ancilla bits") leading to a global rise in entropy? So long as there are enough places to dump these ancilla bits (such as into the low-temperature, non-equilibrium environment), there is space for local order to grow through reversible computation.

The quantum process of generating the ancilla bits is unitary, hence reversible. If these bits are treated as garbage and thrown away, then the result is irreversibility. No new space for bits is created.

Bruce

Lawrence Crowell

Oct 16, 2020, 7:53:50 AM

to Everything List

A Turing machine replaces symbols on a tape. This is erasure, which is irreversible. A quantum computer is governed by a Hamiltonian, which in classical mechanics defines a surface of constant energy. Hence, for a Turing machine to be reversible, it requires ancillary tapes or registers that send "erased information" into a record that can be accessed in a reversible way. A quantum computer, in principle a quantized Turing machine, requires the same. These are the trash qubits, which are required in order to maintain the fidelity of the wave function.
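The difference between plain erasure and erasure with an ancillary record can be shown in a few lines of Python. The helper names (`erase`, `erase_with_record`, `unerase`) are hypothetical, chosen for illustration. Plain erasure is many-to-one. Pushing the old symbol onto a record tape first keeps the step one-to-one, so it can be undone.

```python
def erase(symbol):
    """Plain erasure (hypothetical helper): every symbol is overwritten with 0."""
    return 0


def erase_with_record(symbol, record):
    """Reversible erasure (hypothetical helper): push the old symbol onto an
    ancillary record tape, then overwrite the cell with 0."""
    return 0, record + (symbol,)


def unerase(symbol, record):
    """Inverse of erase_with_record: pop the saved symbol back onto the tape."""
    assert symbol == 0 and record, "can only undo a recorded erasure"
    return record[-1], record[:-1]


# Plain erasure sends both 0 and 1 to 0, so the input cannot be recovered.
print({erase(s) for s in (0, 1)})  # {0}

# With a record tape, distinct inputs give distinct outputs, and the step undoes.
for s in (0, 1):
    out = erase_with_record(s, ())
    assert unerase(*out) == (s, ())
```

The growing `record` tuple plays the role of the trash tape or trash qubits: reversibility is bought by keeping it.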

LC

Alan Grayson

Oct 18, 2020, 4:12:51 AM

to Everything List

For a given volume, the entropy is what it is, related to the number of possible microstates as given by Boltzmann's formula. If the volume increases, the entropy increases, and it starts at a maximum level set by the volume of the very early universe. So I see no way of distinguishing the Actual Entropy from the Maximum Possible Entropy. AG
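The Boltzmann-formula reasoning, S = k ln W, can be illustrated with a toy count. Assumption: N distinguishable, non-interacting particles are placed independently in M equal cells, with gravity ignored, so W = M^N. Doubling the volume doubles M and adds N ln 2 to S/k. This is the familiar ideal-gas free-expansion result ΔS = N k ln(V2/V1).

```python
import math


def boltzmann_entropy(n_particles, n_cells):
    """S / k = ln W for n_particles distinguishable, non-interacting particles
    placed independently in n_cells equal cells (toy model, no gravity),
    so W = n_cells ** n_particles."""
    return n_particles * math.log(n_cells)  # ln(M**N) without forming M**N


N = 100
s_small = boltzmann_entropy(N, 1000)
s_large = boltzmann_entropy(N, 2000)  # volume doubled: twice as many cells
print(s_large - s_small, N * math.log(2))  # equal up to rounding
```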

John Clark

Oct 18, 2020, 8:09:39 AM

to everyth...@googlegroups.com

On Sun, Oct 18, 2020 Alan Grayson <agrays...@gmail.com> wrote:

> If the very early universe is a hot photon gas, wouldn't that be a very high entropy initial condition?

That would be true if gravity were not an important factor, as is the case for most experiments we can perform in the lab, where gravity can be safely ignored. But in the very early universe gravity was enormously important and cannot be ignored. The early universe was very smooth but, due to random quantum variations, not perfectly smooth, so some parts of the universe had very slightly more mass/energy than other parts. As time progressed, because of gravity, the slightly denser regions pulled slightly more particles into themselves than the slightly less dense regions did, and so those tiny variations grew larger. As a result of that growth, entropy increased, and the universe never again reached the very low entropy level it had when it was young.

> For a given volume, the entropy is what it is, related to the number of possible microstates as given by Boltzmann's formula. If the volume increases, the entropy increases, and it starts at a maximum level set by the volume of the very early universe. So I see no way of distinguishing the Actual Entropy from the Maximum Possible Entropy. AG

The point is that if the universe is expanding (and accelerating), then there is no such thing as the universe's Maximum Possible Entropy: whatever entropy value you give me, no matter how large, I can show you a time when the universe will have an even larger entropy.

John K Clark

Alan Grayson

Oct 18, 2020, 11:11:15 AM

to Everything List

On Sunday, October 18, 2020 at 6:09:39 AM UTC-6, John Clark wrote:

>> If the very early universe is a hot photon gas, wouldn't that be a very high entropy initial condition?

> That would be true if gravity were not an important factor, as is the case for most experiments we can perform in the lab, where gravity can be safely ignored. But in the very early universe gravity was enormously important and cannot be ignored.

In Boltzmann's formula for entropy, gravity isn't a parameter. I think entropy only depends on the number of possible microstates, and therefore on the volume. AG

> The point is that if the universe is expanding (and accelerating), then there is no such thing as the universe's Maximum Possible Entropy: whatever entropy value you give me, no matter how large, I can show you a time when the universe will have an even larger entropy.

The concept of Maximum Possible Entropy was introduced by Jason, via his reference, and shown schematically in his diagram. Supposedly it can be compared with actual entropy at any time. I have no idea what this means. AG


Jason Resch

Oct 18, 2020, 12:30:23 PM

to Everything List

Thanks, Lawrence, for your detailed reply. It is helpful: although I was not familiar with most of the terminology, the last paragraph made sense to me.

Jason

Jason Resch

Oct 18, 2020, 12:33:44 PM

to Everything List

But according to the Bekenstein bound, the maximum possible entropy of a system is bounded by its mass/energy AND its volume. Two particles by themselves can encode an infinite amount of information if given infinite space to place them in.

Wouldn't expanding the available volume for a system increase the number of bit-combinations you can work with?

Jason

Jason Resch

Oct 18, 2020, 12:39:43 PM

to Everything List

So, to make the analogy of "our universe as a quantum computer": as the volume of space expands (even for constant mass/energy), more ways of encoding bits become available, and hence more places to stuff the garbage information in ancilla bits.

Whereas in a spherical volume with a 1 meter radius a single photon with a wavelength of 1 meter might encode 1 bit of information (it exists or not), in a volume with a 2 meter radius that same photon can encode two bits of information for its location (north/south and west/east hemispheres).

The more places you gain to stuff trash bits, the more room there is for the computational process to continue and generate complexity and useful information.
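The counting can be made slightly more explicit with a rough sketch. Assumption, not from the thread: the photon's position is resolved only to cubic cells whose side equals its wavelength. Then the number of distinguishable locations scales as (R/λ)³, and the bits needed scale as log2 of that. Under this model, doubling the radius adds 3 bits rather than the 1 extra bit of the hemisphere example. The qualitative point, that more volume means more ways to encode bits, is the same.

```python
import math


def location_bits(radius_m, wavelength_m):
    """Rough bits needed to say which cell a photon occupies (hypothetical model).

    Assumes the sphere is divided into cubic cells whose side equals the
    photon wavelength. Returns log2(number of cells), never below 0.
    """
    sphere_volume = 4 / 3 * math.pi * radius_m ** 3
    n_cells = max(1.0, sphere_volume / wavelength_m ** 3)
    return math.log2(n_cells)


# Doubling the radius multiplies the volume, and hence the cell count, by 8,
# so under this model the position record gains log2(8) = 3 bits.
print(location_bits(2.0, 1.0) - location_bits(1.0, 1.0))
```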

Is there anything wrong with the above view?

Jason

Jason Resch

Oct 18, 2020, 12:48:33 PM

to Everything List

This article explains the concept in detail for why the total entropy of the system might not grow as quickly as the maximum possible entropy of the system: https://www.informationphilosopher.com/solutions/scientists/layzer/growth_of_order/

"3. The evolution of an isolated system composed of a large number of gravitating particles generates information. In such a system the central density and temperature increase steadily, while the peripheral regions expand and become less dense. Thus a system of this kind evolves away from the maximum-entropy state appropriate to its energy, mass, and radius. A spherical system of gravitating particles confined by a reflecting spherical wall will evolve toward a stable equilibrium configuration if the ratio of the central density to the surface density in this configuration is less than a certain critical value. If the ratio exceeds this value, the equilibrium configuration is unstable and the core will continue to collapse indefinitely."

Jason

John Clark

Oct 18, 2020, 2:44:45 PM

to everyth...@googlegroups.com

On Sun, Oct 18, 2020 at 11:11 AM Alan Grayson <agrays...@gmail.com> wrote:

> In Boltzmann's formula for entropy, gravity isn't a parameter.

Gravity can be ignored in some situations, such as the experiments Boltzmann could have performed in his lab, but not in others, such as the very early universe, or the present day when a large cloud of gas and dust has only just started to form a star. Consistent with quantum mechanics, every part of the very early universe was as dense as any other part; in a world that obeys the laws of quantum mechanics, if things were that smooth there was only one state the universe could have been in, and therefore it had to have extremely low entropy. When things started to clump together the entropy increased, and it has been increasing ever since.

> I think entropy only depends on the number of possible microstates, and therefore on the volume. AG

That is correct, but science marches on, and now we know something Boltzmann did not: in our expanding, accelerating universe new volume is constantly being created, so a maximum entropy level will never be reached and the second law of thermodynamics will ALWAYS remain true. Entropy will NEVER stop increasing.

John K Clark


--

You received this message because you are subscribed to the Google Groups "Everything List" group.

To unsubscribe from this group and stop receiving emails from it, send an email to everything-li...@googlegroups.com.

To view this discussion on the web visit https://groups.google.com/d/msgid/everything-list/198293d3-75a7-48c9-9dd6-c7bc31e328aao%40googlegroups.com.

John Clark

Oct 18, 2020, 2:51:40 PM

to everyth...@googlegroups.com

On Sun, Oct 18, 2020 at 12:33 PM Jason Resch <jason...@gmail.com> wrote:

> But according to the Bekenstein bound, the maximum possible entropy of a system is bounded by its mass/energy AND its volume.

The problem with the Bekenstein bound is that it assumes General Relativity will never need to be modified, regardless of how small a region of spacetime is under consideration, and that is unlikely to be true. We won't know for sure until somebody finds a quantum theory of gravity.

John K Clark

Bruce Kellett

Oct 18, 2020, 7:53:43 PM

to Everything List

The maximum entropy state for a given mass-energy occurs when the entire system forms a black hole. Increasing the volume of space around this BH does not affect the entropy -- it is already at its maximum. The only way to increase this maximum is to increase the amount of mass-energy available -- and simply expanding the universe (available volume) does not do this!

Bruce

Jason Resch

Oct 18, 2020, 8:21:17 PM

to Everything List

I think it's the other way around: a black hole is the maximum entropy for a given volume, not for a given mass-energy. At least that's what Bekenstein's equation implies:

Entropy is bounded by (Radius * Energy * (a constant)), i.e. S ≤ 2πkRE/(ħc).
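Both readings of the bound in this exchange can be checked numerically. This sketch assumes the standard form S ≤ 2πkRE/(ħc), with SI units, CODATA constants, and an arbitrary example mass. At fixed E the bound itself grows linearly with R. At R equal to the Schwarzschild radius it coincides exactly with the Bekenstein-Hawking black-hole entropy 4πGM²/(ħc). Whether ordinary matter spread over a larger R can actually attain the bound is a separate question the sketch does not address.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 2.99792458e8        # speed of light, m/s
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2


def bekenstein_bound(radius_m, energy_j):
    """Bekenstein bound on entropy, S / k_B <= 2 pi R E / (hbar c)."""
    return 2 * math.pi * radius_m * energy_j / (HBAR * C)


def black_hole_entropy(mass_kg):
    """Bekenstein-Hawking entropy S / k_B = 4 pi G M^2 / (hbar c)."""
    return 4 * math.pi * G * mass_kg ** 2 / (HBAR * C)


M = 1.0e30                # arbitrary example mass, kg
E = M * C ** 2            # its rest energy, J
r_s = 2 * G * M / C ** 2  # Schwarzschild radius, m

# At fixed energy the bound grows linearly with R ...
print(bekenstein_bound(2 * r_s, E) / bekenstein_bound(r_s, E))  # 2.0
# ... and at R = r_s it equals the black-hole entropy.
print(bekenstein_bound(r_s, E) / black_hole_entropy(M))  # 1.0 up to rounding
```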

Jason


Bruce Kellett

Oct 18, 2020, 8:31:01 PM

to Everything List

Nah. You have interpreted that wrongly. If you compress a given amount of mass-energy into a smaller and smaller volume, a black hole will form when the radius reaches the Schwarzschild radius. This then represents the maximum entropy state for that fixed amount of mass-energy. Do not forget that entropy works rather differently in GR.

The Bekenstein bound merely limits the amount of mass-energy (hence entropy) in a given volume (or the minimum volume that a fixed amount of mass-energy can be squeezed into). The only way to increase that minimum volume is to increase the mass-energy. The volume of the surrounding space is irrelevant.

Bruce

Lawrence Crowell

Oct 18, 2020, 8:31:52 PM

to Everything List

On Sunday, October 18, 2020 at 10:11:15 AM UTC-5 agrays...@gmail.com wrote:

> In Boltzmann's formula for entropy, gravity isn't a parameter. I think entropy only depends on the number of possible microstates, and therefore on the volume. AG

Totally wrong. Entropy is given by the area of a black hole, where the area is A = 4πr^2 and r = 2GM/c^2, or just 2m in geometric units. Gravity is equivalent to temperature, and T = 1/(8πm).
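These formulas are in units with G = c = ħ = k_B = 1, where r = 2m and A = 4πr². Using the standard Bekenstein-Hawking normalisation S = A/4 (added here; the message only says entropy is given by the area), S = 4πm² and T = 1/(8πm). A minimal sketch checks that these are mutually consistent via the first law dS/dm = 1/T, with both sides equal to 8πm.

```python
import math


def bh_entropy(m):
    """Black-hole entropy in units G = c = hbar = k_B = 1.

    Assumes the Bekenstein-Hawking normalisation S = A / 4,
    with A = 4 pi r^2 and r = 2m, so S = 4 pi m^2.
    """
    r = 2 * m
    area = 4 * math.pi * r ** 2
    return area / 4


def bh_temperature(m):
    """Hawking temperature in the same units: T = 1 / (8 pi m)."""
    return 1 / (8 * math.pi * m)


# First-law check: dS/dm should equal 1/T; estimate dS/dm by a central difference.
m, h = 3.0, 1e-6
dS_dm = (bh_entropy(m + h) - bh_entropy(m - h)) / (2 * h)
print(dS_dm, 1 / bh_temperature(m))  # both approximately 8 * pi * m
```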

LC

Jason Resch

Oct 18, 2020, 9:07:08 PM

to Everything List

You're contradicting the equation. R can increase arbitrarily, which raises the bound arbitrarily high.

> The Bekenstein bound merely limits the amount of mass-energy (hence entropy) in a given volume (or the minimum volume that a fixed amount of mass-energy can be squeezed into). The only way to increase that minimum volume is to increase the mass-energy. The volume of the surrounding space is irrelevant.

The black hole entropy equation is different from the Bekenstein bound. The black hole is an edge case of the equation, which in its most general form relates volume and energy to a maximum entropy.

Jason


Bruce Kellett

Oct 18, 2020, 9:47:02 PM

to Everything List

On Mon, Oct 19, 2020 at 12:07 PM Jason Resch <jason...@gmail.com> wrote:

> You're contradicting the equation. R can increase arbitrarily, which raises the bound arbitrarily high.

The Bekenstein bound states that the maximum mass-energy that can be held in any particular volume is given when that volume is a black hole. The only way to increase the radius of a BH is to increase its mass. If you take a fixed energy, you can fit this in any volume larger than that of the related BH. But the entropy is maximum for that mass-energy if it is in the form of a black hole.

The Bekenstein bound merely limits the amount of mass-energy (hence entropy) in a given volume (or the minimum volume that a fixed amount of mass-energy can be squeezed into). The only way to increase that minimum volume is to increase the mass-energy. The volume of the surrounding space is irrelevant.

The black hole entropy equation is different from the Bekenstein bound. The black hole is an edge case of the equation, which in its most general form relates volume and energy to a maximum entropy.

The Bekenstein bound simply refers to the maximum energy in any particular volume. That maximum is reached when the mass forms a black hole of the given radius.

Remember that entropy is basically related to the volume of phase space, not of ordinary space. And phase space relates to the number of particles (hence mass-energy). Spatial volume is essentially irrelevant for volumes greater than that of the corresponding black hole.

Bruce

Jason Resch

Oct 18, 2020, 10:09:10 PM10/18/20

to Everything List

On Sun, Oct 18, 2020, 8:47 PM Bruce Kellett <bhkel...@gmail.com> wrote:

The Bekenstein bound states that the maximum mass-energy that can be held in any particular volume is given when that volume is a black hole.

I agree that black hole density is the largest possible mass for a given volume.

However, I disagree with that characterization of the Bekenstein bound. The Bekenstein bound isn't about what volume a black hole of given mass will have; that was known well before Bekenstein.

The Bekenstein bound simply refers to the maximum energy in any particular volume.

You must be thinking of something else.

That maximum is reached when the mass forms a black hole of the given radius.

True, but this isn't what the Bekenstein bound is.

Remember that entropy is basically related to the volume of phase space, not of ordinary space. And phase space relates to the number of particles (hence mass-energy). Spatial volume is essentially irrelevant for volumes greater than that of the corresponding black hole.

No. Consider an infinite length. With a single atom you can encode infinite information through placement of the atom along that length. This is with finite mass energy, but unrestricted spatial volume.

Jason


Bruce Kellett

Oct 18, 2020, 11:33:42 PM10/18/20

to Everything List

That does not encode infinite information. There is, after all, only one particle, and it can have only one position. If you want to encode more information, you need more particles. You might need an infinite number of bits to encode the position of one particle as a real number, but the single particle cannot encode this.

An arbitrary volume can only hold a limited amount of energy, or entropy, as given by the Bekenstein bound. But the maximum entropy for a particular mass is given when that mass forms a black hole -- which saturates the Bekenstein bound. Increasing the volume does not increase the actual entropy unless you simultaneously increase the mass. In terms of the cosmological problem, the initial state has a particular total mass, and that does not increase with the expansion of the universe. Consequently, the maximum possible entropy does not increase either. The point of the Past Hypothesis is that the initial state of this mass was of low entropy since the gravitational degrees of freedom were not saturated (it did not form a black hole), so there is a large amount of room available for the entropy to increase.

Bruce

Jason Resch

Oct 19, 2020, 12:31:21 AM10/19/20

to Everything List

On Sun, Oct 18, 2020, 10:33 PM Bruce Kellett <bhkel...@gmail.com> wrote:

That does not encode infinite information. There is, after all, only one particle, and it can have only one position. If you want to encode more information, you need more particles. You might need an infinite number of bits to encode the position of one particle as a real number, but the single particle cannot encode this.

This is plainly false. Every 1 mile distance that particle is placed along the line encodes a unique number. Travel up to 2^N miles and you can encode N bits. With infinite range there's no upper bound.
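Jason's counting argument can be checked with a few lines of Python. This is a minimal sketch under an idealization the thread disputes: it assumes every 1-mile slot along the line is a perfectly distinguishable state, and it ignores any energy or quantum cost of resolving the particle's position. The function name `bits_for_positions` is hypothetical.

```python
import math

def bits_for_positions(n_positions: int) -> float:
    """Bits needed to single out one of n equally likely, distinguishable positions.

    Idealized classical count: assumes every slot can be resolved exactly.
    """
    if n_positions < 1:
        raise ValueError("need at least one position")
    return math.log2(n_positions)

# A particle that may sit at any of 2**N one-mile marks picks out one of 2**N states.
for n_bits in (1, 10, 64):
    positions = 2 ** n_bits
    print(f"{positions} positions -> {bits_for_positions(positions):.0f} bits")
```

The count grows without bound as the line lengthens; whether a single physical particle can actually carry those bits is the point disputed in the following replies.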

Or think of a grid of naughts and crosses, with a larger grid but fixed number of crosses, the number of possible combinations for drawing a fixed number of crosses still increases with more spaces to place them.

An arbitrary volume can only hold a limited amount of energy, or entropy, as given by the Bekenstein bound.

Energy isn't the same thing as entropy.

But the maximum entropy for a particular mass is given when that mass forms a black hole -- which saturates the Bekenstein bound.

The bound is always satisfied. Black holes just reach the maximum of the bound at a given VOLUME.

Increasing the volume does not increase the actual entropy unless you simultaneously increase the mass.

You keep saying this but don't provide any justification or sources. I implore you to read the Wikipedia article and, if it is wrong, please point me to a source with the right/corrected equation.

As explained on that page, the bound is not limited to black holes, it says something more general which relates entropy bounds to the product of spherical radius and mass.

Jason


Bruce Kellett

Oct 19, 2020, 12:55:47 AM10/19/20

to Everything List

On Mon, Oct 19, 2020 at 3:31 PM Jason Resch <jason...@gmail.com> wrote:

This is plainly false. Every 1 mile distance that particle is placed along the line encodes a unique number. Travel up to 2^N miles and you can encode N bits. With infinite range there's no upper bound.

A single particle can be in only one place, and encode only one bit.

Or think of a grid of naughts and crosses, with a larger grid but fixed number of crosses, the number of possible combinations for drawing a fixed number of crosses still increases with more spaces to place them.

Each combination encodes only one combination.

An arbitrary volume can only hold a limited amount of energy, or entropy, as given by the Bekenstein bound.

Energy isn't the same thing as entropy.

Bekenstein relates them.

But the maximum entropy for a particular mass is given when that mass forms a black hole -- which saturates the Bekenstein bound.

The bound is always satisfied. Black holes just reach the maximum of the bound at a given VOLUME.

I said saturated, not 'satisfied'. The bound gives the maximum possible enclosed mass for a given volume, or the volume is that for which entropy is maximum for a given mass which saturates the bound.

Increasing the volume does not increase the actual entropy unless you simultaneously increase the mass.

You keep saying this but don't provide any justification or sources. I implore you to read the wikipedia article and if it is wrong, please point me to a source with the right/corrected equation.

The justification is that it is impossible to increase the mass of a black hole without at the same time increasing its radius (volume). For a black hole, the radius is 2M, in natural units. So the mass and radius are directly related. Any greater volume for the same mass does not saturate the bound.

As explained on that page, the bound is not limited to black holes, it says something more general which relates entropy bounds to the product of spherical radius and mass.

The entropy bound you are talking about is

S <= 2pi RE.

This is saturated when the radius and energy are related as for a black hole:

R = 2M, for which S = 4pi M^2.

Nothing mysterious here. I was talking about maximum possible entropy, which occurs when the bound is saturated, as for a black hole.

That is really all that the Bekenstein bound says. It is a bound, after all, and has information about the entropy only when that bound is saturated.

So for a fixed amount of mass, the entropy is maximized when that mass is in the form of a black hole. Increasing the volume surrounding the BH makes no difference to the entropy maximum for that mass.
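Bruce's algebra can be checked numerically. A minimal sketch, assuming natural units (G = c = ħ = k_B = 1) so that the bound reads S <= 2πRE, the horizon radius is R = 2M, and the Bekenstein-Hawking entropy is A/4 with A = 4πR². The helper names are illustrative, not from any library.

```python
import math

def bekenstein_bound(radius: float, energy: float) -> float:
    """Upper limit S <= 2*pi*R*E (natural units, entropy in units of k_B)."""
    return 2 * math.pi * radius * energy

def horizon_entropy(mass: float) -> float:
    """Bekenstein-Hawking entropy A/4 for a Schwarzschild hole of radius R = 2M."""
    radius = 2 * mass
    area = 4 * math.pi * radius ** 2
    return area / 4

for mass in (0.5, 1.0, 3.0):
    at_horizon = bekenstein_bound(2 * mass, mass)  # R = 2M, E = M
    # Saturating the bound reproduces both 4*pi*M^2 and the horizon-area entropy.
    assert math.isclose(at_horizon, 4 * math.pi * mass ** 2)
    assert math.isclose(at_horizon, horizon_entropy(mass))
    print(f"M = {mass}: bound at R = 2M is {at_horizon:.4f} = 4*pi*M^2")
```

For the same mass at a larger radius, `bekenstein_bound` simply returns a larger number; the sketch by itself says nothing about whether that extra headroom can be filled, which is the question the thread goes on to argue.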

Bruce

Bruce Kellett

Oct 19, 2020, 6:54:07 AM10/19/20

to Everything List

On Mon, Oct 19, 2020 at 3:55 PM Bruce Kellett <bhkel...@gmail.com> wrote:

On Mon, Oct 19, 2020 at 3:31 PM Jason Resch <jason...@gmail.com> wrote:

As explained on that page, the bound is not limited to black holes, it says something more general which relates entropy bounds to the product of spherical radius and mass.

The entropy bound you are talking about is

S <= 2pi RE.

This is saturated when the radius and energy are related as for a black hole:

To add to my earlier comments, I think that this way of writing the Bekenstein bound is seriously misleading. It is not exactly incorrect, but it does give the impression that the radius, R, of the volume is independent of the mass-energy, E. However, the bound does not mean that one can increase the entropy by simply increasing the volume, leaving the mass-energy constant. Entropy is a physical quantity, and it is matter, not empty space, that has entropy. So although the bound above can become arbitrarily large for a fixed energy by simply increasing the volume, the maximum attainable entropy of any physical system is not thereby increased. The maximum entropy of a physical system (fixed mass-energy) is given when the bound is saturated. In other words, when the radius is that of the corresponding black hole: R = 2E (or 2M in natural units).

Likewise, one cannot increase the entropy by an arbitrary increase in the mass-energy for a fixed volume. Once the mass equals half the radius of the spherical volume, M = R/2, a black hole forms, and no more mass can be added without increasing the radius.
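Converting the natural-unit relation M = R/2 back to SI units gives the mass whose Schwarzschild radius equals a given R, M = R c² / (2G), which is r_s = 2GM/c² solved for M. A short sketch with rounded constants; the function names are illustrative.

```python
# Rounded physical constants (SI units).
G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8    # speed of light, m/s

def schwarzschild_radius(mass_kg: float) -> float:
    """Horizon radius r_s = 2GM/c^2 in metres."""
    return 2 * G * mass_kg / C ** 2

def max_mass_in_sphere(radius_m: float) -> float:
    """Mass whose Schwarzschild radius equals radius_m: R c^2 / (2G)."""
    return radius_m * C ** 2 / (2 * G)

m_max = max_mass_in_sphere(1.0)
print(f"A 1 m sphere becomes a black hole at about {m_max:.2e} kg")
print(f"Check: r_s of that mass is {schwarzschild_radius(m_max):.3f} m")
```

The two functions are inverses of each other, which is the sense in which mass and radius are "directly related" for a black hole.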

Bruce

Jason Resch

Oct 19, 2020, 8:08:59 AM10/19/20

to Everything List

On Sun, Oct 18, 2020, 11:55 PM Bruce Kellett <bhkel...@gmail.com> wrote:

A single particle can be in only one place, and encode only one bit.

If I were building a hard drive and could only write a fixed number of crosses, let's say 5 crosses, on an L x L grid, the number of possible combinations would be (L^2 choose 5).

So for L = 3 that is (9 choose 5) = 126.

Which means I can encode Log2(126) = 6.97 bits

For L = 4 that is (16 choose 5) = 4368.

Which means I can encode Log2(4368) = 12.09 bits

My total number of crosses (let's say I use a single atom to represent each) is 5 in both cases. It is constant. But if I have more space, I have more ways of arranging them, and can use them to encode more bits; conversely, it takes more information to describe the system.
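The grid arithmetic above can be reproduced with Python's `math.comb`. A minimal sketch that treats each arrangement of k identical crosses on an L x L board as one equally likely message (a classical idealization; the function name is hypothetical):

```python
import math

def grid_capacity_bits(side: int, crosses: int) -> float:
    """log2 of the number of ways to place `crosses` identical marks on a side x side grid."""
    arrangements = math.comb(side * side, crosses)
    return math.log2(arrangements)

for side in (3, 4, 10):
    combos = math.comb(side * side, 5)
    print(f"L = {side}: C({side * side}, 5) = {combos}, "
          f"{grid_capacity_bits(side, 5):.2f} bits")
```

Rounded to two decimals, the L = 3 case is 6.98 bits; the 6.97 quoted above is the same value (about 6.977) truncated.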

Why does this analogy not extend to a quantum system of particles in larger or smaller regions of space?

Or think of a grid of naughts and crosses, with a larger grid but fixed number of crosses, the number of possible combinations for drawing a fixed number of crosses still increases with more spaces to place them.

Each combination encodes only one combination.

More unique combinations mean more states the system can possibly be in, so it takes more information to uniquely specify its state, or, equivalently, the system can encode more information.

I said saturated, not 'satisfied'. The bound gives the maximum possible enclosed mass for a given volume, or the volume is that for which entropy is maximum for a given mass which saturates the bound.

I think you're thinking of the black hole entropy equation, which is related to, but distinct from, the Bekenstein bound.

The justification is that it is impossible to increase the mass of a black hole without at the same time increasing its radius (volume). For a black hole, the radius is 2M, in natural units. So the mass and radius are directly related. Any greater volume for the same mass does not saturate the bound.

Forget about saturating the bound, that's not the point. Saturating the bound requires maximizing entropy for a given volume. On that we agree.

My point is that the bound implies that a larger amount of volume, for fixed energy, allows for higher entropy.

Put your black hole in a larger volume and now the black hole has a very well defined position in that volume, which is more information than you had before.

It's generally accepted in computer science that a Turing machine allowed to use infinite space could store infinite information, even with fixed total mass/energy.

The entropy bound you are talking about is

S <= 2pi RE.

This is saturated when the radius and energy are related as for a black hole:

R = 2M, for which S = 4pi M^2.

Nothing mysterious here. I was talking about maximum possible entropy, which occurs when the bound is saturated, as for a black hole.

That is really all that the Bekenstein bound says. It is a bound, after all, and has information about the entropy only when that bound is saturated.

If the bound strictly depends on energy, why is R included in the formulation?

So for a fixed amount of mass, the entropy is maximized when that mass is in the form of a black hole. Increasing the volume surrounding the BH makes no difference to the entropy maximum for that mass.

For the system as a whole it does. Now the black hole has coordinates in a larger volume which did not exist before, and must be included in any description of that system.

A non-collapsed relativistic gas sits right on the edge of becoming a black hole and satisfying the bound. Consider such a relativistic gas confined to a 1-meter volume. Now suppose the same gas is given more space to occupy: it is placed in a sphere of 1 light-year.

Are there not now many more degrees of freedom possible for that same mass energy in a 1 light-year space than when it was confined to 1 meter? Are not more bits and precision required to specify the coordinates of each particle?
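This question can be made quantitative under a classical coarse-graining assumption: if each coordinate is recorded to a fixed resolution δ, specifying one particle's position in a region of linear size L takes about 3·log2(L/δ) bits. The sketch below (hypothetical helper, fixed δ assumed) shows that going from 1 m to 1 light-year adds the same number of bits per particle whatever δ is chosen.

```python
import math

LIGHT_YEAR_M = 9.4607e15  # metres in one light-year (rounded)

def position_bits(region_size_m: float, resolution_m: float) -> float:
    """Bits to pin down one particle's 3-D position to a given resolution.

    Classical coarse-graining: counts (L / delta)^3 cells, one per distinguishable spot.
    """
    cells_per_axis = region_size_m / resolution_m
    return 3 * math.log2(cells_per_axis)

for delta in (1e-3, 1e-10, 1e-15):
    extra = position_bits(LIGHT_YEAR_M, delta) - position_bits(1.0, delta)
    print(f"resolution {delta:g} m: +{extra:.1f} bits per particle")
```

Whether those extra coordinate bits count as physical entropy of the gas, or require additional mass-energy to realize, is what the next reply disputes; the sketch only does the bookkeeping.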

Jason


Lawrence Crowell

Oct 19, 2020, 6:06:10 PM10/19/20

to Everything List

On Sunday, October 18, 2020 at 9:09:10 PM UTC-5 Jason wrote:

The Bekenstein bound states that the maximum mass-energy that can be held in any particular volume is given when that volume is a black hole.

I agree that black hole density is the largest possible mass for a given volume.

There is no stationary meaning to the volume of a black hole. The Penrose diagram for the Schwarzschild black hole is seen below

The black hole is the upper triangle and there are two parts of the split horizon at r = 2GM that are moving apart at the speed of light. The interior volume of the black hole is increasing enormously. This is the ideal vacuum solution, but the truncated diagram with infalling matter also has the same feature. Here the black hole ends in Hawking radiation. The small wedge below r = 0 grows enormously.

LC

Bruce Kellett

Oct 19, 2020, 8:06:54 PM10/19/20

to Everything List

On Mon, Oct 19, 2020 at 11:08 PM Jason Resch <jason...@gmail.com> wrote:

But if I have more space, I have more ways of arranging them, and can use them to encode more bits, or conversely it takes more information to describe the system.

Why does this analogy not extend to a quantum system of particles in larger or smaller regions of space?

I think you are forgetting the physical nature of your atoms and your grid. Because information is physical, it requires mass-energy to encode. Look again at the Bekenstein bound you have used:

S <= 2pi RE

That does imply that if you increase the volume, you can fit in a greater entropy. But it does not mean that increasing the volume for fixed mass-energy automatically increases the entropy. In order to increase the entropy to that allowed in the larger volume, you have to also increase the mass-energy.
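Both readings of the bound can be put side by side. A minimal sketch in natural units (G = c = ħ = k_B = 1, an assumption of the example): with E held fixed, 2πRE grows linearly in R; the bound for a sphere of radius R is largest when E = R/2 (a black hole), where it equals πR², and reaching that ceiling in a bigger sphere takes proportionally more energy. Helper names are illustrative.

```python
import math

def bekenstein_bound(radius: float, energy: float) -> float:
    """Bekenstein bound 2*pi*R*E in natural units."""
    return 2 * math.pi * radius * energy

def saturating_energy(radius: float) -> float:
    """Energy at which a sphere of this radius is a black hole (R = 2E)."""
    return radius / 2

fixed_energy = 1.0
for radius in (2.0, 4.0, 8.0):
    e_sat = saturating_energy(radius)
    print(f"R = {radius}: bound with E = {fixed_energy} is "
          f"{bekenstein_bound(radius, fixed_energy):.2f}, "
          f"ceiling pi*R^2 = {bekenstein_bound(radius, e_sat):.2f} needs E = {e_sat}")
```

The first number (fixed energy, growing radius) is the quantity Jason points to; the second (the black-hole ceiling, which needs E = R/2) is the one Bruce says requires more mass-energy.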

I think you're thinking of the black hole entropy equation, which is related to, but distinct from, the Bekenstein bound.

The Bekenstein bound as you have used it merely means that the amount of entropy in a given volume is limited. Increasing the volume will allow for greater entropy, but the entropy at the bound increases only if the mass is also increased. Entropy (information) is a physical thing, and coding or storing information requires energy.

I realize that it is difficult to say this clearly and precisely, because in general statistical physics the entropy is so far below the maximum possible in the considered volume that the Bekenstein bound is largely irrelevant. It becomes an issue only if you look at situations, such as black holes, where the bound is in fact saturated, and you consider increasing either the mass or the volume. It is then that the interdependence of mass and radius in the bound becomes important.

My point is that the bound implies that a larger amount of volume, for fixed energy, allows for higher entropy.

That is correct, provided you realize that increasing the entropy beyond a saturated bound requires the input of more mass-energy.

Put your black hole in a larger volume and now the black hole has a very well defined position in that volume, which is more information than you had before.

It's generally accepted in computer science that a Turing machine allowed to use infinite space could store infinite information, even with fixed total mass/energy.

You do not have massless tapes on which to store your infinite information, so this would appear to be nonsensical. A Turing machine is a physical object, and it is subject to the laws of physics.

If the bound strictly depends on energy, why is R included in the formulation?

That specifies the volume within which the energy is enclosed. But increasing the volume does not, of itself, increase the entropy. The maximum entropy for a fixed mass-energy is fixed by the surface area of a black hole of radius R = 2M.
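The saturation claim can be checked by direct substitution. This assumes the natural units the thread is using (ħ = c = G = k_B = 1, stated explicitly here since the posts leave it implicit). For fixed E the right-hand side of the bound grows linearly with R; only at R = 2M does it coincide with the black-hole (Bekenstein-Hawking) entropy:

```latex
% Natural units (assumed): \hbar = c = G = k_B = 1
S \le 2\pi R E
% Black hole of mass M: radius R = 2M, energy E = M
S \le 2\pi (2M)(M) = 4\pi M^2
% Bekenstein--Hawking entropy, horizon area A = 4\pi R^2 with R = 2M
S_{\mathrm{BH}} = \frac{A}{4} = \frac{4\pi (2M)^2}{4} = 4\pi M^2
```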

So for a fixed amount of mass, the entropy is maximized when that mass is in the form of a black hole. Increasing the volume surrounding the BH makes no difference to the entropy maximum for that mass.

For the system as a whole it does. Now the black hole has coordinates in a larger volume which did not exist before, and which must be included in any description of that system.

A non-collapsed relativistic gas sits right on the edge of becoming a black hole and satisfying the bound. Consider such a relativistic gas confined to a 1 meter volume. Now consider that the gas is given more space to occupy: it is placed in a sphere of 1 light-year.

Are there not now many more degrees of freedom possible for that same mass-energy in a 1 light-year space than when it was confined to 1 meter? Are not more bits and precision required to specify the coordinates of each particle?
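The "more bits to specify each coordinate" question can be made concrete with a rough sketch. It assumes each coordinate is recorded to some fixed resolution δ; that resolution is an arbitrary assumption, not something the thread fixes, and the helper names are hypothetical. It only counts the bits needed to write positions down; whether those bits count as physical entropy is exactly what is being disputed.

```python
import math

# IAU light-year in metres (exact by definition).
LIGHT_YEAR_M = 9_460_730_472_580_800.0


def coordinate_bits(box_m: float, resolution_m: float) -> float:
    """Bits to pin down one coordinate to within resolution_m in a box of side box_m.

    resolution_m is an assumed, arbitrary cell size (hypothetical parameter).
    """
    return math.log2(box_m / resolution_m)


def extra_bits_per_particle(small_m: float, large_m: float, resolution_m: float) -> float:
    """Additional bits for a particle's three coordinates when the box grows."""
    return 3 * (coordinate_bits(large_m, resolution_m) - coordinate_bits(small_m, resolution_m))


if __name__ == "__main__":
    # Try two very different resolutions to see how much the answer depends on delta.
    for delta in (1e-9, 1e-15):
        extra = extra_bits_per_particle(1.0, LIGHT_YEAR_M, delta)
        print(f"resolution {delta:g} m: {extra:.2f} extra bits per particle")
```

The resolution cancels in the difference, so the extra description length is 3·log2(1 light-year / 1 m), roughly 159 bits per particle, whatever δ is chosen.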

Putting a black hole in a bigger volume does not increase the entropy of that black hole. Specifying coordinates for the constituents of the BH is either irrelevant, or requires additional mass.

The upshot of all of this is that the expansion of space in a cosmology does not increase the maximum possible entropy. The maximum entropy is set by the amount of mass-energy in the cosmology, and that does not increase with the expansion.

Bruce

Jason Resch

Oct 19, 2020, 9:02:59 PM10/19/20

to everyth...@googlegroups.com

On Monday, October 19, 2020, Bruce Kellett <bhkel...@gmail.com> wrote:

On Mon, Oct 19, 2020 at 11:08 PM Jason Resch <jason...@gmail.com> wrote:

On Sun, Oct 18, 2020, 11:55 PM Bruce Kellett <bhkel...@gmail.com> wrote:

On Mon, Oct 19, 2020 at 3:31 PM Jason Resch <jason...@gmail.com> wrote:

On Sun, Oct 18, 2020, 10:33 PM Bruce Kellett <bhkel...@gmail.com> wrote:

On Mon, Oct 19, 2020 at 1:09 PM Jason Resch <jason...@gmail.com> wrote:

On Sun, Oct 18, 2020, 8:47 PM Bruce Kellett <bhkel...@gmail.com> wrote:

Remember that entropy is basically related to the volume of phase space, not of ordinary space. And phase space relates to the number of particles (hence mass-energy). Spatial volume is essentially irrelevant for volumes greater than that of the corresponding black hole.

No. Consider an infinite length. With a single atom you can encode infinite information through placement of the atom along that length. This is with finite mass-energy, but unrestricted spatial volume.

That does not encode infinite information. There is, after all, only one particle, and it can have only one position. If you want to encode more information, you need more particles. You might need an infinite number of bits to encode the position of one particle as a real number, but the single particle cannot encode this.

This is plainly false. Every 1-mile distance that particle is placed along the line encodes a unique number. Travel up to 2^N miles and you can encode N bits. With infinite range there's no upper bound.

A single particle can be in only one place, and encode only one bit.

If I were building a hard drive and could only write a fixed number of crosses, let's say 5 crosses, on an LxL grid, the number of possible combinations would be (L^2 choose 5).

So for L = 3 that is (9 choose 5) = 126, which means I can encode log2(126) = 6.97 bits.

For L = 4 that is (16 choose 5) = 4368, which means I can encode log2(4368) = 12.09 bits.

My total number of crosses (let's say I use a single atom to represent each) is 5 in both cases. It is constant. But if I have more space, I have more ways of arranging them, and can use them to encode more bits, or conversely it takes more information to describe the system.

Why does this analogy not extend to a quantum system of particles in larger or smaller regions of space?

I think you are forgetting the physical nature of your atoms and your grid. Because information is physical, it requires mass-energy to encode. Look again at the Bekenstein bound you have used:
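The crosses-on-a-grid count can be reproduced in a few lines. This is a sketch: `grid_bits` is an illustrative name, and `math.comb` needs Python 3.8 or later. It counts distinguishable arrangements only; it does not settle whether those arrangements carry physical entropy.

```python
import math


def grid_bits(side: int, marks: int) -> float:
    """log2 of the number of ways to place `marks` identical crosses on a
    side x side grid, i.e. log2(C(side**2, marks))."""
    return math.log2(math.comb(side * side, marks))


if __name__ == "__main__":
    # Same five crosses, growing grid: the number of placements keeps rising.
    for side in (3, 4, 10, 100):
        print(side, math.comb(side * side, 5), f"{grid_bits(side, 5):.2f} bits")
```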

With enough cells in a grid, you could store an entire hard drive's worth of data by placing a single atom in the right cell.

S <= 2pi RE

That does imply that if you increase the volume, you can fit in a greater entropy. But it does not mean that increasing the volume for fixed mass-energy automatically increases the entropy.

I agree it's not automatic. Is it correct to say it increases the ceiling of distinguishable quantum states the system might be in?

In order to increase the entropy to that allowed in the larger volume, you have to also increase the mass-energy.

Doesn't this contradict what you just said?

Do you agree that the bound is the same for 1 Kg in a 2 meter sphere as for 2 Kg in a 1 meter sphere?
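Whether 1 kg in a 2 m sphere and 2 kg in a 1 m sphere share the same bound can be checked numerically. A sketch, assuming the standard SI form of the Bekenstein bound expressed in bits, I <= 2πRE / (ħc ln 2) with E = Mc²; the function name is illustrative.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J s (CODATA 2018)
C = 299_792_458.0       # speed of light, m/s (exact)


def bekenstein_bits(radius_m: float, mass_kg: float) -> float:
    """Upper bound on the information (in bits) in a sphere of radius R
    holding total energy E = M c^2:  I <= 2*pi*R*E / (hbar * c * ln 2)."""
    energy_j = mass_kg * C**2
    return 2 * math.pi * radius_m * energy_j / (HBAR * C * math.log(2))


if __name__ == "__main__":
    a = bekenstein_bits(2.0, 1.0)  # 1 kg in a 2 m sphere
    b = bekenstein_bits(1.0, 2.0)  # 2 kg in a 1 m sphere
    print(f"{a:.4e} bits vs {b:.4e} bits")
```

The bound depends only on the product R·E, so the two ceilings coincide. Both spheres are also vastly larger than the Schwarzschild radius of their mass, so neither configuration is anywhere near saturating the bound; the sketch only compares ceilings.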

Or think of a grid of noughts and crosses: with a larger grid but a fixed number of crosses, the number of possible combinations for drawing that fixed number of crosses still increases with more spaces to place them.

Each combination encodes only one combination.

More unique combinations mean more states a system can possibly be in, meaning it takes more information to uniquely define the state the system is in, or alternatively, the more information the system may encode.

An arbitrary volume can only hold a limited amount of energy, or entropy, as given by the Bekenstein bound.

Energy isn't the same thing as entropy.

Bekenstein relates them. But the maximum entropy for a particular mass is given when that mass forms a black hole, which saturates the Bekenstein bound.

The bound is always satisfied. Black holes just reach the maximum of the bound at a given VOLUME.

I said saturated, not 'satisfied'. The bound gives the maximum possible enclosed mass for a given volume, or the volume is that for which entropy is maximum for a given mass which saturates the bound.

I think you're thinking of the black hole entropy equation, which is related to, but distinct from, the Bekenstein bound.

The Bekenstein bound as you have used it merely means that the amount of entropy in a given volume is limited.

Agree.

Increasing the volume will allow for greater entropy,

Agree.

but the entropy at the bound increases only if the mass is also increased.

When you say "at the bound" you are talking about black holes, which is the point of maximum mass and maximum entropy for a given volume.

But I have to disagree with your conclusion that adding volume doesn't increase the total entropy of the system. Use my grid example, with the black hole placed in the grid instead of the atom. If the volume is big enough, you can encode more bits through the placement of the hole somewhere in the grid than the Bekenstein bound gives for the black hole's total entropy.

Entropy (information) is a physical thing, and coding or storing information requires energy.

It takes energy to encode information, yes, but that is irrelevant to the bound. It's the difference between powering up a hard drive or CD burner to write the information once, and then later considering the hard drive or CD with the data on it as an isolated system. You can ignore the input energy used to write when considering the bound for the powered down storage media.

I realize that it is difficult to say this clearly and precisely, because in general statistical physics, the entropy is so far below the maximum possible in the considered volume that the Bekenstein bound is largely irrelevant. It becomes an issue only if you look at situations, such as black holes, where the bound is in fact saturated, and you consider increasing either the mass or the volume. It is then that the fact that the bound depends on their interdependence becomes important.

Increasing the volume does not increase the actual entropy unless you simultaneously increase the mass.

You keep saying this but don't provide any justification or sources. I implore you to read the Wikipedia article and, if it is wrong, please point me to a source with the right/corrected equation.

The justification is that it is impossible to increase the mass of a black hole without at the same time increasing its radius (volume). For a black hole, the radius is 2M, in natural units. So the mass and radius are directly related. Any greater volume for the same mass does not saturate the bound.

Forget about saturating the bound, that's not the point. Saturating the bound requires maximizing entropy for a given volume. On that we agree.

My point is that the bound implies that a larger amount of volume, for fixed energy, allows for higher entropy.

That is correct, provided you realize that increasing the entropy beyond a saturated bound requires the input of more mass-energy.

I don't see how your previous sentence can be interpreted consistently. It seems like the second clause contradicts the first.

Put your black hole in a larger volume and now the black hole has a very well defined position in that volume, which is more information than you had before.

It's generally accepted in computer science that a Turing machine allowed to use infinite space could store infinite information, even with fixed total mass/energy.

You do not have massless tapes on which to store your infinite information. So this would appear to be nonsensical. A Turing machine is a physical object, and it is subject to the laws of physics.

The tape can be empty space, while the information can be represented by placement of one particle in a definite location within that infinite space.

Imagine an infinite grid. By marking a single X in a single cell of that grid you can encode any number. Therefore you can encode any data of any length in a system of finite energy. The grid can be empty space.
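The single-mark encoding described above can be sketched as follows. The row-by-row cell numbering and the function names are hypothetical choices made for illustration. One mark in one of N cells selects one of N messages, i.e. log2(N) bits, which is also why a line of 2^N one-mile slots carries N bits.

```python
import math
from typing import Tuple


def encode_position(value: int, width: int) -> Tuple[int, int]:
    """Hypothetical scheme: record `value` as the (row, col) of one mark in a
    grid `width` cells wide, numbering cells row by row from zero."""
    return divmod(value, width)


def decode_position(row: int, col: int, width: int) -> int:
    """Inverse of encode_position: recover the number from the mark's cell."""
    return row * width + col


def bits_for_cells(cells: int) -> float:
    """A single mark in one of `cells` cells distinguishes log2(cells) bits."""
    return math.log2(cells)


if __name__ == "__main__":
    width = 1_000_000
    row, col = encode_position(123_456_789_012, width)
    print(row, col, decode_position(row, col, width))
    print(bits_for_cells(2**40))
```

Storing a drive of B bits this way needs 2^B cells, so the grid grows exponentially with the amount of data; the sketch says nothing about whether such positions remain physically distinguishable at fixed energy, which is the point in dispute.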

As explained on that page, the bound is not limited to black holes; it says something more general, which relates entropy bounds to the product of spherical radius and mass.

The entropy bound you are talking about is S <= 2pi RE. This is saturated when the radius and energy are related as for a black hole: R = 2M, for which S = 4pi M^2. Nothing mysterious here. I was talking about maximum possible entropy, which occurs when the bound is saturated, as for a black hole. That is really all that the Bekenstein bound says. It is a bound, after all, and has information about the entropy only when that bound is saturated.

If the bound strictly depends on energy, why is R included in the formulation?

That specifies the volume within which the energy is enclosed. But increasing the volume does not, of itself, increase the entropy. The maximum entropy for a fixed mass-energy is fixed by the surface area of a black hole of radius R = 2M.

That's false. The maximum entropy is NOT fixed unless both the mass-energy AND the volume are fixed.

If the bound were as you say, determined solely by mass-energy, then R would not appear in the equation as it does.

So for a fixed amount of mass, the entropy is maximized when that mass is in the form of a black hole. Increasing the volume surrounding the BH makes no difference to the entropy maximum for that mass.

For the system as a whole it does. Now the black hole has coordinates in a larger volume which did not exist before, and which must be included in any description of that system.

A non-collapsed relativistic gas sits right on the edge of becoming a black hole and satisfying the bound. Consider such a relativistic gas confined to a 1 meter volume. Now consider that the gas is given more space to occupy: it is placed in a sphere of 1 light-year.

Are there not now many more degrees of freedom possible for that same mass-energy in a 1 light-year space than when it was confined to 1 meter? Are not more bits and precision required to specify the coordinates of each particle?

Putting a black hole in a bigger volume does not increase the entropy of that black hole. Specifying coordinates for the constituents of the BH is either irrelevant, or requires additional mass.

See my grid example. No additional mass is needed for the black hole to occupy a certain position in the grid. If the grid has 10^10^100 cells, then the location of the hole provides at least 10^100 bits of information. This is more information/entropy than in even a galactic-mass black hole.
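The two sides of this comparison can be put into numbers. A sketch, assuming the standard SI Bekenstein-Hawking entropy S/k_B = 4πGM²/(ħc), converted to bits; the solar-mass value is a rounded nominal figure and the function names are illustrative. Working with the exponent avoids ever building the integer 10^(10^100). Because black-hole entropy grows as M², the outcome of the comparison depends on which mass is taken as "galactic", so the sketch prints a range rather than one answer.

```python
import math

G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2 (CODATA 2018)
HBAR = 1.054571817e-34  # reduced Planck constant, J s
C = 299_792_458.0       # speed of light, m/s
M_SUN = 1.989e30        # solar mass, kg (rounded nominal value)


def position_bits_log10_cells(log10_cells: float) -> float:
    """Bits carried by one mark among 10**log10_cells cells:
    log2(10**n) = n * log2(10)."""
    return log10_cells * math.log2(10)


def black_hole_bits(mass_kg: float) -> float:
    """Bekenstein-Hawking entropy in bits: 4*pi*G*M^2 / (hbar * c * ln 2)."""
    return 4 * math.pi * G * mass_kg**2 / (HBAR * C * math.log(2))


if __name__ == "__main__":
    print(f"grid of 10^10^100 cells: {position_bits_log10_cells(1e100):.3e} bits")
    for solar_masses in (1.0, 1e6, 1e9, 1e12):
        bits = black_hole_bits(solar_masses * M_SUN)
        print(f"{solar_masses:.0e} solar-mass black hole: {bits:.3e} bits")
```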

The upshot of all of this is that the expansion of space in a cosmology does not increase the maximum possible entropy. The maximum entropy is set by the amount of mass-energy in the cosmology, and that does not increase with the expansion.

Jason
