High level syntax approach to scalable coding in VP9

Christian

In the scope of a thesis with the Institut für Nachrichtentechnik at RWTH Aachen University we experimented with scalable coding in VP9. Since the standardization of the scalable extension of HEVC (SHVC) has recently been finished, we wanted to implement a similar high level syntax approach for scalable coding in VP9 and evaluate its performance. Here we want to share the principle of the implementation and our findings.

The scalable extension of HEVC (SHVC):

SHVC uses a very simple, high level syntax only approach to achieve scalability. Mainly, the reference picture list in the enhancement layer is extended to also contain the reconstructed picture of the corresponding lower layer frame. If this inter layer reference is used in the enhancement layer, the motion vector is restricted to zero (0,0) in order to limit the additional complexity introduced by the inter layer process. Experiments also revealed that the performance gains from unrestricted motion vectors do not justify the additional complexity. Inter layer motion vector prediction is enabled by allowing TMVP (temporal motion vector prediction) to be used from the lower layer reference (although the prediction is technically not temporal in this case). While this approach to scalability is easy to implement and standardize, it also yields a high complexity increase, since in practice a full encoder/decoder has to be run for every layer, each with its own reference picture buffer, loop filter, entropy coding, transform, etc.
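The reference list extension and the zero motion vector restriction described above can be sketched as follows. This is only an illustrative Python model, not SHVC reference software: the names `RefPicture`, `build_el_ref_list` and `constrain_mv` are hypothetical, and appending the lower layer picture at the end of the list is an arbitrary choice made for the sketch.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

MotionVector = Tuple[int, int]


@dataclass(frozen=True)
class RefPicture:
    label: str                 # free-form description, e.g. "EL t-1"
    inter_layer: bool = False  # True for the lower layer reconstruction


def build_el_ref_list(temporal_refs: List[RefPicture],
                      lower_layer_recon: Optional[RefPicture]) -> List[RefPicture]:
    """Extend the enhancement layer reference list with the lower layer picture."""
    refs = list(temporal_refs)
    if lower_layer_recon is not None:
        refs.append(lower_layer_recon)  # position in the list is an assumption
    return refs


def constrain_mv(ref: RefPicture, mv: MotionVector) -> MotionVector:
    """Force the motion vector to (0, 0) when the inter layer reference is used."""
    return (0, 0) if ref.inter_layer else mv


refs = build_el_ref_list(
    [RefPicture("EL t-1")],
    RefPicture("BL t (reconstructed)", inter_layer=True),
)
```

With this model, a block that selects `refs[1]` always ends up with a zero motion vector, while a block that selects the temporal reference `refs[0]` keeps whatever vector the motion search found.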

The high level syntax only approach in VP9:

The figure shows the prediction structure of VP9 for one golden frame group (GFG). After frame zero is encoded using only intra prediction, the next frame contains a super-frame. This super-frame consists of a frame from the future, which is put into the altref frame buffer, and frame 2, which can now use frame 0 and the frame from the future for reference. All the following frames (3,4,5,6,7,8,9) then utilize the last frame (black line), frame 0 in the golden frame buffer (red dashed line) and the future frame in the altref buffer (blue dotted line). The future frame is finally displayed by copying the altref buffer into the current decoding buffer.

This figure shows the modified prediction structure for the scalable approach. In the base layer (BL) the prediction structure remains unchanged. However, in the enhancement layer (EL) the golden-frame buffer (red dashed line) is now pointing to the base layer reconstructed image instead of frame 0.

Some modifications to the coding process were necessary to maximize coding performance. Picture 0 in the EL is allowed to also use inter prediction (since the golden-frame buffer contains a valid reference). For this, the frame_type of frame 0 in the EL is changed to INTERFRAME.
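As a rough illustration of the modified prediction structure, the following Python sketch lists what each reference buffer holds for a given layer and frame. It is a simplified model under stated assumptions, not code from the modified libvpx: the function name `reference_buffers` and the string labels are hypothetical, the super-frame handling is ignored, and the last-frame and altref buffers are assumed to be unused for frame 0.

```python
from typing import Dict, Optional


def reference_buffers(layer: str, frame_idx: int) -> Dict[str, Optional[str]]:
    """Describe the references available when coding one frame of a GFG.

    layer is "BL" (base layer) or "EL" (enhancement layer).
    """
    if layer not in ("BL", "EL"):
        raise ValueError("layer must be 'BL' or 'EL'")

    if layer == "BL":
        if frame_idx == 0:
            # Frame 0 of the base layer is coded with intra prediction only.
            return {"frame_type": "intra", "LAST": None,
                    "GOLDEN": None, "ALTREF": None}
        return {"frame_type": "inter",
                "LAST": f"BL frame {frame_idx - 1}",
                "GOLDEN": "BL frame 0",
                "ALTREF": "BL future frame"}

    # Enhancement layer: the golden buffer always holds the base layer
    # reconstruction of the same frame, so even frame 0 can be an inter frame.
    golden = f"BL reconstruction of frame {frame_idx}"
    if frame_idx == 0:
        return {"frame_type": "inter", "LAST": None,
                "GOLDEN": golden, "ALTREF": None}
    return {"frame_type": "inter",
            "LAST": f"EL frame {frame_idx - 1}",
            "GOLDEN": golden,
            "ALTREF": "EL future frame"}
```

The sketch also makes the trade-off visible: in the enhancement layer, frame 0 of the same layer is no longer reachable through the golden buffer.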

Tested configuration / Results:

The encoding is performed in two separate steps. First, the unmodified encoder/decoder is run to create the base layer stream and reconstruction. In a second step, the modified enhancement layer encoder/decoder is run, which loads the lower layer reconstruction YUV file to obtain the lower layer references. The base layer call can be seen below. For the enhancement layer call, the parameter --passes is set to 1. The source width, source height and target bitrate may also differ for the enhancement layer (depending on the scalability type).

Encoder Call:

vpxenc <InputFile> -o <Bitstream0> -w <SourceWidth0> -h <SourceHeight0> --passes=2 --good --cpu-used=0 --target-bitrate=<Bitrate0> --codec=vp9 --i420 --limit=<FramesToBeEncoded> --fps=<Fps0>*1000/1001 --end-usage=vbr --kf-max-dist=<IntraPeriod0> --lag-in-frames=11 --skip=0 --threads=4 --static-thresh=0
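For scripting the two steps, the call above can be assembled programmatically. The following Python sketch only builds the argument lists and does not run anything; the helper name `vpxenc_args`, the file names and the numeric values are hypothetical examples. The additional parameter with which the modified enhancement layer encoder receives the lower layer reconstruction is not named in this post, so it is deliberately left out.

```python
from typing import List


def vpxenc_args(input_file: str, bitstream: str, width: int, height: int,
                bitrate: int, frames: int, fps: int, intra_period: int,
                passes: int) -> List[str]:
    """Build the vpxenc argument list used in the experiments."""
    return [
        "vpxenc", input_file, "-o", bitstream,
        "-w", str(width), "-h", str(height),
        f"--passes={passes}", "--good", "--cpu-used=0",
        f"--target-bitrate={bitrate}", "--codec=vp9", "--i420",
        f"--limit={frames}",
        f"--fps={fps * 1000}/1001",  # follows the <Fps0>*1000/1001 pattern
        "--end-usage=vbr", f"--kf-max-dist={intra_period}",
        "--lag-in-frames=11", "--skip=0", "--threads=4", "--static-thresh=0",
    ]


# Base layer: two-pass encoding with the unmodified encoder (example values).
bl_args = vpxenc_args("bl_960x540.yuv", "bl.webm", 960, 540,
                      bitrate=1000, frames=60, fps=30, intra_period=32,
                      passes=2)

# Enhancement layer: same options, but --passes is set to 1.
# The lower layer YUV parameter of the modified encoder is omitted here.
el_args = vpxenc_args("el_1920x1080.yuv", "el.webm", 1920, 1080,
                      bitrate=4000, frames=60, fps=30, intra_period=32,
                      passes=1)
```

The lists can be passed to `subprocess.run` once the enhancement layer list has been completed with the lower layer input parameter of the modified encoder.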

The testing conditions are chosen to be similar to the SHVC common testing conditions. These consist of five Full HD sequences as well as two 1600p sequences. The tested scalability types are 2x and 1.5x spatial scalability and SNR scalability. For comparison, a simulcast scenario and a single layer scenario are chosen. This way we try to evaluate the benefit of a scalable scheme compared to simulcasting as well as the cost of scalability compared to single layer coding. The implemented up/down-samplers are also taken from SHVC.
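To make the scalability ratios concrete, the following Python sketch derives the base layer resolution from the enhancement layer resolution. The helper name `base_layer_size` is hypothetical, and rounding down to even dimensions (to keep 4:2:0 chroma planes well defined) is an assumption of this sketch, not something taken from the test conditions.

```python
from fractions import Fraction
from typing import Tuple

# Ratio between enhancement layer and base layer resolution.
RATIOS = {
    "SNR": Fraction(1),      # same resolution, different quality
    "1.5x": Fraction(3, 2),
    "2x": Fraction(2),
}


def base_layer_size(el_width: int, el_height: int, kind: str) -> Tuple[int, int]:
    """Return the base layer (width, height) for a scalability type."""
    ratio = RATIOS[kind]
    width = int(el_width / ratio)    # truncates any fractional part
    height = int(el_height / ratio)
    # Assumption: round down to even sizes for 4:2:0 content.
    return width - width % 2, height - height % 2
```

For a Full HD enhancement layer this gives 960x540 for 2x, 1280x720 for 1.5x and 1920x1080 for SNR scalability.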

This figure shows the Y-PSNR results for the same sequence (Basketball Drive) for a 1.5x scalability factor between the layers. A similar behavior as for SNR scalability can be observed here.

This figure shows the Y-PSNR results for the sequence Basketball Drive and a scalability factor of 2x. Here we can see that a gain can only be observed for high bitrates and that there is actually a slight loss compared to simulcast. However, one can also see that the bitrate of the base layer (the difference between single layer and simulcast) is also very small.

The table shows the average BD-Rate results for the whole test set and the three tested types of scalability. The first row (VP9-SVC vs Simulcast) shows how much bitrate can be saved on average compared to simulcast. In the Y component these savings are quite high for SNR and 1.5x scalability. For 2x scalability there is no gain compared to simulcasting. The results for the chroma components are lower. However, it has to be considered that these components use 4:2:0 subsampling.

The second and third rows show the overhead compared to single layer coding when using scalable coding and when using simulcast. One can see that the overhead is reduced from 44.1% and 47.2% with simulcast to 23% and 22% with scalable coding (SNR and 1.5x, respectively). Again, for 2x scalability there is no reduction of the overhead.
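The notion of overhead used here can be illustrated for a single operating point at equal enhancement layer quality. The Python sketch below uses made-up bitrates and hypothetical function names; the values in the table are BD-Rate figures averaged over a rate-distortion curve, so this is only the underlying arithmetic, not the way the table was computed.

```python
def overhead_percent(total_rate: float, single_layer_rate: float) -> float:
    """Extra bitrate in percent relative to single layer coding."""
    return 100.0 * (total_rate - single_layer_rate) / single_layer_rate


def simulcast_rate(base_rate: float, single_layer_rate: float) -> float:
    """Simulcast sends the base layer plus an independent high quality stream."""
    return base_rate + single_layer_rate


def scalable_rate(base_rate: float, enhancement_rate: float) -> float:
    """Scalable coding sends the base layer plus the enhancement layer."""
    return base_rate + enhancement_rate


# Made-up example rates, for illustration only.
base, single, enhancement = 2000.0, 5000.0, 4000.0

simulcast_overhead = overhead_percent(simulcast_rate(base, single), single)
scalable_overhead = overhead_percent(scalable_rate(base, enhancement), single)
```

In this example simulcast costs 40% more than single layer coding and the scalable stream 20% more. The simulcast overhead is simply the base layer rate relative to the single layer rate, and the scalable scheme wins whenever the enhancement layer is cheaper than the independent high quality stream.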

Comparison to Scalable coding in HEVC (SHVC):

Warning! This comparison should be viewed with a healthy amount of skepticism. The comparison of different video coding standards in itself is highly complicated and it goes without saying that this gets even more complicated for scalable codecs. This is why instead of comparing the two methods directly we will only compare the results presented in the table above with the same results for SHVC vs HEVC simulcast and HEVC single layer coding.

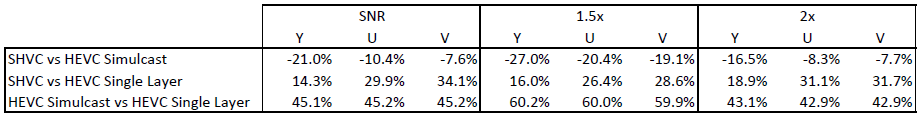

This table shows the results for SHVC compared to HEVC. (These results can be found in the document JCTVC-Q1009. See link above.) The following things can be observed:

- As in the tested VP9 scalability approach, 1.5x scalability shows the highest gains while 2x scalability shows the lowest gains

- As in the tested VP9 scalability approach, the U and V components yield lower gains than the Y component

- The overhead of the base layer (as can be seen in the last row) is similar for SNR scalability. However, for 1.5x and 2x scalability the overhead is lower for VP9. This indicates that the bitrate setting for the tested VP9 approach is not directly comparable to the bitrates in the SHVC test. Consequently, the achieved bitrate savings in the first row are not directly comparable either. The test settings could be improved here.

Conclusion:

A scalable approach similar to that of SHVC (Scalable HEVC) was implemented in VP9. In the enhancement layer the lower layer reconstruction is available in the golden frame buffer. For SNR and 1.5x scalability this approach yields a good compression gain compared to the simulcast scenario. For SHVC this compression gain seems to be greater. We suspect that replacing the golden frame buffer, which usually holds frame 0, has quite a big negative influence on the enhancement layer coding efficiency. We therefore expect that using a fourth reference buffer for the lower layer reconstruction can further improve the scalable video coding performance in VP9.

Additionally the current implementation does not support inter layer motion prediction. These two points could be the key to bringing the bitrate savings (compared to the VP9 simulcast scenario) to an equal level with the bitrate savings for SHVC (compared to the HEVC simulcast scenario).

This work was performed by Matthias Gundert and Christian Feldmann. Lehrstuhl und Institut für Nachrichtentechnik, RWTH Aachen University. D-52064 Aachen, Germany.

Silvia Pfeiffer

That's a pretty cool analysis. Did you also look at the patent space in this area? Did you publish your code for this as unencumbered open source code?

Best Regards,

Silvia.

--

You received this message because you are subscribed to the Google Groups "Codec Developers" group.

To unsubscribe from this group and stop receiving emails from it, send an email to codec-devel...@webmproject.org.

To post to this group, send email to codec...@webmproject.org.

Visit this group at http://groups.google.com/a/webmproject.org/group/codec-devel/.

For more options, visit https://groups.google.com/a/webmproject.org/d/optout.

Christian

- Using the lower layer reconstructed pixels for prediction of the enhancement layer has been around for quite some time. It has been used in the scalable extension of H.262/MPEG-2 part 2 and the oldest patent I found on it is from 1992.

- The concept of inter layer motion vector prediction is younger. The oldest patent I found is US 20060153300 A1 from 2006.

- First the unmodified encoder/decoder have to be run to produce the base layer bitstream and the base layer reconstruction YUV file

- Secondly the software provided here can be run which takes an additional input parameter specifying the lower layer reconstruction YUV (encoder and decoder).

James Bankoski

ffmpeg -i input.mp4 -c:v rawvideo -pix_fmt yuv420p out.yuv

I want to try a simple command as a start.

vpxenc --good --cpu-used=0 -w 1280 -h 720 --fps=30000/1001 --codec=vp9 -o out1.yuv input.mp4

But it didn't work.

Pass 1/2 frame 1/0 0B 0 us 0.00 fpm [ETA unknown] Segmentation fault: 11

Do you have any recommendation?


gbak...@ku.edu.tr

Christian

I am the one responsible for this scalability experiment in VP9. So first of all, please be aware that it was not more than that: an experiment. Also, the software we provided here is really just suited for the experiments that we did. It should not be considered a stable encoder/decoder implementation. However, of course I can comment on the software:

- Firstly: Did you provide a valid lower layer reconstructed YUV file to the encoder? The way the encoder works is that it uses two YUV files: the original and the reconstruction of the lower layer. In the end you have two bitstreams (one for each layer). This lower layer YUV reconstruction can be generated with any version of the VP9 encoder. However, it probably makes the most sense to use the same version as the encoder for the enhancement layer. So to your question: "Do i need to use the raw constructed file of base layer as an input of second encoding process?" -> Yes, precisely.

- I don't know if I understood your question correctly, but of course you can use the described changes to the encoder in any other libvpx version. You would just have to port/implement them there.

I hope this helps,

Best regards,

Christian