Definition of "hart"

Tommy Murphy

Liviu Ionescu

> On 18 Nov 2017, at 01:26, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> Is there a clear, concise and authoritative definition of the term/concept "hart" anywhere?

> The spec doesn't seem to have one and simply uses the term without any specific explanation so it remains unclear/ambiguous.

"A RISC-V hardware platform can contain one or more RISC-V-compatible processing cores together with other ...

A component is termed a core if it contains an independent instruction fetch unit. A RISC-V-compatible core might support multiple RISC-V-compatible hardware threads, or harts, through multithreading. ..."

Turning the definition upside down, a hart is a component that does not have its own independent instruction fetch unit. Several harts may share a single instruction fetch unit.

The manual definition might be technically correct, but, to be really helpful, it might need some additional explanations.

Regards,

Liviu

atish patra

Hart: HARdware Thread

https://www.sifive.com/documentation/risc-v-core/e51-risc-v-core-ip-manual/

Palmer Dabbelt

hart: A hardware execution context, which contains all the state mandated by

the RISC-V ISA: a PC and some registers. This terminology is designed to

disambiguate software's view of execution contexts from any particular

microarchitectural implementation strategy. For example, my Intel laptop is

described as having one socket with two cores, each of which has two hyper

threads. Therefore this system has four harts.

https://git.kernel.org/pub/scm/linux/kernel/git/torvalds/linux.git/tree/Documentation/devicetree/bindings/riscv/cpus.txt#n22
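Palmer's counting rule (sockets × cores per socket × hardware threads per core) can be sketched in a few lines of Python. The `Topology` type and the flat, contiguous numbering are illustrative assumptions only. On real RISC-V systems each hart reports its ID through its `mhartid` CSR, and the privileged spec only requires IDs to be unique within the execution environment, with at least one hart having ID 0.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Topology:
    sockets: int
    cores_per_socket: int
    harts_per_core: int  # hardware threads ("hyper threads") per core

def enumerate_harts(t: Topology) -> List[Tuple[int, int, int, int]]:
    """Return (hart_id, socket, core, thread) tuples.

    Hypothetical flat numbering. Software only relies on the hart_id
    column; socket/core/thread describe the microarchitectural placement.
    """
    harts = []
    hart_id = 0
    for s in range(t.sockets):
        for c in range(t.cores_per_socket):
            for th in range(t.harts_per_core):
                harts.append((hart_id, s, c, th))
                hart_id += 1
    return harts

laptop = Topology(sockets=1, cores_per_socket=2, harts_per_core=2)  # Palmer's example
print(len(enumerate_harts(laptop)))  # 4
```

A single-hart-per-core part with five cores would likewise yield five harts, which is all a debugger such as OpenOCD needs to know.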

Karsten Merker

to the spec, but there is a glossary in the RISC-V CPU devicetree

bindings description which defines "hart" as follows:

"hart: A hardware execution context, which contains all the

state mandated by the RISC-V ISA: a PC and some registers.

This terminology is designed to disambiguate software's view of

execution contexts from any particular microarchitectural

implementation strategy. For example, my Intel laptop is

described as having one socket with two cores, each of which

has two hyper threads. Therefore this system has four harts."

https://git.kernel.org/pub/scm/linux/kernel/git/torvalds/linux.git/tree/Documentation/devicetree/bindings/riscv/cpus.txt#n22

Regards,

Karsten

--

Pursuant to § 28 (4) of the German Federal Data Protection Act (Bundesdatenschutzgesetz), I object to the use

and disclosure of my personal data for the purposes of advertising

and of market or opinion research.

Tommy Murphy

Tommy Murphy

Palmer Dabbelt

Liviu Ionescu

> On 18 Nov 2017, at 01:51, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> Who might be in a position to clearly explain the term?

hart:

A component that contains a hardware execution context, which

includes all the state mandated by the RISC-V ISA: a PC and some registers.

core:

A component that contains one or more harts that share a

single independent instruction fetch unit.
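Liviu's two definitions can be modelled as a toy in Python: each hart owns its PC, while the core has one fetch unit that serves a single hart per cycle. The round-robin policy, the `Hart`/`Core` names and the fixed 4-byte, branch-free instruction stream are assumptions for illustration; as Krste points out later in the thread, whether harts share a fetch unit is an implementation detail.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Hart:
    hart_id: int
    pc: int  # each hart keeps its own program counter

@dataclass
class Core:
    harts: List[Hart]
    next_idx: int = 0  # which hart the shared fetch unit serves next

    def fetch_cycle(self) -> int:
        """One cycle of the core's single, shared instruction fetch unit.

        Toy model: serves one hart in round-robin order (an assumed policy),
        advances only that hart's pc and returns the served hart's ID.
        """
        hart = self.harts[self.next_idx]
        hart.pc += 4  # assume a 4-byte, non-branching instruction
        self.next_idx = (self.next_idx + 1) % len(self.harts)
        return hart.hart_id

core = Core([Hart(0, 0x1000), Hart(1, 0x2000)])
served = [core.fetch_cycle() for _ in range(4)]
print(served)                           # [0, 1, 0, 1]
print([hex(h.pc) for h in core.harts])  # ['0x1008', '0x2008']
```

Real multithreaded cores often pick the next hart by readiness (for example skipping a hart stalled on a cache miss) rather than strict rotation.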

Liviu

Tommy Murphy

Liviu Ionescu

> On 18 Nov 2017, at 02:20, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> I'm not sure that this is correct.

> The SiFive U54 has 5 cores but sometimes they are referred to as harts - e.g. in the documentation and the openocd log messages.

Liviu

Palmer Dabbelt

OpenOCD refers to this as a 5-hart system because it doesn't care about cores:

cores are a microarchitectural detail that, to a first order, software doesn't

care about -- of course there's always edge cases like performance,

clock+power+reset, etc :).

The documentation will probably refer to both harts and cores, as they're two

different concepts. It just happens that on our U54 each hart corresponds to

exactly one core.

Ted Speers

--

You received this message because you are subscribed to the Google Groups "RISC-V SW Dev" group.

To unsubscribe from this group and stop receiving emails from it, send an email to sw-dev+un...@groups.riscv.org.

To post to this group, send email to sw-...@groups.riscv.org.

Visit this group at https://groups.google.com/a/groups.riscv.org/group/sw-dev/.

To view this discussion on the web visit https://groups.google.com/a/groups.riscv.org/d/msgid/sw-dev/mhng-ade2c0e7-2e4a-43d1-9029-9d6f32beb575%40palmer-si-x1c4.

Tommy Murphy

Liviu Ionescu

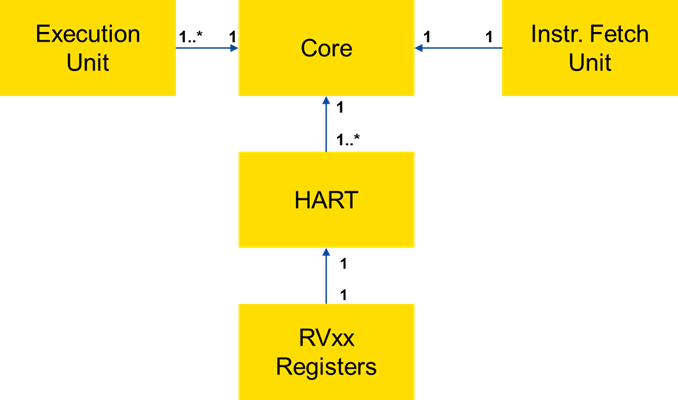

> On 18 Nov 2017, at 02:35, Ted Speers <Ted.S...@microsemi.com> wrote:

>

> My reading is that there is a 1:1 relationship between a set of RVxx* registers and a HART

A good follow-up question might be: are the execution units corresponding to multiple harts in a core really independent, or can they share some common functionality, like one FPU for two harts?

Regards,

Liviu

Liviu Ionescu

> On 18 Nov 2017, at 02:41, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> If so then in a multi-hart core it's implicit that each hart will be executing the same instructions at the same time?

regards,

Liviu

John Leidel


Tommy Murphy

atish patra

There are tons of good explanations of hyper-threading on the web.

Here are some of them.

https://superuser.com/questions/122536/what-is-hyper-threading-and-how-does-it-work

Christopher Celio


Liviu Ionescu

> On 18 Nov 2017, at 02:47, John Leidel <john....@gmail.com> wrote:

>

> +1 to Liviu's note. We've tested up to 8 harts/rocket core in GC64. It supports up to 64/core.

another way of seeing things would be:

- from a software point of view, only the harts are relevant, since each hart can execute a separate thread/process.

from this point of view, each hart has its set of registers, the required execution units, and fetches instructions from memory; at this level each hart appears to be an independent processor

- from the hardware point of view, multiple harts in a core may share some resources; the ISA manual mentions only the instruction fetch unit, common to all harts in a core.

in other words, to save some transistors, harts are not fully independent; they share some components.

this might be fully transparent to the software, but might also have subtle consequences; for example, if two software threads execute the same code, it might be better to allocate them to harts in the same core, since they might benefit from a common cache.

regards,

Liviu

Liviu Ionescu

> On 18 Nov 2017, at 02:58, atish patra <atis...@gmail.com> wrote:

>

> As I understood, the core concept is similar to hyper-threads in x86.

regards,

Liviu

Rishiyur Nikhil

regards,

Liviu


Ted Speers

In SysML, I think it looks like this … I hope everyone can see:

From: Rishiyur Nikhil [mailto:nik...@bluespec.com]

Sent: Friday, November 17, 2017 5:24 PM

To: Liviu Ionescu <i...@livius.net>

Cc: atish patra <atis...@gmail.com>; RISC-V SW Dev <sw-...@groups.riscv.org>; tommy_...@hotmail.com; pal...@sifive.com

Subject: Re: [sw-dev] Definition of "hart"


> ... it's all about the registers.

But this is true even of software threads.

Shouldn't the definition be sharper, to avoid such "virtualization" of registers?

I.e., that the registers can be "simultaneously/concurrently" active?

Nikhil

On Fri, Nov 17, 2017 at 8:05 PM, Liviu Ionescu <i...@livius.net> wrote:

> On 18 Nov 2017, at 02:58, atish patra <atis...@gmail.com> wrote:

>

> As I understood, the core concept is similar to hyper-threads in x86.

I would say that the hart concept is similar to hyper-threads in x86; core is more or less the same in both architectures.

regards,

Liviu


atish patra

Tommy Murphy

They are helpful but still point to lack of clarity.

- some say a key point is that a hart lacks independent instruction fetch, others say that's not relevant (or not necessarily the case?)

- having independent registers doesn't seem to distinguish it from a software thread with "virtual" independent registers

- having to extrapolate from x86 documentation to what hart means to RISC-V specifically isn't clear

The fact that nobody can give a clear and concise definition of the term/concept hart suggests to me that it's not widely or consistently understood. Such a definition does not appear in the specs or any third party RISC-V online or printed material that I have read so far either.

The specs really should provide such a definition in my opinion since it seems so fundamental to understanding RISC-V.

Thanks

Liviu Ionescu

> On 18 Nov 2017, at 11:39, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> The specs really should provide such a definition in my opinion since it seems so fundamental to understanding RISC-V.

Liviu

Valentin Nechayev

Sat, Nov 18, 2017 at 01:39:46, tommy_murphy wrote about "Re: [sw-dev] Definition of "hart"":

> - some say a key point is that a hart lacks independent instruction fetch, others say that's not relevant (or not necessarily the case?)

The declaration that hart is a beast without independent instruction

fetch is more than confusing - it is irrelevant and close to being wrong,

at least according to all usages of this word in current specs. It does not

matter whether instruction fetching is really independent. It could

be, for example, packed in batches for all 1000 physical processor

chips on a custom board. But, if a hart's logic decides what and when

to fetch (address to execute, instruction timings, etc.), it _is_

independent.

If someone provides dependency on x86 details, this is also how

"hyperthread" is implemented there. A hyperthread is (or, at least,

was, in the first versions) an independent instruction fetch-and-scheduling

unit that can share ALUs and other execution units with other

hyperthreads (and HT should be turned off if this contention ends up

hurting performance).

> The fact that nobody can give a clear and concise definition of the term/concept hart suggests to me that it's not widely or consistently understood. Such a definition does not appear in the specs or any third party RISC-V online or printed material that I have read so far either.

>

> The specs really should provide such a definition in my opinion since it seems so fundamental to understanding RISC-V.

>

1. Make the hart definition primary, without any reference to

"cores"/etc: a hart is something that, while running, keeps its state

(defined in terms of registers), fetches and executes instructions.

2. In the informal descriptive part, elaborate on the state-of-the-art

typical implementation designs (that is, a chip is 1-or-more uniform

cores or groups of uniform cores, a core is 1-or-more harts sharing

execution devices, etc. - and, after that, show high similarity

with x86 hyperthread).
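Valentin's point 1 defines a hart purely by what it holds and what it does. A deliberately tiny Python sketch of that definition follows: architectural state (a PC and 32 integer registers, with x0 hardwired to zero) plus a fetch-execute step. The three-instruction toy ISA is invented for illustration and only loosely mirrors RISC-V `addi`/`bne`; it is not a real encoding, the PC indexes instructions rather than bytes, and XLEN wraparound is not modelled.

```python
from typing import List, Tuple

# Hypothetical toy instruction format (NOT real RISC-V encodings):
#   ("addi", rd, rs1, imm)   x[rd] = x[rs1] + imm
#   ("bne",  rs1, rs2, off)  if x[rs1] != x[rs2]: pc += off (in instructions)
#   ("halt",)
Instr = Tuple

class ToyHart:
    def __init__(self, program: List[Instr]) -> None:
        self.pc = 0           # index into program, not a byte address
        self.x = [0] * 32     # integer registers x0..x31 (no XLEN wraparound)
        self.program = program
        self.halted = False

    def step(self) -> None:
        """Fetch the instruction at pc, execute it and update pc."""
        op = self.program[self.pc]  # fetch
        next_pc = self.pc + 1
        if op[0] == "addi":
            _, rd, rs1, imm = op
            if rd != 0:             # writes to x0 are discarded
                self.x[rd] = self.x[rs1] + imm
        elif op[0] == "bne":
            _, rs1, rs2, off = op
            if self.x[rs1] != self.x[rs2]:
                next_pc = self.pc + off
        elif op[0] == "halt":
            self.halted = True
            next_pc = self.pc
        self.pc = next_pc

# Count x1 up to 5: branch back to index 1 while x1 != x2.
prog = [
    ("addi", 2, 0, 5),  # x2 = 5
    ("addi", 1, 1, 1),  # x1 += 1
    ("bne", 1, 2, -1),  # if x1 != x2 goto index 1
    ("halt",),
]
hart = ToyHart(prog)
while not hart.halted:
    hart.step()
print(hart.x[1])  # 5
```

Nothing in the class mentions cores or fetch units, which is the point of making the hart definition primary.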

-netch-

Liviu Ionescu

> On 18 Nov 2017, at 13:48, Valentin Nechayev <ne...@netch.kiev.ua> wrote:

>

> if a hart's logic decides what and when

> to fetch (address to execute, instruction timings, etc.), it _is_

> independent.

if resources are not a problem, each core may have a single hart, and in this case the hart is really independent.

if multiple harts share a core, they are independent within the limits of resource sharing.

regards,

Liviu

kr...@berkeley.edu

In simple language, a hart is a RISC-V execution context that contains

a full set of RISC-V architectural registers and that executes its

program independently from other harts in a RISC-V system. What

constitutes a "RISC-V system" depends on the software's execution

environment but for standard user-level programs, it means the

user-visible harts and memory (i.e., a multithreaded Unix user

process). "Execute independently" means that each hart will

eventually fetch and execute its next instruction in program order

regardless of the activity of other harts (at least at user level).

How harts are mapped to physical execution resources should have no

effect on harts' functional correctness, though obviously there will

be observable differences in performance and observable differences in

legal instruction interleavings across harts (in general, a

multithreaded RISC-V program has a set of legal outcomes, not just

one).

In practice, at machine-mode level each hart is a real hardware

thread, either one hart per core without hardware multithreading, or

multiple harts per core with hardware multithreading. Whether harts

share instruction fetch units is an implementation detail, but for

legal RISC-V machines, the harts must be able to proceed independently

(e.g., some forms of Nvidia-like SIMT implementation packing harts

into SIMD warps will violate the "execute independently" clause and

cause deadlock for RISC-V programs that should terminate, so would not

be legal RISC-V implementations). Some systems can migrate harts

dynamically between cores (e.g. for big-little optimization, or fault

handling, or load-balancing), either with machine-mode code or purely

in hardware.
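Migrating a hart between cores makes sense once a hart is viewed as its architectural state rather than as a fixed piece of silicon. The Python sketch below is an illustration under simplifying assumptions: `HartState`, `PhysicalCore` and `migrate` are hypothetical names, the state is reduced to a hart ID, a PC and the integer registers, and a real migration (by machine-mode code or in hardware) would also have to handle CSRs, in-flight memory operations and pending interrupts.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class HartState:
    """Architectural state of a hart (simplified: hart ID, pc, x registers)."""
    hart_id: int
    pc: int
    x: List[int] = field(default_factory=lambda: [0] * 32)

@dataclass
class PhysicalCore:
    name: str
    slots: int  # number of hardware thread contexts in this core
    resident: Dict[int, HartState] = field(default_factory=dict)

    def has_room(self) -> bool:
        return len(self.resident) < self.slots

def migrate(hart_id: int, src: PhysicalCore, dst: PhysicalCore) -> None:
    """Move one hart's architectural state from src to dst (hypothetical helper).

    Code running on the hart sees the same hart ID, pc and registers
    afterwards; only its physical placement (and performance) changes.
    """
    if not dst.has_room():
        raise RuntimeError(f"{dst.name} has no free hardware thread context")
    state = src.resident.pop(hart_id)  # save the context on the source core
    dst.resident[hart_id] = state      # restore it on the destination core

big = PhysicalCore("big", slots=1)
little = PhysicalCore("little", slots=1)
h = HartState(hart_id=3, pc=0x8000_0000)
h.x[10] = 42
big.resident[3] = h
migrate(3, big, little)  # e.g. a big.LITTLE-style power-saving move
print(3 in big.resident, little.resident[3].x[10])  # False 42
```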

To give some history, we introduced the term hart in the context of

the Lithe user-level thread scheduling library, where we wanted to

clearly distinguish threads as a programming abstraction from threads

as a resource abstraction (pthreads confuses the two, causing havoc

when composing parallel runtime libraries):

https://people.eecs.berkeley.edu/~krste/papers/lithe-hotpar09.pdf

https://people.eecs.berkeley.edu/~krste/papers/lithe-pldi2010.pdf

https://people.eecs.berkeley.edu/~krste/papers/pan-phd-thesis.pdf

Hart is a contraction of "hardware thread" and represents the hardware

resource. It is also partly a play on "heart", representing something

that is continually "beating" (executing instructions).

It is possible, of course, to emulate harts in software, for example,

privileged execution environments can multiplex lesser-privileged

harts onto physical hardware using timer interrupts (note that

co-operative multithreading within same privilege level could not give

a compliant implementation).
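Why co-operative multiplexing cannot provide compliant harts can be shown with a small Python simulation in which hart 0 spin-waits on a flag that hart 1 will eventually set. Each generator `yield` models one executed instruction. A preemptive round-robin scheduler (standing in for timer-interrupt multiplexing) lets both harts make progress, whereas a co-operative scheduler that only switches when the running code volunteers never gets to hart 1. The scheduler functions and the `max_steps` cutoff are assumptions made so that the non-terminating case can be detected.

```python
from typing import Dict, Generator, List, Optional

Hart = Generator[Optional[str], None, None]  # each yield = one executed instruction

def make_harts(mem: Dict[str, int]) -> List[Hart]:
    def waiter() -> Hart:
        # Hart 0: spin until hart 1 sets the flag; never yields control voluntarily.
        while mem["flag"] == 0:
            yield None
        mem["seen"] = 1

    def setter() -> Hart:
        # Hart 1: execute a few instructions of work, then publish the flag.
        for _ in range(3):
            yield None
        mem["flag"] = 1
        yield None

    return [waiter(), setter()]

def run_preemptive(harts: List[Hart], quantum: int = 1, max_steps: int = 10_000) -> bool:
    """Round-robin with a fixed time slice, like timer-interrupt multiplexing."""
    live = list(harts)
    steps = 0
    while live and steps < max_steps:
        for h in list(live):
            for _ in range(quantum):
                try:
                    next(h)
                    steps += 1
                except StopIteration:
                    live.remove(h)
                    break
    return not live  # True if every hart ran to completion

def run_cooperative(harts: List[Hart], max_steps: int = 10_000) -> bool:
    """Switch harts only when the running code yields "yield" or finishes."""
    live = list(harts)
    steps = 0  # max_steps is an arbitrary cutoff to detect non-termination
    while live and steps < max_steps:
        try:
            if next(live[0]) == "yield":
                live.append(live.pop(0))  # voluntary hand-over
            steps += 1
        except StopIteration:
            live.pop(0)
    return not live

print(run_preemptive(make_harts({"flag": 0, "seen": 0})))   # True
print(run_cooperative(make_harts({"flag": 0, "seen": 0})))  # False: hart 1 never runs
```

The same reasoning applies to the SIMT example above: any scheme that lets one hart's spin loop starve another hart breaks programs that must terminate.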

Across all these implementation choices, we retain the concept of a

hart as a resource abstraction representing an independently advancing

RISC-V execution context within a RISC-V execution environment.

Krste


Ted Speers

Do you mean 'beest'?

https://en.wikipedia.org/wiki/Coke%27s_hartebeest

-----Original Message-----

From: Valentin Nechayev [mailto:ne...@netch.kiev.ua]

Sent: Saturday, November 18, 2017 3:48 AM

To: Tommy Murphy <tommy_...@hotmail.com>

Cc: RISC-V SW Dev <sw-...@groups.riscv.org>

Subject: Re: [sw-dev] Definition of "hart"


Valentin Nechayev

Sun, Nov 19, 2017 at 04:00:16, Ted.Speers wrote about "RE: [sw-dev] Definition of "hart"":

> " The declaration that hart is a beast."

>

> Do you mean 'beest'?

>

> https://en.wikipedia.org/wiki/Coke%27s_hartebeest

Well, do you think it's a good RV mascot proposition? ;)

-netch-

Liviu Ionescu

I added an issue to the isa manual repo, perhaps you or Andrew can add such a definition to the specs:

https://github.com/riscv/riscv-isa-manual/issues/114

Regards,

Liviu

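Tommy Murphy

Thanks Krste.

But some of this raises more questions than it provides answers for me.

Can the term/concept be defined in a clear, concise and jargon free manner as I asked earlier?

> In simple language

Hmmm, that's debatable... :-)

> a full set of RISC-V architectural registers

Does this mean x and fp regs only?

After all some csrs are memory mapped so presumably cannot be unique to a hart.

> it means the user-visible harts and memory (i.e., a multithreaded Unix user process).

Did you mean e.g. rather than i.e.?

Otherwise this seems to severely constrain what a hart can do.

If harts are independent then surely one could run Linux and another could run FreeRTOS for example?

> one hart per core without hardware multithreading, or multiple harts per core with hardware multithreading

Whatever about hart becoming clearer I still don't get how a hart differs from a core either at an architectural hardware level or an abstract software level?

> Some systems can migrate harts dynamically between cores

How can they do this if a hart is a hardware resource?

> each hart is a real hardware thread

...

> we retain the concept of a hart as a resource abstraction

How can it be both real hardware AND an abstraction?

Thanks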

Tommy Murphy

Hart's A Real Thread...

:-)

Liviu Ionescu

> On 19 Nov 2017, at 11:11, Tommy Murphy <tommy_...@hotmail.com> wrote:

>

> If harts are independent then surely one could run Linux and another could run FreeRTOS for example?

for example, the SiFive U54 (https://www.sifive.com/products/risc-v-core-ip/u54-mc/):

you can run an RTOS on the E51 (which has no MMU) and a Linux kernel and Linux processes on the 4 U54 cores (which are single-hart, but have an MMU), although I doubt the device was intended for fully separate usage; most probably the E51 will take over some of the Linux kernel functionality and assist it.

regards,

Liviu

kr...@berkeley.edu

>>>>> On Sun, 19 Nov 2017 01:11:00 -0800 (PST), Tommy Murphy <tommy_...@hotmail.com> said:

| Thanks Krste.

| But some of this raises more questions than it provides answers for me.

| Can the term/concept be defined in a clear, concise and jargon free manner as I asked earlier?

|| In simple language

| Hmmm, that's debatable... :-)

as any other architecture's hardware thread. If your use case does

not involve anything more than unprotected machine-mode code running

directly on a real machine, then a hart is literally a hardware

thread. The difference between RISC-V cores and harts is the same as

that between x86/POWER/ARM/MIPS/SPARC cores and hardware threads -

there is nothing new here. (If you consider "hardware thread" to be

jargon, I'd suggest a more general computer architecture tutorial

before diving into RISC-V land.)

The reason for my more complicated definitions is that since the

1960s, architects and system designers have been adding layers of

abstraction and virtualization to provide more protection and greater

efficiency. So, what seems to be a hardware construct at one level is

actually virtualized at another level, yet it is usually still most

simply described as if it were hardware, as that's the mental model

software authors have when programming at each level and, except for

performance, the behavior is close, if not identical, to that of a

direct hardware implementation.

For example, the RISC-V instruction set definitions in the user manual

describe being executed within an execution environment. The execution

environment can be provided by a bare machine (giving full access to

the physical address space), a hypervisor (implementing a recursive

hypervisor instance or implementing the SBI for each guest OS), or an

operating system (providing a user process). Code is written assuming

some execution-environment binary interface (e.g., ABI for user-level

application code or what we call SBI for operating system code) that

describes how the environment is initialized, what instructions are

allowed and what memory, architectural state, and CSRs are visible,

and also what environment calls are provided to request service from

the execution environment (e.g., I/O, or exit). Hypervisors or OSs

provide execution environments as virtual machines, each supplying a

particular abstract machine interface to the code running inside the

virtual machine, and all multiplexed by the hypervisor/OS onto the

resources the hypervisor/OS obtains from its own execution

environment. (RISC-V privileged architecture was designed to support

recursive virtualization so a hypervisor could actually be running

under another hypervisor, for example.)

Back to hart definitions - from the perspective of the software

running in a given virtual machine, a hart is something that has the

defined hart architectural state and that independently fetches and

executes RISC-V instructions within that virtual machine. Now, the

virtual machine implementation might time-multiplex a set of virtual

machine harts onto fewer harts in the underlying physical hardware (or

underlying virtual machine) but has to do so in a way such that they

operate like independent hardware threads. In particular, it must be

able to preempt threads and cannot wait indefinitely for guest

software on a hart to "yield" control of the hart. (As an aside, the

execution environment could be emulating the RISC-V harts on an x86

server a la QEMU or rv8.)

The important distinction between hardware thread and software thread

here is really that the software inside a virtual machine is not

responsible for causing progress of each of the hardware threads exposed

inside the virtual machine, that is the responsibility of the outer

execution environment - so it seems like "hardware" to the software

inside the virtual machine.

Given the above confusions, you might ask why we picked hardware

thread as a name instead of "virtual processor" or "environment

thread". These and other suggestions had their own potential causes

of confusion. For example, CPU has lost any useful meaning now that

it's often used to refer to a whole SoC. Virtual processor is

probably the best alternative, except "processor" is usually

associated with "core" rather than thread, so we'd have questions

whether a virtual processor is multithreaded and we'd need another

name for those threads. Also, it seems strange to describe the sole

thread on a real core as a virtual processor. Virtual thread can be

confused with a regular software thread context.

|| a full set of RISC-V architectural registers

| Does this mean x and fp regs only?

hart depending on the supported set of instruction set extensions and

privilege level at which it is running. So, no fp registers if there

is no floating-point, and the set of CSRs visible depends on the

extensions and privilege level. The execution-environment binary

interface defines this for each hart in the supported virtual machine.
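The point that a hart's register set depends on its extensions (no FP registers without floating point) can be illustrated with a rough Python helper that maps an ISA string to user-visible register state. This is a simplified, assumption-laden sketch: it reads only single-letter extensions, treats `g` as `imafd`, and ignores every CSR except `fcsr`; the privilege level, which also changes which CSRs are visible, is not modelled.

```python
from typing import List

def visible_user_registers(isa: str) -> List[str]:
    """Approximate user-visible register state for an ISA string such as "rv64gc".

    Simplified sketch: looks only at single-letter extensions before the first
    '_', expands 'g' to 'imafd', and ignores every CSR except fcsr.
    """
    isa = isa.lower()
    for base in ("rv128", "rv64", "rv32"):
        if isa.startswith(base):
            letters = isa[len(base):]
            break
    else:
        raise ValueError(f"unrecognised ISA string: {isa!r}")
    letters = letters.split("_")[0].replace("g", "imafd")

    regs = ["pc"]
    # The E base variant has 16 integer registers; the I base has 32.
    n_x = 16 if letters.startswith("e") else 32
    regs += [f"x{i}" for i in range(n_x)]
    # F, and D/Q which build on it, add 32 floating-point registers plus fcsr.
    if any(ext in letters for ext in "fdq"):
        regs += [f"f{i}" for i in range(32)] + ["fcsr"]
    return regs

print(len(visible_user_registers("rv64imac")))  # 33: pc + x0..x31, no FP state
print(len(visible_user_registers("rv64gc")))    # 66: adds f0..f31 and fcsr
```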

| After all some csrs are memory mapped so presumably cannot be unique to a hart.

mapped - they are accessed by CSR instructions. Some state that is

writable via memory-mapped IO can be read via CSRs (e.g., mip.MSIP

and other interrupt pending inputs). We avoid state that is writable

both via a CSR address and through memory-mapped IO.

|| it means the user-visible harts and memory (i.e., a multithreaded Unix user process).

| Did you mean e.g. rather than i.e.?

| Otherwise this seems to severely constrain what a hart can do.

| If harts are independent then surely one could run Linux and another

| could run FreeRTOS for example?

machine to each S-mode OS using PMPs to partition the physical address

space of the machine, and by providing each OS instance with a given

subset of the total machine harts. The M-mode interrupt delegation

registers can be used to direct certain device interrupts directly to

particular harts within each OS.
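The Linux-plus-RTOS arrangement described here (PMPs partition physical memory, each OS gets a subset of the harts) can be sketched as a consistency check that hypothetical M-mode boot code might run. The `Partition` layout, hart numbers and addresses are invented for illustration, and the sketch only computes the NAPOT `pmpaddr` value for each region. Since PMP registers are per hart, each hart would be programmed with the region of the OS it belongs to; choosing PMP entries, setting the `pmpcfg` permission and address-matching bits, and configuring interrupt delegation are left out.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

@dataclass(frozen=True)
class Partition:
    name: str
    harts: FrozenSet[int]  # harts handed to this OS by the M-mode code
    base: int              # physical base address of the OS's RAM region
    size: int              # power of two >= 8, base aligned to size

def napot_pmpaddr(base: int, size: int) -> int:
    """pmpaddr value for a naturally aligned power-of-two (NAPOT) PMP region."""
    if size < 8 or size & (size - 1) or base % size:
        raise ValueError("NAPOT needs a power-of-two size >= 8 and a size-aligned base")
    return (base >> 2) | ((size >> 3) - 1)

def check_disjoint(parts: List[Partition]) -> None:
    """Raise if two OS instances would share a hart or any byte of RAM."""
    for i, a in enumerate(parts):
        for b in parts[i + 1:]:
            shared = a.harts & b.harts
            if shared:
                raise ValueError(f"{a.name} and {b.name} share harts {sorted(shared)}")
            if a.base < b.base + b.size and b.base < a.base + a.size:
                raise ValueError(f"{a.name} and {b.name} overlap in memory")

# Hypothetical layout: one hart for an RTOS, four harts for Linux.
parts = [
    Partition("rtos", frozenset({0}), 0x8000_0000, 0x0020_0000),
    Partition("linux", frozenset({1, 2, 3, 4}), 0x8800_0000, 0x0800_0000),
]
check_disjoint(parts)
for p in parts:
    print(p.name, hex(napot_pmpaddr(p.base, p.size)))
# rtos 0x2003ffff
# linux 0x22ffffff
```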

|| one hart per core without hardware multithreading, or multiple harts per core with hardware multithreading

| Whatever about hart becoming clearer I still don't get how a hart

| differs from a core either at an architectural hardware level or an

| abstract software level?

versus hardware threads. A core is usually considered a purely

physical thing. A core implements one or more harts, where if there

are multiple harts, they are time-multiplexing some common hardware

components (e.g., instruction fetch, physical registers, ALUs,

predictor state, etc.). The concept of a core is usually not directly

represented at other abstraction levels (except as a performance hint

to preferentially schedule work on harts on different cores instead of

harts on the same core). You could imagine exposing the hart-core

mapping more abstractly so higher virtualization levels could avoid

scheduling work on the same core if other cores were idle, but I think

experience has been this gets too complicated/restrictive for

software. Another reason you might expose cores to higher-level code

is for security reasons, where you might not allow code from two

different security domains to run simultaneously on different harts on

the same core to prevent high-bandwidth timing attacks.
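The two reasons given here for exposing cores to software (a scheduling performance hint and a security boundary) can be combined in a hypothetical hart-selection heuristic. The function name, the dictionary inputs and the ordering of the rules are assumptions for illustration; no RISC-V specification defines such a policy.

```python
from typing import Dict, Optional, Set

def pick_hart(core_of: Dict[int, int], running: Dict[int, str], domain: str) -> Optional[int]:
    """Choose a free hart for new work belonging to security `domain`.

    Hypothetical policy (a heuristic, not part of any RISC-V spec):
      1. never share a core with work from a different security domain;
      2. prefer a free hart on a completely idle core;
      3. otherwise accept a free sibling hart whose core runs only `domain` work.
    """
    # Which security domains currently occupy each core?
    domains_by_core: Dict[int, Set[str]] = {}
    for hart, dom in running.items():
        domains_by_core.setdefault(core_of[hart], set()).add(dom)

    fallback: Optional[int] = None
    for hart in sorted(core_of):
        if hart in running:
            continue  # hart already busy
        on_core = domains_by_core.get(core_of[hart], set())
        if not on_core:
            return hart  # whole core idle: best for performance
        if on_core == {domain} and fallback is None:
            fallback = hart  # shares a core, but only with the same domain
    return fallback

# Two cores with two harts each: harts 0,1 on core 0; harts 2,3 on core 1.
core_of = {0: 0, 1: 0, 2: 1, 3: 1}
print(pick_hart(core_of, {0: "A"}, "A"))          # 2 (idle core preferred)
print(pick_hart(core_of, {0: "A", 2: "B"}, "A"))  # 1 (sibling of same-domain work)
print(pick_hart(core_of, {0: "A", 2: "B"}, "C"))  # None (would mix domains)
```

Liviu's earlier suggestion, placing threads that run the same code on the same core so they can share a cache, would amount to trying rule 3 before rule 2.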

|| Some systems can migrate harts dynamically between cores

| How can they do this if a hart is a hardware resource?

|| each hart is a real hardware thread

| ...

|| we retain the concept of a hart as a resource abstraction

| How can it be both real hardware AND an abstraction?

valid implementation, and even when virtualized it behaves like

hardware. In all cases, it's a resource within an execution

environment that has state and advances along executing a RISC-V

instruction stream independently of other software inside the same

execution environment.

While I can imagine some desire to have a term that could only be used

to describe a real piece of hardware that executes code, that would

have very limited usefulness. Even when just developing the silicon,

and running the system under simulation, you would have to use a

different name to describe your simulated hardware engine - and

engineers don't think that way. A simulation of an "X" is still

called an "X".

Krste

| Thanks


Ahmed Juba

Is there a clear, concise and authoritative definition of the term/concept "hart" anywhere? The spec doesn't seem to have one and simply uses the term without any specific explanation so it remains unclear/ambiguous. Thanks.