Re: [chromium-dev] Preliminary results in Blink Web Tests


Ben Pastene

Dec 13, 2021, 1:39:17 PM

to g...@google.com, infra-dev, Chromium-dev, Dana Jansens

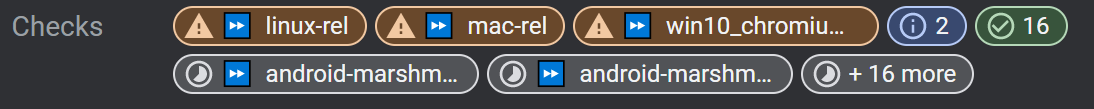

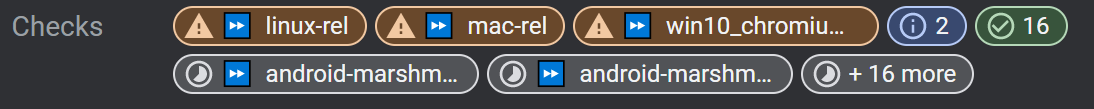

Not sure what the "Preliminary results" UI is that you're referring to. Is that the new "Checks" UI in gerrit? Or the "Test Results" tab in a build page?

A link or screenshot might help.

On Mon, Dec 13, 2021 at 9:06 AM 'Gabriel Charette' via Chromium-dev <chromi...@chromium.org> wrote:

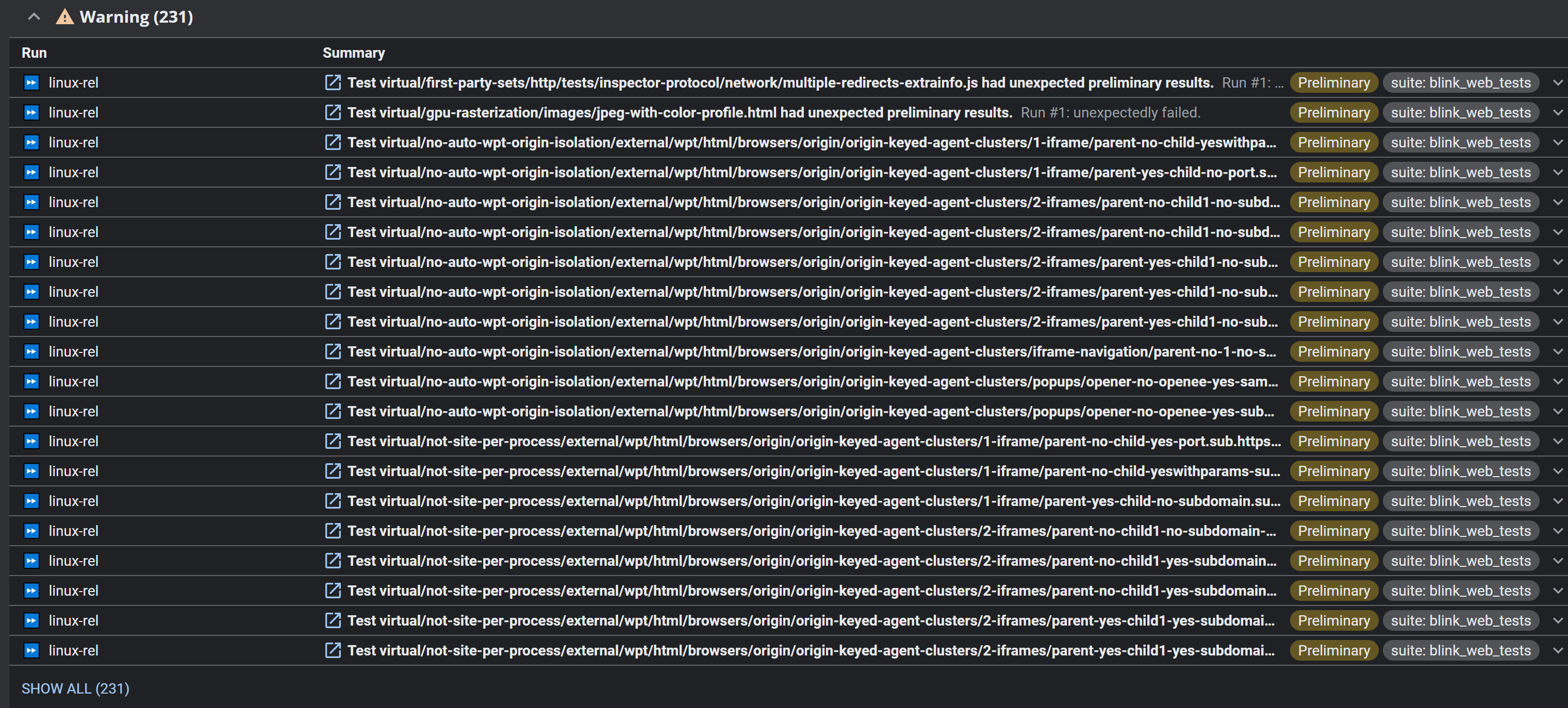

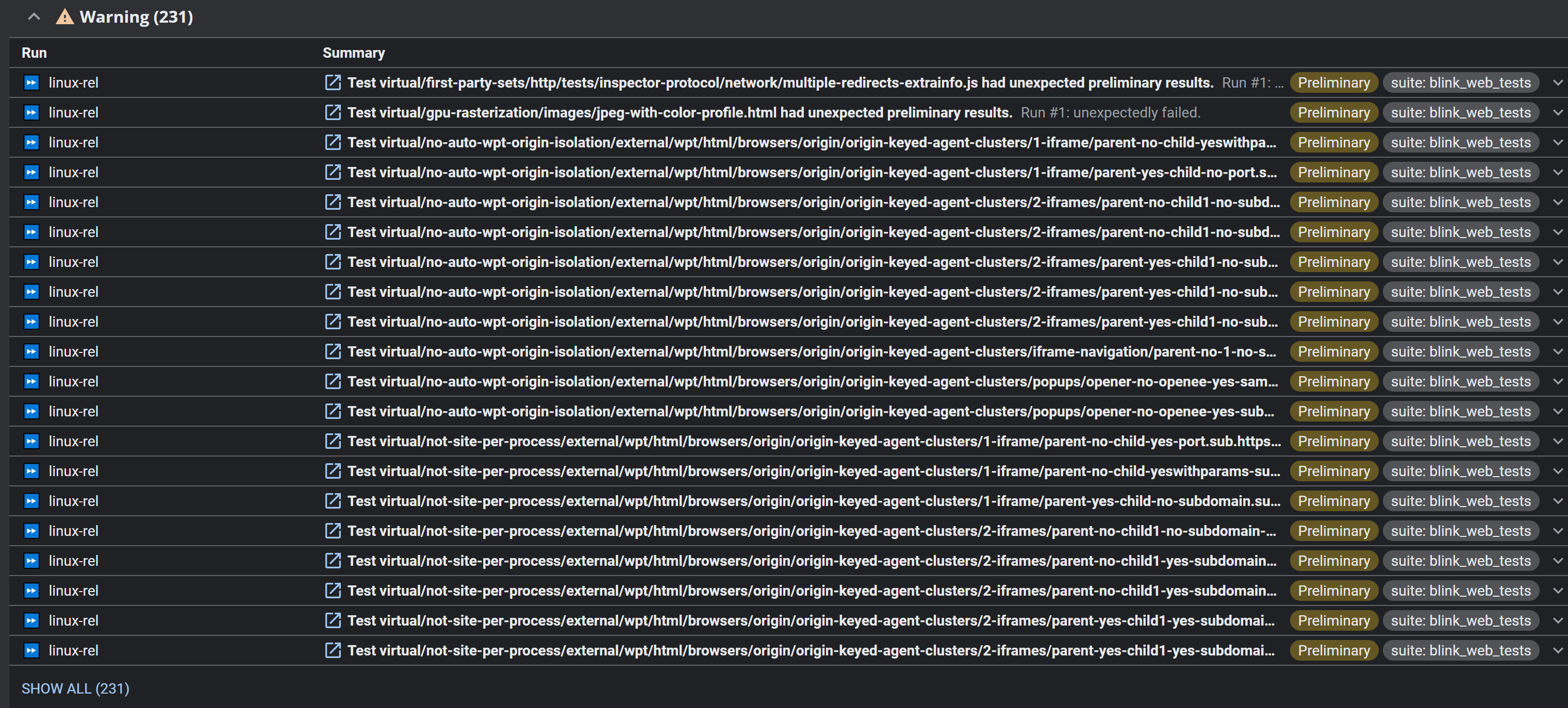

It seems that Blink Web Tests always fail in the new "Preliminary results" CQ UI and are then ignored by CQ logic. This makes the results noisy and unactionable.

Can we:

1) Make Blink Web Tests less flaky?

2) Hide known flakes in preliminary results?

--

Chromium Developers mailing list: chromi...@chromium.org

View archives, change email options, or unsubscribe:

http://groups.google.com/a/chromium.org/group/chromium-dev

---

You received this message because you are subscribed to the Google Groups "Chromium-dev" group.

To unsubscribe from this group and stop receiving emails from it, send an email to chromium-dev...@chromium.org.

To view this discussion on the web visit https://groups.google.com/a/chromium.org/d/msgid/chromium-dev/CAJTZ7LLhSg_rj3oW%3D1JzERoC%2BEz%2BkM8oy%3DUPs08iu112EsqwmQ%40mail.gmail.com.

Gabriel Charette

Dec 13, 2021, 5:16:07 PM

to Ben Pastene, infra-dev, Chromium-dev, Dana Jansens

It's transient but it's there during any CQ dry run in my experience.

Erik Staab

Dec 14, 2021, 4:01:56 PM

to Gabriel Charette, Gavin Mak, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens

Yeah, these preliminary results are presented between the first run of tests and the subsequent retries, and when lots of tests are consistently flaky on the first attempt, it ends up being pretty spammy.

Hiding known flakes could be a good workaround, since I don't think major progress on web test flakiness will come as easily.

cc +Gavin Mak for gerrit checks UI

cc +Matthew Warton for test results


Gabriel Charette

Dec 14, 2021, 8:50:28 PM

to Gavin Mak, Erik Staab, Gabriel Charette, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens

On Tue, Dec 14, 2021 at 5:04 PM Gavin Mak <gavi...@google.com> wrote:

It seems straightforward enough to mark these known-flaky variants in ResultDB with a tag that Gerrit could use to hide them from preliminary results.

Stepping back, though, how useful are preliminary results in general? If they are more noisy than helpful, we could hide them by default and show them only when "Additional Results" are shown. This is the approach we currently use for exonerated/flaky test results.

I really like the idea of Preliminary Results; I just don't like that I currently need to sift through the ones I know are always flaky to figure out whether there's an actually interesting preliminary result.

Gavin Mak

Dec 16, 2021, 12:04:35 PM

to Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens

In that case, I think it'd be best to tag flaky tests accordingly in ResultDB. Once they're tagged, it'll be simple to omit them from Gerrit. Eventually, I could see the tag being used as a predicate so that the flaky tests aren't fetched in the first place.
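A minimal Python sketch of the Gerrit-side filtering described here, for illustration only. Everything in it is hypothetical: `PreliminaryResult`, its fields, the `linux-rel` variant, and the `known_flaky` tag are stand-ins, not ResultDB's or Gerrit's actual API.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical tag name used to mark known-flaky variants.
KNOWN_FLAKY_TAG = "known_flaky"


@dataclass
class PreliminaryResult:
    """Hypothetical, simplified stand-in for a first-attempt test result."""
    test_id: str
    variant: str
    passed: bool
    tags: dict[str, str] = field(default_factory=dict)


def visible_failures(results: list[PreliminaryResult]) -> list[PreliminaryResult]:
    """Keep only failures whose variant is not tagged as a known flake."""
    return [
        r for r in results
        if not r.passed and r.tags.get(KNOWN_FLAKY_TAG) != "true"
    ]


results = [
    PreliminaryResult("foo/bar.html", "linux-rel", passed=False,
                      tags={KNOWN_FLAKY_TAG: "true"}),
    PreliminaryResult("foo/baz.html", "linux-rel", passed=False),
    PreliminaryResult("foo/qux.html", "linux-rel", passed=True),
]
print([r.test_id for r in visible_failures(results)])  # ['foo/baz.html']
```

Applying the same predicate where results are queried, rather than after they are fetched, would be the "not fetched in the first place" variant.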

Matthew, would you be the right person for actually tagging results?

Xianzhu Wang

Dec 16, 2021, 12:04:41 PM

to gavi...@google.com, Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

Another way is to add entries to TestExpectations for the flaky tests found by FindIt. I think it has the following benefits:

1. The failures won't be shown in preliminary results.

2. We don't need two ways (TestExpectations and Known-Flake-by-FindIt) to suppress failures of flaky tests. I think TestExpectations is well known to Blink developers, while Known-Flake-by-FindIt is less known. (Also, will FindIt be deprecated?)

3. The stale test expectation removal tool (+bsheedy@) will work for all previously-known flaky tests that are no longer flaky.


Dirk Pranke

Dec 16, 2021, 12:15:54 PM

to wangx...@chromium.org, gavi...@google.com, Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

+1 to this suggestion. Please don't duplicate what TestExpectations does.

-- Dirk


Gavin Mak

Dec 16, 2021, 5:24:04 PM

to Dirk Pranke, wangx...@chromium.org, Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

I'm not familiar with TestExpectations. What, if anything, would need to be done on the Gerrit side?

Xianzhu Wang

Dec 16, 2021, 7:00:41 PM

to Gavin Mak, Dirk Pranke, Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

TestExpectations is Blink's way to suppress web test failures, permanently or temporarily.

If FindIt can add found flaky tests into TestExpectations like:

    # Added by FindIt
    crbug.com/bug-number foo/bar.html [ Pass Failure ]

then Gerrit probably doesn't need to do anything. FindIt needs to do more work though.

The drawback is that the above change would need to be committed (probably through the commit queue) to take effect, whereas FindIt suppressions currently take effect immediately. But this is just how sheriffs suppress failing web tests, which seems to work well.
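To make the `[ Pass Failure ]` semantics concrete, here is a minimal Python sketch, assuming only the single-line form quoted above: a test listed with several results counts as expected when its actual result is any of them, and an unlisted test is expected to pass. The helpers `load_expectations` and `is_expected` are hypothetical; real TestExpectations files also support platform tags and other syntax, and this is not blinkpy's parser.

```python
from __future__ import annotations

import re

# Matches only the simple form "<bug> <test path> [ <Result> ... ]".
_LINE = re.compile(r"^(?P<bug>\S+)\s+(?P<test>\S+)\s+\[\s*(?P<results>[^\]]+?)\s*\]$")


def load_expectations(text: str) -> dict[str, set[str]]:
    """Map test path -> set of acceptable results (hypothetical helper)."""
    expectations: dict[str, set[str]] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comments carry no expectation
        m = _LINE.match(line)
        if m:
            expectations[m.group("test")] = set(m.group("results").split())
    return expectations


def is_expected(expectations: dict[str, set[str]], test: str, actual: str) -> bool:
    # Tests without an entry are expected to pass.
    return actual in expectations.get(test, {"Pass"})


exps = load_expectations("""
# Added by FindIt
crbug.com/bug-number foo/bar.html [ Pass Failure ]
""")
print(is_expected(exps, "foo/bar.html", "Failure"))    # True: flaky failure tolerated
print(is_expected(exps, "foo/other.html", "Failure"))  # False: unlisted test must pass
```

Because the suppression lives in a checked-in file, it only takes effect once the change lands, which is the drawback described above.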

Gabriel Charette

Dec 17, 2021, 10:43:38 AM

to wangx...@chromium.org, Gavin Mak, Dirk Pranke, Gabriel Charette, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

Do we need to commit expectations? It'd seem better to me if Infra were able to file bugs for flaky tests, ignore them on CQ, but keep running them (auto-closing the bugs for the ones that stop flaking).

I have a more general concern with explicitly disabling flaky tests, however. I've been struggling with the inability to conscientiously avoid introducing new flakes into the codebase. When making core changes that affect all tests, sometimes even in an attempt to address existing flakes, it's really hard to know that you're not introducing new flakes. It's impossible to tell from a dry run (CQ explicitly hides flakes from you, and there's no way to request otherwise), so the "best" way is to wait for pinpoint/sheriffs; 2-3 days of silence is usually a good sign...

Whenever such a CL gets reverted, I'm always left to wonder how many other tests were disabled because of the systemic flake I inadvertently introduced... Some sheriffs do tag me/bug but there's no guarantee. Other disabled tests are gone forever...

If instead Infra had a database of known flakes, it could:

1) Ignore known flakes in CQ

2) Close bugs for flakes that vanish (like ClusterFuzz)

3) Have a "flakiness Dry Run" on CQ where it could spot new flakes before landing :)!
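The three points above could be sketched as a small state machine over a known-flakes store. This is a minimal Python illustration under loud assumptions: `FlakeDB`, the `close_after` threshold of consecutive clean runs, and the `"file_bug"`/`"close_bug"` actions are all hypothetical, not an existing Infra service or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FlakeDB:
    """Hypothetical known-flakes store: test id -> consecutive clean runs."""
    clean_runs: dict[str, int] = field(default_factory=dict)
    close_after: int = 100  # assumed threshold, not a real Infra setting

    def is_known_flake(self, test: str) -> bool:
        return test in self.clean_runs

    def record_run(self, test: str, flaked: bool) -> str | None:
        """Update the store after a run; return a bug action, if any."""
        if flaked:
            is_new = test not in self.clean_runs
            self.clean_runs[test] = 0  # reset the clean-run streak
            return "file_bug" if is_new else None
        if test in self.clean_runs:
            self.clean_runs[test] += 1
            if self.clean_runs[test] >= self.close_after:
                del self.clean_runs[test]  # 2) flake vanished: close its bug
                return "close_bug"
        return None

    def unknown(self, tests: set[str]) -> set[str]:
        """Drop known flakes: 1) CQ failures to act on, 3) new flakes in a dry run."""
        return {t for t in tests if not self.is_known_flake(t)}


db = FlakeDB(close_after=2)
print(db.record_run("a.html", flaked=True))   # file_bug
print(db.unknown({"a.html", "b.html"}))       # {'b.html'}
print(db.record_run("a.html", flaked=False))  # None
print(db.record_run("a.html", flaked=False))  # close_bug
```

Keeping known flakes running (rather than disabling them) is what lets the store notice when a flake vanishes, and lets a dry run report flakes that are new rather than pre-existing.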

Also, re. TestExpectations: this issue is not specific to Blink Web Tests. They are the #1 case, but there are other instances.


- Gab


K. Moon

Dec 17, 2021, 11:05:03 AM

to Gabriel Charette, wangx...@chromium.org, Gavin Mak, Dirk Pranke, Erik Staab, Matthew Warton, Ben Pastene, infra-dev, Chromium-dev, Dana Jansens, Brian Sheedy

+1; I have the uncomfortable feeling that disabling tests due to flakiness is just hiding problems introduced elsewhere in the code at least part of the time. Really wish there was better automation around this. And as a sheriff who doesn't routinely work with Web tests, committing changes to TestExpectations works, but is sorta a pain the first time you do it. And then the next time, when I've forgotten how to do it. :-)
