Wrong data shown on Chrome UX Report - Core Web Vitals (Google Search Console)

ProSec GmbH

Aug 2, 2021, 5:54:02 AM

to Chrome UX Report (Discussions)

Hello,

We have been working on Core Web Vitals for our website, and these are our current results:

Mobile device:

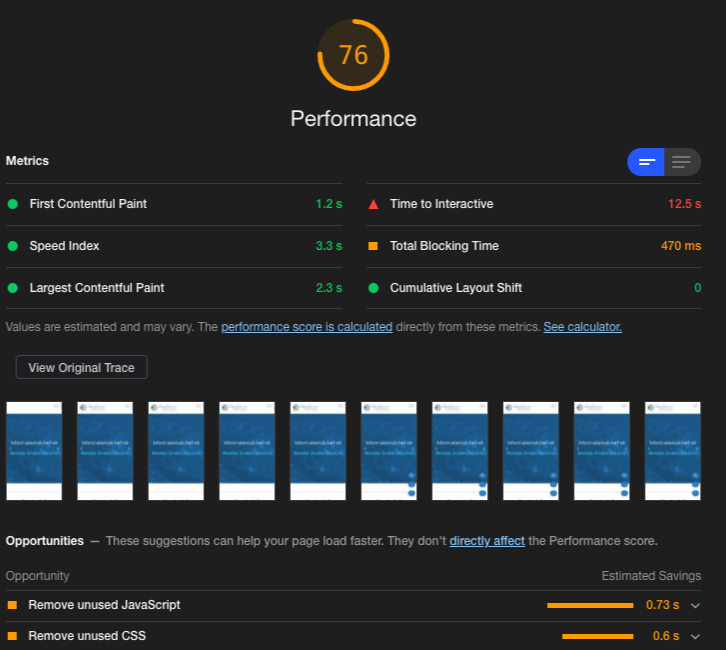

Lighthouse – LCP – Green (2.0 to 2.4 s) for all our pages.

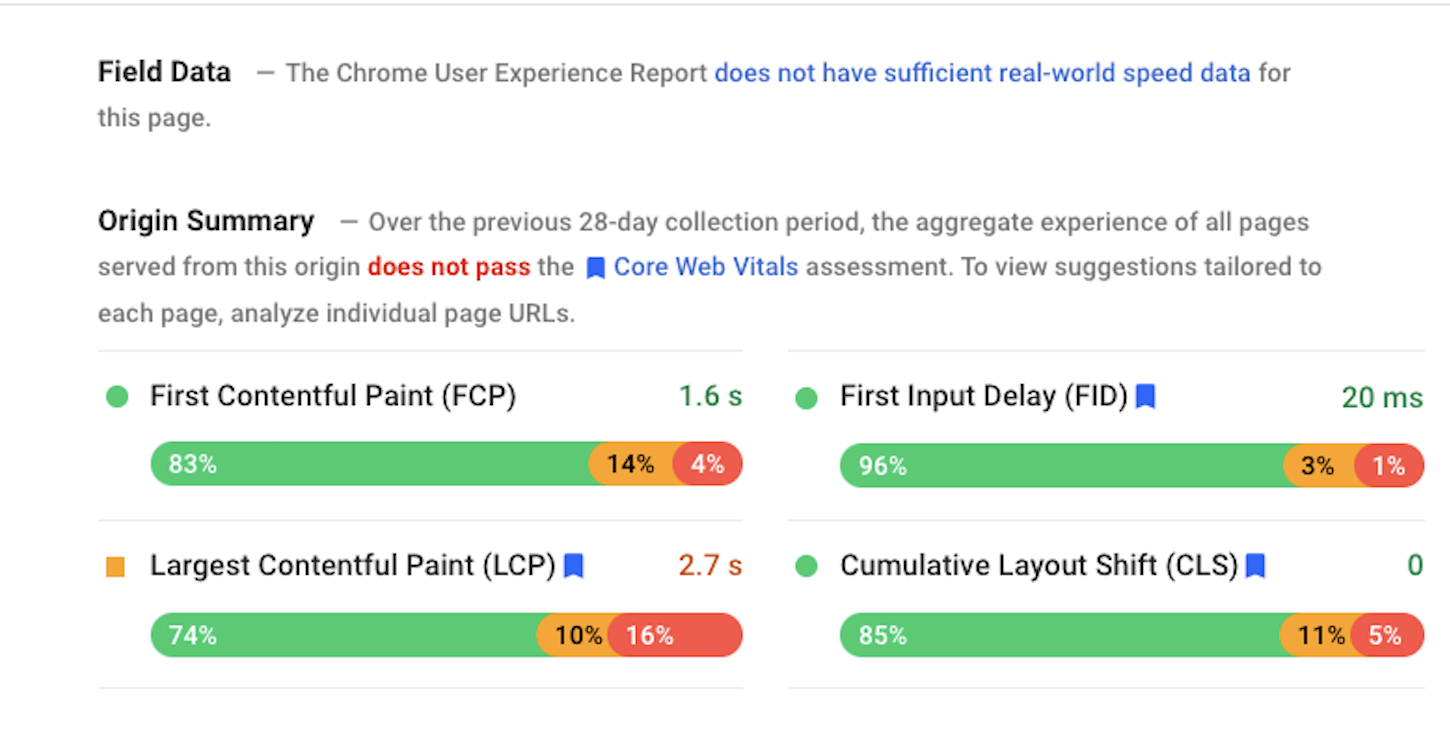

PageSpeed Insights – LCP (field data) – Orange (2.7 s) for all our pages.

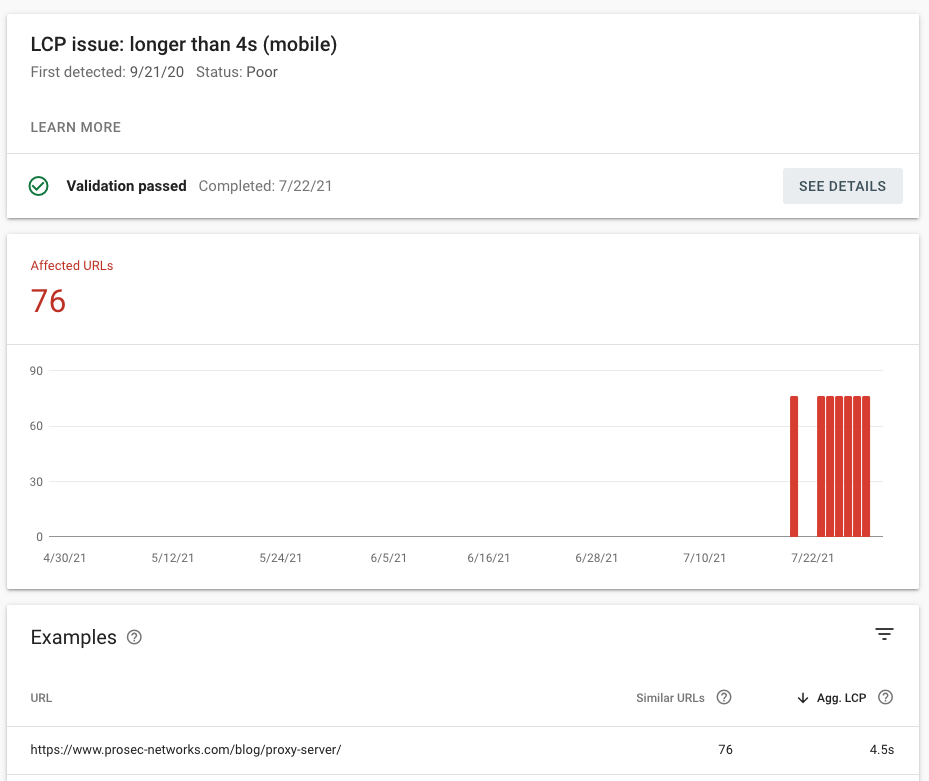

But in Google Search Console, under Core Web Vitals, all our URLs are shown with an average LCP of 4.7 s and are therefore classed as poor URLs. There is also no option to validate a fix.

Can you please help me with this? All the screenshots are attached to this email for your reference.

Regards,

Vishnu Menon

Rick Viscomi

Aug 2, 2021, 5:09:50 PM

to Chrome UX Report (Discussions), ProSec GmbH

Hi Vishnu,

There's an explanation for how all of these seemingly conflicting data points can all be true at the same time, but I can see why this experience can be confusing and frustrating. We're trying to do a better job of explaining the scope of each tool, so this type of feedback is very helpful. I'll try to explain what's happening in each tool and hopefully that will clarify things for you.

Starting with Search Console, this tool aggregates real-user experiences into groups of pages. Based on your screenshot, it's saying that there are 76 URLs that are similar to /blog/proxy-server/ (probably other blog posts) and the aggregate LCP score for this group is 4.5 seconds. Some blog posts may have faster experiences, some may have slower experiences, but the assessment for the entire group of pages is 4.5s.
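
To make the group-level aggregation concrete, here is a minimal Python sketch. It assumes the group score is the 75th percentile of all LCP samples pooled across the pages in the group; how Search Console actually forms and scores its groups is not spelled out in this thread. The thresholds are the published LCP boundaries (good up to 2.5 s, poor above 4.0 s). The page paths and sample values are invented for illustration.

```python
import math

GOOD_MAX_S = 2.5   # LCP at or below this is "good"
POOR_MIN_S = 4.0   # LCP above this is "poor"

def p75(samples):
    """75th percentile using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]

def classify_lcp(seconds):
    """Map an LCP value in seconds to its Core Web Vitals bucket."""
    if seconds <= GOOD_MAX_S:
        return "good"
    if seconds <= POOR_MIN_S:
        return "needs improvement"
    return "poor"

def group_lcp(pages):
    """Assumed aggregation: pool every sample in the group, then take p75."""
    pooled = [s for samples in pages.values() for s in samples]
    return p75(pooled)

# Hypothetical LCP samples (seconds) for three pages in one group.
group = {
    "/blog/a/": [1.8, 2.0, 2.1, 2.3],
    "/blog/b/": [2.2, 2.4, 2.6, 3.0],
    "/blog/c/": [4.4, 4.6, 4.8, 5.2],
}

for path, samples in group.items():
    print(path, classify_lcp(p75(samples)))
print("group", group_lcp(group), classify_lcp(group_lcp(group)))
```

In this made-up data one slow page is enough to push the whole group into the poor bucket, even though the other two pages are good or close to it on their own.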

PageSpeed Insights assesses the real-user experiences of both individual pages and the website as a whole. The 2.7 second LCP in your screenshot is the aggregation of all LCP experiences for the origin (website) as a whole. The line above, "The Chrome User Experience Report does not have sufficient real-world speed data for this page." is saying that there's not enough data to make a page-level assessment of the UX. Even though we don't have data on real-user experiences for this particular page, we can see a distribution of how users experienced all pages, beyond the group of 76 blog posts.

So already we can see that these tools are measuring different things. Search Console is aggregating at the group-level and PageSpeed Insights is reporting page-level data when available and falling back to origin-level data when it exists. (Some websites have so little traffic that they may not even have origin-level data). Unfortunately, it's hard to corroborate what Search Console is saying when PageSpeed Insights is lacking group-level data. This is a known limitation and the team is exploring a fix.
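
The page-then-origin fallback can be reproduced against the Chrome UX Report API. The sketch below is not how PageSpeed Insights is implemented; it only imitates the behavior described above. It assumes you have a CrUX API key, and the helper names (`query_crux`, `lcp_with_fallback`) are made up for this example. The API answers with HTTP 404 when it has no data for the requested URL or origin.

```python
import json
import urllib.error
import urllib.request

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"

def query_crux(body, api_key):
    """POST one query to the CrUX API; return parsed JSON, or None on 404 (no data)."""
    req = urllib.request.Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    try:
        with urllib.request.urlopen(req) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return None
        raise

def lcp_with_fallback(page_url, origin, query):
    """Return (scope, p75 LCP in ms), trying the page first and then the origin.

    `query` is any callable that takes a request body and returns the parsed
    response or None, which keeps the fallback logic testable offline.
    """
    for scope, key, value in (("page", "url", page_url), ("origin", "origin", origin)):
        body = {key: value, "formFactor": "PHONE", "metrics": ["largest_contentful_paint"]}
        data = query(body)
        if data is not None:
            p75 = data["record"]["metrics"]["largest_contentful_paint"]["percentiles"]["p75"]
            return scope, int(p75)
    return None, None
```

A real call would look like `lcp_with_fallback(url, origin, lambda body: query_crux(body, API_KEY))`, where `API_KEY` is your own key. A result of `("origin", 2700)` would correspond to the situation in the screenshot: no page-level data, and an origin-level LCP of 2.7 s.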

The other tool you looked at is Lighthouse. This tool simulates a user experience by loading a page and measuring the performance. This simulation is limited in how realistically it accesses the page (e.g. network speed) and how it behaves on the page (e.g. clicking, scrolling), so the performance data it collects may or may not be representative of real user experiences, but it tries to get as close as possible. This is considered a synthetic or lab test because it's not a real user experience; it can only simulate one. You can imagine that if two tests are configured identically except one has a slower network speed, that one will have worse LCP performance. So the LCP values like 2.3 seconds are not necessarily saying that real users are having fast experiences, just that the simulations were fast based on the particular network speeds (and other variables) used by the tests. Given that, the primary benefit of lab testing is the advice provided by the audits; these are actionable things you can do to improve performance.

To recap: Search Console and PageSpeed Insights are tools that report on real-user experiences, but they may be reporting at different granularities. Lighthouse (which is also reported in PageSpeed Insights in the "Lab Data" section) reports on synthetic/simulated experiences.
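
Since PageSpeed Insights returns field and lab data in the same response, the recap can be illustrated by pulling the three LCP numbers out of one PageSpeed Insights API (v5) result. This is a rough sketch: the field names (`loadingExperience`, `originLoadingExperience`, `origin_fallback`, `lighthouseResult`) reflect my understanding of the v5 response and should be checked against the current API reference. The `sample` response is hand-written with values similar to those in this thread, not real output.

```python
def summarize_psi(response):
    """Pull field (page and origin) and lab LCP, in ms, out of a PSI v5 response dict."""
    def field_lcp(section):
        metrics = response.get(section, {}).get("metrics", {})
        metric = metrics.get("LARGEST_CONTENTFUL_PAINT_MS")
        return metric["percentile"] if metric else None

    page = response.get("loadingExperience", {})
    audits = response.get("lighthouseResult", {}).get("audits", {})
    lab = audits.get("largest-contentful-paint", {})
    return {
        # When page-level data is missing, the page section is flagged as an
        # origin fallback, so its numbers are not page-level numbers.
        "field_page_ms": None if page.get("origin_fallback") else field_lcp("loadingExperience"),
        "field_origin_ms": field_lcp("originLoadingExperience"),
        "lab_ms": lab.get("numericValue"),
    }

# Hand-written, hypothetical response fragment (not real API output).
_origin_lcp = {"LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 2700, "category": "AVERAGE"}}
sample = {
    "loadingExperience": {"origin_fallback": True, "metrics": _origin_lcp},
    "originLoadingExperience": {"metrics": _origin_lcp},
    "lighthouseResult": {"audits": {"largest-contentful-paint": {"numericValue": 2300.0}}},
}

print(summarize_psi(sample))
```

Seeing the three values side by side makes it easier to keep track of which number is real-user data and which one comes from a simulated run.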

The distinctions between lab and field data can be subtle, not to mention the different levels of aggregation of field data, so this is definitely something the tooling/documentation teams will be working to improve.

As for how to actually improve the LCP situation reported by Search Console, I would recommend looking at the Lighthouse audits for actionable optimization opportunities. Based on PageSpeed Insights testing, the backend server response time looks like it may be one culprit. You should also look into running your own Lighthouse testing with a slower network speed to better reflect the slower LCP times real users are experiencing. If you run Lighthouse from Chrome DevTools, you could turn on network throttling with a slower config. That may help bring other optimization opportunities to the surface. https://webpagetest.org/webvitals could also be a useful tool for diagnosing Web Vitals issues and the advanced testing configurations can give you more granular control to synthetically access/behave more like real users.
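
If you would rather script the slower-network test than use DevTools, the Lighthouse CLI accepts custom throttling settings. The sketch below builds such a command from Python and reads LCP from the JSON report. It assumes the `lighthouse` CLI is installed (for example via npm) and that Chrome is available. The default values of 300 ms RTT and 700 Kbps are arbitrary examples of a connection slower than Lighthouse's defaults, not recommended settings, and the function names are invented for this example.

```python
import json
import subprocess

def lighthouse_command(url, output_path, rtt_ms=300, throughput_kbps=700, cpu_slowdown=4):
    """Build a Lighthouse CLI invocation with custom simulated throttling."""
    return [
        "lighthouse", url,
        "--only-categories=performance",
        "--output=json",
        f"--output-path={output_path}",
        "--throttling-method=simulate",
        f"--throttling.rttMs={rtt_ms}",
        f"--throttling.throughputKbps={throughput_kbps}",
        f"--throttling.cpuSlowdownMultiplier={cpu_slowdown}",
        "--quiet",
    ]

def lab_lcp_seconds(report):
    """Read LCP (reported in milliseconds) from a parsed Lighthouse JSON report."""
    return report["audits"]["largest-contentful-paint"]["numericValue"] / 1000

def run_lighthouse(url, output_path="report.json", **throttling):
    """Run Lighthouse with the given throttling and return lab LCP in seconds."""
    subprocess.run(lighthouse_command(url, output_path, **throttling), check=True)
    with open(output_path, encoding="utf-8") as fh:
        return lab_lcp_seconds(json.load(fh))
```

Running `run_lighthouse(url)` and then `run_lighthouse(url, rtt_ms=150, throughput_kbps=1600)` on the same page shows how much of the lab LCP depends on the assumed connection. Pick throttling values that bring the lab number close to what field data reports before acting on the audits.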

Hope that helps!

Rick

ProSec GmbH

Aug 12, 2021, 7:30:15 AM

to Chrome UX Report (Discussions), rvis...@google.com

Hello Rick,

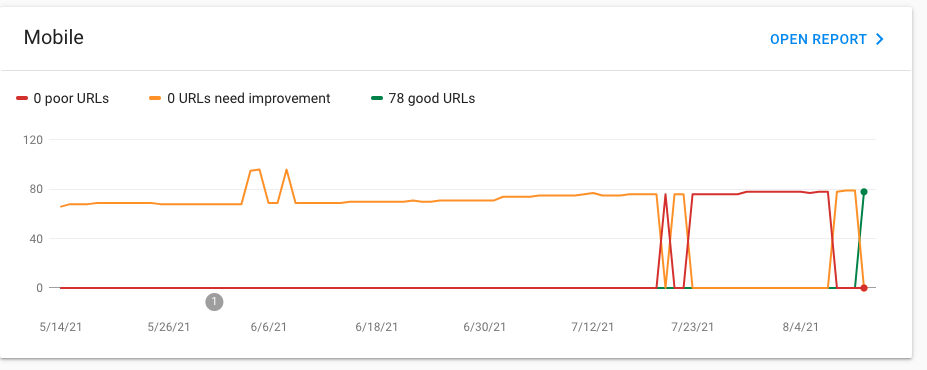

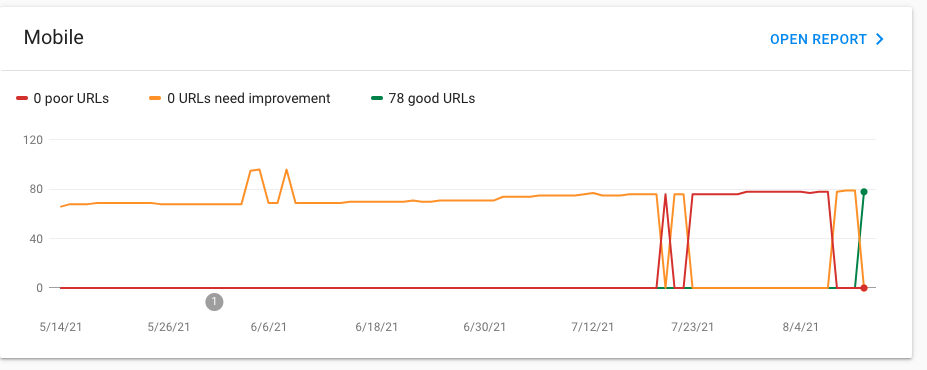

Thanks for the detailed explanation. I followed your suggestions, and the good news is that all of our URLs are now rated Good in Core Web Vitals.

I have one more question on this topic. Our website has around 200 URLs, but Core Web Vitals now shows only 78 Good URLs and none in the "needs improvement" or "poor" categories. Why does the report cover only some of our URLs?

Thanks in advance.

Vishnu Menon

Rick Viscomi

Aug 12, 2021, 7:26:56 PM

to Chrome UX Report (Discussions), ProSec GmbH, Rick Viscomi

Glad to hear you were able to get everything into the Good category, nice work!

For questions specific to Search Console like missing URLs, I'd recommend asking on the Search Central community forum.

Rick

Nikhil Sharma

Aug 24, 2021, 1:37:49 PM

to Rick Viscomi, Chrome UX Report (Discussions)

@Rick Viscomi your reply to this issue is amazing, and it clears up all my doubts. Keep coding!
